Can I Run Llama2 7B on Apple M2 Pro? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and applications appearing constantly. One of the most exciting developments in this space is the availability of local LLMs, which run on your own device without relying on cloud services. Running locally enables offline use, removes per-request API costs, and improves privacy, since your prompts never leave your machine.

But a key question arises: can your device handle the computational demands of these models? In this deep dive, we'll explore how the Llama2 7B model performs on the Apple M2 Pro at different quantization levels. We'll analyze prompt-processing and token-generation speed benchmarks and offer practical recommendations for different use cases.

Performance Analysis: Token Generation Speed Benchmarks

Understanding Token Generation Speed

Imagine a language model as a word-generating machine. It works in tokens: chunks of text that are often whole words but can also be word pieces or punctuation marks. Token generation speed measures how many new tokens the model produces per second, which directly determines how quickly and smoothly a response appears on screen.

Apple M2 Pro: A Powerful Challenger

The Apple M2 Pro combines a 10- or 12-core CPU and a 16- or 19-core GPU with up to 32 GB of unified memory, shared by CPU and GPU at 200 GB/s of memory bandwidth. But how does it fare with the demanding task of running LLMs? Below, we examine token generation speed benchmarks for three precision levels of the Llama2 7B model.

Quantization: A Space-Saving Technique

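Quantization is like a diet for large language models. It shrinks the model by storing its weights with fewer bits per number, usually at only a modest cost in accuracy. F16 keeps every weight as a 16-bit floating-point number and serves as the unquantized baseline here; Q8_0 and Q4_0 store weights as 8-bit and 4-bit integers, grouped into small blocks that each share a scale factor. Smaller weights take less space on disk and in memory, and they can also make generation considerably faster.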
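To illustrate the idea, here is a minimal sketch of block-wise 4-bit quantization in plain Python. It borrows one idea from llama.cpp's Q4_0 format, groups of 32 weights that share a single scale factor, but it is a simplified symmetric scheme rather than ggml's exact algorithm, and the normally distributed weights are synthetic test data.

```python
import random

BLOCK_SIZE = 32  # ggml's Q4_0 also groups weights into blocks of 32


def quantize_block(block):
    """Symmetric 4-bit quantization: one float scale plus small ints per block."""
    amax = max(abs(x) for x in block)
    scale = amax / 7 if amax > 0 else 1.0  # map the largest magnitude to +/-7
    # Round to the nearest step; the clamp keeps values inside the signed 4-bit range.
    q = [max(-8, min(7, round(x / scale))) for x in block]
    return scale, q


def dequantize_block(scale, q):
    """Reconstruct approximate weights from one quantized block."""
    return [scale * v for v in q]


def quantize(weights):
    """Split weights into blocks of BLOCK_SIZE and quantize each independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks):
    out = []
    for scale, q in blocks:
        out.extend(dequantize_block(scale, q))
    return out


if __name__ == "__main__":
    random.seed(0)
    # Synthetic, normally distributed weights (an assumption for the demo).
    weights = [random.gauss(0.0, 0.02) for _ in range(4096)]
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"largest reconstruction error: {max_err:.5f}")
```

Within each block the error is at most half a quantization step (scale / 2). In ggml's actual Q4_0 layout, each block stores 32 four-bit values plus one 16-bit scale, which works out to 4.5 bits per weight; the Python floats here are only for readability.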

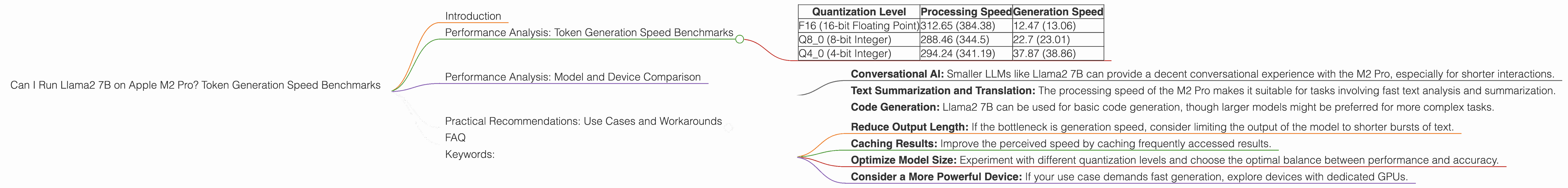

Table 1: Llama2 7B Throughput on Apple M2 Pro (tokens per second)

| Quantization Level | Prompt Processing (tokens/s) | Token Generation (tokens/s) |
|---|---|---|
| F16 (16-bit floating point, unquantized) | 312.65 (384.38) | 12.47 (13.06) |
| Q8_0 (8-bit integer) | 288.46 (344.50) | 22.70 (23.01) |
| Q4_0 (4-bit integer) | 294.24 (341.19) | 37.87 (38.86) |

Notes:
- Numbers outside parentheses are for the M2 Pro with a 16-core GPU; numbers in parentheses are for the faster 19-core GPU configuration.
- Data is sourced from public llama.cpp benchmarks by ggerganov and XiongjieDai.

Analysis: A Mixed Bag of Results

As the table shows, the Apple M2 Pro processes prompts quickly in every format: between about 288 and 313 tokens per second on the 16-core GPU (341 to 384 on the 19-core GPU). Generation speed, the rate at which the model actually produces output text, is far lower: 12.47 tokens per second at F16, 22.7 at Q8_0, and 37.87 at Q4_0. Quantization barely changes processing speed (it even drops slightly) but roughly triples generation speed from F16 to Q4_0.

Why the Gap?

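The gap comes from how the two phases use the hardware. During prompt processing, the model handles the prompt's tokens in parallel batches, so the GPU's compute units stay busy and the cost of reading the weights is shared across many tokens. During generation, tokens are produced one at a time, and each new token requires reading essentially all of the model's weights from memory. Generation is therefore limited mainly by memory bandwidth, which is why shrinking the weights through quantization speeds up generation so much while leaving processing speed almost unchanged.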
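One way to see why generation is slow is a back-of-envelope estimate: if producing each token requires reading every weight from memory once, memory bandwidth divided by model size caps tokens per second. The sketch below assumes about 6.74 billion parameters for Llama2 7B, nominal storage costs of 16, 8.5 and 4.5 bits per weight for F16, Q8_0 and Q4_0 (the integer formats include a 16-bit scale per 32-weight block), and Apple's quoted 200 GB/s memory bandwidth for the M2 Pro. It ignores the KV cache, activations, and the fact that real model files may store some tensors at a different precision.

```python
# Assumptions (see text): parameter count, nominal bits per weight, M2 Pro bandwidth.
PARAMS_LLAMA2_7B = 6.74e9
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
M2_PRO_BANDWIDTH_GB_S = 200.0

# Measured generation speeds from Table 1 (16-core GPU), for comparison.
MEASURED_TG = {"F16": 12.47, "Q8_0": 22.70, "Q4_0": 37.87}


def weight_bytes(params, fmt):
    """Approximate size of the weights in bytes for a given storage format."""
    return params * BITS_PER_WEIGHT[fmt] / 8


def generation_ceiling(params, fmt, bandwidth_gb_s=M2_PRO_BANDWIDTH_GB_S):
    """Tokens/s if each token must stream every weight from memory exactly once."""
    return bandwidth_gb_s * 1e9 / weight_bytes(params, fmt)


if __name__ == "__main__":
    for fmt, measured in MEASURED_TG.items():
        size_gb = weight_bytes(PARAMS_LLAMA2_7B, fmt) / 1e9
        ceiling = generation_ceiling(PARAMS_LLAMA2_7B, fmt)
        print(f"{fmt:5s} {size_gb:5.2f} GB  ceiling {ceiling:5.1f} tok/s  "
              f"measured {measured:5.2f} tok/s ({measured / ceiling:.0%} of ceiling)")
```

The ceilings come out at roughly 15, 28 and 53 tokens per second. The measured speeds reach about 70 to 85 percent of them and rise as the weights shrink, which is consistent with generation being limited by memory bandwidth rather than raw compute.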

Practical Implications:

- Workloads dominated by reading input, such as classifying, filtering, or extracting information from large volumes of text with short outputs, benefit from the M2 Pro's fast prompt processing.

- For applications that generate long outputs, such as creative content or long-form articles, generation speed is the bottleneck. At F16 the M2 Pro might not be the ideal choice; a quantized model such as Q4_0 generates about three times faster.
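To see how the two speeds combine for a single request, the sketch below estimates wall-clock time as prompt tokens divided by processing speed plus output tokens divided by generation speed, using the 16-core figures from Table 1. The 1,000-token prompt and 300-token answer are arbitrary example sizes, and the estimate assumes constant throughput, whereas in practice speed tends to fall as the context grows.

```python
# Llama2 7B on the M2 Pro (16-core GPU), tokens/s, from Table 1:
# format -> (prompt processing speed, token generation speed)
SPEEDS = {
    "F16": (312.65, 12.47),
    "Q8_0": (288.46, 22.70),
    "Q4_0": (294.24, 37.87),
}


def response_seconds(prompt_tokens, output_tokens, fmt, speeds=SPEEDS):
    """Estimated wall-clock time, assuming constant throughput in both phases."""
    pp, tg = speeds[fmt]
    return prompt_tokens / pp + output_tokens / tg


if __name__ == "__main__":
    for fmt in SPEEDS:
        total = response_seconds(prompt_tokens=1000, output_tokens=300, fmt=fmt)
        gen_share = (300 / SPEEDS[fmt][1]) / total
        print(f"{fmt:5s} {total:5.1f} s total, {gen_share:.0%} spent generating")
```

For these example sizes the estimate is roughly 27 seconds at F16, 17 at Q8_0 and 11 at Q4_0, and in every case most of the time is spent generating, which is why generation speed dominates for long outputs.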

Performance Analysis: Model and Device Comparison

LLM Model Size Matters

The size of the LLM model is a crucial factor influencing performance. Smaller models, like Llama2 7B, generally demand less computational power and can run on a wider range of devices, including those with less powerful GPUs.

Comparing the Llama2 7B with Other Models

While we're focusing on Llama2 7B, larger models like Llama2 70B place far heavier demands on the M2 Pro. Generation speed falls roughly in proportion to the size of the weights, and the 70B model's weights alone take about 39 GB even at 4-bit quantization, more than the 32 GB maximum memory available on M2 Pro machines.
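Whether a model runs at all depends first on whether its weights fit in memory. The sketch below checks this for three Llama2 sizes on the 16 GB and 32 GB memory configurations of the M2 Pro. The parameter counts are rounded approximations, the bits-per-weight values are the nominal ones for llama.cpp's formats, and the 8 GB of headroom reserved for macOS, other apps, and the KV cache is a hypothetical figure you should adjust.

```python
# Approximate parameter counts (assumption; rounded).
MODELS = {"Llama2 7B": 6.74e9, "Llama2 13B": 13.0e9, "Llama2 70B": 69.0e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
HEADROOM_GB = 8.0  # hypothetical reserve for macOS, apps, and the KV cache


def weights_gb(params, fmt):
    """Approximate weight size in gigabytes (10**9 bytes)."""
    return params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits(params, fmt, memory_gb, headroom_gb=HEADROOM_GB):
    """True if the weights fit in unified memory after reserving headroom."""
    return weights_gb(params, fmt) <= memory_gb - headroom_gb


if __name__ == "__main__":
    for memory_gb in (16, 32):  # the two memory options offered with the M2 Pro
        for name, params in MODELS.items():
            ok = [fmt for fmt in BITS_PER_WEIGHT if fits(params, fmt, memory_gb)]
            print(f"{memory_gb} GB  {name:10s}  fits as: {', '.join(ok) or 'none'}")
```

Under these assumptions, a 32 GB M2 Pro holds Llama2 7B in any of the three formats and 13B at Q8_0 or Q4_0, while the 70B model does not fit in either configuration.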

Device Power is Key

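The M2 Pro is a capable chip, but it is not a match for a high-end desktop GPU: an NVIDIA RTX 4090, for example, offers about 1 TB/s of memory bandwidth, roughly five times the M2 Pro's 200 GB/s. Devices with powerful dedicated GPUs can therefore generate tokens significantly faster, provided the model fits in the GPU's own memory (VRAM), which on laptops is often smaller than the M2 Pro's unified memory.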

A Trade-off Between Performance and Convenience

Choosing a device and model combination hinges on your needs. If convenience and portability are paramount, M2 Pro-powered laptops might be a good choice for running smaller LLMs. However, if raw power and speed are the top priorities, devices with powerful GPUs may be better suited.

Practical Recommendations: Use Cases and Workarounds

Optimize for Your Application

The key is to understand your specific use case and choose the right combination of model, quantization level, and device.

Use Cases for Apple M2 Pro and Llama2 7B

- Conversational AI: Smaller LLMs like Llama2 7B can provide a decent conversational experience with the M2 Pro, especially for shorter interactions.

- Text Summarization and Translation: Summarization reads long inputs and produces short outputs, so it benefits from the M2 Pro's fast prompt processing. Translation produces about as much text as it reads, so it depends more on generation speed.

- Code Generation: Llama2 7B can be used for basic code generation, though larger models might be preferred for more complex tasks.

Workarounds for Lower Generation Speed

- Reduce Output Length: If generation speed is the bottleneck, cap the number of tokens the model produces per response or ask for concise answers.

- Caching Results: Improve perceived speed by storing responses to frequently repeated prompts and reusing them instead of regenerating.

- Optimize Model Size: Experiment with different quantization levels to find the best balance between speed and accuracy; in the benchmarks above, Q4_0 generated text about three times faster than F16.

- Consider a More Powerful Device: If your use case demands fast generation, explore devices with dedicated GPUs.
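The caching workaround can be as simple as memoizing responses by prompt. In this sketch, `slow_generate` and `cached_generate` are hypothetical names: `slow_generate` is a stand-in that sleeps to imitate model latency, and you would replace it with a call to whatever local runtime you use. Python's standard `functools.lru_cache` keeps up to 256 recent prompt/response pairs.

```python
import time
from functools import lru_cache


def slow_generate(prompt: str) -> str:
    """Hypothetical stand-in for a call to a local model; swap in your own client."""
    time.sleep(0.2)  # simulate generation time
    return f"response to: {prompt}"


@lru_cache(maxsize=256)
def cached_generate(prompt: str) -> str:
    # Repeated prompts are answered from the in-memory cache without rerunning the model.
    return slow_generate(prompt)


if __name__ == "__main__":
    for attempt in (1, 2):
        start = time.perf_counter()
        cached_generate("Summarize our refund policy.")
        print(f"call {attempt}: {time.perf_counter() - start:.3f} s")
    print(cached_generate.cache_info())
```

Exact-match caching only pays off when the same prompts recur, and it only makes sense where reusing an earlier response is acceptable (for example, with deterministic decoding at temperature 0). A real cache would also key on generation settings such as temperature and maximum output length.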

FAQ

Q: What is quantization, and why is it important for LLMs?

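A: Quantization is a technique that reduces the size of a model by using fewer bits to represent its weights. This makes the model smaller on disk, faster to load, and less demanding on memory; on hardware where generation is limited by memory bandwidth, such as the M2 Pro, it also speeds up token generation.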

Q: How does the M2 Pro compare to other devices for running LLMs?

A: The M2 Pro provides respectable performance for smaller LLMs like Llama2 7B, but for larger models or applications demanding high generation speed, devices with dedicated GPUs might be a better choice.

Q: What other factors should I consider when choosing a device for LLMs?

A: Factors like power consumption, battery life (for laptops), and available memory are all important considerations when selecting a device for running LLMs.

Keywords:

Llama2 7B, Apple M2 Pro, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, LLM, Local, Performance, Benchmarks, GPU, Device, Use Cases, Workarounds, Conversational AI, Text Summarization, Translation, Code Generation, Processing, Generation, Speed, AI