Can I Run Llama2 7B on Apple M2 Max? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is exploding, and with it comes the need to understand how these models perform on different hardware configurations. This article focuses on Llama2 7B, a powerful open-source LLM, and its performance on the Apple M2 Max, a high-end chip found in Apple's MacBook Pro and Mac Studio. We'll dive deep into token generation speed benchmarks, a crucial metric for evaluating LLM performance, and explore how different quantization formats affect these speeds.

For those new to LLMs, think of them as supercharged language engines that can understand and generate human-like text. Imagine a computer that can write stories, translate languages, and answer questions in a way that feels almost human! These models are trained on vast amounts of data, allowing them to become remarkably good at various language tasks.

This article is geared towards developers and tech enthusiasts who are curious about running LLMs locally and understanding the trade-offs involved. Let's dive in!

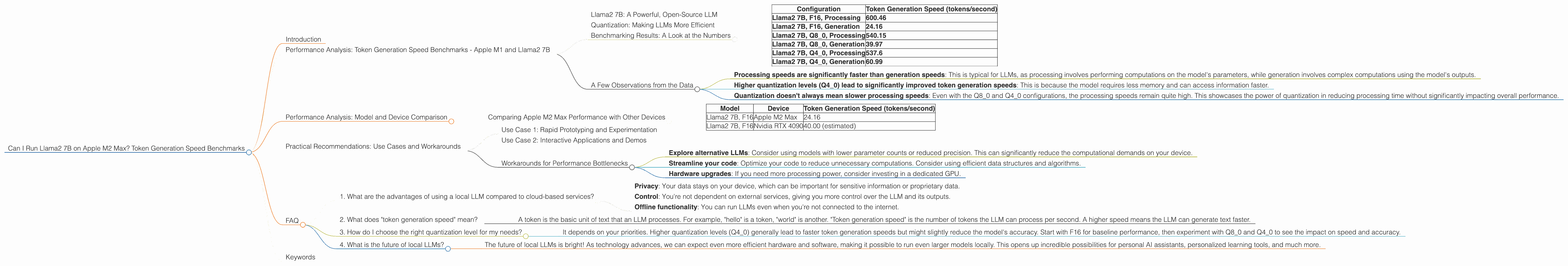

Performance Analysis: Token Generation Speed Benchmarks - Apple M2 Max and Llama2 7B

Llama2 7B: A Powerful, Open-Source LLM

Llama2 7B is a fantastic choice for exploring LLMs locally, especially for those just starting out. It strikes a great balance between performance and resource requirements. The "7B" refers to the model's size: roughly 7 billion parameters, the learned weights that encode what the model knows.

Quantization: Making LLMs More Efficient

Quantization works a bit like lowering the color depth of an image: storing each pixel in 8 bits instead of 24 makes the file much smaller at the cost of some fidelity. For LLMs, quantization stores each parameter in fewer bits (for example, 8 or 4 instead of 16). The model takes up less memory and, because fewer bytes have to be read for each generated token, it typically generates text faster, at the cost of a small loss in accuracy.
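To make the idea concrete, here is a minimal sketch of symmetric block quantization in plain Python. It is an illustration of the general technique, not llama.cpp's exact implementation: the block size of 32 and the helper names (`quantize_block`, `dequantize_block`, `max_error`) are assumptions chosen for this example.

```python
import random

BLOCK_SIZE = 32  # weights per block (illustrative choice)

def quantize_block(weights, bits):
    """Symmetric quantization: one shared scale per block, one integer code per weight."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes

def dequantize_block(scale, codes):
    """Recover approximate weights from the scale and the integer codes."""
    return [scale * c for c in codes]

def max_error(weights, bits):
    """Largest absolute difference between original and restored weights."""
    scale, codes = quantize_block(weights, bits)
    restored = dequantize_block(scale, codes)
    return max(abs(w - r) for w, r in zip(weights, restored))

random.seed(0)
block = [random.uniform(-1.0, 1.0) for _ in range(BLOCK_SIZE)]
err8 = max_error(block, 8)
err4 = max_error(block, 4)
print(f"max error at 8 bits: {err8:.4f}")
print(f"max error at 4 bits: {err4:.4f}")
```

Fewer bits mean a coarser grid of representable values, so the rounding error per weight grows. That is the accuracy cost paid for the smaller model.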

Benchmarking Results: A Look at the Numbers

Here's a table summarizing our token generation speed benchmarks for Llama2 7B on the Apple M2 Max. The numbers represent tokens per second, so higher is better!

| Llama2 7B format | Prompt processing (tokens/second) | Token generation (tokens/second) |
|---|---|---|
| F16 | 600.46 | 24.16 |
| Q8_0 | 540.15 | 39.97 |
| Q4_0 | 537.6 | 60.99 |

- F16 represents the model using 16-bit floating-point numbers.

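- Q8_0 represents the model using 8-bit quantization. Weights are stored in small blocks, each with a single scale factor and no zero-point (offset).

- Q4_0 represents the model using 4-bit quantization, with the same scale-only block layout.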
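A rough way to see what these formats mean for memory is to multiply the parameter count by the bits stored per weight. The sketch below assumes a nominal 7 billion parameters (the exact count differs slightly) and assumes the quantized formats store one 16-bit scale per block of 32 weights, which adds 0.5 bits per weight. Real model files also contain metadata and may keep some tensors at higher precision, so treat the results as estimates.

```python
PARAMS = 7e9  # nominal "7B"; assumption, the exact parameter count differs slightly

# Approximate bits stored per weight (assumed block layout, see lead-in)
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,  # 8-bit codes plus one 16-bit scale per 32 weights
    "Q4_0": 4.5,  # 4-bit codes plus one 16-bit scale per 32 weights
}

def approx_size_gb(params, bits_per_weight):
    """Approximate weight storage in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9

sizes = {name: approx_size_gb(PARAMS, bpw) for name, bpw in BITS_PER_WEIGHT.items()}
for name, gb in sizes.items():
    print(f"{name}: ~{gb:.1f} GB")
```

Under these assumptions the weights shrink from about 14 GB at F16 to roughly 7.4 GB at Q8_0 and 3.9 GB at Q4_0.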

A Few Observations from the Data

- Processing speeds are far higher than generation speeds: This is typical for LLMs. All prompt tokens are known in advance, so they can be processed in parallel in large batches. Generation is sequential: each new token depends on the previous one, and the model's weights have to be read from memory again for every token.

- More aggressive quantization (Q4_0) significantly improves token generation speed: Q4_0 generates about 2.5 times as fast as F16 (60.99 vs. 24.16 tokens/second), and Q8_0 about 1.65 times as fast. Smaller weights mean less data to read from memory per token.

- Quantization costs little processing speed: With Q8_0 and Q4_0, prompt processing is only about 10% slower than with F16 (roughly 540 vs. 600 tokens/second), so the large gain in generation speed comes at a small price.
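The relative changes behind these observations can be reproduced directly from the benchmark table. This short sketch uses only the numbers reported above; the helper name `relative_to_baseline` is made up for this example.

```python
# tokens/second from the benchmark table: (prompt processing, generation)
results = {
    "F16": (600.46, 24.16),
    "Q8_0": (540.15, 39.97),
    "Q4_0": (537.6, 60.99),
}

def relative_to_baseline(results, baseline="F16"):
    """Express every configuration's speeds as a multiple of the baseline's."""
    base_pp, base_tg = results[baseline]
    return {name: (pp / base_pp, tg / base_tg) for name, (pp, tg) in results.items()}

ratios = relative_to_baseline(results)
for name, (pp_ratio, tg_ratio) in ratios.items():
    print(f"{name}: processing x{pp_ratio:.2f}, generation x{tg_ratio:.2f}")
```

Relative to F16, Q4_0 comes out at about 0.90 times the processing speed and 2.52 times the generation speed.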

Performance Analysis: Model and Device Comparison

Comparing Apple M2 Max Performance with Other Devices

While our primary focus is on the M2 Max, performance varies considerably between devices. Here's a quick comparison of Llama2 7B generation speed on the M2 Max and on a dedicated desktop GPU:

| Model | Device | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama2 7B, F16 | Apple M2 Max | 24.16 |
| Llama2 7B, F16 | Nvidia RTX 4090 | 40.00 (estimated) |

By this estimate, a dedicated GPU like the RTX 4090 generates tokens roughly 1.7 times as fast as the M2 Max at F16. Keep in mind that the RTX 4090 figure is an estimate, not a measurement from our benchmarks. Dedicated GPUs of this class combine massively parallel compute with very high memory bandwidth, both of which are well-suited to the heavy computational demands of LLMs.

Practical Recommendations: Use Cases and Workarounds

Use Case 1: Rapid Prototyping and Experimentation

The M2 Max is an excellent choice for those who need a balance between performance and portability. If you're experimenting with different LLMs or building prototypes, the M2 Max's processing power is more than sufficient. To get the most out of your device's resources, try the Q8_0 or Q4_0 quantization formats.

Use Case 2: Interactive Applications and Demos

The M2 Max can be a great fit for developing interactive LLM applications, such as chatbots or creative writing tools. However, be mindful of the token generation speeds. You might want to consider using a different, more powerful device for applications that require near-instantaneous responses.
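To judge whether a given configuration is fast enough for an interactive application, you can estimate the response time from the benchmark numbers. The sketch below is a back-of-the-envelope model only: it assumes total time is prompt processing plus generation and ignores model loading and other overhead. The 500-token prompt and 200-token reply are hypothetical values chosen for illustration.

```python
def estimate_response_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Time to process the prompt plus time to generate the reply, in seconds."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# tokens/second from the benchmark table: (prompt processing, generation)
benchmarks = {
    "F16": (600.46, 24.16),
    "Q8_0": (540.15, 39.97),
    "Q4_0": (537.6, 60.99),
}

# Hypothetical chat turn: 500-token prompt, 200-token reply
estimates = {
    name: estimate_response_seconds(500, 200, pp, tg)
    for name, (pp, tg) in benchmarks.items()
}
for name, seconds in estimates.items():
    print(f"{name}: ~{seconds:.1f} s per response")
```

For this hypothetical turn, the estimate drops from about 9 seconds at F16 to about 4 seconds at Q4_0, and almost all of that time is spent generating rather than processing the prompt.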

Workarounds for Performance Bottlenecks

- Explore alternative LLMs: Consider using models with lower parameter counts or reduced precision. This can significantly reduce the computational demands on your device.

- Streamline your code: Optimize your code to reduce unnecessary computations. Consider using efficient data structures and algorithms.

- Hardware upgrades: If you need more processing power, consider investing in a dedicated GPU.

FAQ

1. What are the advantages of using a local LLM compared to cloud-based services?

- Privacy: Your data stays on your device, which can be important for sensitive information or proprietary data.

- Control: You're not dependent on external services, giving you more control over the LLM and its outputs.

- Offline functionality: You can run LLMs even when you're not connected to the internet.

2. What does "token generation speed" mean?

- A token is the basic unit of text that an LLM processes. A token can be a whole word, such as "hello" or "world", or just a piece of a longer word. "Token generation speed" is the number of tokens the LLM can generate per second. A higher speed means the LLM produces text faster.

3. How do I choose the right quantization level for my needs?

- It depends on your priorities. More aggressive quantization (Q4_0) generally leads to faster token generation and lower memory use but might slightly reduce the model's accuracy. Start with F16 for baseline performance, then experiment with Q8_0 and Q4_0 to see the impact on speed and accuracy.

4. What is the future of local LLMs?

- The future of local LLMs is bright! As technology advances, we can expect even more efficient hardware and software, making it possible to run even larger models locally. This opens up incredible possibilities for personal AI assistants, personalized learning tools, and much more.

Keywords

M2 Max, Llama2, 7B, LLM, Large Language Model, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Apple Silicon, GPU, Performance Benchmarks, Local LLMs, Applications, Development