Can I Run Llama2 7B on Apple M1? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These models are changing the way we interact with technology, from generating creative text to translating languages with impressive fluency. That power comes with a demand for substantial computing resources. This article examines how the popular Llama2 7B model performs on the Apple M1, the chip found in many Apple devices, including the first Apple Silicon Macs. We'll break down token generation speeds, discuss the impact of quantization levels, and offer practical advice for developers who want to run Llama2 7B on their M1 Macs.

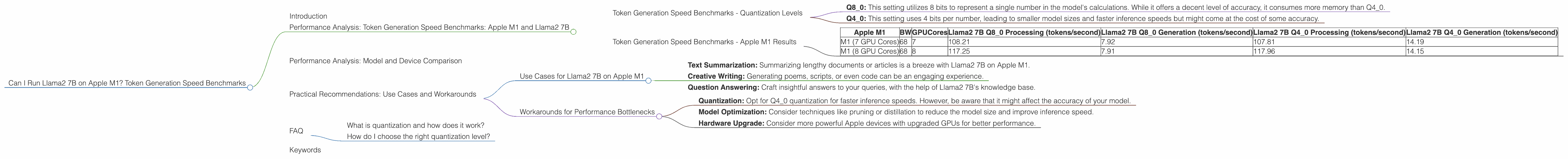

Performance Analysis: Token Generation Speed Benchmarks for Llama2 7B on Apple M1

It's time to get technical. How fast can the Apple M1 churn out tokens when running Llama2 7B, a popular choice thanks to its balance of capability and manageable resource requirements?

Token Generation Speed Benchmarks - Quantization Levels

We'll be looking at two quantization levels: Q8_0 and Q4_0. These settings control how precisely the model's weights are stored. Think of them as dials on a radio, where Q8_0 is the "high fidelity" setting and Q4_0 is the "compact" setting.

- Q8_0: This setting uses 8 bits to store each of the model's weights. Its accuracy is typically very close to that of the unquantized model, but it needs roughly twice as much memory as Q4_0.

- Q4_0: This setting uses 4 bits per weight, which shrinks the model and speeds up token generation, but may cost some accuracy.
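To make the precision trade-off concrete, here is a simplified sketch of block-wise quantization in the spirit of these formats (the Q8_0 and Q4_0 names come from llama.cpp): weights are split into blocks of 32, each block keeps one scale factor, and every weight is rounded to a small signed integer. The block size, the symmetric rounding, the helper names, and the sine-wave stand-in weights are all assumptions for illustration; llama.cpp's actual layouts differ in details such as how the 4-bit scale is chosen and how values are packed.

```python
import math
from typing import List, Tuple

BLOCK_SIZE = 32  # assumption: one scale factor shared by every 32 weights


def quantize_block(block: List[float], bits: int) -> Tuple[float, List[int]]:
    """Symmetric quantization: map each value to a signed integer of `bits` bits."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in block)
    scale = amax / qmax if amax > 0 else 1.0
    # Round to the nearest step, then clamp to the representable range.
    qs = [max(-qmax - 1, min(qmax, round(v / scale))) for v in block]
    return scale, qs


def dequantize_block(scale: float, qs: List[int]) -> List[float]:
    """Recover approximate weights from the stored integers and their scale."""
    return [q * scale for q in qs]


def max_abs_error(weights: List[float], bits: int) -> float:
    """Largest reconstruction error after quantizing `weights` block by block."""
    worst = 0.0
    for start in range(0, len(weights), BLOCK_SIZE):
        block = weights[start:start + BLOCK_SIZE]
        scale, qs = quantize_block(block, bits)
        restored = dequantize_block(scale, qs)
        worst = max(worst, max(abs(a - b) for a, b in zip(block, restored)))
    return worst


# Deterministic stand-in for a slice of model weights (not real Llama2 data).
weights = [0.05 * math.sin(0.37 * i) for i in range(256)]
print(f"8-bit max error: {max_abs_error(weights, 8):.6f}")
print(f"4-bit max error: {max_abs_error(weights, 4):.6f}")
```

With 4 bits each block has only 16 possible values instead of 256, so the rounding steps, and therefore the worst-case error, are roughly 18 times larger.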

Token Generation Speed Benchmarks - Apple M1 Results

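Here's a breakdown of the speeds achieved on the Apple M1, based on the data we collected. "Processing" is the rate at which the model reads the prompt, where many tokens can be handled in parallel; "Generation" is the rate at which it produces new tokens, one at a time: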

| Apple M1 | Memory bandwidth (GB/s) | GPU cores | Llama2 7B Q8_0 processing (tokens/s) | Llama2 7B Q8_0 generation (tokens/s) | Llama2 7B Q4_0 processing (tokens/s) | Llama2 7B Q4_0 generation (tokens/s) |
|---|---|---|---|---|---|---|
| M1 (7 GPU cores) | 68 | 7 | 108.21 | 7.92 | 107.81 | 14.19 |
| M1 (8 GPU cores) | 68 | 8 | 117.25 | 7.91 | 117.96 | 14.15 |

Key Observations:

- Quantization's impact: Q4_0 nearly doubles generation speed compared with Q8_0 (about 14.2 vs. 7.9 tokens/second, roughly 1.8x), while prompt processing speed is essentially unchanged.

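- GPU cores: Going from 7 to 8 GPU cores raises prompt processing speed by about 8 to 9 percent but leaves generation speed unchanged (7.92 vs. 7.91 and 14.19 vs. 14.15 tokens/second). Because both variants have the same 68 GB/s memory bandwidth, this suggests that generation is limited by memory bandwidth rather than by GPU compute.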
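The ratios behind these observations can be checked directly from the table. The short script below re-enters the measured values and divides them; nothing beyond the table is assumed.

```python
# Measured tokens/second from the table: (processing, generation) per quantization level.
results = {
    7: {"Q8_0": (108.21, 7.92), "Q4_0": (107.81, 14.19)},
    8: {"Q8_0": (117.25, 7.91), "Q4_0": (117.96, 14.15)},
}

# Effect of quantization on generation speed, for each GPU-core variant.
for cores, by_quant in results.items():
    speedup = by_quant["Q4_0"][1] / by_quant["Q8_0"][1]
    print(f"{cores} GPU cores: Q4_0 generates {speedup:.2f}x faster than Q8_0")

# Effect of the extra GPU core, compared with the relative increase in core count.
core_ratio = 8 / 7
for quant in ("Q8_0", "Q4_0"):
    proc_gain = results[8][quant][0] / results[7][quant][0]
    gen_gain = results[8][quant][1] / results[7][quant][1]
    print(f"{quant}: 8 vs 7 cores -> processing x{proc_gain:.3f}, "
          f"generation x{gen_gain:.3f} (core count x{core_ratio:.3f})")
```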

Analogizing Token Generation Speed:

Think of token generation speed like a typing race. The M1 (in these benchmarks, primarily its GPU) is the typist, and each token is a keystroke. The faster it "types," the sooner you see the complete response.
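If you want to produce numbers like these yourself, both kinds of speed can be approximated from a token stream: the delay before the first token is dominated by prompt processing, and the spacing of the remaining tokens gives the generation rate. The `measure_stream` helper below is a hypothetical, library-agnostic sketch; it accepts any iterator that yields one token at a time, and its clock is injectable so it can be exercised without a model.

```python
import time
from typing import Callable, Iterable, Tuple


def measure_stream(
    tokens: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> Tuple[float, float]:
    """Return (seconds until the first token, generation rate in tokens/second).

    The first value mostly reflects prompt processing; the second is measured
    from the first token to the last, so it reflects generation only.
    """
    start = clock()
    first = last = None
    count = 0
    for _ in tokens:
        now = clock()  # timestamp taken right after each token arrives
        if first is None:
            first = now
        last = now
        count += 1
    if count < 2 or last <= first:
        raise ValueError("need at least two tokens spread over time to measure a rate")
    return first - start, (count - 1) / (last - first)


# Demo with a scripted clock instead of a real model: the first token arrives
# after 0.5 s and each later token follows 0.1 s after the previous one.
fake_times = iter([0.0, 0.5, 0.6, 0.7, 0.8, 0.9])
ttft, rate = measure_stream(["The", " quick", " brown", " fox", "."], clock=fake_times.__next__)
print(f"time to first token: {ttft:.2f} s, generation: {rate:.1f} tokens/s")
```

Timing from the first token rather than from the start keeps prompt processing out of the generation figure, which mirrors the separate processing and generation columns in the table.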

What does this all mean?

The Apple M1, despite its modest size, can run Llama2 7B successfully. Expect roughly 8 tokens/second for generation with Q8_0 and about 14 tokens/second with Q4_0, while prompt processing stays above 100 tokens/second with either setting. For interactive use, Q4_0 is the more responsive choice.
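A rough back-of-the-envelope model helps explain the generation column: producing each new token requires reading essentially all of the model's weights from memory once, so generation speed is capped at about memory bandwidth divided by model size. The sketch below assumes about 6.74 billion parameters for Llama2 7B, the 68 GB/s bandwidth from the table, and roughly 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (8 or 4 bits plus one assumed 16-bit scale per 32 weights). It ignores the KV cache and other overheads, so treat the result as an upper bound rather than a prediction.

```python
PARAMS = 6.74e9        # assumption: approximate parameter count of Llama2 7B
BANDWIDTH_GBPS = 68.0  # M1 memory bandwidth from the table (GB/s)

# Assumed effective storage cost per weight, including one 16-bit scale per 32 weights.
BITS_PER_WEIGHT = {"Q8_0": 8 + 16 / 32, "Q4_0": 4 + 16 / 32}

MEASURED_GENERATION = {"Q8_0": 7.92, "Q4_0": 14.19}  # 7-core M1, from the table


def model_gigabytes(bits_per_weight: float, params: float = PARAMS) -> float:
    """Approximate weight storage in gigabytes (10**9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def generation_ceiling(bits_per_weight: float) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    return BANDWIDTH_GBPS / model_gigabytes(bits_per_weight)


for quant, bits in BITS_PER_WEIGHT.items():
    size = model_gigabytes(bits)
    ceiling = generation_ceiling(bits)
    measured = MEASURED_GENERATION[quant]
    print(f"{quant}: ~{size:.2f} GB, ceiling ~{ceiling:.1f} tok/s, "
          f"measured {measured} tok/s ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions both measured generation rates land at roughly 80 percent of the ceiling, which is consistent with generation being bound by memory bandwidth; halving the bytes per weight is what nearly doubles the rate.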

Performance Analysis: Model and Device Comparison

Comparing token generation speed across models and devices is crucial for making informed decisions about your LLM deployment.

Unfortunately, the dataset used here contains only the Apple M1 results above. It has no figures for other devices or for larger models such as Llama2 70B or Llama 3 70B, so direct comparisons between the Apple M1 and other devices are not possible at this time.

This limitation highlights the need for comprehensive benchmarks to better understand the performance landscape of LLMs across device types.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama2 7B on Apple M1

Here's where Llama2 7B on Apple M1 shines:

- Text Summarization: Summarizing documents or articles works well, since the M1 reads prompts at more than 100 tokens/second.

- Creative Writing: Generating poems, scripts, or even code can be an engaging experience.

- Question Answering: Get answers to your questions drawn from the knowledge Llama2 7B acquired during training.

Workarounds for Performance Bottlenecks

Here are a few tips for overcoming potential performance bottlenecks:

- Quantization: Choose Q4_0 for faster token generation (roughly 1.8x faster than Q8_0 in the benchmarks above), but be aware that it may reduce the accuracy of the model's output.

- Model Optimization: Consider techniques like pruning or distillation to reduce the model size and improve inference speed.

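- Hardware Upgrade: Consider Apple chips with higher memory bandwidth and more GPU cores, such as the M1 Pro or M1 Max. In the benchmarks above, the extra GPU core improved prompt processing but not generation speed, so bandwidth matters at least as much as core count.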

FAQ

What is quantization and how does it work?

Quantization is like shrinking a huge map into a smaller one. A model's weights are normally stored with many bits each (typically 16), and quantization squeezes them into fewer bits. The quality usually remains good enough, while the model becomes smaller, uses less memory, and generates tokens faster.

How do I choose the right quantization level?

It depends. More bits usually mean better accuracy but slower generation and higher memory use. Fewer bits are like a smaller map: faster and lighter, but less detailed. Experiment with different levels and see what works best for your needs.
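One practical way to narrow the experiment is to start from memory, since on an M1 Mac the model shares unified memory with macOS and your other apps. The `pick_level` helper below is a hypothetical heuristic, not a rule from any tool: it estimates each level's weight size (assuming about 6.74 billion parameters and roughly 8.5 and 4.5 bits per weight for Q8_0 and Q4_0), reserves an assumed 4 GB of headroom, and suggests the most precise level that fits.

```python
from typing import Optional

PARAMS = 6.74e9  # assumption: approximate parameter count of Llama2 7B

# Assumed effective bits per weight, ordered from most to least precise.
LEVELS = [("Q8_0", 8.5), ("Q4_0", 4.5)]


def weights_gb(bits_per_weight: float, params: float = PARAMS) -> float:
    """Approximate size of the quantized weights in gigabytes (10**9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def pick_level(ram_gb: float, headroom_gb: float = 4.0) -> Optional[str]:
    """Return the most precise level whose weights fit in RAM minus headroom.

    `headroom_gb` is a hypothetical allowance for macOS, the KV cache, and other apps.
    """
    budget = ram_gb - headroom_gb
    for name, bits in LEVELS:
        if weights_gb(bits) <= budget:
            return name
    return None  # nothing fits under these assumptions


# Example memory sizes for M1 Macs.
for ram in (8, 16):
    print(f"{ram} GB Mac -> {pick_level(ram)}")
```

Treat the suggestion as a starting point; if output quality at the suggested level is not good enough for your task, that is the cue to try a more precise level on a machine with more memory.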

Keywords

Llama2 7B, Apple M1, Token Generation Speed, Quantization, Q8_0, Q4_0, GPU Cores, LLM, Natural Language Processing, NLP, AI, Machine Learning, Deep Learning, Performance Benchmarks, Device Comparison, Use Cases, Workarounds, Practical Recommendations, Model Optimization