Can I Run Llama2 7B on Apple M1 Max? Token Generation Speed Benchmarks

Introduction

The world of Large Language Models (LLMs) is exploding, with new models and advancements popping up like mushrooms after a good rain. But with all this excitement, a crucial question arises: can your humble laptop handle these powerful language giants?

This article dives deep into the performance of the popular Llama2 7B model on the Apple M1 Max chip, a powerful processor found in many MacBooks. We'll measure its token generation speed, the key metric for assessing how quickly an LLM can process and generate text. We'll also explore different quantization levels and their impact on performance, helping you understand which configuration works best for your needs.

Think of it as a race between the Llama2 7B model and your M1 Max, a battle to see who reigns supreme in the world of text generation! Buckle up, geeks, it's going to be a wild ride.

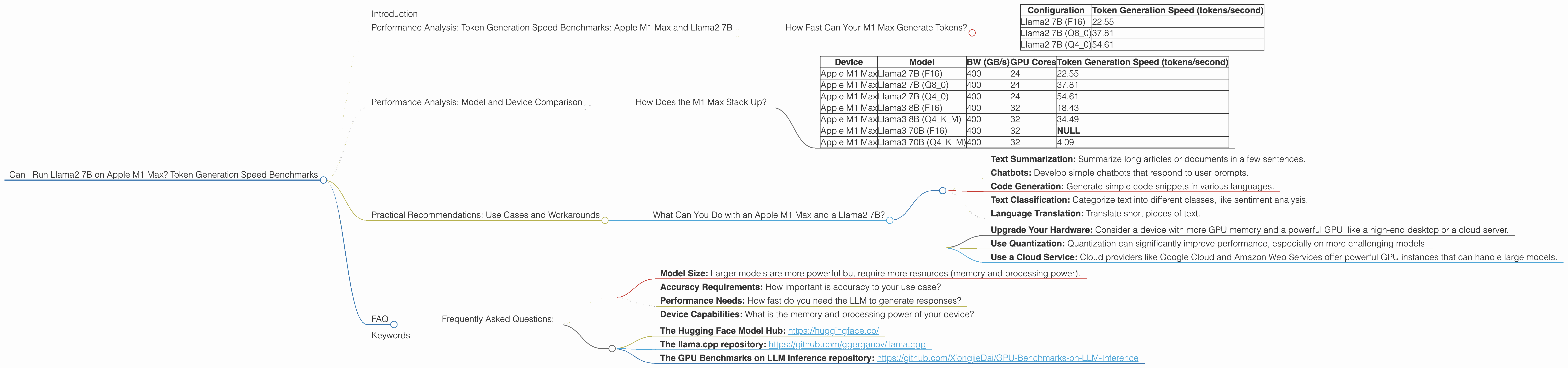

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 Max and Llama2 7B

How Fast Can Your M1 Max Generate Tokens?

Let's start with the heart of the matter: token generation speed. This number tells you how many tokens (roughly, word pieces) per second the model can produce on your device. Higher is better, of course!

| Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama2 7B (F16) | 22.55 |
| Llama2 7B (Q8_0) | 37.81 |
| Llama2 7B (Q4_0) | 54.61 |

Note: "F16" refers to using 16-bit floating-point precision, "Q8_0" uses 8-bit quantization, and "Q4_0" uses 4-bit quantization. Quantization is like compressing the model to make it smaller and faster, but it also sacrifices some accuracy.

Key Observations:

- Quantization Makes a Big Difference: As we move from F16 to Q8_0 and then to Q4_0, token generation speed rises from 22.55 to 37.81 to 54.61 tokens/second. This is because quantization shrinks the model's memory footprint, so fewer bytes have to be read from memory for every generated token.

- Finding the Sweet Spot: The ideal configuration depends on your application. If you need the highest accuracy, F16 may be the way to go. But if speed is paramount, Q4_0 might be your champion.
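To put numbers on these observations, here is a minimal sketch that compares the measured speedup with how much each format shrinks the weights. The speeds come from the table above. The bits-per-weight figures are assumptions based on the llama.cpp block layout (32 weights per block plus one 16-bit scale), and the helper name `relative_to_f16` is made up for this example.

```python
# Measured generation speeds (tokens/second) from the table above.
measured = {"F16": 22.55, "Q8_0": 37.81, "Q4_0": 54.61}

# Assumed bits stored per weight. Q8_0 and Q4_0 pack 32 weights per block
# plus one 16-bit scale, which works out to 8.5 and 4.5 bits per weight.
bits_per_weight = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def relative_to_f16(values, invert=False):
    """Return each value's ratio to the F16 entry (or the inverse ratio)."""
    base = values["F16"]
    return {k: (base / v if invert else v / base) for k, v in values.items()}


speedup = relative_to_f16(measured)                   # how much faster than F16
shrink = relative_to_f16(bits_per_weight, invert=True)  # how much smaller than F16

for name in measured:
    print(f"{name}: {speedup[name]:.2f}x faster, {shrink[name]:.2f}x smaller than F16")
```

Under these assumptions the speedup trails the size reduction: Q4_0 weights are about 3.6x smaller than F16 but generation is only about 2.4x faster, so something other than weight size is also costing time.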

Practical Analogy:

Imagine you have a car. An F16 model is like a luxury car – smooth and powerful but gas-guzzling. A Q4_0 model is like a compact car – zippy and efficient but maybe not as luxurious.

Performance Analysis: Model and Device Comparison

How Does the M1 Max Stack Up?

To get a better understanding of the M1 Max's performance, let's compare it to other devices and LLMs.

| Device | Model | Memory Bandwidth (GB/s) | GPU Cores | Token Generation Speed (tokens/second) |
|---|---|---|---|---|
| Apple M1 Max | Llama2 7B (F16) | 400 | 24 | 22.55 |
| Apple M1 Max | Llama2 7B (Q8_0) | 400 | 24 | 37.81 |
| Apple M1 Max | Llama2 7B (Q4_0) | 400 | 24 | 54.61 |
| Apple M1 Max | Llama3 8B (F16) | 400 | 32 | 18.43 |
| Apple M1 Max | Llama3 8B (Q4_K_M) | 400 | 32 | 34.49 |
| Apple M1 Max | Llama3 70B (F16) | 400 | 32 | N/A |
| Apple M1 Max | Llama3 70B (Q4_K_M) | 400 | 32 | 4.09 |

Key Observations:

- M1 Max is a Capable Performer: The M1 Max runs both Llama2 7B and Llama3 8B at usable speeds: 22.55 and 18.43 tokens/second at F16, rising to 54.61 (Q4_0) and 34.49 (Q4_K_M) with 4-bit quantization.

- Bandwidth Matters: Generating each token means reading the model's weights from memory, so the M1 Max's 400 GB/s memory bandwidth goes a long way toward determining how fast tokens can be produced.

- The 70B Juggernaut: Running the 70B model is a challenge for the M1 Max. Even with Q4_K_M quantization it manages only 4.09 tokens/second, and no F16 result is available. A model this size needs a device with more memory and more memory bandwidth to run at a comfortable speed.

Remember: These numbers are just a snapshot of performance. They can vary depending on the specific application, the prompt length, and other factors.
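The bandwidth observation can be turned into a back-of-the-envelope ceiling: if every generated token requires reading all the weights once, then tokens/second cannot exceed memory bandwidth divided by the size of the weights. The sketch below applies that to the Llama2 7B rows. The parameter count (about 6.74 billion) and the bits-per-weight values are assumptions, not figures from the benchmark, and the estimate ignores the KV cache and compute time.

```python
PARAMS = 6.74e9         # assumed parameter count for Llama2 7B (approximate)
BANDWIDTH_GB_S = 400.0  # M1 Max memory bandwidth from the table above

# Assumed storage cost per weight for each format.
bits_per_weight = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured speeds (tokens/second) from the table above.
measured = {"F16": 22.55, "Q8_0": 37.81, "Q4_0": 54.61}


def bandwidth_ceiling(params, bits, bandwidth_gb_s):
    """Upper bound on tokens/second if each token reads every weight once."""
    weight_gb = params * bits / 8 / 1e9
    return bandwidth_gb_s / weight_gb


for name, bits in bits_per_weight.items():
    ceiling = bandwidth_ceiling(PARAMS, bits, BANDWIDTH_GB_S)
    share = measured[name] / ceiling
    print(f"{name}: ceiling ~{ceiling:.1f} tok/s, measured {measured[name]} ({share:.0%} of ceiling)")
```

With these assumptions, the F16 run lands at roughly three quarters of its ceiling while Q4_0 reaches about half, which suggests the quantized formats are limited by more than bandwidth alone.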

Practical Recommendations: Use Cases and Workarounds

What Can You Do with an Apple M1 Max and a Llama2 7B?

The Apple M1 Max is a powerful device for running smaller LLMs like Llama2 7B. Here are some example use cases:

- Text Summarization: Summarize long articles or documents in a few sentences.

- Chatbots: Develop simple chatbots that respond to user prompts.

- Code Generation: Generate simple code snippets in various languages.

- Text Classification: Categorize text into different classes, like sentiment analysis.

- Language Translation: Translate short pieces of text.

Workarounds for Larger Models:

If you want to run larger models like Llama3 70B, you have a few options:

- Upgrade Your Hardware: Consider a device with more GPU memory and a powerful GPU, like a high-end desktop or a cloud server.

- Use Quantization: A 4-bit format such as Q4_K_M shrinks the weights enough for Llama3 70B to run on the M1 Max, though only at about 4 tokens/second in the benchmark above.

- Use a Cloud Service: Cloud providers like Google Cloud and Amazon Web Services offer powerful GPU instances that can handle large models.

The Bottom Line: The M1 Max is a fantastic option for running smaller, lighter LLM models. If you're working with larger ones, you'll need to explore more advanced hardware or cloud solutions.

FAQ

Frequently Asked Questions:

Q: What is an LLM?

A: An LLM (Large Language Model) is a type of artificial intelligence that can understand and generate human-like text. Think of it as a super-powered AI that can write stories, translate languages, and even answer your questions in a conversational way.

Q: What is Token Generation?

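A: Token generation is the process by which an LLM produces its output one token at a time. Tokens are the small units (words or pieces of words) that text is broken into before the model processes it; that splitting step is called tokenization. The speed at which an LLM can generate tokens, measured in tokens per second, is a key measure of its performance.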
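If you want to measure generation speed yourself, the basic idea is to count the tokens produced and divide by the elapsed time. Here is a minimal sketch of that calculation. `fake_generate_token` is a hypothetical stand-in for one decoding step of a real model, so the number it prints says nothing about the M1 Max.

```python
import time


def tokens_per_second(generate_token, n_tokens):
    """Time n_tokens calls to generate_token and return the average rate."""
    start = time.perf_counter()
    for _ in range(n_tokens):
        generate_token()
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed


def fake_generate_token():
    """Hypothetical placeholder: pretends each token takes about 1 ms."""
    time.sleep(0.001)
    return "tok"


rate = tokens_per_second(fake_generate_token, 50)
print(f"{rate:.1f} tokens/second")
```

In a real benchmark you would swap the placeholder for a call into your inference library and time prompt processing separately from generation, since the two phases behave differently.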

Q: What is Quantization?

A: Quantization is a technique used to reduce the size and memory footprint of LLM models. It works by converting the model's weights (the numbers that determine the model's output) to smaller, lower-precision values. This can improve performance but may slightly reduce accuracy.
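To make that concrete, here is a toy sketch of block-wise 8-bit quantization: a block of 32 weights shares one scale, and each weight is stored as a small integer. It is loosely modeled on the idea behind Q8_0, not a reproduction of any library's actual format, and the function names are made up for this example.

```python
import random

BLOCK_SIZE = 32  # weights per block; each block shares a single scale


def quantize_block(weights):
    """Map floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [round(w / scale) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quants]


random.seed(0)
weights = [random.uniform(-1, 1) for _ in range(BLOCK_SIZE)]

scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)

# The rounding error per weight is at most half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(f"scale={scale:.5f}, max rounding error={max_error:.5f}")
```

The rounding error is the "slightly reduced accuracy" mentioned above. Using fewer bits per weight makes each step coarser, so the error grows as the model shrinks.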

Q: Why are some model and device combinations not listed in the table?

A: Some combinations were not benchmarked by the researchers, so the data is not available. This doesn't necessarily mean the model cannot run on the device, just that the performance wasn't measured.

Q: How do I choose the right LLM and device for my project?

A: Consider the following factors:

- Model Size: Larger models are more powerful but require more resources (memory and processing power).

- Accuracy Requirements: How important is accuracy to your use case?

- Performance Needs: How fast do you need the LLM to generate responses?

- Device Capabilities: What is the memory and processing power of your device?
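A quick way to combine model size and device capabilities is to estimate whether the weights fit in memory at all. The sketch below does that under several loud assumptions: the parameter counts are approximate, 4.85 bits per weight for Q4_K_M is a rough average, the M1 Max is assumed to have 32 GB or 64 GB of unified memory, and `usable_fraction=0.75` is a guess at how much of that memory the model can actually use. It also ignores the KV cache and activations, so treat a "fits" as optimistic.

```python
RAM_OPTIONS_GB = (32, 64)  # assumed M1 Max unified memory configurations


def weights_gb(params, bits_per_weight):
    """Approximate size of the model weights in gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def fits(params, bits_per_weight, ram_gb, usable_fraction=0.75):
    """True if the weights fit in the assumed usable share of memory."""
    return weights_gb(params, bits_per_weight) <= ram_gb * usable_fraction


# (label, assumed parameter count, assumed bits per weight)
candidates = [
    ("Llama2 7B F16", 6.74e9, 16.0),
    ("Llama3 70B F16", 70.6e9, 16.0),
    ("Llama3 70B Q4_K_M", 70.6e9, 4.85),
]

for name, params, bits in candidates:
    size = weights_gb(params, bits)
    verdict = ", ".join(
        f"{ram} GB: {'fits' if fits(params, bits, ram) else 'too big'}"
        for ram in RAM_OPTIONS_GB
    )
    print(f"{name}: ~{size:.1f} GB of weights ({verdict})")
```

Under these assumptions, Llama3 70B at F16 needs around 141 GB and does not fit in either configuration, while the Q4_K_M version squeezes into 64 GB, which lines up with the benchmark table having a result only for the quantized 70B model.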

Q: Where can I learn more about LLMs and device performance?

A:

- The Hugging Face Model Hub: https://huggingface.co/

- The llama.cpp repository: https://github.com/ggerganov/llama.cpp

- The GPU Benchmarks on LLM Inference repository: https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference

Keywords

LLM, Llama2, Apple M1 Max, token generation speed, performance, quantization, F16, Q8_0, Q4_0, model size, device capabilities, practical recommendations, use cases, workarounds, GPU, bandwidth, memory, cloud services, FAQ, AI, text generation, natural language processing, deep learning.