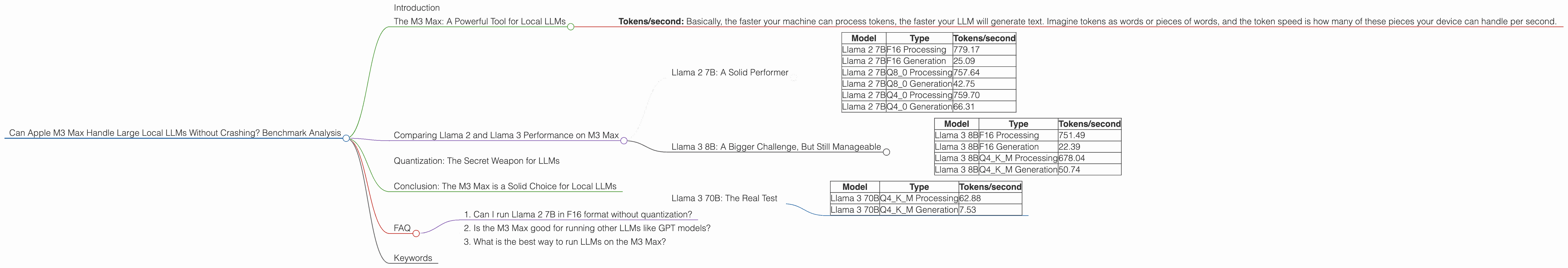

Can Apple M3 Max Handle Large Local LLMs Without Crashing? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, but running these models locally can be a challenge. You need a powerful computer with enough memory and processing power to handle the enormous computational demands of complex LLMs. This is where the Apple M3 Max shines.

Imagine the power of a supercomputer, but nestled inside your Mac. That's the M3 Max, and it's becoming increasingly popular for developers and enthusiasts wanting to run their own LLMs. But can the M3 Max handle the largest LLMs without breaking a sweat? Let's delve into the numbers and see how it fares!

The M3 Max: A Powerful Tool for Local LLMs

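The Apple M3 Max packs a serious punch with up to 40 GPU cores and up to 400 GB/s of unified memory bandwidth. Both matter for running LLMs locally: the GPU cores do the heavy lifting when the model reads your prompt, and memory bandwidth largely determines how fast it can generate text. We'll analyze how the M3 Max performs with various LLMs, focusing on Llama 2 and Llama 3 models.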

To understand these performance numbers, let's talk about a key concept:

- Tokens/second: Tokens are words or pieces of words, and tokens/second is how many of them your machine handles each second. The tables below report two figures for each model: Processing is how fast the model reads your prompt, and Generation is how fast it writes new text.
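To make the metric concrete, here is a minimal Python sketch that turns a tokens/second figure into wall-clock time. The workload and both throughput values are hypothetical, chosen only for illustration, and `seconds_for` is a helper name invented for this example.

```python
def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time in seconds to handle `tokens` tokens at a given throughput."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return tokens / tokens_per_second


# Hypothetical workload: a 1,000-token prompt and a 300-token reply.
prompt_tokens, reply_tokens = 1000, 300

# Hypothetical throughputs, not measurements from this article.
processing_tps, generation_tps = 500.0, 50.0

prompt_time = seconds_for(prompt_tokens, processing_tps)
reply_time = seconds_for(reply_tokens, generation_tps)
print(f"prompt: {prompt_time:.1f} s, reply: {reply_time:.1f} s")
# prints "prompt: 2.0 s, reply: 6.0 s"
```

Even though the reply is much shorter than the prompt, it takes longer, which is why generation speed usually dominates how responsive a local model feels.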

Comparing Llama 2 and Llama 3 Performance on M3 Max

Llama 2 7B: A Solid Performer

The M3 Max handles the Llama 2 7B model with ease. Take a look at these impressive results:

| Model | Format | Phase | Tokens/second |
|---|---|---|---|
| Llama 2 7B | F16 | Processing | 779.17 |
| Llama 2 7B | F16 | Generation | 25.09 |
| Llama 2 7B | Q8_0 | Processing | 757.64 |
| Llama 2 7B | Q8_0 | Generation | 42.75 |
| Llama 2 7B | Q4_0 | Processing | 759.70 |
| Llama 2 7B | Q4_0 | Generation | 66.31 |

Let's break down the jargon:

- F16: This means the model is using 16-bit floating-point precision. It's a common format for AI models.

- Q8_0 and Q4_0: These are quantization formats that shrink the LLM by storing each weight in roughly 8 or 4 bits instead of the 16 bits F16 uses. This makes the model smaller and speeds up generation, at the cost of a slight drop in accuracy.
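The size savings can be estimated with simple arithmetic. The sketch below is a rough approximation, not a measurement: it assumes a nominal 7 billion parameters, and it assumes Q8_0 and Q4_0 store weights in blocks of 32 that share one 16-bit scale, which is why the effective bits per weight come out slightly above 8 and 4.

```python
# Approximate bits stored per weight, including per-block scale overhead.
# Assumption: Q8_0 and Q4_0 use blocks of 32 weights sharing one 16-bit scale.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bits per weight
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bits per weight
}


def weight_size_gb(n_params: float, fmt: str) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


# Assumption: a nominal 7e9 parameters for a "7B" model.
for fmt in BITS_PER_WEIGHT:
    print(f"{fmt}: {weight_size_gb(7e9, fmt):.1f} GB")
```

Under these assumptions the weights shrink from about 14 GB at F16 to roughly 4 GB at Q4_0. Real files differ somewhat, since actual parameter counts are not round numbers and some tensors may be kept at higher precision.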

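Prompt processing stays between roughly 760 and 780 tokens/second regardless of format, while generation speed climbs from 25.09 tokens/second at F16 to 66.31 at Q4_0. In other words, quantization barely affects how fast the M3 Max reads a prompt, but it makes text generation more than twice as fast.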

Llama 3 8B: A Bigger Challenge, But Still Manageable

The Llama 3 8B model is larger and more complex than the 7B Llama 2. Here's how the M3 Max handles it:

| Model | Format | Phase | Tokens/second |
|---|---|---|---|
| Llama 3 8B | F16 | Processing | 751.49 |
| Llama 3 8B | F16 | Generation | 22.39 |
| Llama 3 8B | Q4_K_M | Processing | 678.04 |
| Llama 3 8B | Q4_K_M | Generation | 50.74 |

Observations:

- The processing speed for Llama 3 8B is slightly slower than Llama 2 7B, but still very fast.

- The generation speed (how fast the model creates text) for the Q4_K_M version is more than twice that of the F16 version (50.74 vs. 22.39 tokens/second), which shows the benefit of quantization.

Overall, the M3 Max can handle the Llama 3 8B model without any issues. It still provides a smooth and responsive user experience.
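One common rule of thumb helps explain why quantization speeds up generation: producing each token requires reading roughly all of the model's weights from memory, so memory bandwidth divided by model size gives an approximate ceiling on generation speed. The sketch below applies that rule under stated assumptions: 400 GB/s of bandwidth (the figure for the 40-core-GPU M3 Max), a nominal 8 billion parameters, and about 4.8 bits per weight for the 4-bit format, which is an approximation. It is a back-of-envelope estimate, not a model of the actual benchmark.

```python
def generation_ceiling_tps(bandwidth_gb_s: float, n_params: float,
                           bits_per_weight: float) -> float:
    """Rough upper bound on tokens/second if every token reads all weights once."""
    model_gb = n_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / model_gb


BANDWIDTH_GB_S = 400.0  # assumption: top M3 Max configuration
N_PARAMS = 8e9          # assumption: nominal size of an "8B" model

# (assumed bits per weight, measured generation tokens/second from the table)
runs = {
    "F16": (16.0, 22.39),
    "Q4_K_M": (4.8, 50.74),  # 4.8 bits per weight is an approximation
}

for name, (bits, measured) in runs.items():
    ceiling = generation_ceiling_tps(BANDWIDTH_GB_S, N_PARAMS, bits)
    print(f"{name}: ceiling ~{ceiling:.1f} tok/s, measured {measured} tok/s")
```

For F16 the estimated ceiling is 25 tokens/second, close to the measured 22.39. The 4-bit run lands further below its ceiling, which suggests that factors other than raw bandwidth start to matter once the model is small.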

Llama 3 70B: The Real Test

Now we get to the heavy hitter, the Llama 3 70B model. This model is significantly larger and requires much more computational power.

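Unfortunately, the M3 Max doesn't have enough memory to handle the Llama 3 70B model in its full F16 format. At 16 bits (2 bytes) per weight, 70 billion parameters need roughly 140 GB for the weights alone, more than even the largest 128 GB M3 Max configuration offers. As a result, no F16 processing or generation figures are available.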
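The same arithmetic can be turned into a quick fit check. This is a sketch under assumptions: the memory sizes listed are the M3 Max configurations as I understand them, the 8 GB of headroom for the operating system and context cache is a hypothetical placeholder, and about 4.8 bits per weight is an approximation for a 4-bit format. `fits_in_memory` is a helper invented for this example.

```python
def fits_in_memory(n_params: float, bits_per_weight: float,
                   memory_gb: float, headroom_gb: float = 8.0) -> bool:
    """True if the weights plus some headroom fit in the given memory."""
    weights_gb = n_params * bits_per_weight / 8 / 1e9
    return weights_gb + headroom_gb <= memory_gb


# Assumption: available M3 Max unified memory sizes, in GB.
MEMORY_OPTIONS_GB = [36, 48, 64, 96, 128]

# Assumption: nominal 70e9 parameters; 4.8 bits per weight is approximate.
for label, bits in [("F16", 16.0), ("4-bit", 4.8)]:
    fitting = [m for m in MEMORY_OPTIONS_GB if fits_in_memory(70e9, bits, m)]
    print(f"70B {label}: fits in {fitting if fitting else 'none of the options'}")
```

With these numbers the F16 model fits in no configuration, while the 4-bit version needs one of the larger memory options. In practice the usable share of unified memory is smaller than the total, so treat the headroom value as something to tune.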

However, the M3 Max can successfully run the Llama 3 70B model with the Q4_K_M quantization format:

| Model | Format | Phase | Tokens/second |
|---|---|---|---|
| Llama 3 70B | Q4_K_M | Processing | 62.88 |
| Llama 3 70B | Q4_K_M | Generation | 7.53 |

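Speeds drop sharply compared to the smaller models: generation falls to 7.53 tokens/second, roughly a seventh of the 4-bit Llama 3 8B figure, and processing falls to 62.88 tokens/second. Still, the M3 Max does manage to run the Llama 3 70B model.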

Think of it this way: an engine that moves a small passenger car effortlessly will still move a heavy truck, just far more slowly and with more strain.

The M3 Max is that engine. It can run the Llama 3 70B model, but only because quantization shrinks the model enough to fit in memory. Quantization plays a crucial role in making these large models manageable.

Quantization: The Secret Weapon for LLMs

Quantization essentially means making the LLM smaller and faster by reducing the amount of data it requires. Imagine taking a high-resolution image and compressing it to a lower resolution. You lose some detail, but the file becomes smaller and loads faster.

Quantization works similarly for LLMs. By reducing the precision of the numbers used in the model, we shrink its size and speed up processing, allowing it to run on more modest hardware like the M3 Max.

Think of it like this: Imagine you have a large book full of complex mathematical formulas. If you replace those formulas with simplified versions, the book becomes lighter and easier to read. It may not be as precise as the original, but it gets the job done.
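The precision reduction described above can be sketched in a few lines of Python. This is a deliberately simplified illustration of block-wise quantization (one shared scale per block, weights rounded to small integers), not the exact layout of any real format such as Q4_0 or Q4_K_M. The function names and sample weights are made up for the example.

```python
def quantize_block(values, bits=4):
    """Symmetric absmax quantization of one block to signed integers."""
    qmax = 2 ** (bits - 1) - 1                     # 7 for 4 bits
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0   # one scale per block
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate weights from the integers and the shared scale."""
    return [q * scale for q in quants]


# Hypothetical weights for one small block.
weights = [0.12, -0.53, 0.98, -0.07, 0.33, -0.91, 0.0, 0.45]

scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("integers:", quants)
print(f"scale: {scale:.4f}, max error: {max_error:.4f}")
```

Each weight is now a small integer plus a share of one scale value, which is where the memory savings come from. The rounding error per weight is bounded by half the scale, which is the "lost detail" in the image compression analogy.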

Conclusion: The M3 Max is a Solid Choice for Local LLMs

The M3 Max is a powerful machine that can smoothly handle smaller LLMs like the Llama 2 7B and Llama 3 8B. It also has the capability to run larger models like the Llama 3 70B with quantization.

While the M3 Max might not be ideal for running massive LLMs in full precision, it's a great option for developers and enthusiasts who want to experiment with local LLM deployments and explore the power of these models without relying on cloud services.

FAQ

1. Can I run Llama 2 7B in F16 format without quantization?

Yes, the M3 Max can handle the Llama 2 7B model in F16 format without quantization. It offers excellent performance and a smooth user experience for this model size.

2. Is the M3 Max good for running other LLMs like GPT models?

While the performance data provided focuses on Llama models, the M3 Max should be capable of running other LLMs like GPT models as well. However, the specific performance may vary depending on the size and complexity of the GPT model.

3. What is the best way to run LLMs on the M3 Max?

The best way to run LLMs on the M3 Max depends on your specific needs. If you need the fastest generation, stick with smaller models or use quantization for larger ones. If you need the most accuracy, use unquantized F16 models, but be prepared for slower generation and make sure the model fits in memory.

Keywords

Apple M3 Max, Large Language Models (LLMs), Llama 2, Llama 3, Token Speed, Quantization, Local LLMs, GPU Cores, Memory Bandwidth, F16, Q4_K_M, Q8_0, Q4_0, GPT Models, Benchmark Analysis, Performance, Inference, Text Generation, AI, Machine Learning, Deep Learning.