Can Apple M1 Handle Large Local LLMs Without Crashing? Benchmark Analysis

Introduction:

Are you a developer or a tech enthusiast trying to run large language models (LLMs) locally on your Apple M1 chip? You might be wondering, "Can my M1 handle these massive models without crashing?" This question is on the minds of many, as LLMs are becoming increasingly popular for various applications, from text generation and translation to code completion and chatbot development.

Choosing the right hardware is essential for smooth LLM operation, especially when working with large models. This article examines how well the Apple M1 processor runs large LLMs locally. We'll analyze benchmark data to see how the M1 performs with different models, explore the key factors that influence performance, and offer guidance for anyone planning to run LLMs locally.

Apple M1 Token Generation Speed: Examining the Numbers

To understand the M1's capabilities, we'll examine benchmark data representing the number of tokens processed per second. Tokens are the fundamental building blocks of text in LLMs, so the higher the token speed, the faster the model can process and generate text.

Benchmark Data: A Glimpse into Performance

The benchmark data we'll be analyzing focuses on the Apple M1 processor. Our primary source is this GitHub discussion, which collects performance results for the llama.cpp framework on different devices, including the M1.

Because this article is about the M1's ability to handle large LLMs, we'll concentrate on the biggest models the M1 data covers: Llama 2 7B and Llama 3 8B. The data does not cover every model and configuration.

Understanding the Data: Decode the Numbers

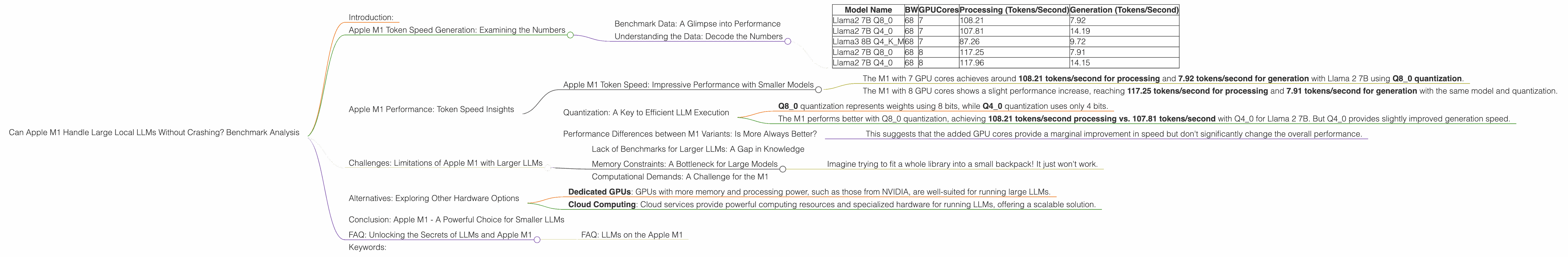

Here's a breakdown of the data columns:

- BW: Memory bandwidth in gigabytes per second (GB/s), i.e., how fast data moves between memory and the processor. Because generating each token requires reading the model's weights from memory, bandwidth strongly affects generation speed.

- GPUCores: This signifies the number of cores in the GPU, indicating processing power. The higher the number, the more parallel processing can occur.

- Model Name: The LLM being tested and its quantization format, such as Llama 2 7B Q8_0 or Llama 3 8B Q4_K_M.

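- Processing Tokens/Second: How many prompt (input) tokens the model can process per second. Prompt tokens are evaluated in parallel batches, so this figure is much higher than the generation speed.

- Generation Tokens/Second: How many new tokens the model can generate per second. Tokens are produced one at a time, so this is the speed at which output text appears.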
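Because the two columns measure different phases, a rough response time can be estimated by adding the prompt-processing time to the generation time. The sketch below assumes a hypothetical 512-token prompt and 128-token reply, uses the 7-GPU-core Llama 2 7B figures from the benchmark table in this section, and ignores model loading and other overheads.

```python
# Rough latency estimate: prompt tokens are processed in a batch (prefill),
# then reply tokens are generated one at a time (decode).
# Assumption: no model-loading time or other overheads are included.

def estimate_latency_s(prompt_tokens: int, reply_tokens: int,
                       pp_tok_s: float, tg_tok_s: float) -> float:
    """Seconds to process the prompt plus generate the reply."""
    return prompt_tokens / pp_tok_s + reply_tokens / tg_tok_s


# Llama 2 7B on the 7-GPU-core M1 (figures from the benchmark table)
q8_0 = estimate_latency_s(512, 128, pp_tok_s=108.21, tg_tok_s=7.92)
q4_0 = estimate_latency_s(512, 128, pp_tok_s=107.81, tg_tok_s=14.19)

print(f"Q8_0: {q8_0:.1f} s")
print(f"Q4_0: {q4_0:.1f} s")
```

Under these assumptions, about three-quarters of the Q8_0 response time is spent generating the 128 reply tokens, so the generation column is the one that matters most for chat-style use.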

The table below summarizes the relevant data:

| Model Name | BW (GB/s) | GPUCores | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|---|---|
| Llama 2 7B Q8_0 | 68 | 7 | 108.21 | 7.92 |
| Llama 2 7B Q4_0 | 68 | 7 | 107.81 | 14.19 |
| Llama 3 8B Q4_K_M | 68 | 7 | 87.26 | 9.72 |
| Llama 2 7B Q8_0 | 68 | 8 | 117.25 | 7.91 |
| Llama 2 7B Q4_0 | 68 | 8 | 117.96 | 14.15 |

Important Note: Model and configuration combinations that were not tested, or for which no data is available, are left out of the table.

Apple M1 Performance: Token Speed Insights

The data reveals some interesting trends. Let's analyze the findings and interpret their implications.

Apple M1 Token Speed: Impressive Performance with Smaller Models

The Apple M1 runs both Llama 2 7B and Llama 3 8B at practical speeds: roughly 87 to 118 tokens/second for prompt processing and 8 to 14 tokens/second for generation, depending on the configuration.

- With 7 GPU cores, the M1 processes 108.21 tokens/second and generates 7.92 tokens/second with Llama 2 7B at Q8_0 quantization.

- With 8 GPU cores, processing rises to 117.25 tokens/second (about 8% faster), while generation stays essentially flat at 7.91 tokens/second for the same model and quantization.

Quantization, however, has a much larger effect on generation speed than the extra GPU core.

Quantization: A Key to Efficient LLM Execution

Quantization is a technique used to reduce the size of an LLM by representing its weights using fewer bits, leading to faster processing and less memory usage. It's like shrinking a large file to make it easier to download!
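To put the memory savings in numbers, the sketch below estimates the weight storage for Llama 2 7B in three formats. It assumes roughly 6.74 billion parameters and llama.cpp-style block layouts in which every block of 32 weights also stores one 16-bit scale (34 bytes per block for Q8_0, 18 bytes for Q4_0). It also assumes every tensor uses the same format, which real model files do not always do, so treat the results as approximations.

```python
# Approximate weight storage for Llama 2 7B (~6.74e9 parameters) in
# different formats. Bits per weight include the per-block scale:
#   Q8_0: 32 x 8-bit values + one 16-bit scale = 34 bytes / 32 weights
#   Q4_0: 32 x 4-bit values + one 16-bit scale = 18 bytes / 32 weights
# Assumption: every tensor uses the same format (real files mix a few).

PARAMS = 6.74e9

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 34 * 8 / 32,   # 8.5 bits per weight
    "Q4_0": 18 * 8 / 32,   # 4.5 bits per weight
}


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for fmt, bpw in BITS_PER_WEIGHT.items():
    print(f"{fmt}: {bpw:.1f} bits/weight -> {weight_gb(PARAMS, bpw):.2f} GB")
```

Under these assumptions, the F16 weights alone exceed the memory of an 8 GB M1, while Q4_0 needs just over a quarter of the F16 footprint.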

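- Q8_0 stores weights as 8-bit integers and Q4_0 as 4-bit integers; in both formats, each block of 32 weights also carries one shared scale factor.

- For Llama 2 7B, prompt processing is essentially unaffected by the format: 108.21 (Q8_0) vs. 107.81 (Q4_0) tokens/second on the 7-core M1, and 117.25 vs. 117.96 on the 8-core M1. Generation is a different story: Q4_0 is about 1.8x faster (14.19 vs. 7.92 tokens/second), because each generated token requires reading roughly half as many bytes of weights.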
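A common rule of thumb explains the generation numbers: producing each token requires streaming (roughly) every weight from memory once, so generation speed cannot exceed memory bandwidth divided by weight size. The sketch below applies that bound to the 7-core M1 figures. It assumes about 6.74 billion parameters for Llama 2 7B, 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (including the per-block scale), and ignores KV-cache reads and compute time, so it gives an upper bound rather than a prediction.

```python
# Rule-of-thumb upper bound on generation speed: each generated token
# must stream (roughly) every weight from memory once, so
#   tokens/s <= memory bandwidth / weight size.
# Assumptions: ~6.74e9 parameters for Llama 2 7B, bits per weight that
# include the per-block scale, and no KV-cache or compute overhead.

PARAMS = 6.74e9
BANDWIDTH_GB_S = 68.0  # M1 memory bandwidth from the benchmark table


def generation_ceiling(bits_per_weight: float) -> float:
    """Bandwidth-bound maximum tokens/second for the given format."""
    weight_gb = PARAMS * bits_per_weight / 8 / 1e9
    return BANDWIDTH_GB_S / weight_gb


# format -> (bits per weight, measured generation tok/s on the 7-core M1)
measured = {"Q8_0": (8.5, 7.92), "Q4_0": (4.5, 14.19)}

for fmt, (bpw, observed) in measured.items():
    ceiling = generation_ceiling(bpw)
    print(f"{fmt}: ceiling ~{ceiling:.1f} tok/s, measured {observed} "
          f"({observed / ceiling:.0%} of ceiling)")
```

Going from 8.5 to 4.5 bits per weight raises the ceiling by about 1.9x, in line with the roughly 1.8x measured speedup. Prompt processing, which is generally compute-bound because each weight is reused across many prompt tokens, barely changes.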

Quantization trades accuracy for speed and memory: the fewer bits per weight, the coarser the approximation of the original weights, so output quality can degrade, usually more noticeably at 4 bits than at 8 bits. This is a crucial consideration when choosing a quantization level.
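The accuracy side of the trade-off can be seen with a toy example. The sketch below uses a simplified symmetric "absmax" block quantization, one float scale per block of 32 weights with integer codes in a fixed range, in the spirit of Q8_0 and Q4_0; llama.cpp's actual kernels differ in detail. The block of weights is randomly generated for illustration and is not taken from a real model.

```python
# Toy symmetric "absmax" block quantization: one float scale per block,
# integer codes in [-qmax, qmax]. A simplified illustration in the
# spirit of Q8_0 / Q4_0, not llama.cpp's exact kernels.
import random


def quantize_block(block, bits):
    qmax = 2 ** (bits - 1) - 1          # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(x) for x in block)
    scale = amax / qmax if amax > 0 else 1.0
    codes = [round(x / scale) for x in block]
    return scale, codes


def dequantize_block(scale, codes):
    return [scale * q for q in codes]


def max_abs_error(block, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    scale, codes = quantize_block(block, bits)
    restored = dequantize_block(scale, codes)
    return max(abs(a - b) for a, b in zip(block, restored))


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(32)]  # one 32-weight block

err8 = max_abs_error(weights, bits=8)
err4 = max_abs_error(weights, bits=4)
print(f"8-bit max error: {err8:.6f}")
print(f"4-bit max error: {err4:.6f}")
```

The 4-bit grid in this scheme has 15 levels instead of 255, so each weight can land much farther from its original value; that larger rounding error is where the accuracy loss comes from.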

Performance Differences between M1 Variants: Is More Always Better?

The 8-core M1 is faster at prompt processing, by about 8% with Q8_0 and 9% with Q4_0, but its generation speed is unchanged (7.91 vs. 7.92 and 14.15 vs. 14.19 tokens/second).

- Prompt processing is compute-bound, so the extra GPU core helps; generation is limited by memory bandwidth, and both variants share the same 68 GB/s, so the extra core makes no difference there.
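The comparison can be made explicit by dividing the 8-core figures by the 7-core figures for each format. The sketch below uses only the Llama 2 7B numbers from the benchmark table and sets them against the ratio of GPU core counts.

```python
# Compare the 8-core M1 against the 7-core M1 for Llama 2 7B, using the
# benchmark table: format -> (processing tok/s, generation tok/s).

seven_core = {"Q8_0": (108.21, 7.92), "Q4_0": (107.81, 14.19)}
eight_core = {"Q8_0": (117.25, 7.91), "Q4_0": (117.96, 14.15)}

core_ratio = 8 / 7  # ~1.14x more GPU cores

for fmt in seven_core:
    pp7, tg7 = seven_core[fmt]
    pp8, tg8 = eight_core[fmt]
    print(f"{fmt}: processing x{pp8 / pp7:.3f}, generation x{tg8 / tg7:.3f} "
          f"(cores x{core_ratio:.3f})")
```

Processing gains about 8 to 9% from 14% more cores, while the generation ratios sit at or just below 1.0, which fits the view that generation is bandwidth-bound on both variants.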

Challenges: Limitations of Apple M1 with Larger LLMs

While the M1 handles 7B- and 8B-parameter models well, larger models such as 70B-parameter variants pose significant challenges. The available data includes no M1 results for these models, which suggests possible limitations.

Lack of Benchmarks for Larger LLMs: A Gap in Knowledge

The absence of benchmark data for large LLMs like Llama 3 70B on the M1 highlights a crucial gap in our knowledge. This can indicate potential limitations in handling the memory requirements and computational demands of these models.

Memory Constraints: A Bottleneck for Large Models

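Larger LLMs require far more memory than the M1 offers. The M1 comes with at most 16 GB of unified memory, shared by the CPU, the GPU, and the operating system, while a 70B model's weights alone take roughly 40 GB even at 4-bit quantization.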

- Imagine trying to fit a whole library into a small backpack! It just won't work.
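A quick feasibility check shows why the 70B case is different. The sketch below assumes nominal parameter counts (7B, 8B, 70B), Q4_0 at about 4.5 bits per weight, and the M1's 8 GB and 16 GB memory configurations. It counts weights only; the KV cache, macOS, and other apps need memory too, so real requirements are higher.

```python
# Does a model's weights fit in M1 unified memory at all?
# Assumptions: nominal parameter counts, Q4_0 at ~4.5 bits per weight,
# and weights only (the KV cache, macOS and other apps need memory too,
# so real requirements are higher).

Q4_0_BITS = 4.5
M1_MEMORY_OPTIONS_GB = (8, 16)


def weights_gb(params: float, bits_per_weight: float = Q4_0_BITS) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


models = {"Llama 2 7B": 7e9, "Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

for name, params in models.items():
    need = weights_gb(params)
    fits = [mem for mem in M1_MEMORY_OPTIONS_GB if need < mem]
    if fits:
        verdict = "fits in " + ", ".join(f"{mem} GB" for mem in fits)
    else:
        verdict = "does not fit in any M1 configuration"
    print(f"{name}: ~{need:.1f} GB at Q4_0 -> {verdict}")
```

Even at 4 bits, a 70B model needs more than twice the M1's 16 GB maximum, so it cannot be held in memory at all, regardless of GPU core count.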

Computational Demands: A Challenge for the M1

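Large LLMs also demand more computation and memory traffic for every token. Generation speed is limited mainly by memory bandwidth: even if a 4-bit 70B model fit in memory, streaming its roughly 40 GB of weights over the M1's 68 GB/s bus for each token would cap generation below 2 tokens/second. In practice the memory shortfall comes first, and a model that doesn't fit typically fails to load or swaps heavily, which is where crashes and severe slowdowns come from.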

Alternatives: Exploring Other Hardware Options

While the M1 offers impressive performance for smaller LLMs, it might not be the best choice for running very large models. If you're working with models like Llama 3 70B, you might want to consider alternative hardware options:

- Dedicated GPUs: GPUs with more memory and processing power, such as those from NVIDIA, are well-suited for running large LLMs.

- Cloud Computing: Cloud services provide powerful computing resources and specialized hardware for running LLMs, offering a scalable solution.

Conclusion: Apple M1 - A Powerful Choice for Smaller LLMs

The Apple M1 chip is a capable choice for running smaller LLMs like Llama 2 7B and Llama 3 8B, delivering roughly 87 to 118 tokens/second for prompt processing and 8 to 14 tokens/second for generation in the benchmarks above.

However, if you're aiming to run larger models like Llama 3 70B locally, the M1 is not a suitable option. No benchmarks exist for this combination, and even at 4-bit quantization the weights need roughly 40 GB, well beyond the M1's 16 GB maximum. For these models, consider alternative hardware that can handle them effectively.

FAQ: Unlocking the Secrets of LLMs and Apple M1

Q: Are there any specific optimizations for Apple M1 when running LLMs? A: Yes. llama.cpp, the framework behind the benchmarks above, includes a Metal backend that runs inference on the M1's GPU, and Apple's MLX framework is built specifically for machine learning on Apple Silicon. The M1's unified memory also lets the GPU work on model weights without copying them into separate video memory. Further software improvements are likely to keep raising performance.

Q: How do I choose the right LLM for my M1? A: Consider the size of the model, the tasks you intend to perform, and the available memory on your device. Smaller models like Llama 2 7B and Llama 3 8B work well with the M1, but larger models might require more powerful hardware.

Q: Can I use an external GPU with my M1 for better LLM performance? A: No. Apple Silicon Macs, including M1 models, do not support external GPUs, so LLM inference has to run on the built-in CPU and GPU.

Keywords:

Apple M1, LLM, Llama 2, Llama 3, 7B, 8B, 70B, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Benchmark, GPU, Processor, Bandwidth, GPUCores, Memory, Cloud Computing, External GPU.