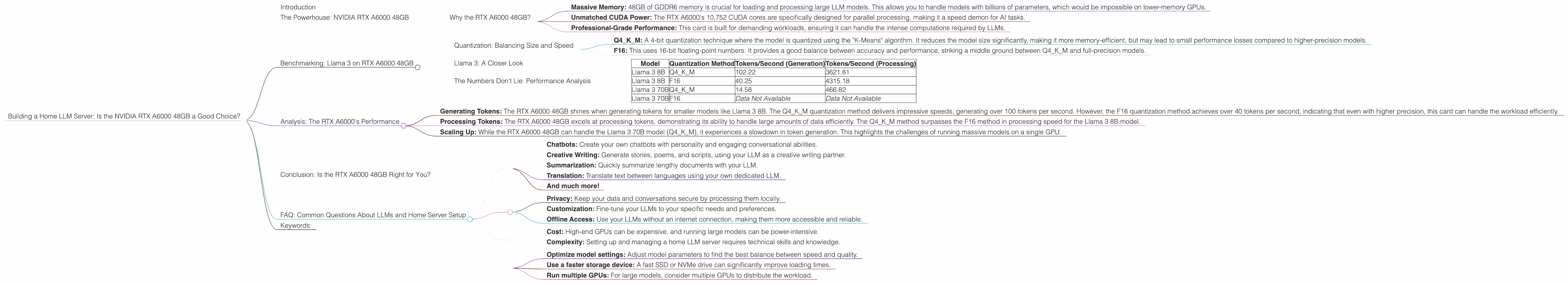

Building a Home LLM Server: Is the NVIDIA RTX A6000 48GB a Good Choice?

Introduction

The world of large language models (LLMs) is exploding, and with it, the desire to run these powerful AI models locally. Imagine having a personal AI assistant that can generate creative text, answer your questions, and even write code—all on your own hardware. This dream is becoming more accessible thanks to powerful GPUs like the NVIDIA RTX A6000 48GB.

But is the RTX A6000 48GB the right choice for building your home LLM server? In this article, we'll dive deep into the performance of this powerful GPU when running popular LLM models like Llama 3. We'll analyze the key factors that influence performance, understand the trade-offs between different quantization methods, and ultimately help you decide if the RTX A6000 48GB is the right fit for your LLM aspirations.

The Powerhouse: NVIDIA RTX A6000 48GB

The NVIDIA RTX A6000 48GB is a beastly graphics card designed for professional workloads, including AI and machine learning. With 48GB of GDDR6 memory and 10,752 CUDA cores, it's a serious contender for running large language models.

Why the RTX A6000 48GB?

- Massive Memory: 48GB of GDDR6 memory is crucial for loading and running large models. It lets you keep quantized models with tens of billions of parameters entirely in GPU memory, which lower-memory GPUs cannot do.

- CUDA Power: The RTX A6000's 10,752 CUDA cores handle the highly parallel matrix math that LLM inference relies on, making it a fast card for AI tasks.

- Professional-Grade Performance: This card is built for demanding workloads, ensuring it can handle the intense computations required by LLMs.

Benchmarking: Llama 3 on RTX A6000 48GB

Let's put the RTX A6000 48GB through its paces with the Llama 3 family of LLMs. We'll use data from real-world benchmarks to assess its performance on different model sizes and quantization methods.

Quantization: Balancing Size and Speed

LLMs are notoriously large, consuming significant amounts of memory. Quantization is a technique that reduces the size of the model by representing its weights using fewer bits. Think of it like compressing a file: you lose some detail, but the model takes up less memory and often generates tokens faster.
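To make the compression analogy concrete, here is a minimal Python sketch of block-wise 4-bit quantization. It is a deliberately simplified symmetric scheme, not the actual Q4KM format, and the function names and sample weights are made up for illustration.

```python
def quantize_block(weights, bits=4):
    """Map one block of floats to small signed integers plus a shared scale."""
    qmax = 2 ** (bits - 1) - 1                    # 7 for 4-bit codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest code and clamp to the valid range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate weights from the codes and the shared scale."""
    return [scale * c for c in codes]


# Hypothetical block of weights, chosen only for the example.
block = [0.12, -0.53, 0.31, 0.07, -0.22, 0.48, -0.05, 0.0]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print(f"scale={scale:.4f}, max error={max_error:.4f}")
```

Each weight is now stored as a 4-bit code plus one shared scale per block, and the rounding error per weight is at most half the scale. That error is the "lost detail" in the file-compression analogy.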

We'll be looking at two popular quantization levels:

- Q4KM: A 4-bit quantization format, written Q4_K_M in llama.cpp. The "K" refers to the "k-quant" family of block-wise formats (not the k-means algorithm), and the "M" marks the medium variant. It reduces the model size significantly, making it more memory-efficient, but may cause a small loss in output quality compared to higher-precision models.

- F16: This stores the weights as 16-bit floating-point numbers. For inference it is effectively the unquantized baseline: it preserves model quality but needs about 2 bytes per parameter, several times the memory of Q4KM.
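A rough way to see what these two levels mean for a 48GB card is to estimate the size of the weights alone. The sketch below assumes nominal parameter counts (8 and 70 billion) and an average of about 4.8 bits per weight for Q4KM, which is an approximation rather than a published figure. It also ignores the KV cache and runtime overhead, so real memory use will be higher.

```python
VRAM_GB = 48  # RTX A6000 memory, treated as decimal gigabytes for simplicity

# Average bits per weight. 16 is exact for F16; 4.8 for Q4KM is an
# assumed approximation, not a published specification.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.8}


def weight_gb(params_billion, quant):
    """Estimated size of the weights alone, in decimal GB."""
    total_bits = params_billion * 1e9 * BITS_PER_WEIGHT[quant]
    return total_bits / 8 / 1e9


for name, params in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for quant in ("Q4KM", "F16"):
        size = weight_gb(params, quant)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{name} {quant}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions, Llama 3 70B needs about 140 GB at F16 but only about 42 GB at Q4KM, which is why the quantized version is the one that can run on a single 48GB card.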

Llama 3: A Closer Look

Llama 3 8B: This model is a great starting point for experimenting with LLMs. Its relatively small size allows for faster loading and processing times, making it perfect for testing and exploring LLM capabilities.

Llama 3 70B: A much larger model that packs a punch in terms of capabilities and performance. It's capable of more complex tasks and can generate more nuanced and creative outputs.

The Numbers Don't Lie: Performance Analysis

| Model | Quantization Method | Tokens/Second (Generation) | Tokens/Second (Processing) |
|---|---|---|---|
| Llama 3 8B | Q4KM | 102.22 | 3621.81 |
| Llama 3 8B | F16 | 40.25 | 4315.18 |
| Llama 3 70B | Q4KM | 14.58 | 466.82 |
| Llama 3 70B | F16 | Data Not Available | Data Not Available |

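Important Note: No data on the RTX A6000 48GB's performance for Llama 3 70B F16 is available at this time. This is not surprising: at 2 bytes per parameter, the F16 weights alone come to roughly 140GB, far more than the card's 48GB of memory.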
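The ratios between these figures are easier to reason about than the raw numbers. Here is a short Python sketch that computes them from the table above (the variable names are illustrative):

```python
# (generation tokens/s, processing tokens/s), copied from the table above.
results = {
    ("Llama 3 8B", "Q4KM"): (102.22, 3621.81),
    ("Llama 3 8B", "F16"): (40.25, 4315.18),
    ("Llama 3 70B", "Q4KM"): (14.58, 466.82),
}

gen_8b_q4, proc_8b_q4 = results[("Llama 3 8B", "Q4KM")]
gen_8b_f16, proc_8b_f16 = results[("Llama 3 8B", "F16")]
gen_70b_q4, proc_70b_q4 = results[("Llama 3 70B", "Q4KM")]

# How much faster Q4KM generates than F16 on the 8B model.
gen_speedup_q4 = gen_8b_q4 / gen_8b_f16
# How much faster F16 processes tokens than Q4KM on the 8B model.
proc_speedup_f16 = proc_8b_f16 / proc_8b_q4
# How much slower the 70B model generates than the 8B model (both Q4KM).
gen_slowdown_70b = gen_8b_q4 / gen_70b_q4

print(f"8B generation, Q4KM vs F16: {gen_speedup_q4:.2f}x")
print(f"8B processing, F16 vs Q4KM: {proc_speedup_f16:.2f}x")
print(f"Q4KM generation, 8B vs 70B: {gen_slowdown_70b:.2f}x")
```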

Analysis: The RTX A6000's Performance

- Generating Tokens: The RTX A6000 48GB shines when generating tokens for smaller models like Llama 3 8B. With Q4KM it generates over 100 tokens per second, about 2.5 times the F16 rate. F16 still reaches just over 40 tokens per second, so the card handles the higher-precision model comfortably as well.

- Processing Tokens: The RTX A6000 48GB also processes tokens quickly, at several thousand tokens per second for Llama 3 8B. Here the ranking flips: F16 (4315.18 tokens per second) is about 19% faster than Q4KM (3621.81 tokens per second), so the quantized model's speed advantage applies to generation, not processing.

- Scaling Up: The RTX A6000 48GB can run Llama 3 70B with Q4KM, but generation drops to 14.58 tokens per second, about one seventh of the 8B Q4KM rate. This highlights the cost of running very large models on a single GPU.
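To translate these throughput figures into waiting time, you can add the time spent processing the prompt to the time spent generating the reply. The sketch below is a rough estimate. It assumes the "processing" figure measures prompt tokens and that both rates stay constant regardless of context length, which is a simplification. The 1,000-token prompt and 500-token reply are made-up example values.

```python
def estimate_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough response time: prompt processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Rates from the benchmark table (tokens per second).
llama3_8b_q4km = {"processing_tps": 3621.81, "generation_tps": 102.22}
llama3_70b_q4km = {"processing_tps": 466.82, "generation_tps": 14.58}

# Hypothetical request: 1,000-token prompt, 500-token reply.
seconds_8b = estimate_seconds(1000, 500, **llama3_8b_q4km)
seconds_70b = estimate_seconds(1000, 500, **llama3_70b_q4km)

print(f"Llama 3 8B Q4KM:  ~{seconds_8b:.1f} s")
print(f"Llama 3 70B Q4KM: ~{seconds_70b:.1f} s")
```

For this example request, the estimate is about 5 seconds on the 8B model and about 36 seconds on the 70B model, with generation dominating in both cases.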

Conclusion: Is the RTX A6000 48GB Right for You?

The NVIDIA RTX A6000 48GB is a fantastic option for building a home LLM server, especially if you're looking to run smaller models like Llama 3 8B. Its massive memory and raw processing power enable impressive performance with both Q4KM and F16 quantization.

However, if you're aiming for the highest speeds with larger models like Llama 3 70B, or want to run them at higher precision than Q4KM, you might need to consider more powerful GPU setups or alternative approaches like splitting the model across multiple GPUs.

For the vast majority of hobbyists and developers exploring LLMs, the RTX A6000 48GB presents a compelling balance between cost, performance, and capability.

FAQ: Common Questions About LLMs and Home Server Setup

Q: What are the best models to run on my home server?

A: For a home server, starting with smaller models like Llama 3 8B or similar models is a good idea. Larger models like Llama 3 70B require far more memory and computing power: on a single 48GB GPU they fit only when quantized (for example, with Q4KM) and generate tokens much more slowly.

Q: What types of tasks can I do with a home LLM server?

A: You can use your home LLM server for a wide range of tasks:

- Chatbots: Create your own chatbots with personality and engaging conversational abilities.

- Creative Writing: Generate stories, poems, and scripts, using your LLM as a creative writing partner.

- Summarization: Quickly summarize lengthy documents with your LLM.

- Translation: Translate text between languages using your own dedicated LLM.

- And much more!

Q: What are the benefits of running LLMs locally?

A: There are a few key benefits to running LLMs on your own hardware:

- Privacy: Keep your data and conversations secure by processing them locally.

- Customization: Fine-tune your LLMs to your specific needs and preferences.

- Offline Access: Use your LLMs without an internet connection, making them more accessible and reliable.

Q: What are the challenges of running LLMs locally?

A: Running LLMs locally can be challenging due to:

- Cost: High-end GPUs can be expensive, and running large models can be power-intensive.

- Complexity: Setting up and managing a home LLM server requires technical skills and knowledge.

Q: How can I improve performance?

A: There are several ways to improve performance:

- Optimize model settings: Adjust settings such as the quantization level to find the best balance between speed and quality.

- Use a faster storage device: A fast SSD, ideally an NVMe drive, can significantly shorten model loading times.

- Run multiple GPUs: For large models, consider multiple GPUs to distribute the workload.
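One simple way to think about distributing the workload is to give each GPU a share of the model's layers in proportion to its memory. The sketch below uses a hypothetical helper, not the API of any particular inference runtime, and the 80-layer count used for Llama 3 70B is an assumption of the example.

```python
def split_layers(n_layers, vram_gb_per_gpu):
    """Assign layers to GPUs in proportion to each GPU's memory."""
    total_vram = sum(vram_gb_per_gpu)
    exact = [n_layers * v / total_vram for v in vram_gb_per_gpu]
    counts = [int(x) for x in exact]  # round down first
    # Hand the leftover layers to the GPUs with the largest fractional parts.
    leftover = n_layers - sum(counts)
    by_remainder = sorted(
        range(len(exact)), key=lambda i: exact[i] - counts[i], reverse=True
    )
    for i in by_remainder[:leftover]:
        counts[i] += 1
    return counts


N_LAYERS = 80  # assumed layer count for Llama 3 70B

print(split_layers(N_LAYERS, [48, 48]))  # two 48GB cards
print(split_layers(N_LAYERS, [48, 24]))  # one 48GB card plus one 24GB card
```

Real runtimes also have to account for the KV cache, activation buffers, and the cost of moving data between cards, so treat the result as a starting point rather than a final configuration.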
