Building a Home LLM Server: Is the NVIDIA RTX 6000 Ada 48GB a Good Choice?

Introduction: Diving into the World of Local LLMs

Imagine having your own personal AI assistant, ready to answer your questions, write creative content, or even help you with coding. This is the dream of many tech enthusiasts, fueled by the rise of large language models (LLMs) like ChatGPT. But hosting these powerful models on your own machine can feel like a daunting task.

This is where the NVIDIA RTX 6000 Ada 48GB comes in. This professional graphics card is a popular choice for running LLMs locally because it packs strong performance and a large 48 GB memory pool into a single card. But is it the right fit for your needs? Let's dive into the details and explore the possibilities.

The NVIDIA RTX 6000 Ada 48GB: A Powerful Option for Local LLMs

The NVIDIA RTX 6000 Ada is a high-end workstation graphics card designed for professional workloads, including AI and machine learning. It pairs 48 GB of GDDR6 memory with the Ada Lovelace architecture, giving it both the horsepower and the capacity to handle demanding tasks like running large language models.

But can this beast handle LLMs like Llama 3, a popular open-source offering? Let's explore its performance with some real-world numbers.

Performance Analysis: RTX 6000 Ada vs. Llama 3

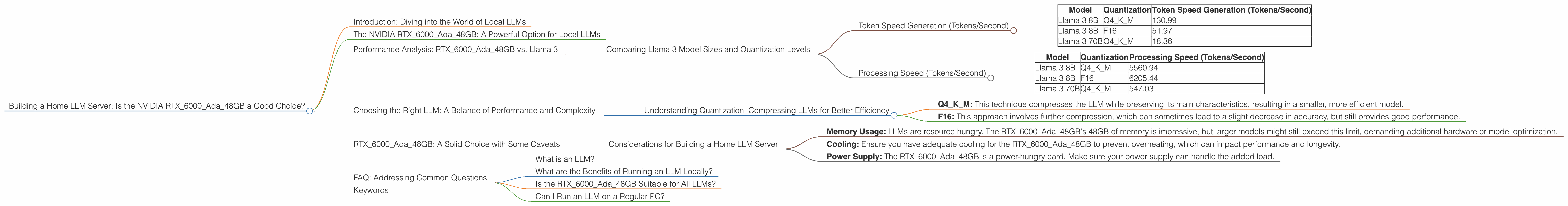

Comparing Llama 3 Model Sizes and Quantization Levels

Our analysis focuses on the performance of the RTX 6000 Ada with Llama 3, a family of LLMs known for its strong text generation. We compare two popular model sizes, Llama 3 8B and Llama 3 70B (8 and 70 billion parameters). Each is tested at two precision levels:

- Q4_K_M: A 4-bit "k-quant" format from the llama.cpp/GGUF ecosystem and a popular choice for balancing speed, memory usage, and quality. Think of it like compressing the model for better efficiency, at the cost of a small loss in accuracy.

- F16: 16-bit half-precision weights, essentially the uncompressed model. It uses the most memory (roughly three times as much as Q4_K_M) but gives the most accurate results.

Note: There are no results for Llama 3 70B in F16. At 16 bits (2 bytes) per weight, the 70B model's weights alone take about 140 GB, far more than the card's 48 GB, so this combination cannot run entirely on a single RTX 6000 Ada.
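The missing 70B F16 result follows from simple arithmetic: weight memory is roughly the parameter count times the bits per weight. The sketch below assumes nominal parameter counts of 8 and 70 billion and an average of about 4.85 bits per weight for Q4_K_M (an approximation; actual GGUF file sizes vary slightly), and it ignores the context cache and runtime buffers.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 = bytes.
# Assumptions: nominal parameter counts and ~4.85 bits/weight for Q4_K_M
# (approximate); KV cache and runtime buffers are ignored.
VRAM_GB = 48

BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}  # Q4_K_M value is approximate
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for model, params in PARAMS.items():
    for quant, bpw in BITS_PER_WEIGHT.items():
        size = weight_gb(params, bpw)
        verdict = "fits" if size < VRAM_GB else "does NOT fit"
        print(f"{model:12} {quant:7} ~{size:6.1f} GB -> {verdict} in {VRAM_GB} GB")
```

On these numbers, Llama 3 70B in Q4_K_M needs roughly 42 GB for its weights, which fits but leaves only about 5 to 6 GB for everything else.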

Token Generation Speed (Tokens/Second)

| Model | Quantization | Generation speed (tokens/s) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 130.99 |
| Llama 3 8B | F16 | 51.97 |
| Llama 3 70B | Q4_K_M | 18.36 |

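The RTX 6000 Ada performs well with Llama 3 8B, generating just over 130 tokens per second with Q4_K_M quantization. That translates to snappy responses, fast enough for interactive chat or a coding assistant. In F16 the same model manages about 52 tokens per second: generating each token means reading essentially all of the model's weights from memory, and F16 weights take roughly three times as many bytes as Q4_K_M.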

With the larger Llama 3 70B model, generation drops to 18.36 tokens per second in Q4_K_M, because each token now requires reading almost nine times as many weights. This is still usable and faster than most people read, but long answers take noticeably longer to finish.
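The memory-reading explanation can be checked with a back-of-the-envelope ceiling: if every generated token needs one full pass over the weights, the best possible speed is memory bandwidth divided by weight size. This sketch assumes 960 GB/s (NVIDIA's published bandwidth figure for the RTX 6000 Ada; verify against the datasheet), nominal parameter counts, and about 4.85 bits per weight for Q4_K_M, and it ignores KV-cache reads and kernel overheads. The measured rates are the ones from the table above.

```python
# Rough upper bound on generation speed for a memory-bandwidth-bound decoder:
# each new token needs (roughly) one full read of the weights from VRAM.
# Assumptions: 960 GB/s peak bandwidth, nominal parameter counts,
# ~4.85 bits/weight for Q4_K_M; KV-cache traffic and overheads ignored.
BANDWIDTH_GBPS = 960.0

CONFIGS = [
    # (label, parameters, bits per weight, measured tokens/s from the table)
    ("Llama 3 8B Q4_K_M", 8e9, 4.85, 130.99),
    ("Llama 3 8B F16", 8e9, 16.0, 51.97),
    ("Llama 3 70B Q4_K_M", 70e9, 4.85, 18.36),
]


def ceiling_tokens_per_s(params: float, bits_per_weight: float) -> float:
    """Bandwidth divided by the bytes read per token (the weights)."""
    weight_gb = params * bits_per_weight / 8 / 1e9
    return BANDWIDTH_GBPS / weight_gb


for label, params, bpw, measured in CONFIGS:
    ceiling = ceiling_tokens_per_s(params, bpw)
    print(f"{label:19} ceiling ~{ceiling:6.1f} tok/s, measured {measured:6.2f} "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the measured speeds land at roughly 65% to 90% of the ceiling, which is consistent with generation being limited by memory bandwidth rather than raw compute.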

Prompt Processing Speed (Tokens/Second)

| Model | Quantization | Prompt processing speed (tokens/s) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 5560.94 |
| Llama 3 8B | F16 | 6205.44 |
| Llama 3 70B | Q4_K_M | 547.03 |

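Prompt processing is where the RTX 6000 Ada shines. Before generating anything, the model must read the entire prompt, and the card gets through more than 5,500 tokens per second with Llama 3 8B and about 547 with Llama 3 70B. This speed matters most for long inputs, such as pasted documents, long chat histories, or retrieved context, because it determines how long you wait before the first word of the reply appears. Interestingly, F16 processes prompts slightly faster than Q4_K_M here, likely because prompt processing is limited more by compute than by memory, so smaller weights help less and unpacking them adds some overhead.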
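The two tables combine into a simple latency estimate for a single request: prompt processing sets the wait before the first token, and generation speed sets how long the rest of the reply takes. This sketch uses the measured rates from the tables; the 2,000-token prompt and 300-token reply are hypothetical example sizes, and real runs add some overhead (for example, generation slows slightly as the context grows).

```python
# Rough end-to-end latency for one request using the measured rates:
# the prompt is processed first (prefill), then the reply is generated
# token by token. Prompt and reply lengths are hypothetical examples.
RATES = {
    # label: (prompt processing tokens/s, generation tokens/s)
    "Llama 3 8B Q4_K_M": (5560.94, 130.99),
    "Llama 3 8B F16": (6205.44, 51.97),
    "Llama 3 70B Q4_K_M": (547.03, 18.36),
}


def request_latency_s(prompt_tokens: int, reply_tokens: int,
                      prefill_rate: float, gen_rate: float) -> tuple[float, float]:
    """Return (time to first token, total time) in seconds."""
    ttft = prompt_tokens / prefill_rate
    return ttft, ttft + reply_tokens / gen_rate


PROMPT_TOKENS, REPLY_TOKENS = 2000, 300  # hypothetical request size
for label, (prefill, gen) in RATES.items():
    ttft, total = request_latency_s(PROMPT_TOKENS, REPLY_TOKENS, prefill, gen)
    print(f"{label:19} first token after ~{ttft:4.1f} s, done after ~{total:5.1f} s")
```

With these example sizes, Llama 3 70B takes about 20 seconds in total, most of it spent generating; prompt processing dominates only when prompts are much longer than the replies.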

Choosing the Right LLM: A Balance of Performance and Complexity

Which model you run matters as much as the hardware you run it on. Larger models like Llama 3 70B offer greater capabilities and more accurate answers, but they need far more memory and generate text more slowly. Smaller models like Llama 3 8B are easier to host and can perform well even on modest hardware.
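One way to apply this trade-off is to set a minimum generation speed you can live with and pick the largest model that meets it. The hypothetical helper below does that over the three measured configurations from the tables; the rule of preferring the larger model first and the higher precision second is an assumption about what you value, not a general recommendation.

```python
# Hypothetical helper: choose the largest measured configuration whose
# generation speed meets a minimum; ties go to the higher-precision option.
from typing import NamedTuple, Optional


class Config(NamedTuple):
    model: str
    params_b: int     # nominal parameter count, in billions
    quant: str
    bits: int         # nominal bits per weight (used only to rank precision)
    gen_tok_s: float  # measured generation speed from the table


MEASURED = [
    Config("Llama 3 8B", 8, "Q4_K_M", 4, 130.99),
    Config("Llama 3 8B", 8, "F16", 16, 51.97),
    Config("Llama 3 70B", 70, "Q4_K_M", 4, 18.36),
]


def pick_config(min_tok_s: float) -> Optional[Config]:
    """Largest model meeting the speed floor; ties go to higher precision."""
    ok = [c for c in MEASURED if c.gen_tok_s >= min_tok_s]
    return max(ok, key=lambda c: (c.params_b, c.bits), default=None)


for floor in (10, 30, 100, 200):
    pick = pick_config(floor)
    choice = f"{pick.model} {pick.quant}" if pick else "no measured configuration"
    print(f"need >= {floor:>3} tok/s -> {choice}")
```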

Understanding Quantization: Compressing LLMs for Better Efficiency

Quantization is a technique used to compress LLMs by storing each weight with fewer bits, making the model smaller and faster to run. Think of it like saving an image at a smaller file size without losing too much quality. In our analysis, we compared Q4_K_M against F16:

- Q4_K_M: This format stores most weights in about 4 bits plus small per-block scale factors, shrinking the model to roughly 30% of its F16 size while preserving most of its quality.

- F16: This is the 16-bit half-precision baseline, essentially uncompressed. It needs the most memory and is the more accurate of the two, but because there are more bytes to read for every token, it generates text more slowly than Q4_K_M.
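To make the idea concrete, here is a toy block-wise 4-bit quantizer in plain Python. It is a simplified illustration, not the actual Q4_K_M format: llama.cpp's k-quants group blocks into larger super-blocks, quantize the scales and minimums themselves, and Q4_K_M keeps some tensors at higher precision. The block size of 32 and the random Gaussian "weights" are assumptions for the demo.

```python
# Toy block-wise 4-bit quantization (NOT the real Q4_K_M layout).
# Each block of 32 weights shares one float scale; each weight becomes a
# signed 4-bit integer in [-8, 7]. Dequantizing multiplies back by the scale.
import random

BLOCK = 32


def quantize_block(ws: list[float]) -> tuple[float, list[int]]:
    amax = max(abs(w) for w in ws)
    scale = amax / 7 if amax > 0 else 1.0
    qs = [max(-8, min(7, round(w / scale))) for w in ws]  # clamp to 4 bits
    return scale, qs


def dequantize_block(scale: float, qs: list[int]) -> list[float]:
    return [q * scale for q in qs]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # stand-in weights

restored = []
for i in range(0, len(weights), BLOCK):
    scale, qs = quantize_block(weights[i:i + BLOCK])
    restored.extend(dequantize_block(scale, qs))

rms_w = (sum(w * w for w in weights) / len(weights)) ** 0.5
rms_err = (sum((a - b) ** 2 for a, b in zip(weights, restored)) / len(weights)) ** 0.5
bits = 4 + 16 / BLOCK  # 4-bit values + one scale per block, if stored as 16-bit
print(f"~{bits:.1f} bits/weight vs 16 for F16; relative RMS error {rms_err / rms_w:.1%}")
```

Storing one 16-bit scale per 32 weights works out to 4.5 bits per weight, and the printed relative error shows how much precision the rounding gives up in exchange.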

RTX 6000 Ada: A Solid Choice with Some Caveats

The RTX 6000 Ada is an excellent card for running LLMs locally. Its 48 GB of memory lets a single card run models as large as Llama 3 70B at 4-bit quantization, making it a strong option for developers and enthusiasts, though it is an expensive professional card. Even so, you may hit limits with larger models, higher precision, or very long contexts.

Considerations for Building a Home LLM Server

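- Memory Usage: LLMs are memory hungry. The RTX 6000 Ada's 48 GB is generous, but larger models can still exceed it (Llama 3 70B in F16 needs about 140 GB for its weights alone), and the context cache grows with prompt length. Beyond that limit you need more aggressive quantization, a second GPU, or partial offloading to the CPU.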

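- Cooling: The RTX 6000 Ada uses a blower-style cooler that exhausts heat out of the back of the case, but it still needs steady airflow. If it runs too hot, it throttles its clocks and performance drops, and sustained high temperatures can shorten its lifespan.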

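- Power Supply: The RTX 6000 Ada is rated at 300 W, and long LLM sessions can keep it near that limit for extended periods. Make sure your power supply has comfortable headroom for the card plus the rest of the system.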
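The memory bullet above can be made concrete with a rough budget. Besides the weights, the runtime keeps a key/value (KV) cache for every token in the context, and it grows linearly with context length. The layer count, KV-head count, and head dimension below come from the published Llama 3 model configurations; storing the cache in 16-bit precision, using 4.85 bits per weight for Q4_K_M, and ignoring scratch buffers are simplifying assumptions.

```python
# Rough VRAM budget: weights + KV cache for a given context length.
# Assumptions: KV cache stored at 2 bytes/element, ~4.85 bits/weight for
# Q4_K_M, nominal parameter counts; scratch buffers are ignored.
VRAM_GB = 48

LLAMA3 = {
    # name: (parameters, layers, kv_heads, head_dim)
    "8B": (8e9, 32, 8, 128),
    "70B": (70e9, 80, 8, 128),
}


def kv_cache_gb(layers: int, kv_heads: int, head_dim: int,
                context: int, bytes_per_elem: int = 2) -> float:
    """K and V tensors per layer, each kv_heads * head_dim values per token."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem * context / 1e9


def total_gb(name: str, bits_per_weight: float, context: int) -> float:
    params, layers, kv_heads, head_dim = LLAMA3[name]
    weights = params * bits_per_weight / 8 / 1e9
    return weights + kv_cache_gb(layers, kv_heads, head_dim, context)


for ctx in (4096, 8192):
    need = total_gb("70B", 4.85, ctx)
    print(f"70B Q4_K_M @ {ctx:>5} tokens: ~{need:.1f} GB of {VRAM_GB} GB")
```

Under these assumptions, Llama 3 70B in Q4_K_M at its native 8,192-token context needs about 45 GB, which is why it fits on this card with little room to spare.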

FAQ: Addressing Common Questions

What is an LLM?

A large language model (LLM) is a type of artificial intelligence that uses deep learning algorithms to understand and generate human-like text. Think of it as a superpowered text generator that can write stories, answer questions, and even translate languages.

What are the Benefits of Running an LLM Locally?

Running an LLM locally gives you complete control over your data and privacy. It also allows you to customize the model and access its power without relying on a third-party service.

Is the RTX 6000 Ada Suitable for All LLMs?

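The RTX 6000 Ada can run many LLMs, but not all of them: models whose weights exceed 48 GB, such as Llama 3 70B in F16, need more than one card or CPU offloading. At the other end, it may be overkill for small models like Llama 3 8B, which also run well on cheaper GPUs. The ideal choice depends on the size of the model you want to run and the precision you need.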

Can I Run an LLM on a Regular PC?

Yes, you can run smaller LLMs on a regular PC, especially in quantized form. However, larger models need a GPU with plenty of memory, such as the RTX 6000 Ada, to run at usable speeds.

Keywords

Large Language Model, LLM, Home Server, NVIDIA RTX 6000 Ada 48GB, Llama 3, GPU, Token Generation, Token Processing, Quantization, Q4_K_M, F16, AI, Machine Learning, Open Source