Building a Home LLM Server: Is the NVIDIA RTX 5000 Ada 32GB a Good Choice?

Introduction

The world of large language models (LLMs) is rapidly evolving, and the ability to run these models locally is becoming increasingly desirable. Imagine having a powerful AI assistant at your fingertips, capable of generating creative content, translating languages, and even writing code – all on your own hardware. This is the dream of many developers and enthusiasts, and building a home LLM server is the key to achieving it.

But choosing the right hardware is crucial. With so many GPUs available, it can be overwhelming to decide which one is best for your specific needs. In this article, we'll delve into the performance of the NVIDIA RTX 5000 Ada 32GB for running LLMs locally.

Analyzing the NVIDIA RTX 5000 Ada 32GB for Home LLM Server

The NVIDIA RTX 5000 Ada 32GB is a workstation GPU built on NVIDIA's Ada Lovelace architecture. It is aimed primarily at professional applications, though it can also run games. But can it handle the demands of running large language models like Llama 3? Let's take a closer look at its performance:

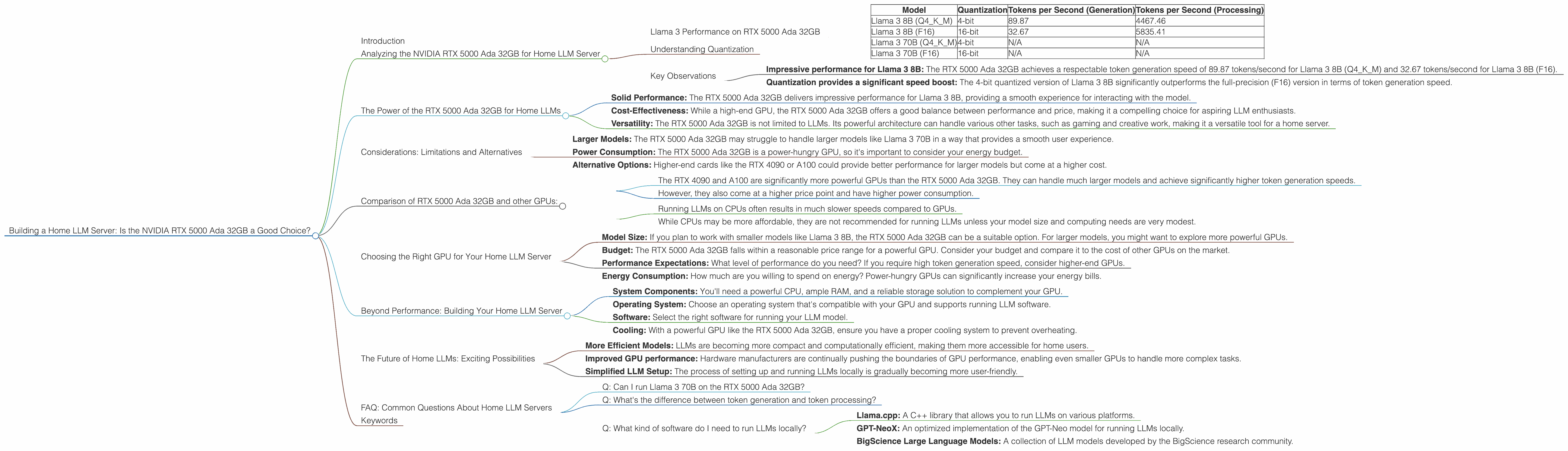

Llama 3 Performance on RTX 5000 Ada 32GB

The following table breaks down the token generation and prompt processing speeds of the RTX 5000 Ada 32GB for Llama 3, in both 4-bit quantized (Q4_K_M) and unquantized 16-bit (F16) formats:

| Model | Quantization | Generation (tokens/s) | Prompt processing (tokens/s) |
|---|---|---|---|
| Llama 3 8B (Q4_K_M) | 4-bit | 89.87 | 4467.46 |
| Llama 3 8B (F16) | 16-bit | 32.67 | 5835.41 |
| Llama 3 70B (Q4_K_M) | 4-bit | N/A | N/A |
| Llama 3 70B (F16) | 16-bit | N/A | N/A |

Explanation of the Data:

- Generation speed (tokens/s): how many new tokens the GPU produces per second while writing a response. Each new token depends on the ones before it, so generation proceeds one token at a time; this is the speed at which you see text stream out.

- Prompt processing speed (tokens/s): how many input tokens the GPU reads per second before it starts answering (a phase also called prefill). Because the whole prompt is known up front, its tokens can be processed in parallel, which is why this number is far higher than the generation speed.
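To see how the two rates combine, here is a minimal sketch that estimates how long one request takes: the prompt is ingested at the processing rate, then the reply is produced at the generation rate. It assumes both rates stay constant, which is a simplification (generation tends to slow somewhat as the context grows), and the 1,000-token prompt and 500-token reply are hypothetical values chosen for illustration.

```python
def request_seconds(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimate wall-clock time for one request, ignoring load time and other overhead."""
    prefill = prompt_tokens / processing_tps   # reading the prompt (parallel)
    decode = output_tokens / generation_tps    # writing the reply (one token at a time)
    return prefill + decode

# Measured rates for Llama 3 8B on the RTX 5000 Ada 32GB, from the table above:
# (prompt processing tokens/s, generation tokens/s)
RATES = {
    "Q4_K_M": (4467.46, 89.87),
    "F16": (5835.41, 32.67),
}

# Hypothetical request: 1,000-token prompt, 500-token reply.
for fmt, (pp, tg) in RATES.items():
    print(f"{fmt}: {request_seconds(1000, 500, pp, tg):.1f} s")
```

With these inputs the sketch gives roughly 5.8 s for Q4_K_M and 15.5 s for F16. Almost all of that time is spent generating the reply rather than reading the prompt, which is why generation speed matters most for interactive chat.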

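Note: We currently lack benchmark data for Llama 3 70B on the RTX 5000 Ada 32GB. That is unsurprising, since even at 4-bit the 70B model's weights take roughly 40 GB, more than the card's 32 GB of VRAM.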

Understanding Quantization

Let's decode the jargon! Quantization is a technique for shrinking LLMs so they fit on, and run faster on, limited hardware. It works by reducing the precision of the model's weights, the numbers that encode what the model has learned. Think of it like measuring with a ruler that has fewer tick marks: you lose some precision, but each measurement takes less space to record.
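The core idea can be shown with a deliberately simplified sketch: symmetric 4-bit quantization, where each block of weights shares one floating-point scale and each weight is rounded to a small integer. This illustrates the general technique only; it is not llama.cpp's actual Q4_K_M format, and the block size of 32 and the sample weights are arbitrary assumptions.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map a block of floats to 4-bit signed integers plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0   # largest magnitude maps to 7
    # Clamp to the signed 4-bit range [-8, 7].
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q

def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats from the 4-bit integers."""
    return [scale * v for v in q]

def quantize(weights: list[float], block_size: int = 32) -> list[tuple[float, list[int]]]:
    """Quantize a weight list block by block; each block gets its own scale."""
    blocks = [weights[i:i + block_size] for i in range(0, len(weights), block_size)]
    return [quantize_block(b) for b in blocks]

weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.45]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
print(q)
# Rounding error per weight is at most scale / 2.
print(max(abs(a - b) for a, b in zip(weights, restored)))
```

Each weight now needs 4 bits instead of 16, plus a small per-block cost for the shared scale; the price is the rounding error printed on the last line.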

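In the table above, "Q4_K_M" refers to one of llama.cpp's "k-quant" formats: weights are stored at roughly 4 bits each in small blocks that share scaling factors, and the "M" marks the medium variant, which keeps some tensors at higher precision. (Despite the "K", it has nothing to do with K-means clustering.) "F16" means the weights are kept as unquantized 16-bit (half-precision) floating-point numbers.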
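A quick way to see why quantization matters is to estimate how much memory the weights alone need: parameters × bits per weight ÷ 8 bytes. The sketch below assumes nominal sizes of 8 and 70 billion parameters and treats Q4_K_M as averaging about 4.85 bits per weight (an approximation, since the format mixes block types). KV cache and runtime overhead come on top of these figures.

```python
GB = 1e9  # decimal gigabytes

def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only."""
    return params * bits_per_weight / 8 / GB

MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}  # nominal parameter counts (assumed)
FORMATS = {"Q4_K_M": 4.85, "F16": 16.0}            # Q4_K_M bits/weight is approximate
VRAM_GB = 32

for name, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weight_gb(params, bits)
        # Compares weights only; a real deployment also needs headroom for context.
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{name} {fmt}: ~{size:.0f} GB of weights -> {verdict} in {VRAM_GB} GB")
```

Under these assumptions the 8B model needs about 5 GB (Q4_K_M) or 16 GB (F16), while the 70B model needs about 42 GB or 140 GB, so only the 8B model fits on the card.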

Key Observations

- Strong performance for Llama 3 8B: The RTX 5000 Ada 32GB generates 89.87 tokens/second with Llama 3 8B (Q4_K_M) and 32.67 tokens/second with Llama 3 8B (F16).

- Quantization speeds up generation, not prompt processing: The 4-bit version of Llama 3 8B generates text about 2.75× faster than the F16 version (89.87 vs. 32.67 tokens/second). Prompt processing goes the other way, with F16 ahead (5835.41 vs. 4467.46 tokens/second), likely because that phase is limited by compute rather than memory reads, and 4-bit weights have to be dequantized on the fly.
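The generation numbers line up with a common rule of thumb: producing each token requires reading essentially all of the weights from VRAM once, so memory bandwidth divided by model size gives an upper bound on generation speed. The sketch below assumes the RTX 5000 Ada's spec-sheet memory bandwidth of about 576 GB/s (verify against NVIDIA's datasheet) and approximate Llama 3 8B weight sizes of 4.85 GB (Q4_K_M) and 16 GB (F16). It ignores KV-cache reads and kernel overheads, so real speeds land below the bound.

```python
BANDWIDTH_GB_S = 576.0  # assumed: RTX 5000 Ada spec-sheet memory bandwidth

# Weight sizes are approximations (assumed); measured speeds come from the table above.
CASES = {
    "Q4_K_M": {"weights_gb": 4.85, "measured_tps": 89.87},
    "F16": {"weights_gb": 16.0, "measured_tps": 32.67},
}

def max_generation_tps(weights_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s if every token needs one full pass over the weights."""
    return bandwidth_gb_s / weights_gb

for fmt, c in CASES.items():
    bound = max_generation_tps(c["weights_gb"])
    share = c["measured_tps"] / bound
    print(f"{fmt}: bound ~{bound:.0f} tok/s, measured {c['measured_tps']} ({share:.0%} of bound)")
```

Under these assumptions F16 reaches about 91% of its roughly 36 tokens/s ceiling and Q4_K_M about 76% of its roughly 119 tokens/s ceiling. Shrinking the weights raises the ceiling, which is why the 4-bit model generates faster even though dequantization adds some work.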

The Power of the RTX 5000 Ada 32GB for Home LLMs

The RTX 5000 Ada 32GB can comfortably run smaller LLMs like Llama 3 8B locally. Here are some advantages of using this GPU for a home LLM server:

- Solid Performance: At roughly 90 tokens/second for the 4-bit Llama 3 8B, responses stream much faster than most people read, so interacting with the model feels smooth.

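- Memory Capacity and Efficiency: The 32 GB of VRAM holds Llama 3 8B even at 16-bit precision (about 16 GB of weights) with room left for long contexts, and the card does this within a 250 W power rating. It is priced as a professional workstation card, however, so it usually costs more than consumer GPUs with similar compute; its value lies in memory capacity and efficiency rather than raw speed per dollar.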

- Versatility: The RTX 5000 Ada 32GB is not limited to LLMs. Its powerful architecture can handle various other tasks, such as gaming and creative work, making it a versatile tool for a home server.

Considerations: Limitations and Alternatives

While the RTX 5000 Ada 32GB is a solid choice, it’s important to acknowledge its limitations and consider alternative options depending on your specific needs:

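- Larger Models: Llama 3 70B does not fit entirely in 32 GB of VRAM, even at 4-bit (its weights take roughly 40 GB). Running it means offloading part of the model to the CPU or quantizing far more aggressively, which costs speed or output quality respectively.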

- Power Consumption: The RTX 5000 Ada 32GB is rated at up to 250 W. That is modest next to consumer flagships such as the RTX 4090 (450 W), but an always-on server still adds to your energy budget.

- Alternative Options: The consumer RTX 4090 offers more compute and memory bandwidth at a lower price, but only 24 GB of VRAM. Data-center cards like the A100 (available with 40 GB or 80 GB) can hold larger models but cost considerably more.
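To put power consumption in concrete terms, here is a rough annual energy estimate. Every input is a hypothetical assumption: 250 W and 450 W are the maximum ratings of the RTX 5000 Ada and RTX 4090 (actual draw varies with load and is far lower at idle), and the hours of use and the price of 0.30 per kWh are placeholders to replace with your own numbers.

```python
def annual_energy_cost(watts: float, hours_per_day: float, price_per_kwh: float) -> float:
    """Yearly cost of running a device at a constant power draw."""
    kwh_per_year = watts / 1000 * hours_per_day * 365
    return kwh_per_year * price_per_kwh

# Hypothetical usage: 4 hours/day at full load, 0.30 currency units per kWh.
print(f"RTX 5000 Ada (250 W): {annual_energy_cost(250, 4, 0.30):.2f}")
print(f"RTX 4090 (450 W):     {annual_energy_cost(450, 4, 0.30):.2f}")
```

With these placeholder inputs the two cards come to about 109.50 and 197.10 per year. Because the cost scales linearly with every input, it is easy to rescale for your own usage and tariff.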

Comparison of RTX 5000 Ada 32GB and other GPUs:

Although this article focuses on the RTX 5000 Ada 32GB, comparing its performance to other popular choices might help you decide:

Comparison with RTX 4090 and A100:

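- The RTX 4090 has more raw compute and memory bandwidth than the RTX 5000 Ada 32GB, so it generally generates tokens faster for models that fit in its memory. Its 24 GB of VRAM is smaller, though, so it holds smaller models, not larger ones. It also draws more power (450 W vs. 250 W) while usually costing less.

- The A100 is a data-center GPU with 40 GB or 80 GB of high-bandwidth memory. The 80 GB version can hold Llama 3 70B at 4-bit on a single card, but it costs considerably more and is designed for server chassis rather than a typical home PC.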

Comparison with CPU-Based LLMs:

- Running LLMs on CPUs often results in much slower speeds compared to GPUs.

- While CPUs may be more affordable, they are not recommended for running LLMs unless your model size and computing needs are very modest.

Choosing the Right GPU for Your Home LLM Server

The best GPU for your home LLM server depends on your specific needs and budget. Consider the following factors:

- Model Size: If you plan to work with smaller models like Llama 3 8B, the RTX 5000 Ada 32GB can be a suitable option. For larger models, you will need more VRAM, either on a single card or across multiple GPUs.

- Budget: As a professional workstation card, the RTX 5000 Ada 32GB costs more than consumer GPUs with similar compute. Compare its price with the alternatives and decide whether its 32 GB of VRAM and lower power draw justify the premium for you.

- Performance Expectations: What level of performance do you need? If you require high token generation speed, consider higher-end GPUs.

- Energy Consumption: How much are you willing to spend on energy? Power-hungry GPUs can significantly increase your energy bills.
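When judging model size, remember that the weights are not the only thing in VRAM: the KV cache, which stores attention keys and values for every token in the context, grows with context length. The sketch below estimates both using Llama 3's published architecture (32 layers for 8B and 80 for 70B, each with 8 key/value heads of dimension 128). It assumes a 16-bit KV cache, about 4.85 bits per weight for Q4_K_M, and a hypothetical 1.5 GB allowance for runtime overhead.

```python
from dataclasses import dataclass

@dataclass
class ModelSpec:
    params: float   # total parameters
    layers: int     # transformer layers
    kv_heads: int   # key/value heads (grouped-query attention)
    head_dim: int   # dimension per head

LLAMA3_8B = ModelSpec(8e9, 32, 8, 128)
LLAMA3_70B = ModelSpec(70e9, 80, 8, 128)

def kv_cache_gb(m: ModelSpec, context: int, bytes_per_value: int = 2) -> float:
    """KV cache size: K and V tensors per layer, kv_heads * head_dim values per token."""
    return 2 * m.layers * m.kv_heads * m.head_dim * bytes_per_value * context / 1e9

def total_gb(m: ModelSpec, bits_per_weight: float, context: int,
             overhead_gb: float = 1.5) -> float:  # overhead is a hypothetical allowance
    weights = m.params * bits_per_weight / 8 / 1e9
    return weights + kv_cache_gb(m, context) + overhead_gb

VRAM_GB = 32
for name, m, bits in [("8B F16", LLAMA3_8B, 16), ("70B Q4_K_M", LLAMA3_70B, 4.85)]:
    need = total_gb(m, bits, context=8192)
    status = "fits" if need <= VRAM_GB else "too big"
    print(f"{name} @ 8K context: ~{need:.1f} GB ({status})")
```

At Llama 3's 8K context, the KV cache adds about 1.1 GB for the 8B model and about 2.7 GB for the 70B model, so the 8B model at F16 stays well within 32 GB while the 70B model at 4-bit does not.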

Beyond Performance: Building Your Home LLM Server

While choosing the right GPU is essential, it's just one piece of the puzzle. Building a successful home LLM server requires planning and considering other factors, such as:

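- System Components: You'll need a capable CPU, ample system RAM (especially if you offload model layers from the GPU to the CPU), fast storage for multi-gigabyte model files, and a power supply that can handle the GPU's load.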

- Operating System: Choose an operating system with good NVIDIA driver and CUDA support. Both Linux and Windows qualify, though much LLM tooling is developed and tested primarily on Linux.

- Software: Select an inference runtime that supports your model and its quantization format (see the FAQ below for some options).

- Cooling: With a powerful GPU like the RTX 5000 Ada 32GB, ensure you have a proper cooling system to prevent overheating.

The Future of Home LLMs: Exciting Possibilities

The field of LLMs is rapidly evolving, with new models and advancements emerging constantly. This means that the technology for building home LLM servers will also continue to improve. In the future, we can expect:

- More Efficient Models: LLMs are becoming more compact and computationally efficient, making them more accessible for home users.

- Improved GPU performance: Hardware manufacturers are continually pushing the boundaries of GPU performance, enabling even smaller GPUs to handle more complex tasks.

- Simplified LLM Setup: The process of setting up and running LLMs locally is gradually becoming more user-friendly.

FAQ: Common Questions About Home LLM Servers

Q: Can I run Llama 3 70B on the RTX 5000 Ada 32GB?

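A: Not comfortably. At 4-bit the model's weights take roughly 40 GB, more than the card's 32 GB of VRAM. You would have to offload part of the model to system RAM and the CPU, which slows generation considerably, or use much more aggressive quantization (around 3 bits per weight or fewer), which can reduce output quality.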
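To see what offloading means in practice, this sketch estimates how many of Llama 3 70B's 80 layers could stay on the GPU at 4-bit. It assumes the weights are spread evenly across layers (not exactly true, since the embedding and output layers differ), about 4.85 bits per weight for Q4_K_M, and a hypothetical 4 GB reserved for KV cache and runtime overhead. The remaining layers would run on the CPU from system RAM, which is what slows generation down.

```python
import math

def layers_on_gpu(params: float, bits_per_weight: float, n_layers: int,
                  vram_gb: float, reserved_gb: float) -> int:
    """Estimate how many evenly sized layers fit in the VRAM left after reservations."""
    per_layer_gb = params * bits_per_weight / 8 / 1e9 / n_layers
    usable = max(0.0, vram_gb - reserved_gb)
    return min(n_layers, math.floor(usable / per_layer_gb))

# Assumptions: 70e9 params, ~4.85 bits/weight for Q4_K_M, 4 GB reserved.
n = layers_on_gpu(70e9, 4.85, 80, vram_gb=32, reserved_gb=4)
print(f"~{n} of 80 layers on the GPU, {80 - n} on the CPU")
```

Under these assumptions about 52 layers fit on the GPU and 28 run on the CPU. A figure like this is only a starting point for llama.cpp's `--n-gpu-layers` option; the right value is best found by trying nearby settings on your own system.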

Q: What's the difference between token generation and token processing?

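A: Token generation is the model writing new output, one token at a time. Token processing (prompt processing) is the model reading your input before it answers; because every prompt token is already known, they can be processed in parallel, which is why this rate is much higher. Think of it like the difference between composing a new song note by note (generation) and reading through existing sheet music (processing).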

Q: What kind of software do I need to run LLMs locally?

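A: Several projects come up often when people discuss running LLMs locally:

- llama.cpp: A C/C++ library and set of tools for running LLMs efficiently on CPUs and GPUs across many platforms. It is also the source of the Q4_K_M quantization format used in the benchmarks above.

- GPT-NeoX: EleutherAI's library for training large language models (and the name of its 20-billion-parameter model). It targets training on GPU clusters rather than local inference.

- BigScience BLOOM: A family of open LLMs released by the BigScience research workshop. These are models rather than software, so you still need an inference runtime to run them.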

Keywords

LLM, home server, NVIDIA, RTX 5000 Ada, GPU, Llama 3, token generation, token processing, quantization, performance, budget, energy consumption, hardware, software, BigScience, GPT-NeoX, llama.cpp.