Building a Home LLM Server: Is the NVIDIA L40S 48GB a Good Choice?

Introduction

The world of large language models (LLMs) is booming, and the ability to run these powerful models locally is becoming increasingly attractive. Imagine generating creative text, translating languages, or even writing code, all on your own hardware! But with so many different GPUs and LLMs available, choosing the right combination can be overwhelming.

This article looks at how the NVIDIA L40S_48GB GPU handles running LLMs locally. We'll focus on its performance with the Llama 3 series and discuss its strengths and weaknesses, so you can decide whether the L40S_48GB is the right fit for your home LLM server.

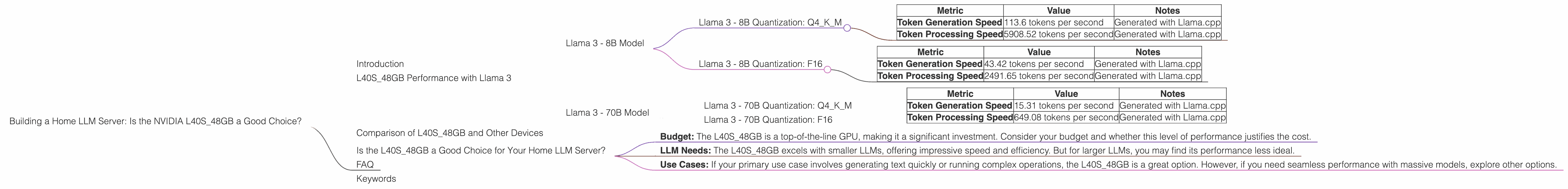

L40S_48GB Performance with Llama 3

The NVIDIA L40S_48GB is a data center GPU with 48 GB of memory, which makes it a plausible candidate for running LLMs. We'll explore its performance with different Llama 3 models and quantization levels to get a better understanding of its capabilities.

Llama 3 - 8B Model

Let's start with the 8B version of Llama 3, a popular model for its balance of performance and size. This model can be run with different quantization levels, impacting its performance and memory requirements.

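Llama 3 - 8B Quantization: Q4_K_M

Q4_K_M is one of llama.cpp's 4-bit "k-quant" formats. The "K" refers to the k-quant scheme and the "M" to its medium-size variant. It stores the model weights at roughly 4 to 5 bits each on average, which cuts memory consumption to about a third of F16. Activations are not stored at 4 bits. Let's see how the L40S_48GB performs with this configuration: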

| Metric | Value | Notes |
|---|---|---|
| Token Generation Speed | 113.6 tokens per second | Generated with llama.cpp |
| Token Processing Speed | 5908.52 tokens per second | Generated with llama.cpp |

This shows the L40S_48GB generates about 113.6 tokens per second with the Llama 3 8B Q4_K_M model. For those unfamiliar with tokens, think of them as the building blocks of text: a token can be a word, a punctuation mark, or part of a word. Token processing, the stage where the model reads the prompt before it starts answering, runs at 5908.52 tokens per second. At these speeds, text appears far faster than most people can read it, which makes for a smooth and responsive LLM experience.
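To make these throughput numbers more concrete, here is a minimal sketch that turns them into a rough response time. It assumes a request is simply "process the prompt, then generate the reply" and ignores model load time and other overhead. The function name and the example token counts are made up for illustration.

```python
# Throughput figures from the table above (llama.cpp, Llama 3 8B, 4-bit).
PROMPT_TOKENS_PER_S = 5908.52
GENERATION_TOKENS_PER_S = 113.6


def estimate_latency_s(prompt_tokens: int, output_tokens: int) -> float:
    """Rough end-to-end time: read the prompt, then generate the reply."""
    prompt_time = prompt_tokens / PROMPT_TOKENS_PER_S
    generation_time = output_tokens / GENERATION_TOKENS_PER_S
    return prompt_time + generation_time


# Hypothetical chat turn: a 1,000-token prompt and a 300-token answer.
print(f"{estimate_latency_s(1000, 300):.2f} s")
```

For this hypothetical turn the estimate comes out at about 2.8 seconds, and almost all of it is generation time rather than prompt processing.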

Llama 3 - 8B Quantization: F16

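F16 stores each weight as a 16-bit floating-point number. Strictly speaking, it is not quantized at all: it is the unquantized half-precision baseline, so it preserves the most precision but needs the most memory of the options covered here.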

| Metric | Value | Notes |
|---|---|---|
| Token Generation Speed | 43.42 tokens per second | Generated with llama.cpp |
| Token Processing Speed | 2491.65 tokens per second | Generated with llama.cpp |

The L40S_48GB still delivers respectable performance with the 8B Llama 3 model in F16, but generation is about 2.6 times slower than with Q4_K_M (43.42 versus 113.6 tokens per second). This is expected: generating each token requires reading the model's weights from GPU memory, and the F16 weights are more than three times larger.
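The link between weight size and speed can be checked with some quick arithmetic. The sketch below is only an approximation: it assumes a round 8 billion parameters and an average of about 4.85 bits per weight for the 4-bit format, which is a rough figure rather than an exact specification. The helper name `weight_size_gb` is made up for this example.

```python
def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


# Assumptions: ~8.0B parameters, 16 bits per weight for F16, and roughly
# 4.85 bits per weight on average for the 4-bit format (approximate).
f16_gb = weight_size_gb(8.0, 16.0)
q4_gb = weight_size_gb(8.0, 4.85)

# Measured generation speeds from the tables above (tokens per second).
speedup = 113.6 / 43.42

print(f"F16 weights:   {f16_gb:.1f} GB")
print(f"4-bit weights: {q4_gb:.1f} GB")
print(f"Size ratio:    {f16_gb / q4_gb:.1f}x")
print(f"Speed ratio:   {speedup:.1f}x")
```

Under these assumptions the weights shrink by about 3.3x while generation speeds up by about 2.6x, so the gain tracks the size reduction without matching it exactly.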

Llama 3 - 70B Model

Next, we'll explore the larger Llama 3 70B model, which generally produces more capable and nuanced output at the cost of much heavier hardware demands.

Llama 3 - 70B Quantization: Q4_K_M

| Metric | Value | Notes |
|---|---|---|
| Token Generation Speed | 15.31 tokens per second | Generated with llama.cpp |
| Token Processing Speed | 649.08 tokens per second | Generated with llama.cpp |

The L40S_48GB still demonstrates decent performance with the 70B model using Q4_K_M quantization. However, token generation drops to 15.31 tokens per second, about 7.4 times slower than the 8B model at the same quantization level, which highlights the cost of running larger models. Token processing also falls, from 5908.52 to 649.08 tokens per second.
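One way to sanity-check these generation speeds is a simple memory-bandwidth ceiling: if every generated token has to read all the weights once, tokens per second cannot exceed bandwidth divided by weight size. The sketch below rests on several assumptions: a peak bandwidth of 864 GB/s (the figure NVIDIA publishes for the L40S), round parameter counts, and about 4.85 bits per weight for the 4-bit format. Treat the results as ballpark figures only.

```python
# Assumption: 864 GB/s peak memory bandwidth, the figure NVIDIA publishes
# for the L40S. Real sustained bandwidth is lower.
PEAK_BANDWIDTH_GB_S = 864.0

# name -> (approximate weight size in GB, measured tokens/s from this article)
# Sizes assume 16 bits per weight for F16 and ~4.85 for 4-bit (approximate).
CONFIGS = {
    "8B 4-bit": (8.0 * 4.85 / 8, 113.6),
    "8B F16": (8.0 * 16 / 8, 43.42),
    "70B 4-bit": (70.0 * 4.85 / 8, 15.31),
}


def bandwidth_ceiling(weights_gb: float) -> float:
    """Upper bound on tokens/s if every token reads all weights once."""
    return PEAK_BANDWIDTH_GB_S / weights_gb


for name, (weights_gb, measured) in CONFIGS.items():
    ceiling = bandwidth_ceiling(weights_gb)
    print(f"{name:<10} ceiling {ceiling:6.1f} tok/s, "
          f"measured {measured:6.2f} ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the measured speeds land between about 60 and 80 percent of the ceiling, which is consistent with token generation being limited mainly by memory bandwidth rather than raw compute.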

Llama 3 - 70B Quantization: F16

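Unfortunately, we lack data for the Llama 3 70B model in F16 on the L40S_48GB. That is not surprising: at 16 bits per weight, a 70B-parameter model needs roughly 140 GB for its weights alone, far more than the card's 48 GB of memory.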
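A quick way to see which configurations are even candidates for this card is to check whether the weights fit in 48 GB. The sketch below is a rough estimate under stated assumptions: round parameter counts, about 4.85 bits per weight for the 4-bit format, and a hypothetical 2 GB allowance for the KV cache and runtime buffers (real overhead grows with context length).

```python
VRAM_GB = 48.0  # memory on the L40S_48GB


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 overhead_gb: float = 2.0) -> bool:
    """True if the weights plus a fixed overhead fit in VRAM.

    overhead_gb is a hypothetical allowance for the KV cache and
    runtime buffers; real usage grows with context length.
    """
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb + overhead_gb <= VRAM_GB


# Bits per weight: 16 for F16, ~4.85 assumed for the 4-bit format.
for name, params, bits in [
    ("Llama 3 8B F16", 8.0, 16.0),
    ("Llama 3 70B 4-bit", 70.0, 4.85),
    ("Llama 3 70B F16", 70.0, 16.0),
]:
    verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
    print(f"{name}: {verdict}")
```

With these numbers, the 70B model fits only in its 4-bit form, and with little headroom left for long contexts.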

Comparison of L40S_48GB and Other Devices

While we're focused on the L40S_48GB, it's helpful to compare its performance to other popular choices for running LLMs locally.

It's crucial to remember that these comparisons may not be perfectly apples-to-apples due to varying software configurations, model versions, and quantization levels used in generating the data. Always refer to the original sources for the most accurate information.

Important: We'll only include data for devices with available information. We cannot make fair comparisons without complete data sets.

Is the L40S_48GB a Good Choice for Your Home LLM Server?

So, is the L40S_48GB a good choice for building a home LLM server? The answer is that it depends:

- Budget: The L40S_48GB is a data center GPU rather than a consumer card, making it a significant investment. Consider your budget and whether this level of performance justifies the cost.

- LLM Needs: The L40S_48GB excels with smaller LLMs: Llama 3 8B with 4-bit quantization generates 113.6 tokens per second. Larger models run noticeably slower: Llama 3 70B with 4-bit quantization generates 15.31 tokens per second.

- Use Cases: If your primary use case is fast, interactive text generation with models that fit in 48 GB, the L40S_48GB is a great option. However, if you need models that exceed that memory, such as Llama 3 70B in F16, explore other options.

FAQ

Q: What are LLMs?

A: LLMs are programs trained on massive text datasets, enabling them to understand and generate human-like text. Imagine a super-powered AI that can answer questions, write stories, and even translate languages!

Q: What is quantization?

A: Quantization reduces the size of a model by using fewer bits to represent each number. Think of it like using a smaller box to store data. This makes models smaller and easier to run on less powerful hardware, at the cost of some precision.
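To show the idea in miniature, here is a toy sketch that squeezes a handful of numbers into 4-bit integers and back. It uses a single shared scale for simplicity and is not the scheme llama.cpp uses, which works on blocks of weights with more elaborate scaling. The function names and sample values are made up for illustration.

```python
def quantize_4bit(values):
    """Map floats to integers in [-8, 7] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.97, -0.08, 0.44]  # made-up example values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)

print("codes:   ", codes)
print("restored:", [round(r, 3) for r in restored])
```

Each value now needs only 4 bits plus one shared scale, and the restored numbers are close to the originals but not identical. That small error is the precision you trade for the smaller size.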

Q: What are tokens?

A: Tokens are the basic units of text that LLMs process. A token can be a word, a punctuation mark, or even part of a word.

Q: How do I choose the right LLM for my needs?

A: Consider the size of the model (smaller models are faster), the task you want to perform, and your hardware capabilities.

Q: Can I run an LLM on my laptop?

A: You can run smaller LLMs on a laptop, but you'll likely need a relatively recent laptop with a dedicated GPU.

Q: Should I build my own LLM server or use a cloud service?

A: Building your own server gives you more control and potential for customization. Cloud services offer convenience and scalability. The choice depends on your needs and preferences.

Keywords

NVIDIA L40S_48GB, LLM, Llama 3, GPU, Home LLM Server, Token Generation Speed, Token Processing Speed, Quantization, Q4_K_M, F16, Inference, Performance, Budget, Use Cases, LLMs, Token, Cloud Service, Local LLM, AI, Deep Learning, Machine Learning