Building a Home LLM Server: Is the NVIDIA A40 48GB a Good Choice?

Introduction

Imagine building a personal AI assistant, capable of generating creative text, translating languages, and answering your questions with impressive accuracy. This is the realm of Large Language Models (LLMs), powerful artificial intelligence systems that are revolutionizing the way we interact with technology.

But running these behemoths locally requires serious hardware muscle. Enter the NVIDIA A40, a 48 GB data-center GPU designed for demanding workloads. This article looks at the A40's capabilities for running LLMs, covering its strengths, limitations, and overall suitability for building your own home LLM server.

The NVIDIA A40 48GB: A Powerhouse for LLMs

The NVIDIA A40 is a beast among GPUs, pairing 48 GB of GDDR6 memory with 10,752 CUDA cores. In the realm of LLMs, this translates to:

- Fast inference: The A40 processes prompts and generates text far faster than a standard CPU. In the benchmarks below, Llama 3 8B at Q4 generates about 89 tokens per second.

- Handling large models: 48 GB is enough to hold a 70B-parameter model once it is quantized to 4 bits, although not at full 16-bit precision.

- Reduced latency: Experience snappy responses without the delays that can occur with less powerful hardware.

Benchmarking the A40 with Llama 3 Models

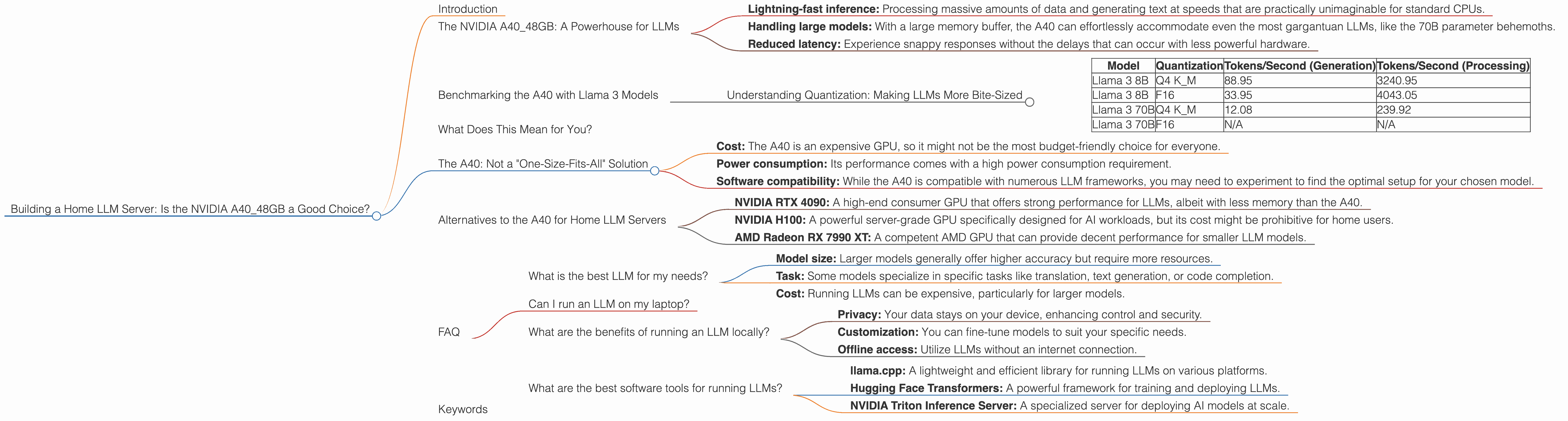

To assess the A40's prowess, we'll look at its performance with popular Llama 3 models at two precision levels (Q4_K_M quantization and unquantized F16), measuring both token generation speed and prompt processing speed.

Note: We're focusing on the A40 48GB and excluding performance data for other devices.

Understanding Quantization: Making LLMs More Bite-Sized

Quantization is like a diet for your LLM. It shrinks the model's size by reducing the number of bits used to represent the model's parameters. Think of it like this: Instead of using a full plate of data, you're having a bite-sized snack. While smaller, these "bites" are still powerful enough for the model to operate.

- Q4: Each weight in the model is stored in roughly 4 bits. It's a very compact representation, which shrinks the model and usually speeds up token generation, because less data has to be read from memory for every token.

- F16: Stores each weight as a 16-bit floating-point number. This is far more precise than Q4, but the model takes about four times as much memory.
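To make the size difference concrete, here is a minimal sketch that estimates the memory needed for the weights alone. It assumes nominal parameter counts (8 billion and 70 billion) and a nominal 4 bits per weight for Q4; real Q4_K_M files are somewhat larger because some tensors are kept at higher precision, and inference also needs room for the KV cache and activations. The function name `weight_memory_gb` is just an illustration.

```python
A40_MEMORY_GB = 48  # memory on the A40


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


# Nominal parameter counts (assumption: real models differ slightly).
models = [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]
# Nominal bits per weight (assumption: Q4_K_M averages a bit more than 4).
formats = [("Q4", 4), ("F16", 16)]

for name, n_params in models:
    for label, bits in formats:
        size = weight_memory_gb(n_params, bits)
        verdict = "fits" if size < A40_MEMORY_GB else "does not fit"
        print(f"{name} {label}: ~{size:.0f} GB of weights, {verdict} in {A40_MEMORY_GB} GB")
```

Under these assumptions, the 70B model at F16 is the only combination whose weights exceed the card's memory.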

Let's delve into the numbers:

| Model | Quantization | Generation (tokens/second) | Prompt processing (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 88.95 | 3240.95 |
| Llama 3 8B | F16 | 33.95 | 4043.05 |
| Llama 3 70B | Q4_K_M | 12.08 | 239.92 |
| Llama 3 70B | F16 | N/A | N/A |

Observations:

- F16 helps prompt processing but hurts generation on 8B: The 8B Llama 3 model processes prompts about 25% faster with F16 than with Q4_K_M (4043.05 vs. 3240.95 tokens/second), but generates text about 2.6 times slower (33.95 vs. 88.95 tokens/second).

- Q4 is king for 70B: With Q4_K_M, the A40 runs the 70B Llama 3 model at about 12 tokens per second. That is roughly 7 times slower than the 8B model at the same quantization, which shows how much speed you give up for the larger model.

- F16 performance for 70B is missing: No data is available for F16 performance of the 70B model on the A40. The most likely reason is memory: at 16 bits per weight, the 70B weights alone take about 140 GB, nearly three times the A40's 48 GB, so the model cannot fit on a single card.
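The comparisons in these observations can be reproduced directly from the table. The sketch below copies the published figures into a dictionary and computes the ratios; the helper name `ratio` and the dictionary layout are illustrative choices, not part of any benchmark tool.

```python
# Throughput figures copied from the benchmark table (tokens per second).
RESULTS = {
    ("Llama 3 8B", "Q4_K_M"): {"generation": 88.95, "processing": 3240.95},
    ("Llama 3 8B", "F16"): {"generation": 33.95, "processing": 4043.05},
    ("Llama 3 70B", "Q4_K_M"): {"generation": 12.08, "processing": 239.92},
}


def ratio(metric, faster, slower):
    """How many times higher the `faster` config scores on `metric`."""
    return RESULTS[faster][metric] / RESULTS[slower][metric]


q4_8b = ("Llama 3 8B", "Q4_K_M")
f16_8b = ("Llama 3 8B", "F16")
q4_70b = ("Llama 3 70B", "Q4_K_M")

print(f"8B generation, Q4_K_M vs F16: {ratio('generation', q4_8b, f16_8b):.2f}x")
print(f"8B processing, F16 vs Q4_K_M: {ratio('processing', f16_8b, q4_8b):.2f}x")
print(f"Q4_K_M generation, 8B vs 70B: {ratio('generation', q4_8b, q4_70b):.2f}x")
```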

What Does This Mean for You?

The A40 is a powerful tool for running LLMs locally. It handles the 8B Llama 3 model comfortably at either precision, and it can run the 70B model when quantized to Q4_K_M. If you plan to work with gigantic models like the 70B Llama 3, however, a single A40 limits you to quantized weights, so you'll need to weigh the trade-offs between model size, quantization, and performance.
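One way to translate the benchmark figures into everyday terms is to estimate how long a single request would take. The sketch below assumes a hypothetical request with a 1,000-token prompt and a 300-token reply, and assumes the measured throughput holds at that length. It ignores model loading, sampling overhead, and any slowdown at longer contexts, so treat the results as rough lower bounds.

```python
def response_time_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Seconds to process the prompt plus seconds to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical request size (assumption, not from the benchmark).
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 300

# (processing, generation) tokens/second, copied from the benchmark table.
CONFIGS = {
    "Llama 3 8B Q4_K_M": (3240.95, 88.95),
    "Llama 3 8B F16": (4043.05, 33.95),
    "Llama 3 70B Q4_K_M": (239.92, 12.08),
}

for name, (processing_tps, generation_tps) in CONFIGS.items():
    seconds = response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, processing_tps, generation_tps)
    print(f"{name}: ~{seconds:.1f} s per request")
```

For chat-style use, where replies are long relative to prompts, generation speed dominates the total, which is why the Q4_K_M figures matter most in practice.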

The A40: Not a "One-Size-Fits-All" Solution

While the A40 is excellent for LLM development and deployment, it's not a magic bullet for every situation. Keep in mind:

- Cost: The A40 is an expensive GPU, so it might not be the most budget-friendly choice for everyone.

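- Power consumption: The A40 is rated for up to 300 W and is passively cooled, because it was designed for the airflow of a server chassis. A home build needs a power supply with enough headroom and its own cooling for the card.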

- Software compatibility: While the A40 is compatible with numerous LLM frameworks, you may need to experiment to find the optimal setup for your chosen model.
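To get a feel for the running cost, the following sketch estimates the electricity bill for the GPU alone. It assumes the card draws its full rated 300 W whenever it is in use, which overstates real consumption, and it uses a hypothetical electricity price and daily usage. Substitute your own numbers.

```python
def energy_cost(power_watts: float, hours: float, price_per_kwh: float) -> float:
    """Cost of drawing `power_watts` for `hours` at the given price per kWh."""
    return power_watts / 1000 * hours * price_per_kwh


BOARD_POWER_W = 300    # A40 rated maximum; actual draw is usually lower
HOURS_PER_DAY = 8      # hypothetical usage
PRICE_PER_KWH = 0.30   # hypothetical price, in your local currency

daily = energy_cost(BOARD_POWER_W, HOURS_PER_DAY, PRICE_PER_KWH)
print(f"Per day:   {daily:.2f}")
print(f"Per month: {daily * 30:.2f}")
```

The rest of the system (CPU, fans, drives) adds to this figure, so the estimate covers only the GPU's share.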

Alternatives to the A40 for Home LLM Servers

If the A40 isn't within your budget or power requirements, consider these alternatives:

- NVIDIA RTX 4090: A high-end consumer GPU that offers strong performance for LLMs, albeit with 24 GB of memory, half that of the A40.

- NVIDIA H100: A powerful server-grade GPU specifically designed for AI workloads, but its cost might be prohibitive for home users.

- AMD Radeon RX 7900 XT: A competent AMD GPU that can provide decent performance for smaller LLMs.

FAQ

What is the best LLM for my needs?

The "best" LLM depends entirely on your specific use case. Consider these factors:

- Model size: Larger models generally offer higher accuracy but require more resources.

- Task: Some models specialize in specific tasks like translation, text generation, or code completion.

- Cost: Running LLMs can be expensive, particularly for larger models.

Can I run an LLM on my laptop?

It's possible to run smaller LLMs on a laptop with a dedicated GPU, but don't expect the same level of performance as a dedicated server.

What are the benefits of running an LLM locally?

- Privacy: Your data stays on your device, enhancing control and security.

- Customization: You can fine-tune models to suit your specific needs.

- Offline access: Utilize LLMs without an internet connection.

What are the best software tools for running LLMs?

Popular options include:

- llama.cpp: A lightweight and efficient library for running LLMs on various platforms.

- Hugging Face Transformers: A powerful framework for training and deploying LLMs.

- NVIDIA Triton Inference Server: A specialized server for deploying AI models at scale.

Keywords

NVIDIA A40 48GB, GPU, LLM, Large Language Model, Llama 3, quantization, Q4, F16, token generation, token processing, inference, home server, AI, machine learning, deep learning, performance benchmark, model size, cost, power consumption, software compatibility, alternatives, RTX 4090, H100, Radeon RX 7900 XT, privacy, customization, offline access, software tools, llama.cpp, Hugging Face Transformers, NVIDIA Triton Inference Server.