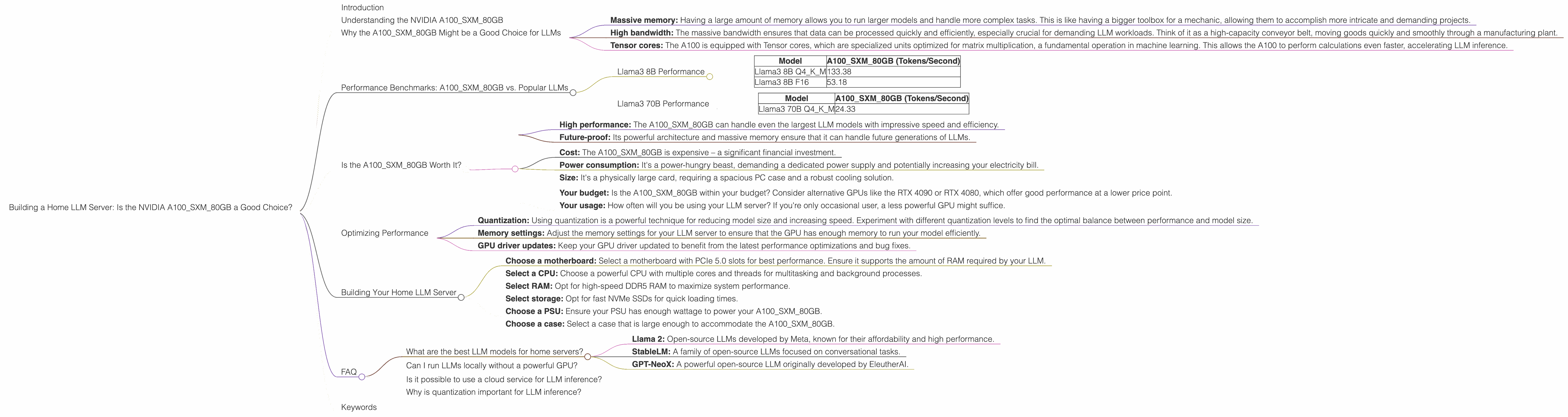

Building a Home LLM Server: Is the NVIDIA A100 SXM 80GB a Good Choice?

Introduction

The world of large language models (LLMs) is exploding, and it's not just the models themselves that are growing in size. The demand for powerful hardware to run these models locally is also surging. If you're a developer, researcher, or simply someone who wants to tinker with LLMs, building your own home server might be the perfect solution. But with so many different GPUs on the market, choosing the right one can be a daunting task.

This article focuses on the NVIDIA A100 SXM 80GB, a data-center GPU designed for high-performance computing, and its suitability for running LLMs at home. We'll explore its capabilities, look at benchmarks with popular LLMs, and answer the crucial question: is it a good choice for your home LLM server?

Understanding the NVIDIA A100 SXM 80GB

The A100 SXM 80GB is a behemoth in the world of GPUs, with 80 GB of HBM2e memory and roughly 2 TB/s (2,039 GB/s) of memory bandwidth. This makes it a top contender for demanding workloads like AI training and inference.

Imagine the GPU's memory as a giant warehouse, where data is stored and processed. With 80 GB, the A100 can hold a large amount of data close to the compute units, allowing fast retrieval and processing. The roughly 2 TB/s of bandwidth is like a superhighway connecting the warehouse to the GPU's compute cores, enabling a rapid flow of data in and out.
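To make the bandwidth figure concrete, here is a rough back-of-the-envelope sketch. It assumes that generating one token requires reading every model weight from memory once, which gives an upper bound on single-stream generation speed rather than a prediction. The function name and the 8-billion-parameter model size are illustrative assumptions; the 2,039 GB/s figure is NVIDIA's published peak for this GPU.

```python
def bandwidth_bound_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on tokens/s if each token requires one full pass over the weights."""
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


# NVIDIA's published peak memory bandwidth for the A100 SXM 80GB.
A100_SXM_80GB_BANDWIDTH_GB_S = 2039.0

# Assumption: an 8-billion-parameter model stored at 2 bytes per weight (F16).
model_size_gb = 8e9 * 2 / 1e9  # 16 GB

ceiling = bandwidth_bound_tokens_per_second(A100_SXM_80GB_BANDWIDTH_GB_S, model_size_gb)
print(f"Theoretical ceiling: {ceiling:.1f} tokens/s")
```

Real throughput will sit below this ceiling, since the estimate ignores compute time, cache reads, and software overhead.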

Why the A100 SXM 80GB Might be a Good Choice for LLMs

The A100 SXM 80GB packs a punch, making it an enticing option for LLM enthusiasts. Here's why:

- Massive memory: Having a large amount of memory allows you to run larger models and handle more complex tasks. This is like having a bigger toolbox for a mechanic, allowing them to accomplish more intricate and demanding projects.

- High bandwidth: The massive bandwidth ensures that data can be processed quickly and efficiently, especially crucial for demanding LLM workloads. Think of it as a high-capacity conveyor belt, moving goods quickly and smoothly through a manufacturing plant.

- Tensor cores: The A100 is equipped with Tensor cores, which are specialized units optimized for matrix multiplication, a fundamental operation in machine learning. This allows the A100 to perform calculations even faster, accelerating LLM inference.

Performance Benchmarks: A100 SXM 80GB vs. Popular LLMs

Now, let's look at the real numbers. We'll examine how the A100 SXM 80GB performs with different LLM models. For this analysis, we'll focus on two key aspects:

- Token generation speed: This measures how many tokens the GPU can generate per second. More tokens per second means faster inference and quicker responses from your LLM.

- Quantization: LLMs can be compressed using quantization, which reduces the memory footprint and usually speeds up inference, especially on devices with limited memory. The A100 can run LLMs in both half-precision (F16) and quantized (Q4_K_M) formats.

Here's a breakdown of the performance benchmarks:

Llama3 8B Performance

| Model | A100 SXM 80GB (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 133.38 |
| Llama3 8B F16 | 53.18 |

The A100 SXM 80GB handles Llama3 8B comfortably. Quantizing the model to Q4_K_M raises throughput from 53.18 to 133.38 tokens per second, roughly a 2.5x speedup over F16. Token generation is largely limited by how quickly weights can be read from memory, so a smaller quantized model helps even on a GPU that has memory to spare.
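The arithmetic behind that comparison can be checked with a few lines of Python. The two throughput figures come from the table above; the 500-token response length and the helper name `seconds_for_response` are hypothetical, chosen only to show what the difference feels like in practice.

```python
def seconds_for_response(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate a response of num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


# Benchmark figures from the Llama3 8B table.
q4_k_m_tps = 133.38
f16_tps = 53.18

speedup = q4_k_m_tps / f16_tps
print(f"Q4_K_M speedup over F16: {speedup:.2f}x")

# Hypothetical response length, for illustration only.
response_tokens = 500
for label, tps in (("Q4_K_M", q4_k_m_tps), ("F16", f16_tps)):
    print(f"{label}: {seconds_for_response(response_tokens, tps):.1f} s for {response_tokens} tokens")
```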

Llama3 70B Performance

| Model | A100 SXM 80GB (tokens/second) |
|---|---|
| Llama3 70B Q4_K_M | 24.33 |

The A100 SXM 80GB can run Llama3 70B at a respectable 24.33 tokens per second. Note that Llama3 70B was only tested in the Q4_K_M format. We couldn't find any performance data for Llama3 70B with F16 or other quantization formats.
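One plausible reason for the missing F16 figure is simple capacity arithmetic: at 2 bytes per weight, a 70-billion-parameter model needs far more than 80 GB for its weights alone. The sketch below is an estimate under stated assumptions, not a measurement. The value of about 4.8 bits per weight for Q4_K_M is an approximate average (the real figure varies by model), and the estimate ignores the KV cache and runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the model weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


GPU_MEMORY_GB = 80.0
PARAMS_70B = 70e9  # assumed round parameter count

# Bits per weight: F16 is exactly 16; the Q4_K_M value is an approximate average.
formats = {"F16": 16.0, "Q4_K_M": 4.8}

for name, bits in formats.items():
    size_gb = weight_memory_gb(PARAMS_70B, bits)
    verdict = "fits" if size_gb <= GPU_MEMORY_GB else "does not fit"
    print(f"{name}: ~{size_gb:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB:.0f} GB")
```

Under these assumptions the F16 weights come to about 140 GB, while the Q4_K_M weights come to about 42 GB, leaving room for the KV cache.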

Is the A100 SXM 80GB Worth It?

The A100 SXM 80GB is a potent GPU, offering impressive performance for LLMs like Llama3 8B and 70B. However, the decision to invest in this GPU for your home LLM server depends on your needs and budget.

Here's a concise breakdown:

Pros:

- High performance: The A100 SXM 80GB runs Llama3 8B at over 130 tokens per second and can hold a quantized 70B model on a single GPU.

- Headroom: Its 80 GB of memory and high bandwidth leave room for larger or longer-context models, although the very largest models will still exceed a single GPU.

Cons:

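- Cost: The A100 SXM 80GB is expensive, a significant financial investment.

- Power consumption: It's a power-hungry beast, rated at up to 400 W, demanding a capable power supply and potentially increasing your electricity bill.

- Form factor: The SXM version is not a regular PCIe card. It is a module that mounts on an SXM4 baseboard, as found in NVIDIA HGX and DGX systems, so it needs a compatible server chassis or adapter and a robust cooling solution.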
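To put the power consumption point into numbers, here is a small sketch of the running cost. NVIDIA lists a maximum TDP of 400 W for the SXM module; the electricity price and daily usage below are hypothetical placeholders that you should replace with your own figures, and the calculation assumes the GPU draws its full rated power whenever it is in use.

```python
def monthly_energy_cost(watts: float, hours_per_day: float,
                        price_per_kwh: float, days: int = 30) -> float:
    """Energy cost of running a device at a constant power draw."""
    kwh = watts / 1000 * hours_per_day * days
    return kwh * price_per_kwh


GPU_MAX_POWER_W = 400.0   # NVIDIA's listed maximum for the A100 SXM 80GB
HOURS_PER_DAY = 4.0       # hypothetical usage
PRICE_PER_KWH = 0.15      # hypothetical electricity price, in your local currency

cost = monthly_energy_cost(GPU_MAX_POWER_W, HOURS_PER_DAY, PRICE_PER_KWH)
print(f"Estimated GPU energy cost: {cost:.2f} per month")
```

This covers the GPU only; the CPU, cooling, and power supply losses add to the total.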

Consider these factors:

- Your budget: Is the A100 SXM 80GB within your budget? Consider alternative GPUs like the RTX 4090 or RTX 4080, which offer good performance at a lower price point, though with far less memory (24 GB and 16 GB respectively).

- Your usage: How often will you be using your LLM server? If you're only an occasional user, a less powerful GPU might suffice.

Optimizing Performance

Even with a powerhouse like the A100 SXM 80GB, optimization is crucial for maximizing performance. Here are some tips:

- Quantization: Using quantization is a powerful technique for reducing model size and increasing speed. Experiment with different quantization levels to find the optimal balance between performance and model size.

- Memory settings: Adjust the memory-related settings of your inference software, such as the context length and how much of the model is kept on the GPU, so that the model and its working data fit in GPU memory.

- GPU driver updates: Keep your GPU driver updated to benefit from the latest performance optimizations and bug fixes.
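Whichever of these settings you change, it helps to measure tokens per second before and after. The following is a minimal timing sketch, not tied to any particular inference library: `fake_generate` is a hypothetical stand-in that you would replace with a call into your own LLM runtime, returning the list of generated tokens.

```python
import time
from typing import Callable, List


def measure_tokens_per_second(generate: Callable[[str, int], List[str]],
                              prompt: str, max_tokens: int) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        raise RuntimeError("elapsed time too small to measure")
    return len(tokens) / elapsed


def fake_generate(prompt: str, max_tokens: int) -> List[str]:
    """Hypothetical stand-in for a real model call; sleeps to simulate work."""
    tokens = []
    for i in range(max_tokens):
        time.sleep(0.001)  # pretend each token takes about 1 ms
        tokens.append(f"tok{i}")
    return tokens


rate = measure_tokens_per_second(fake_generate, "Hello", 20)
print(f"{rate:.1f} tokens/s")
```

For a fair comparison, use the same prompt and token count for each configuration and repeat the measurement a few times.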

Building Your Home LLM Server

Once you've decided on the A100 SXM 80GB (or another GPU), it's time to build your LLM server. Here's a general guide:

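- Choose a motherboard: The SXM version of the A100 does not plug into a standard PCIe slot, so it needs an SXM4 baseboard or a compatible adapter. If you choose a PCIe GPU instead, note that the A100 itself is a PCIe 4.0 device, so a PCIe 5.0 slot brings it no extra speed. Either way, ensure the board supports the amount of RAM required by your LLM.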

- Select a CPU: Choose a powerful CPU with multiple cores and threads for multitasking and background processes.

- Select RAM: Opt for high-speed DDR5 RAM to maximize system performance.

- Select storage: Opt for fast NVMe SSDs for quick loading times.

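- Choose a PSU: Ensure your PSU has enough wattage for the A100 SXM 80GB, which is rated at up to 400 W, plus the rest of the system with some headroom.

- Choose a case: Select a chassis that can accommodate the A100 SXM 80GB, its baseboard or adapter, and the airflow or liquid cooling it requires.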

FAQ

What are the best LLM models for home servers?

There are many great LLM models available, each with different strengths and weaknesses. Some popular choices include:

- Llama 2: Openly available LLMs developed by Meta, known for their strong performance and free availability of weights. The Llama 3 models benchmarked above are their successors.

- StableLM: A family of open-source LLMs focused on conversational tasks.

- GPT-NeoX: A powerful open-source LLM originally developed by EleutherAI.

Can I run LLMs locally without a powerful GPU?

Yes, you can run smaller LLMs on a CPU, but the performance will be significantly slower. For larger, more complex models, a dedicated GPU is highly recommended.

Is it possible to use a cloud service for LLM inference?

Yes, there are many cloud services, such as Google Cloud Vertex AI (the successor to Google AI Platform) and Amazon SageMaker, that offer pre-trained LLMs and inference capabilities. This can be a good option if you don't want to invest in a powerful GPU or build your own server.

Why is quantization important for LLM inference?

Quantization reduces the size of the LLM model by representing weights and activations with fewer bits, enabling faster inference on devices with limited memory. This is like compressing a large file to make it smaller and easier to share online.
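The idea can be illustrated with a toy example. The sketch below applies simple symmetric 4-bit quantization with a single shared scale to a handful of made-up weights. This is not the Q4_K_M scheme used in the benchmarks, which works block by block with more elaborate scaling; the function names and sample values are purely illustrative.

```python
from typing import List, Tuple


def quantize_int4(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integer codes in [-7, 7] using one shared scale factor."""
    if not weights:
        raise ValueError("weights must not be empty")
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.05, 0.31]  # made-up example values
codes, scale = quantize_int4(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 3) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the scale factor, at the cost of a small rounding error per weight.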

Keywords

LLM, A100 SXM 80GB, GPU, Home server, Token speed, Quantization, Llama3, Inference, Performance, Bandwidth, Memory, Cost, Power consumption, Building a server, Optimization, Open-source LLMs, Cloud services.