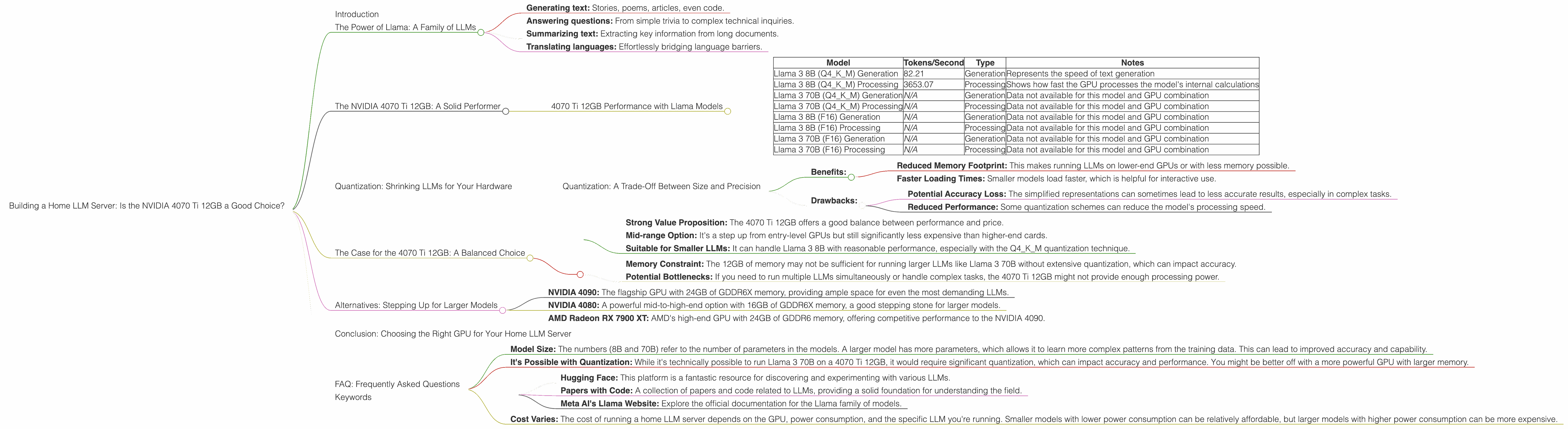

Building a Home LLM Server: Is the NVIDIA 4070 Ti 12GB a Good Choice?

Introduction

Imagine having your own personal AI assistant right at home, able to answer any question, generate creative content, and even help you write code. Sounds like something out of a sci-fi movie, right? Well, with the rise of Large Language Models (LLMs) and the power of modern GPUs, this dream is becoming a reality.

But choosing the right hardware for your home LLM server is crucial, and it's not just about throwing the most expensive GPU at the problem. You need to consider factors like model size, performance, memory, and budget.

In this article, we'll explore whether the NVIDIA 4070 Ti 12GB is a good choice to power your home LLM server, focusing on the popular Llama family of models. We'll delve into performance numbers, discuss the pros and cons, and offer insights to help you make an informed decision.

The Power of Llama: A Family of LLMs

LLMs are essentially AI models trained on massive datasets of text and code. They can perform tasks like:

- Generating text: Stories, poems, articles, even code.

- Answering questions: From simple trivia to complex technical inquiries.

- Summarizing text: Extracting key information from long documents.

- Translating languages: Effortlessly bridging language barriers.

The Llama family of models, developed by Meta AI, is known for its impressive capabilities and openly available weights. This allows researchers and enthusiasts to experiment and build upon these models, pushing the boundaries of AI.

The NVIDIA 4070 Ti 12GB: A Solid Performer

The NVIDIA 4070 Ti 12GB is a powerful mid-range GPU that strikes a balance between performance and affordability. Its 12GB of GDDR6X memory is enough to hold small models and quantized mid-sized models entirely on the card, while its memory bandwidth and CUDA cores determine how quickly those models run.
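To get a feel for why memory bandwidth matters, here is a rough back-of-the-envelope sketch. It assumes that generating each token requires reading all model weights from GPU memory once, so bandwidth divided by model size gives an approximate speed ceiling. The 504 GB/s bandwidth is the card's published spec, and the 4.9 GB model size is our own estimate for an 8B model at roughly 4 bits per weight (the configuration benchmarked later in this article); treat both as assumptions.

```python
# Rough ceiling on generation speed for a memory-bandwidth-bound model.
# All constants are assumptions for illustration, not measurements.
MEM_BANDWIDTH_GB_S = 504.0  # published 4070 Ti memory bandwidth
MODEL_SIZE_GB = 4.9         # estimated size of an 8B model at ~4 bits/weight
MEASURED_TOK_S = 82.21      # generation speed reported later in this article


def max_tokens_per_s(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound if every token requires one full pass over the weights."""
    return bandwidth_gb_s / model_size_gb


if __name__ == "__main__":
    ceiling = max_tokens_per_s(MEM_BANDWIDTH_GB_S, MODEL_SIZE_GB)
    print(f"Estimated ceiling: {ceiling:.0f} tokens/s")
    print(f"Measured speed is {MEASURED_TOK_S / ceiling:.0%} of that ceiling")
```

Under these assumptions the ceiling lands at roughly 100 tokens per second, so the measured figure is in the range you would expect from a bandwidth-limited workload.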

4070 Ti 12GB Performance with Llama Models

Let's see how the NVIDIA 4070 Ti 12GB handles the various Llama models using the data provided:

| Model | Tokens/Second | Type | Notes |
|---|---|---|---|
| Llama 3 8B (Q4_K_M) Generation | 82.21 | Generation | Speed of text generation |
| Llama 3 8B (Q4_K_M) Processing | 3653.07 | Processing | Speed at which the GPU processes the input prompt |
| Llama 3 70B (Q4_K_M) Generation | N/A | Generation | Data not available for this model and GPU combination |
| Llama 3 70B (Q4_K_M) Processing | N/A | Processing | Data not available for this model and GPU combination |
| Llama 3 8B (F16) Generation | N/A | Generation | Data not available for this model and GPU combination |
| Llama 3 8B (F16) Processing | N/A | Processing | Data not available for this model and GPU combination |
| Llama 3 70B (F16) Generation | N/A | Generation | Data not available for this model and GPU combination |
| Llama 3 70B (F16) Processing | N/A | Processing | Data not available for this model and GPU combination |
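To translate the two available figures into something more tangible, the sketch below estimates how long a single request would take. It assumes the "Processing" figure measures how fast the prompt is read and the "Generation" figure measures how fast the reply is written; the example prompt and answer lengths are hypothetical.

```python
# Throughput figures from the table above (tokens per second),
# both for Llama 3 8B with 4-bit quantization.
PROMPT_TOKENS_PER_S = 3653.07  # "Processing" row
GEN_TOKENS_PER_S = 82.21       # "Generation" row


def response_time_s(prompt_tokens: int, output_tokens: int) -> float:
    """Estimate seconds needed to read a prompt and generate a reply."""
    prefill = prompt_tokens / PROMPT_TOKENS_PER_S
    decode = output_tokens / GEN_TOKENS_PER_S
    return prefill + decode


if __name__ == "__main__":
    # Hypothetical workload: a 1,000-token prompt and a 500-token answer.
    print(f"{response_time_s(1000, 500):.2f} s")
```

For that hypothetical workload the estimate comes out at a little over six seconds, almost all of it spent generating the answer rather than reading the prompt.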

Key Observations:

- Solid Performance for Llama 3 8B: With 4-bit quantization, the 4070 Ti 12GB generates about 82 tokens per second and processes about 3,650 tokens per second, which is fast enough for interactive chat.

- Limited Data for Larger Models: No results are available for Llama 3 70B or for the unquantized (F16) version of Llama 3 8B. This is likely due to the memory limitations of the 12GB card: at 16 bits per weight, an 8B model needs about 16GB for its weights alone.

- Quantization & Precision: The only results available are for Llama 3 8B with the Q4_K_M quantization scheme. This scheme stores most weights at roughly 4 bits each, shrinking the model enough to fit comfortably on a 12GB card. The trade-off is a small loss in accuracy compared with the full-precision model.
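The memory argument in these observations can be checked with simple arithmetic: weight size is roughly the parameter count times the bits per weight, divided by eight. The sketch below is an estimate only. The parameter counts, the average of about 4.85 bits per weight for 4-bit quantization, and the 1.5 GB allowance for context and runtime overhead are all assumptions for illustration.

```python
VRAM_GB = 12.0  # memory on the 4070 Ti 12GB

# Approximate values, assumed for illustration.
PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params: float, bits_per_weight: float,
         vram_gb: float = VRAM_GB, overhead_gb: float = 1.5) -> bool:
    """True if weights plus an assumed overhead fit in GPU memory."""
    return weight_size_gb(n_params, bits_per_weight) + overhead_gb <= vram_gb


if __name__ == "__main__":
    for model, n_params in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_size_gb(n_params, bits)
            verdict = "fits" if fits(n_params, bits) else "does not fit"
            print(f"{model} {fmt}: ~{size:.1f} GB, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions only the 4-bit 8B model fits, which is consistent with it being the only configuration that has benchmark results.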

Quantization: Shrinking LLMs for Your Hardware

Quantization is a process that reduces the size of an LLM by using less precise representations of its weights. Think of it as reducing the number of shades in an image, from millions of colors to just a few hundred. The result is a smaller, lighter image, but with some loss of fidelity. Similarly, quantization reduces the memory requirements for the LLM but may slightly impact its accuracy and performance.
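To make the idea concrete, here is a minimal sketch of one simple form of quantization: each block of weights is scaled so its largest value maps to the top of a 4-bit integer range, then rounded. This is a simplified illustration and not the actual scheme used by any particular model format; the function names and sample weights are made up for the example.

```python
def quantize_block(weights, bits=4):
    """Symmetric absmax quantization of one block of floats to small ints."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate float weights from integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    # Hypothetical weights, chosen only to illustrate the round trip.
    weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.0, 0.48, -0.77]
    codes, scale = quantize_block(weights)
    restored = dequantize_block(codes, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("codes:", codes)
    print(f"scale: {scale:.4f}, worst-case error: {worst:.4f}")
```

Each weight now needs only 4 bits plus a shared scale per block, and the rounding error is at most half the scale. That small per-weight error is the "loss of fidelity" described above.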

Quantization: A Trade-Off Between Size and Precision

Benefits:

- Reduced Memory Footprint: This makes running LLMs on lower-end GPUs or with less memory possible.

- Faster Loading Times: Smaller models load faster, which is helpful for interactive use.

Drawbacks:

- Potential Accuracy Loss: The simplified representations can sometimes lead to less accurate results, especially in complex tasks.

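- Possible Speed Penalty: Quantized weights must be converted back to higher precision during computation, so some schemes can slow processing, although smaller weights often speed up generation because less data has to be read from memory.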

The Case for the 4070 Ti 12GB: A Balanced Choice

The NVIDIA 4070 Ti 12GB appears to be a suitable option for running smaller LLMs, like Llama 3 8B, particularly if you're employing quantization techniques to keep the memory footprint manageable.

Here's a breakdown of its benefits:

- Strong Value Proposition: The 4070 Ti 12GB offers a good balance between performance and price.

- Mid-range Option: It's a step up from entry-level GPUs but still significantly less expensive than higher-end cards.

- Suitable for Smaller LLMs: It can handle Llama 3 8B with reasonable performance, especially with the Q4KM quantization technique.

However, consider these limitations:

- Memory Constraint: The 12GB of memory is not sufficient for larger LLMs like Llama 3 70B. Even at 4-bit quantization, a 70B model needs roughly 40GB for its weights, so most of it would have to run from system RAM at much lower speed.

- Potential Bottlenecks: If you need to run multiple LLMs simultaneously or handle complex tasks, the 4070 Ti 12GB might not provide enough processing power.

Alternatives: Stepping Up for Larger Models

If you're planning to run larger models like Llama 3 70B or explore even more advanced models, you may need to consider more powerful GPUs with larger memory capacities. Some popular options include:

- NVIDIA 4090: The flagship GPU with 24GB of GDDR6X memory, providing room for mid-sized models and longer contexts. A 4-bit 70B model still exceeds 24GB, so it would need a second card or partial CPU offloading.

- NVIDIA 4080: A powerful high-end option with 16GB of GDDR6X memory, a good stepping stone for larger models.

- AMD Radeon RX 7900 XTX: AMD's high-end GPU with 24GB of GDDR6 memory (the RX 7900 XT has 20GB). It matches the NVIDIA 4090 on memory capacity, though not on raw performance, and LLM tooling on AMD is generally less mature than on NVIDIA.

However, these higher-end options come with a significantly higher price tag.

Conclusion: Choosing the Right GPU for Your Home LLM Server

The choice of GPU for your home LLM server ultimately depends on your specific needs. If you primarily plan to experiment with smaller LLMs like Llama 3 8B and are comfortable with the potential trade-offs of quantization, the NVIDIA 4070 Ti 12GB offers a good balance between performance and affordability.

However, if you're planning to run large LLMs or perform complex tasks like generating high-resolution images or fine-tuning massive models, consider investing in a more powerful GPU with a larger memory capacity.

FAQ: Frequently Asked Questions

Q: What is the difference between Llama 3 8B and Llama 3 70B?

- Model Size: The numbers (8B and 70B) refer to the number of parameters in the models. A larger model has more parameters, which allows it to learn more complex patterns from the training data. This can lead to improved accuracy and capability.

Q: Can I use a 4070 Ti 12GB to run Llama 3 70B?

- Only with CPU Offloading: Even at 4-bit quantization, Llama 3 70B needs roughly 40GB for its weights, far more than the card's 12GB. Running it on a 4070 Ti 12GB means keeping most of the model in system RAM and running those layers on the CPU, which is much slower. You would be better off with a GPU setup that has more memory.
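To see how poor the fit is, consider tools such as llama.cpp that can place some of a model's layers on the GPU and leave the rest on the CPU. The sketch below estimates how many layers would fit in 12GB. The 42.8 GB model size, the 80-layer count, the 2 GB reserve for context and runtime overhead, and the assumption that all layers are the same size are rough figures for illustration only.

```python
def gpu_layers(model_size_gb: float, n_layers: int,
               vram_gb: float, reserve_gb: float = 2.0) -> int:
    """Estimate how many equally sized layers fit in GPU memory."""
    per_layer_gb = model_size_gb / n_layers
    usable_gb = max(0.0, vram_gb - reserve_gb)
    return min(n_layers, int(usable_gb // per_layer_gb))


if __name__ == "__main__":
    # Assumed figures: ~42.8 GB for a 4-bit 70B model with 80 layers.
    on_gpu = gpu_layers(model_size_gb=42.8, n_layers=80, vram_gb=12.0)
    print(f"{on_gpu} of 80 layers on the GPU, {80 - on_gpu} on the CPU")
```

With these numbers fewer than a quarter of the layers land on the GPU, so overall speed would be dominated by the much slower CPU portion.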

Q: What are the best resources for learning more about LLMs?

- Hugging Face: This platform is a fantastic resource for discovering and experimenting with various LLMs.

- Papers with Code: A collection of papers and code related to LLMs, providing a solid foundation for understanding the field.

- Meta AI's Llama Website: Explore the official documentation for the Llama family of models.

Q: Is it expensive to run a home LLM Server?

- Cost Varies: The cost of running a home LLM server depends on the GPU, power consumption, and the specific LLM you're running. Smaller models with lower power consumption can be relatively affordable, but larger models with higher power consumption can be more expensive.
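For a rough sense of the electricity side of that cost, you can multiply power draw by hours of use and your electricity rate. In the sketch below, the 285 W figure is the card's rated board power (real inference draw is often lower), and the usage hours and price per kWh are hypothetical placeholders you should replace with your own.

```python
def monthly_cost(watts: float, hours_per_day: float,
                 price_per_kwh: float, days: int = 30) -> float:
    """Estimate monthly electricity cost for a given power draw."""
    kwh = watts / 1000 * hours_per_day * days
    return kwh * price_per_kwh


if __name__ == "__main__":
    # Assumptions: 285 W under load, 4 hours of use per day,
    # and a hypothetical rate of 0.15 per kWh in your local currency.
    cost = monthly_cost(watts=285, hours_per_day=4, price_per_kwh=0.15)
    print(f"Estimated GPU electricity cost: {cost:.2f} per month")
```

This covers only the GPU under load; the rest of the system and idle power draw would add to the total.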
