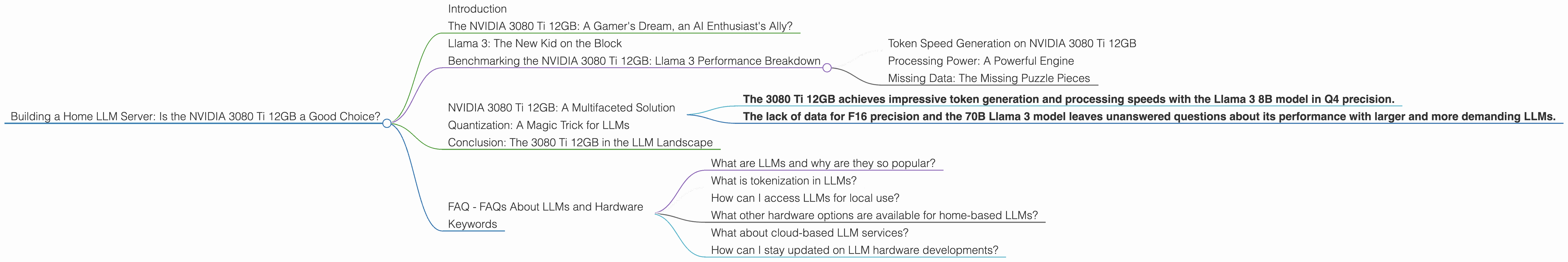

Building a Home LLM Server: Is the NVIDIA 3080 Ti 12GB a Good Choice?

Introduction

The world of large language models (LLMs) is booming, with ever-increasing capabilities and a growing desire to run these models locally. For anyone building a home LLM server, a critical question arises: what hardware should power it? In this article we dive into the performance of the NVIDIA 3080 Ti 12GB, a popular card among gamers and AI enthusiasts alike, and focus on how well it handles the demanding workloads of LLMs, specifically the Llama 3 family.

The NVIDIA 3080 Ti 12GB: A Gamer's Dream, an AI Enthusiast's Ally?

The NVIDIA 3080 Ti 12GB, a powerhouse in the world of graphics cards, holds great appeal for AI enthusiasts, especially those looking to run LLMs locally. With its 12GB of GDDR6X memory and impressive processing power, it promises smooth performance, but will it deliver on that promise when it comes to LLMs?

Let's delve into the numbers:

Llama 3: The New Kid on the Block

Llama 3, the latest iteration of Meta's openly available Llama family, has been making waves. We'll focus on the 8B (8 billion parameter) model, a popular choice for local deployment because it balances output quality against resource requirements.

Benchmarking the NVIDIA 3080 Ti 12GB: Llama 3 Performance Breakdown

While the 3080 Ti 12GB boasts impressive gaming capabilities, its LLM performance is best gauged by two numbers: token generation speed (how quickly it produces output) and prompt processing speed (how quickly it reads the input). We'll focus on the Llama 3 8B model with Q4 quantization (4-bit weights), a common technique for reducing memory footprint and boosting speed.

Token Generation Speed on the NVIDIA 3080 Ti 12GB

The NVIDIA 3080 Ti 12GB generates 106.71 tokens per second with the Llama 3 8B model at Q4 quantization. In other words, the card produces roughly 100 tokens of output every second, which translates to a decent, albeit not blazing-fast, experience compared with higher-end solutions (we'll explore those in later articles).
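A common rule of thumb helps put this number in context: when generating one token at a time, the GPU must read essentially all of the model's weights from memory for every token, so memory bandwidth divided by model size gives a rough ceiling on generation speed. The sketch below applies that to the RTX 3080 Ti's published 912 GB/s of memory bandwidth. The 4.9 GB weight size is an assumption (a typical size for a 4-bit Llama 3 8B file), not a figure from the benchmark.

```python
# Back-of-envelope ceiling for single-stream token generation speed.
# Assumption: each generated token streams all model weights from VRAM once,
# so bandwidth / weight size bounds tokens per second.
# KV-cache reads and kernel overheads are ignored.

RTX_3080_TI_BANDWIDTH_GB_S = 912.0  # published memory bandwidth of the RTX 3080 Ti
ASSUMED_Q4_WEIGHTS_GB = 4.9         # assumption: approximate size of a 4-bit Llama 3 8B file
MEASURED_TG_TOK_S = 106.71          # token generation speed reported in this article


def generation_ceiling(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gb_s / weights_gb


ceiling = generation_ceiling(RTX_3080_TI_BANDWIDTH_GB_S, ASSUMED_Q4_WEIGHTS_GB)
efficiency = MEASURED_TG_TOK_S / ceiling
print(f"ceiling ~ {ceiling:.0f} tok/s, measured = {efficiency:.0%} of ceiling")
```

Under these assumptions the ceiling is roughly 186 tokens per second, so the measured 106.71 tokens per second is about 57% of it; the gap covers the KV-cache reads and overheads the estimate leaves out.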

Think of tokens as words or pieces of words. The more tokens per second your system can generate, the faster your LLM can produce responses.

Processing Power: A Powerful Engine

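The 3080 Ti 12GB shines at prompt processing, reaching 3556.67 tokens per second for the Llama 3 8B model with Q4 quantization. This is how fast the card reads the input prompt before it starts replying. Because all of a prompt's tokens can be processed in parallel, this step leans on raw compute, whereas generation produces one token at a time and is limited mostly by memory bandwidth. That is why the two figures differ by more than 30x.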

Imagine a car engine. More horsepower means the engine can push harder and faster. More processing power means your GPU can handle the complex computations of LLMs more efficiently.
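To see how the two speeds combine, here is a rough estimate of how long a single request takes. The prompt and reply lengths are hypothetical, and the sketch assumes both phases run at exactly the benchmark rates, which real workloads only approximate (speeds shift with context length and software settings).

```python
# Rough end-to-end latency for one request, using the article's two measured speeds.
# Assumption: prompt processing and generation run back to back at their benchmark rates.

PROMPT_PROCESSING_TOK_S = 3556.67
TOKEN_GENERATION_TOK_S = 106.71


def request_latency_s(prompt_tokens: int, output_tokens: int) -> float:
    # Reading the prompt: tokens are handled in parallel, so this phase is fast.
    prefill = prompt_tokens / PROMPT_PROCESSING_TOK_S
    # Writing the reply: one token at a time, so this phase usually dominates.
    decode = output_tokens / TOKEN_GENERATION_TOK_S
    return prefill + decode


# Hypothetical request: a 1,000-token prompt and a 200-token answer.
latency = request_latency_s(1000, 200)
print(f"{latency:.2f} s")
```

For this example, reading the prompt takes under 0.3 seconds while the reply takes about 1.9 seconds, which is why generation speed usually decides how responsive a chat feels.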

Missing Data: Gaps in the Puzzle

Unfortunately, no data is available for the 3080 Ti 12GB running the Llama 3 8B model at F16 precision (16-bit floating point), nor for the larger Llama 3 70B model at either Q4 or F16 precision.

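This gap limits our understanding of how the 3080 Ti 12GB handles other model sizes and precision levels. A likely reason for it is memory: at 16 bits per weight, the 8B model's weights alone take about 16 GB, and even a 4-bit 70B model needs 35 GB or more, both well beyond the card's 12 GB of VRAM.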
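A weights-only estimate makes it easy to check which configurations can fit on a 12 GB card at all. This sketch multiplies a parameter count by bits per weight. The nominal parameter counts and the 4.5 bits per weight used for Q4 (4 bits plus a share of per-block scale factors) are assumptions, and the estimate ignores the KV cache and runtime overhead, so real memory needs are somewhat higher.

```python
# Weights-only memory estimate: parameters x bits per weight / 8 = bytes.
# Assumptions: nominal parameter counts; ~4.5 bits/weight for a 4-bit format
# once per-block scales are included; KV cache and runtime overhead ignored.

VRAM_GB = 12.0

MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
PRECISIONS = {"Q4": 4.5, "F16": 16.0}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for model, params in MODELS.items():
    for precision, bits in PRECISIONS.items():
        size = weights_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {precision}: ~{size:.1f} GB -> {verdict} in {VRAM_GB:.0f} GB")
```

Only the 8B model at Q4 (about 4.5 GB) fits comfortably; the 8B model at F16 (about 16 GB) and both 70B variants exceed the card's memory.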

NVIDIA 3080 Ti 12GB: A Multifaceted Solution

The NVIDIA 3080 Ti 12GB, on the surface, appears to be a good choice for home-based LLMs. However, the limited data available prevents a fuller picture of its true potential.

- The 3080 Ti 12GB achieves impressive token generation and prompt processing speeds with the Llama 3 8B model at Q4 quantization.

- The lack of data for F16 precision and the 70B Llama 3 model leaves unanswered questions about its performance with larger and more demanding LLMs.

Choosing the right hardware for your home LLM server is a complex decision. The 3080 Ti 12GB certainly deserves consideration, but further research and testing are crucial to make an informed choice.

Quantization: A Magic Trick for LLMs

Quantization is a fancy word for making LLMs smaller and faster. Think of it as squeezing a huge suitcase of clothes into a tiny carry-on bag. By using fewer bits to represent numbers, we can shrink the LLM's size and speed up its processing.

Q4 quantization stores each weight in about 4 bits, while F16 precision uses 16 bits. That cuts the memory needed for the weights to roughly a quarter, and because token generation is limited largely by how fast weights can be read from memory, Q4 models also generate tokens faster.
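Here is a minimal sketch of how 4-bit quantization can work. It is a simplified, hypothetical block-wise scheme in the spirit of formats used by local LLM runtimes, not a byte-exact copy of any real one: each block of 32 weights shares one scale factor, and every weight is rounded to a signed 4-bit integer. The 16-bit scale size is an assumption.

```python
# Simplified block-wise 4-bit quantization (hypothetical scheme, not a real file format).
# Each block of 32 weights shares one scale; each weight becomes an integer in [-8, 7].

BLOCK_SIZE = 32
SCALE_BITS = 16  # assumption: the per-block scale is stored as a 16-bit float


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    amax = max(abs(w) for w in weights)
    if amax == 0:
        return 0.0, [0] * len(weights)
    # Map the largest magnitude to 7 so every value lands in the 4-bit range.
    scale = amax / 7
    # Divide by the scale, round to the nearest integer, clamp to [-8, 7].
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    # Approximate reconstruction of the original weights.
    return [scale * v for v in q]


def bits_per_weight(block_size: int = BLOCK_SIZE) -> float:
    # 4 bits per weight plus this block's share of the scale.
    return (block_size * 4 + SCALE_BITS) / block_size


# Example block with made-up weight values between -0.8 and 0.8.
block = [((i * 37) % 17 - 8) / 10 for i in range(BLOCK_SIZE)]
scale, q = quantize_block(block)
restored = dequantize_block(scale, q)
max_error = max(abs(a - b) for a, b in zip(block, restored))
print(f"bits/weight = {bits_per_weight()}, max error = {max_error:.3f}")
```

With one 16-bit scale per 32 weights the overhead works out to 4.5 bits per weight, so a 4-bit model ends up roughly 3.5x smaller than its F16 version rather than exactly 4x, and each restored weight is off by at most half a scale step.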

Conclusion: The 3080 Ti 12GB in the LLM Landscape

The NVIDIA 3080 Ti 12GB remains a viable option for home-based LLM servers, especially when running smaller models like the Llama 3 8B with Q4 quantization. However, its potential for running larger models with different precision levels remains unclear.

Before making a decision, consider your specific needs, model requirements, and the availability of detailed benchmark data. The LLM landscape is constantly evolving, so stay updated on the latest benchmarks and developments to make an informed choice for your home LLM server.

FAQs About LLMs and Hardware

What are LLMs and why are they so popular?

LLMs are computer programs trained on massive amounts of text data, enabling them to understand, generate, and translate human language in a way that feels remarkably human-like. They've become increasingly popular due to their ability to automate tasks, create engaging content, and power innovative applications across diverse fields.

What is tokenization in LLMs?

Tokenization is the process of breaking text into units called "tokens," the building blocks that LLMs read and write. Think of it like dissecting a sentence into words, except that a token is often only part of a word. The more tokens your system can process per second, the faster your LLM can generate responses.
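To illustrate how a word can split into several tokens, here is a toy greedy longest-match tokenizer over a tiny hypothetical vocabulary. Real LLM tokenizers, including Llama 3's, use byte-pair encoding with a large learned vocabulary, so this sketch only shows the idea of sub-word pieces, not how any production tokenizer works.

```python
# Toy greedy longest-match tokenizer (illustration only; VOCAB is hypothetical).

VOCAB = {"token", "ization", "is", "fun", " "}


def greedy_tokenize(text: str, vocab: set[str]) -> list[str]:
    tokens = []
    i = 0
    max_len = max((len(v) for v in vocab), default=1)
    while i < len(text):
        # Try the longest candidate first, shrinking until it matches a vocabulary entry.
        for length in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + length]
            if piece in vocab:
                tokens.append(piece)
                i += length
                break
        else:
            # No vocabulary entry matched: fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens


print(greedy_tokenize("tokenization is fun", VOCAB))
```

Here the single word "tokenization" becomes two tokens, "token" and "ization", which is why token counts typically run higher than word counts.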

How can I access LLMs for local use?

Many open-source LLMs are available for download and deployment on your personal computer. Platforms like Hugging Face provide a vast repository of models, making it easy to explore and experiment with different LLMs.

What other hardware options are available for home-based LLMs?

Beyond the NVIDIA 3080 Ti 12GB, other GPUs such as the RTX 4090, as well as CPUs paired with large amounts of system RAM, can power LLMs. Each option offers a different balance of performance and memory capacity, so it's important to match your hardware choice to your model and workload.

What about cloud-based LLM services?

Cloud-based LLM services offer a convenient alternative to running LLMs locally. They provide access to powerful hardware and require minimal setup, making them particularly attractive for users who prioritize ease of use and scalability.

How can I stay updated on LLM hardware developments?

Keep an eye on tech blogs, forums, and developer communities for the latest benchmarks and announcements related to LLM hardware. Following the work of research groups and AI companies can also provide valuable insights into new developments and trends.

Keywords

LLMs, large language models, NVIDIA 3080 Ti, 3080 Ti 12GB, GPU, token speed, processing power, Llama 3, quantization, Q4, F16, home LLM server, AI, machine learning, natural language processing, performance, benchmarking, hardware, software, cloud services.