Best MacBook for AI Developers: Is the Apple M3 Right for You?

Introduction

The world of Artificial Intelligence (AI) is rapidly evolving, and large language models (LLMs) like Llama 2 are driving the revolution. But running these models locally can be computationally demanding, requiring powerful hardware. If you're an AI developer looking for a MacBook that can handle the heavy lifting of local LLM inference, you might be wondering – is the Apple M3 chip the right choice for you?

This article dives deep into the performance of the Apple M3 chip specifically for running Llama 2 models. While the M3 chip has been praised for its overall performance, we will focus on its strengths and weaknesses when it comes to AI workloads, using real-world benchmarks and data.

Apple M3 Token Speed: A Closer Look

Apple positions the M3 as a step up from the M1 and M2 generations for demanding workloads. But how does that translate to real-world LLM usage?

Let's break it down.

Understanding Token Speed

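Imagine a sentence like "The cat sat on the mat." LLMs process text by breaking it into small units called tokens. In this simple sentence each word ("The," "cat," "sat," "on," "the," "mat") roughly corresponds to one token, although real tokenizers often split longer or rarer words into several pieces and treat punctuation as tokens too.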

The "token speed" tells you how many tokens your device can process per second. Essentially, it's a measure of how fast your device can understand and process text.
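As a minimal sketch of the arithmetic behind this metric, token speed is the number of tokens handled divided by the elapsed time. The helper names below (`tokens_per_second`, `measure`) are hypothetical and only illustrate the calculation; they are not part of any benchmark tool.

```python
import time


def tokens_per_second(token_count, elapsed_seconds):
    """Return throughput in tokens per second for a timed run."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds


def measure(fn, token_count):
    """Time a callable that handles `token_count` tokens; return its throughput."""
    start = time.perf_counter()
    fn()
    elapsed = time.perf_counter() - start
    return tokens_per_second(token_count, elapsed)


if __name__ == "__main__":
    # Illustrative numbers only: 256 tokens produced in 12 seconds.
    print(round(tokens_per_second(256, 12.0), 2))
```

In practice you would pass `measure` a function that runs your model on a prompt, along with the number of tokens that run handled.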

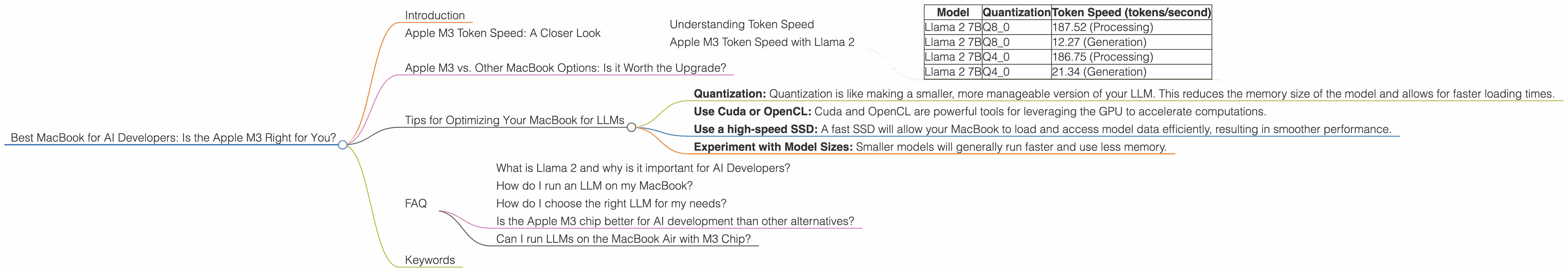

Apple M3 Token Speed with Llama 2

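The Apple M3 chip can process Llama 2 models at usable speeds when the models are quantized. Note that the M3 has no discrete GPU: its integrated GPU shares unified memory with the CPU. Token generation is typically limited by how quickly weights can be read from memory, which is likely why the smaller Q4_0 model generates tokens faster than Q8_0 in the results below.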

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 187.52 | 12.27 |
| Llama 2 7B | Q4_0 | 186.75 | 21.34 |

Explanation:

- Processing: This refers to the speed at which the model can process the input tokens.

- Generation: This refers to the speed at which the model can generate output tokens.

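- Q8_0 and Q4_0: These are quantization levels that store model weights in roughly 8 and 4 bits each. Quantization is a technique that reduces the size of model files and the amount of memory needed to run them. In the table above, the smaller Q4_0 model generates tokens noticeably faster than Q8_0 while processing speed is nearly identical. The trade-off is that quantization can lead to some accuracy loss.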
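To see what these numbers mean for a single request, the sketch below estimates response time as prompt tokens divided by processing speed plus output tokens divided by generation speed. The speeds are the ones from the table above; the 512-token prompt and 256-token reply are illustrative assumptions, and the function name is hypothetical.

```python
# Speeds (tokens/second) taken from the benchmark table for Llama 2 7B on the M3.
SPEEDS = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}


def estimate_seconds(quant, prompt_tokens, output_tokens):
    """Rough latency: time to read the prompt plus time to generate the reply."""
    speed = SPEEDS[quant]
    return (prompt_tokens / speed["processing"]
            + output_tokens / speed["generation"])


if __name__ == "__main__":
    # Assumed workload: 512-token prompt, 256-token reply.
    for quant in SPEEDS:
        print(quant, round(estimate_seconds(quant, 512, 256), 1), "seconds")
```

Under these assumptions the estimate comes to roughly 24 seconds for Q8_0 and 15 seconds for Q4_0, and almost all of the difference comes from the generation phase.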

Note: We don't have data for the M3 chip running Llama 2 models with F16 precision. F16 is the 16-bit (half-precision) floating-point format, commonly used to store neural network weights without quantization.

Apple M3 vs. Other MacBook Options: Is it Worth the Upgrade?

The M3 is the successor to the M1 and M2 chips, but is it worth upgrading if you already work on one of those? Answering that properly requires benchmark numbers for the same model and quantization on each chip.

Note: We only have data available for the M3 chip, so a direct comparison with older chips is not possible here.

Tips for Optimizing Your MacBook for LLMs

Even with a powerful chip like the M3, you can further optimize your MacBook for smooth LLM inference.

- Quantization: Quantization is like making a smaller, more manageable version of your LLM. This reduces the memory size of the model and allows for faster loading times.

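- Use Metal acceleration: CUDA requires NVIDIA hardware and is not available on Apple silicon, and OpenCL is deprecated on macOS. On a Mac, tools such as llama.cpp use Apple's Metal API to run computations on the M3's integrated GPU.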

- Use a high-speed SSD: A fast SSD shortens the time it takes to load model files from disk. Once the model is in memory, token speed depends mainly on the chip and its memory rather than on the SSD.

- Experiment with Model Sizes: Smaller models will generally run faster and use less memory.
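The quantization and model-size tips can be made concrete with a rough size estimate. The sketch below assumes llama.cpp's block layouts, where each block of 32 weights stores one 16-bit scale plus the quantized values, giving about 8.5 bits per weight for Q8_0 and 4.5 for Q4_0. The parameter count is the nominal 7 billion, the 4 GB headroom for the operating system and context is an arbitrary assumption, and real files differ slightly because not every tensor uses the same format.

```python
# Approximate bits per weight, assuming blocks of 32 weights with one 16-bit scale.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bits per weight
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bits per weight
}


def approx_size_gb(n_params, quant):
    """Estimate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


def fits_in_memory(n_params, quant, ram_gb, headroom_gb=4.0):
    """Check the estimate against RAM, leaving an assumed headroom for everything else."""
    return approx_size_gb(n_params, quant) + headroom_gb <= ram_gb


if __name__ == "__main__":
    n_params = 7e9  # nominal size of a "7B" model
    for quant in BITS_PER_WEIGHT:
        size = approx_size_gb(n_params, quant)
        print(quant, round(size, 2), "GB, fits in 8 GB:",
              fits_in_memory(n_params, quant, ram_gb=8))
```

With these assumptions a 7B model needs about 14 GB at F16, 7.4 GB at Q8_0, and 3.9 GB at Q4_0, which is why quantization matters most on machines with 8 GB or 16 GB of unified memory.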

FAQ

What is Llama 2 and why is it important for AI Developers?

Llama 2 is a family of language models released by Meta in 2023, with openly available weights in 7B, 13B, and 70B parameter sizes. Because the weights can be downloaded, developers can run, quantize, and fine-tune the models on their own hardware.

How do I run an LLM on my MacBook?

Running an LLM on your MacBook requires installing appropriate software and downloading model weights in a compatible format. A common choice is llama.cpp, which supports Apple silicon through Metal. No separate GPU driver is needed, since the M3's GPU is integrated and supported by macOS.
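As a rough sketch of what a llama.cpp invocation looks like, the snippet below assembles a command line without running it. It assumes a locally built llama.cpp whose command-line binary is named `llama-cli` (older builds call it `main`) and uses the `-m`, `-p`, `-n`, and `-ngl` options for model path, prompt, number of tokens to generate, and GPU layers to offload. The model path and the helper name are hypothetical placeholders.

```python
import shlex


def llama_cli_command(model_path, prompt, max_tokens=128, gpu_layers=99,
                      binary="./llama-cli"):
    """Build an argument list for a llama.cpp run (binary name is an assumption)."""
    return [
        binary,
        "-m", model_path,         # path to a quantized model file
        "-p", prompt,             # prompt text
        "-n", str(max_tokens),    # number of tokens to generate
        "-ngl", str(gpu_layers),  # layers to offload to the GPU (Metal on a Mac)
    ]


# Hypothetical model path; replace it with the file you actually downloaded.
cmd = llama_cli_command("models/llama-2-7b.Q4_0.gguf",
                        "Explain quantization in one sentence.")
print(shlex.join(cmd))
```

The printed line can be pasted into a terminal, or the list can be passed to `subprocess.run` once the binary and model file exist on your machine.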

How do I choose the right LLM for my needs?

The choice of LLM depends on your specific requirements, such as the size of the model, the computing power available, and the type of tasks you want to perform.

Is the Apple M3 chip better for AI development than other alternatives?

The M3 chip delivers usable speeds for AI development with quantized 7B models, as the benchmarks above show. However, alternatives like the M2 Pro and M2 Max have more GPU cores and higher memory bandwidth, so they might offer better performance, particularly for larger models.

Can I run LLMs on the MacBook Air with M3 Chip?

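Yes. Every M3 MacBook, Air or Pro, relies on the chip's integrated GPU, so the lack of a dedicated GPU is not specific to the Air. The practical limits are the amount of unified memory, which determines how large a model you can load, and the Air's fanless design, which might reduce speeds under sustained load.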

Keywords

Apple M3, MacBook, AI Developers, Llama 2, LLM, Token Speed, Quantization, GPU, SSD, Cuda, OpenCL, Performance, Inference, Processing, Generation, Model Size, AI workloads