Best MacBook for AI Developers: Is the Apple M3 Max Right for You?

Introduction

AI developers, especially those working with large language models (LLMs), are always on the lookout for hardware that can handle the demanding computations these models involve. With the rise of local LLM inference, a device's processing power can make a huge difference. For example, imagine a developer who wants to run Llama 2 on their MacBook. How quickly the device processes a prompt and generates tokens determines how responsive the model feels in real-time use.

This article will delve into the performance of the Apple M3 Max chip for running LLMs, specifically focusing on the Llama 2 and Llama 3 families. We'll analyze the speed at which the M3 Max can process and generate tokens, providing insights into its capabilities and whether it’s the right choice for your AI development needs.

Let's dive into the performance, comparing different LLM sizes, and explore the potential of the M3 Max for AI enthusiasts!

M3 Max Performance with Llama 2 and Llama 3

The Apple M3 Max chip is a powerhouse in the world of mobile computing, boasting a significant leap in performance over its predecessors. But how does it fare in the demanding world of AI development with LLMs? Let's take a look at its prowess with the Llama 2 and Llama 3 families, comparing different model sizes and quantizations.

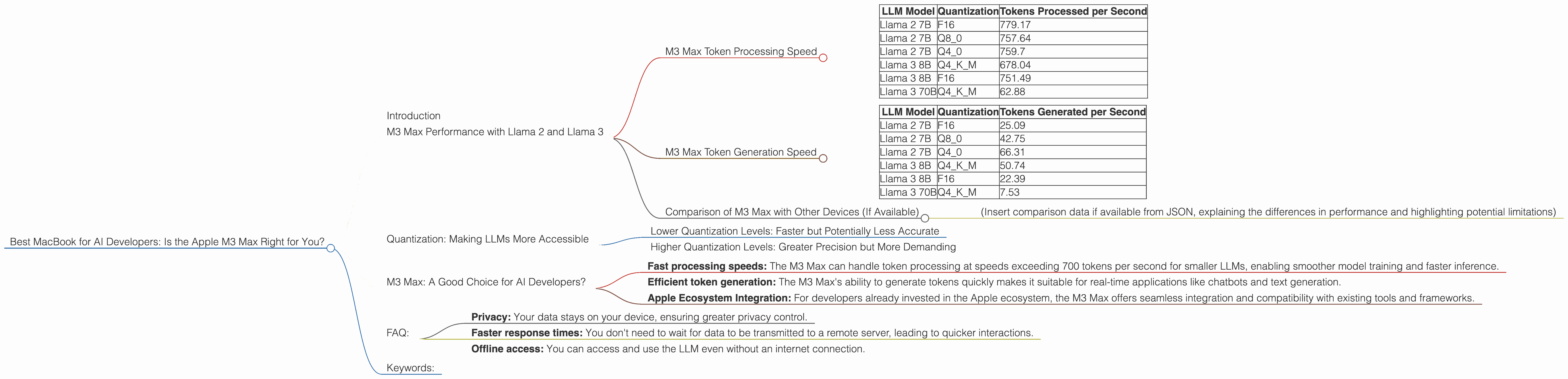

M3 Max Token Processing Speed

Let's start with prompt processing speed, which measures how quickly the M3 Max can ingest the input tokens of a prompt before it begins generating a response.

Here's a breakdown of the M3 Max's performance for processing tokens with various LLMs:

| LLM Model | Quantization | Tokens Processed per Second |
|---|---|---|
| Llama 2 7B | F16 | 779.17 |
| Llama 2 7B | Q8_0 | 757.64 |
| Llama 2 7B | Q4_0 | 759.7 |
| Llama 3 8B | Q4_K_M | 678.04 |
| Llama 3 8B | F16 | 751.49 |
| Llama 3 70B | Q4_K_M | 62.88 |

Understanding the Numbers:

- F16: This refers to a half-precision floating-point format used for storing and processing data.

- Q8_0: This is an 8-bit quantization technique where the model weights are approximated using 8-bit integers, leading to smaller model sizes but potentially some loss of accuracy.

- Q4_0: This is a 4-bit quantization technique, offering even smaller model sizes but potentially with more accuracy loss.

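- Q4_K_M: This is a 4-bit "k-quant" format that mixes precisions across the model's tensors to balance size and accuracy. It is not specific to Llama 3; it is simply the 4-bit format used for the Llama 3 results here.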

As you can see, the M3 Max shows impressive performance with both Llama 2 and Llama 3 models. The speed varies with model size and, to a lesser degree, with the quantization used. Notably, the M3 Max is highly efficient with the smallest model (Llama 2 7B), exceeding 750 tokens per second at every quantization tested. For Llama 3 70B, however, the rate drops to about 63 tokens per second, less than a tenth of the Llama 3 8B figure at the same quantization, likely due to the much larger weights involved.
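
To make these rates more concrete, here is a minimal sketch that converts a tokens-per-second figure into an estimated wait before generation can start. The two rates come from the table above; the 2,000-token prompt length and the function name `prompt_seconds` are assumptions chosen for illustration, and the estimate assumes the rate stays constant over the whole prompt.

```python
def prompt_seconds(prompt_tokens: int, tokens_per_second: float) -> float:
    """Estimate the seconds needed to ingest a prompt at a constant rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return prompt_tokens / tokens_per_second


if __name__ == "__main__":
    prompt_tokens = 2000  # hypothetical prompt length, not from the benchmark

    # Rates taken from the prompt-processing table above.
    rates = {
        "Llama 2 7B (F16)": 779.17,
        "Llama 3 70B (4-bit)": 62.88,
    }
    for label, tps in rates.items():
        print(f"{label}: about {prompt_seconds(prompt_tokens, tps):.1f} s")
```

Under these assumptions, the same prompt that the 7B model ingests in a few seconds takes the 70B model roughly half a minute.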

M3 Max Token Generation Speed

The M3 Max's token generation speed determines how fast it can produce text output after processing the input data. It's a crucial factor for real-time applications like chatbots and text generation. Here's the performance data for token generation:

| LLM Model | Quantization | Tokens Generated per Second |
|---|---|---|
| Llama 2 7B | F16 | 25.09 |
| Llama 2 7B | Q8_0 | 42.75 |
| Llama 2 7B | Q4_0 | 66.31 |
| Llama 3 8B | Q4_K_M | 50.74 |
| Llama 3 8B | F16 | 22.39 |
| Llama 3 70B | Q4_K_M | 7.53 |

Key Observations:

- Quantization's Impact: Token generation speed depends heavily on the quantization used. On Llama 2 7B, the lower-bit formats are clearly faster than F16: Q8_0 reaches 42.75 tokens per second and Q4_0 reaches 66.31, against 25.09 for F16. A likely reason is that generating each token requires reading the model's weights from memory, so smaller weights mean less data to move per token.

- Model Size Matters: As expected, at the same precision the smaller model generates faster: Llama 2 7B reaches 25.09 tokens per second at F16, against 22.39 for Llama 3 8B. With the largest model, Llama 3 70B, generation drops to around 7.5 tokens per second, highlighting the computational demands of such large models.
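
The observations above can be turned into rough numbers with a short sketch. It uses the Llama 2 7B generation rates from the table to compute the speedup of each format relative to F16 and the time to produce a reply. The 200-token reply length and the helper names `speedup_over` and `reply_seconds` are assumptions for illustration only.

```python
# Llama 2 7B generation rates (tokens/second) from the table above.
GENERATION_TPS = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def speedup_over(baseline, rates):
    """Return each format's generation rate as a multiple of the baseline's."""
    base = rates[baseline]
    return {name: tps / base for name, tps in rates.items()}


def reply_seconds(reply_tokens, tokens_per_second):
    """Estimate the seconds needed to generate a reply at a constant rate."""
    return reply_tokens / tokens_per_second


if __name__ == "__main__":
    reply_tokens = 200  # hypothetical reply length
    for name, factor in speedup_over("F16", GENERATION_TPS).items():
        seconds = reply_seconds(reply_tokens, GENERATION_TPS[name])
        print(f"{name}: {factor:.2f}x F16, about {seconds:.1f} s per reply")
```

On these figures, Q4_0 generates a little over two and a half times as fast as F16 on the same model.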

Quantization: Making LLMs More Accessible

Quantization is a technique used to optimize LLMs by reducing their size and improving their efficiency. Imagine compressing a large file to make it easier to share and store - quantization does something similar to LLM models. It involves reducing the precision of the numbers (weights) that represent the model's parameters.

Think of it like measuring with a ruler that has coarser markings: you get slightly less precise measurements, but they are quicker to take and shorter to write down.
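
For readers who want to see the idea in code, here is a simplified sketch of 8-bit block quantization: each block of weights shares one floating-point scale, and every weight is stored as a small integer. It is loosely modeled on how formats like Q8_0 are usually described, but the block size of 32, the function names, and the rounding scheme are assumptions for illustration, not the exact implementation behind the benchmarks in this article.

```python
import random

BLOCK_SIZE = 32  # assumption: weights are quantized in fixed-size blocks


def quantize_block(weights):
    """Return (scale, ints): one shared scale plus integers in [-127, 127]."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127
    if scale == 0:
        scale = 1.0  # all-zero (or vanishingly small) block: any scale works
    return scale, [round(w / scale) for w in weights]


def dequantize_block(scale, quants):
    """Recover approximate float weights from a quantized block."""
    return [q * scale for q in quants]


def quantize(weights):
    """Split a flat list of weights into blocks and quantize each one."""
    return [
        quantize_block(weights[start:start + BLOCK_SIZE])
        for start in range(0, len(weights), BLOCK_SIZE)
    ]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.uniform(-1, 1) for _ in range(64)]  # toy "model"
    blocks = quantize(weights)
    restored = [x for scale, qs in blocks for x in dequantize_block(scale, qs)]
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{len(blocks)} blocks, worst rounding error {worst:.5f}")
```

Each restored weight differs from the original by at most half of its block's scale, which is the "slightly less precise measurement" from the ruler analogy.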

Lower-Bit Quantization: Faster but Potentially Less Accurate

Lower-bit formats, like Q8_0 and Q4_0, significantly reduce the storage space required for the model. In the results above this translates to faster token generation, since there is less weight data to read for each token. However, there's a trade-off: using fewer bits per weight can introduce some accuracy loss.

Higher Precision: Greater Accuracy but More Demanding

Higher-precision formats, like F16, store each weight with more bits, potentially leading to better accuracy in the results. The trade-off is a larger model, which slows token generation: on the M3 Max, Llama 2 7B generates 25.09 tokens per second at F16 versus 66.31 at Q4_0.

The choice of quantization level involves balancing speed, accuracy, and the complexity of the specific LLM task you want to accomplish.
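
As a rough guide to the size side of this trade-off, the sketch below estimates how much space the weights of a 7-billion-parameter model take in each format. The bits-per-weight values are assumptions: 16 for F16, and 8.5 and 4.5 for Q8_0 and Q4_0 on the premise that each block of 32 weights also stores a 16-bit scale. The parameter count is rounded, so treat the results as ballpark figures.

```python
# Assumed average storage cost per weight, in bits (see the note above).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def approx_weight_gb(n_params, quant):
    """Estimate weight storage in gigabytes (10**9 bytes) for a given format."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


if __name__ == "__main__":
    n_params = 7e9  # rounded parameter count for a "7B" model
    for quant in BITS_PER_WEIGHT:
        print(f"{quant}: about {approx_weight_gb(n_params, quant):.1f} GB")
```

The estimate covers the weights only; a running model also needs memory for its context and working buffers.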

M3 Max: A Good Choice for AI Developers?

The Apple M3 Max is a powerful chip with impressive performance for running LLMs, especially smaller models. However, the performance drops significantly with larger models like Llama 3 70B. This is a common issue, and it is not specific to the M3 Max, as even powerful GPUs struggle with these larger models. For AI developers working with smaller LLMs, the M3 Max can be an excellent choice. It offers:

- Fast prompt processing: The M3 Max processes prompts at roughly 680 to 780 tokens per second for the 7B and 8B models tested, which keeps the wait before the first generated token short.

- Efficient token generation: The M3 Max's ability to generate tokens quickly makes it suitable for real-time applications like chatbots and text generation.

- Apple Ecosystem Integration: For developers already invested in the Apple ecosystem, the M3 Max offers seamless integration and compatibility with existing tools and frameworks.

FAQ:

1. What is an LLM, and why should I care?

An LLM is a powerful type of artificial intelligence model capable of generating text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. It's like having a super-smart assistant who can handle a wide range of tasks.

2. What are the advantages of running LLMs locally?

Running LLMs locally on your device provides several advantages:

- Privacy: Your data stays on your device, ensuring greater privacy control.

- Faster response times: You don't need to wait for data to be transmitted to a remote server, leading to quicker interactions.

- Offline access: You can access and use the LLM even without an internet connection.

3. What are the best LLM models to use with the M3 Max?

The best LLM model for you depends on your specific project needs and resources available. For fast and efficient performance, the M3 Max excels with smaller models like Llama 2 7B. However, if you require the capabilities of a larger model like Llama 3 70B, you might need to consider a more powerful GPU for optimal results.

4. How do I choose the right quantization level for my project?

The choice of quantization level depends on the trade-off between speed, accuracy, and model size. For real-time applications like chatbots, the faster generation of lower-bit formats such as Q4_0 might be preferred, even with a slight accuracy reduction. If you need the highest possible accuracy, a higher-precision format such as Q8_0 or F16 would be a better choice.
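
One way to apply this rule of thumb is to pick the highest-precision format that still meets a minimum generation speed. The sketch below does that with the Llama 2 7B figures from this article; the helper name `pick_quantization` and the example thresholds are hypothetical, and a real decision should also weigh measured accuracy on your own task.

```python
# Llama 2 7B formats ordered from highest to lowest precision,
# with generation rates (tokens/second) from this article.
LLAMA2_7B_GENERATION = [("F16", 25.09), ("Q8_0", 42.75), ("Q4_0", 66.31)]


def pick_quantization(min_tokens_per_second, options=LLAMA2_7B_GENERATION):
    """Return the highest-precision format meeting the speed target, or None."""
    for name, tokens_per_second in options:
        if tokens_per_second >= min_tokens_per_second:
            return name
    return None


if __name__ == "__main__":
    for target in (20, 30, 50, 100):  # hypothetical speed targets
        print(f"need {target} tok/s -> {pick_quantization(target)}")
```

A result of `None` means no tested format is fast enough, in which case a smaller model or different hardware is worth considering.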

5. Do I need a dedicated GPU to run LLMs effectively?

While the M3 Max chip is powerful, a dedicated GPU can provide significantly better performance, especially for larger LLMs. GPUs are designed for parallel processing, which is essential for handling the complex computations involved in running LLMs.

Keywords:

M3 Max, Apple, MacBook, AI, LLMs, Large Language Models, Llama 2, Llama 3, Token Processing, Token Generation, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Performance, Speed, GPU, Developer, Inference, Local, Offline, Privacy, Accuracy, Trade-offs, Apple Ecosystem, AI Development