Best Mac for AI Developers: Is the Apple M2 Ultra Right for You?

Introduction

AI development is booming, and large language models (LLMs) like Llama 2 and Llama 3 are becoming increasingly powerful and popular. Running these LLMs locally can be a challenge, though, because inference demands a lot of memory and compute. For many developers, the Apple M2 Ultra chip has become an attractive option thanks to its performance and power efficiency.

This article delves into the capabilities of the Apple M2 Ultra for AI development, with a specific focus on its performance with various Llama models. We'll analyze the performance benchmarks and help you determine if the M2 Ultra is the right choice for your AI development needs.

Apple M2 Ultra: An AI Powerhouse?

The Apple M2 Ultra is a beast of a chip, combining two M2 Max dies into a single package. With up to 76 GPU cores, up to 192 GB of unified memory, and 800 GB/s of memory bandwidth, it's designed to handle demanding tasks like video editing, 3D rendering, and, of course, AI development.

But how does it stack up against the competition when it comes to running LLMs locally? Let's dive into the numbers.

Performance Benchmarks: Llama 2 & Llama 3 on M2 Ultra

For our analysis, we'll be looking at token speed benchmarks for Llama 2 and Llama 3 models on the M2 Ultra. Each benchmark reports two numbers in tokens per second: processing speed (how quickly the device reads the input prompt) and generation speed (how quickly it produces new output). Tokens are the basic units of text in LLMs.
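To make the "tokens per second" unit concrete, here is a minimal sketch of how such a rate can be measured. It is not the harness behind the numbers in this article; `tokens_per_second` and `fake_model` are hypothetical names, and `fake_model` only stands in for a real model by yielding tokens with a small delay.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(produce_tokens: Callable[[], Iterable[object]]) -> tuple[int, float]:
    """Count the tokens yielded by produce_tokens() and divide by wall-clock time."""
    start = time.perf_counter()
    count = sum(1 for _ in produce_tokens())
    elapsed = time.perf_counter() - start
    return count, count / elapsed


def fake_model() -> Iterable[str]:
    # Hypothetical stand-in for a real model: emits 20 tokens, about 5 ms apart.
    for i in range(20):
        time.sleep(0.005)
        yield f"tok{i}"


count, rate = tokens_per_second(fake_model)
print(f"{count} tokens at {rate:.1f} tokens/second")
```

In a real measurement, the callable would wrap the model's streaming output, and the prompt-processing and generation phases would be timed separately.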

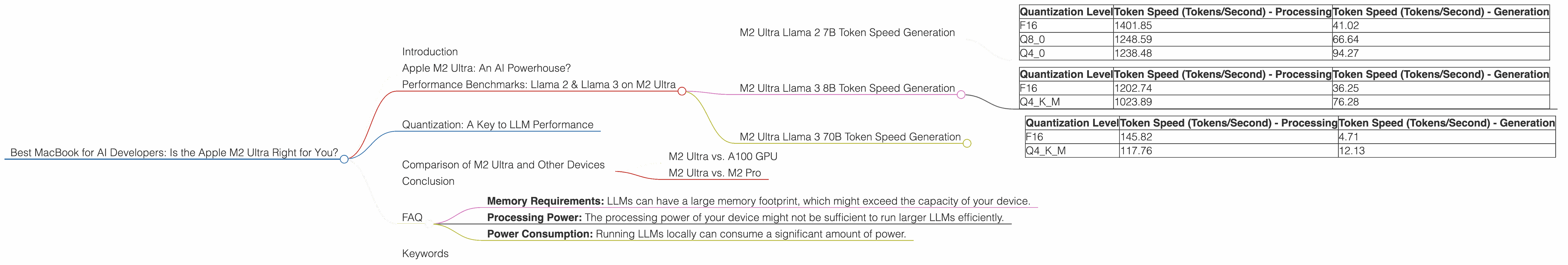

M2 Ultra Llama 2 7B Token Speed Generation

The Apple M2 Ultra performs very well with the Llama 2 7B model. The table below covers three precision levels to show how quantization affects speed.

| Quantization Level | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| F16 | 1401.85 | 41.02 |
| Q8_0 | 1248.59 | 66.64 |
| Q4_0 | 1238.48 | 94.27 |

As you can see, the M2 Ultra excels at processing tokens, reaching just over 1,400 tokens per second at F16 (the half-precision baseline). Processing speed changes relatively little across the three levels, staying between roughly 1,240 and 1,400 tokens per second.

Generation speed, however, depends heavily on the quantization level. Q4_0 reaches an impressive 94 tokens per second, while F16 manages only about 41 tokens per second.
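The effect of quantization is easier to see as a ratio against the F16 baseline. The short sketch below only rearranges the numbers from the table above; the function name `relative_to_f16` is made up for illustration.

```python
# Speeds in tokens/second for Llama 2 7B, copied from the table above.
processing_tps = {"F16": 1401.85, "Q8_0": 1248.59, "Q4_0": 1238.48}
generation_tps = {"F16": 41.02, "Q8_0": 66.64, "Q4_0": 94.27}


def relative_to_f16(speeds: dict[str, float]) -> dict[str, float]:
    """Express every speed as a multiple of the F16 speed."""
    baseline = speeds["F16"]
    return {level: round(tps / baseline, 2) for level, tps in speeds.items()}


processing_speedup = relative_to_f16(processing_tps)
generation_speedup = relative_to_f16(generation_tps)

for level in generation_tps:
    print(f"{level}: processing x{processing_speedup[level]}, "
          f"generation x{generation_speedup[level]}")
```

Generation at Q4_0 comes out at about 2.3 times the F16 rate, while processing at Q4_0 is slightly slower than at F16 (about 0.88 times).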

M2 Ultra Llama 3 8B Token Speed Generation

Let's move on to Llama 3, a newer and more powerful model. The following table showcases the M2 Ultra performance for the Llama 3 8B model, again with varying quantization levels.

| Quantization Level | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| F16 | 1202.74 | 36.25 |
| Q4_K_M | 1023.89 | 76.28 |

The M2 Ultra also performs well with the Llama 3 8B model, with processing speeds of over 1,200 tokens per second at F16. That is only about 14% below the Llama 2 7B figure, despite the slightly larger model.

As with Llama 2 7B, generation speed varies across quantization levels. Q4_K_M yields about 76 tokens per second, while F16 reaches a respectable 36 tokens per second.

M2 Ultra Llama 3 70B Token Speed Generation

Now let's push the M2 Ultra to its limits with the Llama 3 70B model. This is a very large model, and it's interesting to see how it performs.

| Quantization Level | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| F16 | 145.82 | 4.71 |
| Q4_K_M | 117.76 | 12.13 |

While the M2 Ultra still manages to process tokens at a decent speed, performance dips dramatically compared to the smaller models. At F16, the M2 Ultra processes about 146 tokens per second, roughly eight to ten times slower than with the Llama 3 8B and Llama 2 7B models.

Token generation also suffers, with F16 barely exceeding 4 tokens per second and Q4_K_M reaching just over 12 tokens per second.
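To translate these rates into waiting time, the sketch below estimates how long a single response would take with Llama 3 70B. The workload (a 512-token prompt and a 128-token answer) is a hypothetical example, and the calculation assumes the benchmark rates stay constant regardless of context length, which is a simplification.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Time to read the prompt plus time to generate the answer."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 3 70B rates (tokens/second) from the table above.
rates_70b = {
    "F16": (145.82, 4.71),
    "4-bit": (117.76, 12.13),
}

# Hypothetical workload: 512-token prompt, 128-token answer.
for level, (processing_tps, generation_tps) in rates_70b.items():
    seconds = response_seconds(512, 128, processing_tps, generation_tps)
    print(f"{level}: about {seconds:.1f} s per response")
```

Under these assumptions, the 4-bit model answers in roughly 15 seconds and the F16 model in roughly 31 seconds, with most of that time spent on generation rather than prompt processing.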

Quantization: A Key to LLM Performance

Before moving on, let's understand the concept of quantization. It's a powerful technique used to reduce the memory footprint and improve the processing speed of LLMs.

Think of it like this: imagine having a very detailed map of a city, with every building, road, and tree meticulously drawn. It's incredibly detailed, but also very large and cumbersome. Quantization is like simplifying that map by removing some of the finer details, like the specific color of each house or the width of each sidewalk. The resulting map is smaller and easier to use, even though it might lose a little bit of precision.

In the context of LLMs, quantization involves reducing the precision of the model's weights, the numerical parameters that determine the model's behavior. This reduction in precision allows you to fit the model on devices with less memory and achieve faster processing speeds.
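As a rough illustration of the idea, the sketch below quantizes a handful of weights to 8-bit integers with a single shared scale and then restores them. This is a simplified scheme chosen for clarity, not the exact format used by any particular inference library, and the weight values are invented.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    # The largest magnitude maps to 127; fall back to 1.0 if all weights are zero.
    scale = max(abs(w) for w in weights) / 127 or 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.42, -1.30, 0.07, 0.88, -0.51]  # hypothetical example values
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("stored integers:", quantized)
print(f"largest round-trip error: {max_error:.5f}")
```

Each weight now needs one byte instead of two (F16) or four (F32), at the cost of a round-trip error of at most half the scale per weight.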

The different quantization levels (F16, Q8_0, Q4_0, Q4_K_M) represent different levels of precision. F16 uses half-precision floating point numbers, Q8_0 uses 8-bit integers, Q4_0 uses 4-bit integers, and Q4_K_M uses a more specialized 4-bit quantization scheme. As you move down the list, precision decreases, but the model becomes smaller and generates tokens faster. In the benchmarks above, prompt processing does not follow the same pattern: it is slightly slower at the quantized levels than at F16.
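A back-of-the-envelope estimate shows why this matters for memory. The sketch below assumes the llama.cpp-style block layouts these names usually refer to, where Q8_0 and Q4_0 store one 16-bit scale per block of 32 weights (8.5 and 4.5 bits per weight on average). It uses nominal parameter counts and ignores the KV cache and runtime overhead. The 4-bit K-quant variant is left out because it mixes block types and has no single fixed size per weight.

```python
# Average bits per weight, assuming llama.cpp-style blocks of 32 weights
# that each carry one 16-bit scale (an assumption, not stated in the article).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Nominal parameter counts for the models discussed in this article.
MODELS = {"Llama 2 7B": 7e9, "Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_gigabytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for model, n_params in MODELS.items():
    sizes = ", ".join(
        f"{level}: {weight_gigabytes(n_params, bits):.1f} GB"
        for level, bits in BITS_PER_WEIGHT.items()
    )
    print(f"{model} -> {sizes}")
```

By this estimate, Llama 3 70B needs about 140 GB for its weights at F16 but under 40 GB at Q4_0, which is the difference between needing a top-end memory configuration and fitting comfortably on a mid-range one.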

Comparison of M2 Ultra and Other Devices

The M2 Ultra's numbers are strong on their own, but it's important to see how they compare with other devices.

M2 Ultra vs. A100 GPU

The NVIDIA A100 GPU is often considered the gold standard for AI development and is widely used in data centers and high-performance computing. We don't have Llama 2 or Llama 3 benchmarks for the A100 in the exact same configuration, so a direct comparison isn't possible, but some rough comparisons are still useful.

For example, the A100 has been reported to process over 1,500 tokens per second with the Llama 2 13B model. Since that is a larger model than Llama 2 7B and the rate is still higher than the M2 Ultra's roughly 1,400 tokens per second, the A100 would likely outperform the M2 Ultra on Llama 2 7B as well. However, the A100 is a more expensive and power-hungry solution, which makes the M2 Ultra a more attractive option for those who value efficiency.

M2 Ultra vs. M2 Pro

The M2 Pro is a capable chip, but it falls short of the M2 Ultra. It has fewer CPU and GPU cores and lower memory bandwidth, and both affect LLM performance.

We don't list M2 Pro benchmark figures here, but the M2 Pro does not reach the M2 Ultra's processing speed with the Llama 2 7B model. The gap is likely due to the M2 Pro's lower core count, which limits how quickly it can process prompt tokens, while its lower memory bandwidth would be expected to limit generation speed.
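One hedged way to reason about the bandwidth side of this gap is a simple upper bound: if generating each token requires reading every weight from memory once, generation speed cannot exceed memory bandwidth divided by model size. The sketch below applies that rule of thumb. The bandwidth figures (800 GB/s for the M2 Ultra, 200 GB/s for the M2 Pro) are Apple's published peak specifications and are not taken from the benchmarks in this article, and the model size uses a nominal 7 billion parameters at 2 bytes each.

```python
def max_generation_tps(bandwidth_gb_per_s: float, model_gb: float) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gb_per_s / model_gb


# Assumption: nominal 7e9 parameters stored as F16 (2 bytes each).
llama2_7b_f16_gb = 7e9 * 2 / 1e9

# Assumption: peak memory bandwidth from Apple's published specifications.
ultra_bound = max_generation_tps(800, llama2_7b_f16_gb)
pro_bound = max_generation_tps(200, llama2_7b_f16_gb)

measured_ultra = 41.02  # Llama 2 7B F16 generation speed from this article

print(f"M2 Ultra bound: {ultra_bound:.1f} tokens/s "
      f"(measured {measured_ultra}, {measured_ultra / ultra_bound:.0%} of bound)")
print(f"M2 Pro bound:   {pro_bound:.1f} tokens/s")
```

The bound is only a rough guide, but it suggests that an M2 Pro could generate at most about a quarter as many tokens per second as an M2 Ultra on the same model, purely because of memory bandwidth.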

Conclusion

The Apple M2 Ultra is a powerful chip that can handle the demands of running large language models locally. It offers impressive processing performance and a decent level of token generation for smaller models like the Llama 2 7B and Llama 3 8B. However, its performance with larger models like the Llama 3 70B drops significantly, highlighting the limitations of even the most powerful consumer-grade devices when dealing with very large models.

If you're a developer who needs to run LLMs locally, the M2 Ultra is a powerful tool to consider. Just be aware of its limitations, and choose your model size and quantization level based on the response speed you need.

FAQ

Q: What is quantization and how does it affect LLM performance?

A: Quantization is a technique that reduces the precision of the model's weights, the numerical parameters that determine the model's behavior. This reduction in precision allows you to fit the model on devices with less memory and generate tokens faster. Levels like F16, Q8_0, Q4_0, and Q4_K_M represent different levels of precision, with lower precision leading to smaller models and faster generation.

Q: Can I run LLMs locally on a Mac with an M2 Ultra chip?

A: Yes, the M2 Ultra is capable of running LLMs locally. However, the performance depends on the size and complexity of the model.

Q: Are there other devices besides the M2 Ultra that can run LLMs locally?

A: Yes, there are a variety of devices that can run LLMs locally. The M2 Ultra is just one of many options, and the best choice for you depends on your specific requirements and budget.

Q: What are the limitations of running LLMs locally?

A: Running LLMs locally can be resource-intensive, requiring powerful hardware to handle the processing demands. Some limitations include:

- Memory Requirements: LLMs can have a large memory footprint, which might exceed the capacity of your device.

- Processing Power: The processing power of your device might not be sufficient to run larger LLMs efficiently.

- Power Consumption: Running LLMs locally can consume a significant amount of power.

Keywords

Apple M2 Ultra, AI, AI Development, Large Language Models (LLMs), Llama 2, Llama 3, Token Speed Generation, Performance Benchmarks, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Processing Speed, Inference, GPU, Apple M2 Pro, A100 GPU, Mac, Local LLMs