Best MacBook for AI Developers: Is the Apple M2 Right for You?

The world of AI is buzzing with excitement, particularly with the rise of large language models (LLMs) like GPT-3 and ChatGPT. But what if you want to explore LLMs locally, without relying on cloud services? This is where your trusty MacBook comes in, and the Apple M2 chip might be the key to unlocking the power of AI on your desktop.

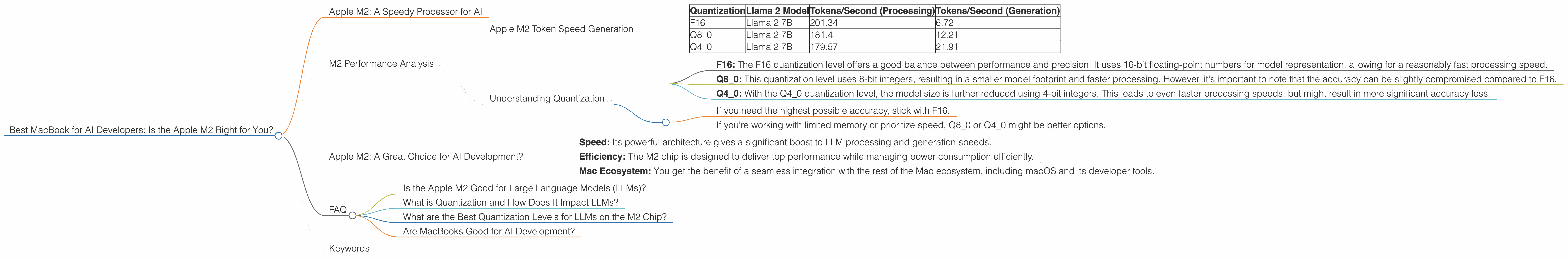

This article looks at how well the Apple M2 chip runs LLMs. We'll analyze specific benchmarks for the Llama 2 7B model, comparing performance across three precision levels (F16, Q8_0, Q4_0) and highlighting the impact on token generation speed. By the end, you'll have a better understanding of whether the Apple M2 is the right choice for your LLM development journey.

Apple M2: A Speedy Processor for AI

The Apple M2 chip is designed for demanding tasks, and running LLMs is certainly one of them. But how does it perform in practice? Let's dive into the numbers to find out.

M2 Performance Analysis

We'll focus on the Llama 2 model, a popular choice for developers exploring local LLM processing. All figures below are for the 7B variant, measured at three precision levels.

Apple M2 Token Generation Speed

The following table summarizes token throughput on the M2 chip for different Llama 2 setups:

| Quantization | Llama 2 Model | Prompt Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|
| F16 | Llama 2 7B | 201.34 | 6.72 |
| Q8_0 | Llama 2 7B | 181.4 | 12.21 |
| Q4_0 | Llama 2 7B | 179.57 | 21.91 |

Note: Unfortunately, we have no data for Llama 2 models other than 7B on the M2 chip.

As you can see, performance on Llama 2 7B varies significantly with the quantization level. Generation speed rises from 6.72 tokens/second at F16 to 21.91 at Q4_0, roughly a 3.3x increase, while prompt processing slows by about 11% (201.34 to 179.57 tokens/second).
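To make the comparison concrete, here is a minimal sketch that expresses each row of the table as a multiple of the F16 baseline. The throughput numbers are copied from the table; the dictionary layout and the `relative_to_f16` helper are illustrative names, not part of any benchmark tool.

```python
# Throughput figures copied from the table above (Llama 2 7B on Apple M2).
# "processing" and "generation" are tokens per second.
benchmarks = {
    "F16": {"processing": 201.34, "generation": 6.72},
    "Q8_0": {"processing": 181.40, "generation": 12.21},
    "Q4_0": {"processing": 179.57, "generation": 21.91},
}


def relative_to_f16(metric: str) -> dict[str, float]:
    """Return each level's throughput as a multiple of the F16 figure."""
    baseline = benchmarks["F16"][metric]
    return {
        name: round(row[metric] / baseline, 2)
        for name, row in benchmarks.items()
    }


if __name__ == "__main__":
    print("generation:", relative_to_f16("generation"))
    print("processing:", relative_to_f16("processing"))
```

Values above 1.0 mean faster than F16 and values below 1.0 mean slower, so the two printed lines show at a glance that the gain is in generation, not in prompt processing.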

Understanding Quantization

Quantization is a technique used to compress LLMs, reducing the size of the model file without sacrificing too much accuracy. Think of it like compressing a photo: you reduce its size to make it easier to store and share, but there might be a slight loss of quality. Similarly, quantization stores each model weight with fewer bits. A smaller model means fewer bytes to read from memory for every generated token, which is why the quantized variants generate faster in the table above, at the cost of some potential accuracy.

- F16: Strictly speaking, this is the half-precision baseline rather than a quantized format. It stores weights as 16-bit floating-point numbers, which preserves the most precision of the three but gives the largest model and the slowest generation in the table above (6.72 tokens/second).

- Q8_0: This quantization level uses 8-bit integers, resulting in a smaller model footprint and faster generation (12.21 tokens/second). However, accuracy can be slightly compromised compared to F16.

- Q4_0: With the Q4_0 quantization level, the model size is further reduced using 4-bit integers. This gives the fastest generation of the three (21.91 tokens/second), but might result in more significant accuracy loss.
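The trade-off described in this list can be seen in a small round-trip experiment. The sketch below is a simplified illustration, not the exact on-disk layout used by any particular runtime: it assumes weights are split into blocks of 32, that each block shares one scale factor, and that values are rounded to signed 8-bit or 4-bit integers. The function names and the random test weights are hypothetical.

```python
import random

BLOCK_SIZE = 32  # assumed block length for this illustration


def quantize_block(weights: list[float], bits: int) -> tuple[float, list[int]]:
    """Map one block of floats to signed integers that share a single scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the block scale."""
    return [scale * q for q in quants]


def mean_abs_error(weights: list[float], bits: int) -> float:
    """Average absolute difference after a quantize/dequantize round trip."""
    total = 0.0
    for start in range(0, len(weights), BLOCK_SIZE):
        block = weights[start:start + BLOCK_SIZE]
        scale, quants = quantize_block(block, bits)
        restored = dequantize_block(scale, quants)
        total += sum(abs(a - b) for a, b in zip(block, restored))
    return total / len(weights)


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical weights: small values centred on zero.
    weights = [rng.gauss(0.0, 0.02) for _ in range(4096)]
    for bits in (8, 4):
        print(f"{bits}-bit mean absolute error: {mean_abs_error(weights, bits):.6f}")
```

With fewer bits there are fewer integer steps per block, so the 4-bit round trip loses more detail than the 8-bit one. That is the accuracy cost paid for the smaller, faster model.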

The choice of quantization level depends on your specific priorities:

- If you need the highest possible accuracy, stick with F16.

- If you're working with limited memory or prioritize generation speed, Q8_0 or Q4_0 might be better options.
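For the memory side of that decision, a rough estimate of weight storage can help. The sketch below rests on several assumptions that are not from the benchmark: a nominal 7 billion parameters, an average of 16, 8.5, and 4.5 bits per weight for F16, Q8_0, and Q4_0 (the extra half bit accounts for a 16-bit scale stored per block of 32 weights), and a hypothetical 4 GB of headroom for the operating system and runtime. The memory sizes in the usage loop are examples only.

```python
PARAMS = 7_000_000_000  # nominal "7B"; the exact parameter count differs slightly

# Assumed average bits stored per weight, including per-block scale overhead.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_gigabytes(params: int, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def fits(params: int, bits_per_weight: float, memory_gb: float,
         reserve_gb: float = 4.0) -> bool:
    """True if the weights plus a hypothetical reserve fit in memory_gb."""
    return weight_gigabytes(params, bits_per_weight) + reserve_gb <= memory_gb


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        size = weight_gigabytes(PARAMS, bits)
        verdicts = {gb: fits(PARAMS, bits, gb) for gb in (8, 16, 24)}
        print(f"{name}: about {size:.1f} GB of weights, fits: {verdicts}")
```

This ignores the context cache and any other applications you have open, so treat a borderline result as a "no".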

Apple M2: A Great Choice for AI Development?

The Apple M2 chip demonstrates impressive capabilities for running LLMs, particularly when considering the Llama 2 7B model. It offers a solid balance between performance and efficiency, allowing for a seamless local development experience.

Here are some key advantages of using the M2 chip:

- Speed: With Q4_0 quantization, the M2 generates 21.91 tokens/second on Llama 2 7B and processes prompts at around 180 tokens/second.

- Efficiency: The M2 chip is designed to deliver top performance while managing power consumption efficiently.

- Mac Ecosystem: You get seamless integration with the rest of the Mac ecosystem, including macOS and its developer tools.

So, if you're looking for a MacBook specifically for AI development and you are focusing on the Llama 2 7B model, the Apple M2 is a strong contender.
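To get a feel for what these rates mean for a single request, the following sketch estimates response time as prompt tokens divided by the processing rate plus output tokens divided by the generation rate. It assumes both rates stay constant regardless of context length, which is a simplification, and the 512-token prompt and 128-token reply are hypothetical example sizes. The rates themselves are copied from the benchmark table earlier in the article.

```python
# (processing tokens/s, generation tokens/s) for Llama 2 7B on Apple M2,
# copied from the benchmark table in this article.
RATES = {
    "F16": (201.34, 6.72),
    "Q8_0": (181.40, 12.21),
    "Q4_0": (179.57, 21.91),
}


def estimate_seconds(processing_rate: float, generation_rate: float,
                     prompt_tokens: int, output_tokens: int) -> float:
    """Rough wall-clock estimate: time to read the prompt plus time to reply."""
    return prompt_tokens / processing_rate + output_tokens / generation_rate


if __name__ == "__main__":
    prompt_tokens, output_tokens = 512, 128  # hypothetical request size
    for name, (processing, generation) in RATES.items():
        seconds = estimate_seconds(processing, generation,
                                   prompt_tokens, output_tokens)
        print(f"{name}: about {seconds:.1f} s")
```

Because generation is so much slower than prompt processing at every level, the reply length dominates the estimate, which is why the quantized formats feel noticeably more responsive in interactive use.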

FAQ

Is the Apple M2 Good for Large Language Models (LLMs)?

The Apple M2 chip demonstrates good performance for running LLMs like Llama 2 7B. However, performance varies with the chosen quantization level. F16 preserves the most precision but generates the slowest (6.72 tokens/second), while Q8_0 and Q4_0 generate faster (12.21 and 21.91 tokens/second) with potential trade-offs in accuracy.

What is Quantization and How Does It Impact LLMs?

Quantization is a technique used to compress LLMs, reducing their size without sacrificing too much accuracy. It involves representing model weights with less precision, typically using smaller numerical formats such as 8-bit or 4-bit integers. Quantization can significantly boost generation speed while reducing memory requirements, but it might lead to some loss of accuracy.

What are the Best Quantization Levels for LLMs on the M2 Chip?

The best quantization level for LLMs on the M2 chip depends on your specific needs. If you prioritize accuracy, F16 is a good choice. If you're working with limited memory or prioritize generation speed, Q8_0 or Q4_0 might be more suitable.

Are MacBooks Good for AI Development?

MacBooks are a great option for AI developers, offering a blend of performance, portability, and a robust development ecosystem. The Apple M series chips are especially well-suited for running LLMs, providing efficient processing power for demanding AI tasks.

Keywords

MacBook, Apple M2, AI, LLM, Large Language Model, Llama 2, Llama 2 7B, Token Speed, Quantization, F16, Q8_0, Q4_0, AI Development, Performance, Efficiency, Mac Ecosystem, Developer Tools, AI-Specific, Machine Learning, Deep Learning, Neural Networks, AI Hardware