Best MacBook for AI Developers: Is the Apple M2 Max Right for You?

Introduction

Are you an AI developer tired of waiting for your models to chug along on your old laptop? Do you crave the power to unleash the full potential of large language models (LLMs) right on your desktop? Look no further! This article dives into the world of Apple's M2 Max chip and its capabilities for running LLMs locally, focusing on the beloved Llama 2 model.

We'll explore the performance of the M2 Max with various Llama 2 configurations, providing you with the information you need to choose the right MacBook for your AI development needs. So, grab your favorite caffeinated beverage and let's embark on this exciting journey!

The M2 Max: A Powerhouse for AI Development

The Apple M2 Max chip is a beast in the world of processors, pairing a 12-core CPU and up to a 38-core GPU with 400 GB/s of unified memory bandwidth. But how does this translate to real-world performance for AI developers? Let's find out by analyzing how it handles the Llama 2 model.

Understanding Llama 2 Models

Llama 2 is a powerful open-source LLM developed by Meta. It comes in various sizes, from the smaller 7B parameter model to the massive 70B parameter model. Larger models generally produce better output, but they need more memory and generate text more slowly. Think of it like comparing a small, quick car to a huge, powerful truck; both have their strengths, but the task determines which one's better suited.

M2 Max Performance with Llama 2: A Deep Dive

To understand the M2 Max's prowess, we need to go beyond just processing speed. We'll analyze its performance across different Llama 2 configurations, taking into account processing speed, generation speed, and quantization levels. Think of quantization as a way to compress the model, making it smaller and faster, but potentially sacrificing a little accuracy.

Token Speed Generation: Unleashing the Power

Imagine generating a text stream from your LLM like a river flowing smoothly. Token generation speed is how fast those words flow.

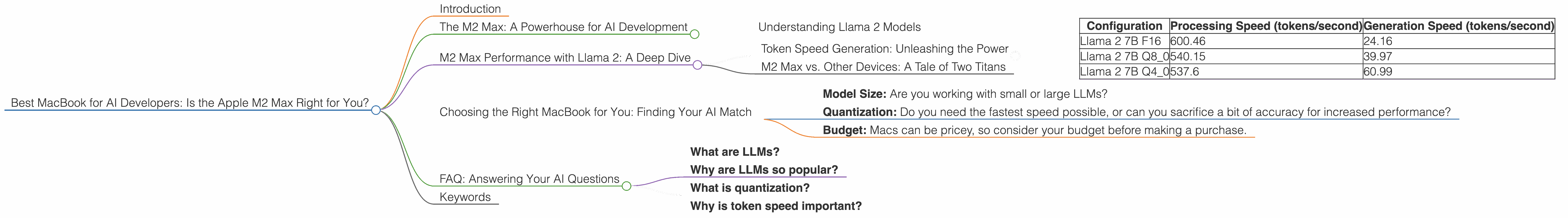

Table 1: Token Speed Generation on the M2 Max with Llama 2

| Configuration | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| Llama 2 7B F16 | 600.46 | 24.16 |
| Llama 2 7B Q8_0 | 540.15 | 39.97 |
| Llama 2 7B Q4_0 | 537.6 | 60.99 |

Key Takeaways:

- Quantization Matters: As we move from F16 (float16) to Q8_0 and Q4_0, generation speed climbs from 24.16 to 39.97 and then 60.99 tokens/second, while processing speed dips by about 10%, from 600.46 to roughly 540 tokens/second.

- Smaller Models, Faster Results: The 7B Llama 2 model benefits greatly from quantization, which shows how much the choice of configuration matters for your AI needs.
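
To put numbers on these takeaways, here is a minimal Python sketch that expresses each configuration's throughput as a multiple of the F16 baseline. The figures are copied from Table 1; the names `BENCHMARKS` and `relative_to_f16` are made up for this illustration.

```python
# Throughput figures copied from Table 1 (tokens/second).
BENCHMARKS = {
    "F16": {"processing": 600.46, "generation": 24.16},
    "Q8_0": {"processing": 540.15, "generation": 39.97},
    "Q4_0": {"processing": 537.6, "generation": 60.99},
}


def relative_to_f16(metric: str) -> dict[str, float]:
    """Return each configuration's throughput as a multiple of the F16 value."""
    baseline = BENCHMARKS["F16"][metric]
    return {name: row[metric] / baseline for name, row in BENCHMARKS.items()}


if __name__ == "__main__":
    for metric in ("processing", "generation"):
        for name, ratio in relative_to_f16(metric).items():
            print(f"{metric:>10} {name:<5} {ratio:.2f}x")
```

Run against the table, generation speed works out to roughly 1.65x at Q8_0 and 2.52x at Q4_0, while processing speed sits at about 0.90x for both quantized formats.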

M2 Max vs. Other Devices: A Tale of Two Titans

While the M2 Max shines in performance, it's always good to have a benchmark.

Note: We don't have benchmark data for other devices, so we can't provide a direct comparison here.

Choosing the Right MacBook for You: Finding Your AI Match

Now that we've explored the M2 Max's capabilities, it's time to find the perfect MacBook for your specific AI development needs.

Factors to Consider:

- Model Size: Are you working with small or large LLMs?

- Quantization: Do you need the highest accuracy possible, or can you sacrifice a bit of accuracy for faster generation?

- Budget: Macs can be pricey, so consider your budget before making a purchase.
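
Model size and quantization together decide how much memory the weights need, which in turn decides which MacBook configuration can hold the model at all. The sketch below is a rough estimate, not a measurement. It assumes nominal parameter counts (7 and 70 billion) and a llama.cpp-style block layout in which 32 weights share one 16-bit scale, giving about 8.5 bits per weight for Q8_0 and 4.5 for Q4_0. It counts the weights only and ignores the context cache and runtime overhead. The names `BITS_PER_WEIGHT` and `weight_memory_gb` are hypothetical.

```python
# Approximate bits stored per weight. The Q8_0 and Q4_0 figures are an
# assumption based on a llama.cpp-style block layout: 32 weights share
# one 16-bit scale.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.0 + 16.0 / 32,  # 8.5 bits per weight
    "Q4_0": 4.0 + 16.0 / 32,  # 4.5 bits per weight
}


def weight_memory_gb(n_params: float, fmt: str) -> float:
    """Estimate memory for the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


if __name__ == "__main__":
    # Nominal parameter counts; the real models differ slightly.
    for label, n_params in (("7B", 7e9), ("70B", 70e9)):
        for fmt in BITS_PER_WEIGHT:
            size = weight_memory_gb(n_params, fmt)
            print(f"Llama 2 {label} {fmt}: ~{size:.1f} GB")
```

Under these assumptions the 7B model needs roughly 14 GB at F16 and about 4 GB at Q4_0, while the 70B model needs roughly 140 GB at F16 and about 39 GB at Q4_0, so memory capacity deserves as much attention as raw speed.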

Recommendation:

If you're planning on working with large LLMs like Llama 2 70B, the M2 Max is worth a serious look, but keep in mind that the benchmarks above cover only the 7B model. Performance with 70B is untested here, and you should expect to rely on quantization and a high-memory configuration. For those with smaller LLMs and a focus on efficiency, the M2 Max provides excellent performance, especially with quantization: Llama 2 7B reaches 60.99 tokens/second at Q4_0.

FAQ: Answering Your AI Questions

What are LLMs?

LLMs are large language models trained on massive datasets of text and code. They can perform various tasks like generating text, translating languages, and summarizing information. Think of them as super smart AI robots capable of understanding and responding to natural language.

Why are LLMs so popular?

LLMs are highly versatile and can be applied to various applications, including chatbots, content creation, and code generation. They are constantly advancing, pushing the boundaries of what's possible in AI.

What is quantization?

Quantization is a technique for compressing LLMs by reducing the precision of their weights, for example storing each weight in 8 or 4 bits instead of 16. It's like measuring with a ruler that has coarser markings: you sacrifice some accuracy but gain speed and efficiency.
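
To make the idea concrete, here is a simplified Python sketch of 8-bit quantization with one shared scale per block of weights. It is in the spirit of formats like Q8_0 but is not the actual llama.cpp implementation; the function names and the example weights are invented for illustration.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to 8-bit integers in [-127, 127] that share one scale."""
    max_abs = max(abs(w) for w in weights)
    # An all-zero block has no range to scale, so fall back to 1.0.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return scale, [round(w / scale) for w in weights]


def dequantize_block(scale: float, quantized: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    block = [0.12, -0.53, 0.91, -0.07]  # made-up example weights
    scale, quantized = quantize_block(block)
    restored = dequantize_block(scale, quantized)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print(quantized)
    print(f"largest rounding error: {worst:.5f}")
```

Each weight now fits in one byte plus a small share of the scale, and the rounding error per weight is at most half the scale. That small error is the accuracy you trade for a smaller, faster model.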

Why is token speed important?

Token speed determines how quickly your LLM can generate text. It’s a crucial metric for developers working on real-time applications like chatbots or live text generation.
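
As a back-of-the-envelope illustration, the sketch below estimates how long a single response takes from the two speeds in Table 1. It assumes both speeds stay constant regardless of prompt length and ignores model loading time. The 512-token prompt and 256-token reply are arbitrary example values, and `response_time_seconds` is a hypothetical helper.

```python
def response_time_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Time to read the prompt plus time to generate the reply, in seconds."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # Speeds copied from Table 1 (tokens/second); token counts are examples.
    f16 = response_time_seconds(512, 256, 600.46, 24.16)
    q4_0 = response_time_seconds(512, 256, 537.6, 60.99)
    print(f"Llama 2 7B F16:  {f16:.1f} s")
    print(f"Llama 2 7B Q4_0: {q4_0:.1f} s")
```

With these example numbers the estimate comes to about 11.4 seconds at F16 and about 5.1 seconds at Q4_0. Generation dominates the total in both cases, which is why generation speed matters most for chat-style applications.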

Keywords

M2 Max, MacBook, Apple, AI, LLM, Llama 2, developer, token speed, processing speed, generation speed, quantization, F16, Q8_0, Q4_0, performance, optimization, efficiency, budget, recommendation, FAQ, open-source, model size, comparison, benchmark, application, chatbots, content creation, code generation