Best MacBook for AI Developers: Is the Apple M1 Right for You?

Introduction

Artificial intelligence (AI) is moving fast, and large language models (LLMs) are at the forefront. LLMs are models that can generate human-like text, translate languages, write creative content, and answer questions. If you're an AI developer, you need a capable machine to train and run these models, and Macs built on the Apple M1 chip have become a popular choice. But is the M1 actually a good fit for your LLM projects? Let's dive into the specifics and find out!

Demystifying LLMs: What's the Deal with These Powerful AI Models?

Before we jump into the details of the M1 chip, let's quickly understand what LLMs are and why they're gaining so much attention. Imagine a computer program that learns from massive amounts of text data, like books, articles, and code. It can then use what it has learned to produce new text, translate, summarize, and answer questions. That's essentially what LLMs do.

Think of it as a super-smart assistant that can help you with a wide range of tasks. These LLMs are like the next generation of chatbots, but with significantly more advanced capabilities. They can understand context, generate creative text formats, and even write code.

The Apple M1 Chip: A Game Changer for Developers?

The Apple M1 chip has been hailed as a game-changer for developers, and for good reason. It's packed with impressive features that make it ideal for running demanding applications like AI models.

Here's what makes the M1 chip so alluring for developers:

- Integrated GPU: The M1 chip includes an integrated GPU with 7 or 8 cores, a crucial component for accelerating AI computations. GPUs are designed for parallel processing, which suits the large matrix calculations involved in running LLMs.

- Unified Memory Architecture: The M1's unified memory architecture lets the CPU and GPU share the same memory pool, so data does not have to be copied between separate CPU and GPU memory. This reduces transfer overhead for AI tasks.

- Energy Efficiency: The M1 chip is very energy-efficient for the performance it delivers. This means you can get fast performance without sacrificing battery life, making it a great option for developers working on the go.

Apple M1 Token Generation Speed: Comparing Performance with Different LLMs

Now, let's get down to brass tacks and see how the M1 chip handles various LLMs. We'll focus on token speed, a key metric for LLM performance. A token is an individual unit of text, and speed is measured in tokens per second. The tables below report two rates: processing speed, which is how quickly the model reads the input prompt, and generation speed, which is how quickly it produces new output tokens. Higher is better for both.
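To make these rates concrete, the sketch below estimates how long a single request might take, assuming the prompt is read at the processing rate and the reply is produced at the generation rate. The function name and the 512/256 token counts are illustrative assumptions; the two rates are the M1 (7 GPU cores) Llama 2 7B Q8_0 figures from the table below. It ignores model load time and any other overhead.

```python
def seconds_for_request(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough wall-clock estimate for one request.

    The prompt is assumed to be ingested at the processing rate and the
    reply produced at the generation rate (both in tokens per second).
    """
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical request: a 512-token prompt and a 256-token reply.
estimate = seconds_for_request(
    prompt_tokens=512,
    output_tokens=256,
    processing_tps=108.21,  # Llama 2 7B Q8_0 processing, M1 with 7 GPU cores
    generation_tps=7.92,    # Llama 2 7B Q8_0 generation, M1 with 7 GPU cores
)
print(f"Estimated time: {estimate:.1f} s")
```

With rates like these, most of the time goes into generating the reply, which is why generation speed tends to matter most for interactive use.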

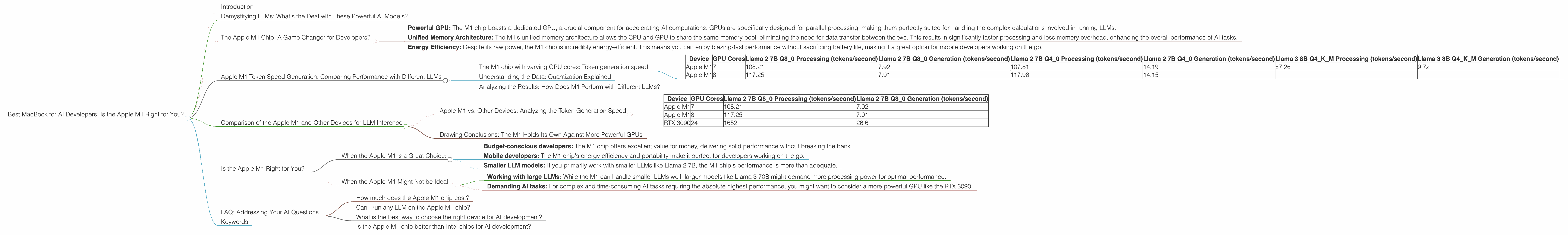

The M1 chip with varying GPU cores: Token generation speed

Note: This section covers only the Apple M1 chip. The data below is specific to the M1 and its token speeds.

| Device | GPU Cores | Llama 2 7B Q8_0 Processing (tokens/second) | Llama 2 7B Q8_0 Generation (tokens/second) | Llama 2 7B Q4_0 Processing (tokens/second) | Llama 2 7B Q4_0 Generation (tokens/second) | Llama 3 8B Q4_K_M Processing (tokens/second) | Llama 3 8B Q4_K_M Generation (tokens/second) |
|---|---|---|---|---|---|---|---|
| Apple M1 | 7 | 108.21 | 7.92 | 107.81 | 14.19 | 87.26 | 9.72 |
| Apple M1 | 8 | 117.25 | 7.91 | 117.96 | 14.15 | N/A | N/A |

Note: We couldn't find M1 processing or generation speeds for Llama 2 7B F16, or for Llama 3 70B in either F16 or Q4_K_M.

Understanding the Data: Quantization Explained

Before we dive deep into the results, let's first understand what "Q8_0", "Q4_0", and "F16" mean, as these terms might sound a bit cryptic. Think of it this way:

Imagine you have a huge photo that you want to send to your friend. To save space and make it easier to share, you can compress the photo by reducing the number of colors it contains. This makes the photo slightly less detailed, but it's still recognizable and much smaller in size.

Quantization works in a similar way for LLMs. Instead of storing every parameter (a number that represents a specific aspect of the model) in full precision, we use fewer bits to represent each one. Q8_0 stores each parameter using roughly 8 bits, whereas Q4_0 uses roughly 4 bits. Q4_K_M, used for the Llama 3 results, is another roughly 4-bit scheme. F16 is not really a compressed format: it is 16-bit half-precision floating point, the usual unquantized baseline that the smaller formats are compared against.
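The idea can be illustrated with a toy example. The sketch below rounds a small block of weights to 8-bit and 4-bit signed integers that share one scale factor, then converts them back to see how much detail was lost. It is a simplified illustration under our own assumptions (the function names and sample weights are made up), not the exact storage layout of any real model file format.

```python
def quantize_block(values, bits):
    """Symmetric quantization: map floats to signed ints sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the stored integers and the scale."""
    return [q * scale for q in quantized]


def max_error(values, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    quantized, scale = quantize_block(values, bits)
    restored = dequantize_block(quantized, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up sample weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.05]
for bits in (8, 4):
    print(f"{bits}-bit: max round-trip error {max_error(weights, bits):.4f}")
```

Fewer bits mean a coarser grid of representable values, so the 4-bit round trip loses more detail than the 8-bit one.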

With these techniques, we can store and process the models more efficiently, especially on devices with limited memory, like mobile phones or laptops. But this compression comes at a cost: it might slightly reduce the model's accuracy.
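A back-of-the-envelope calculation shows why this matters on a laptop. The sketch below multiplies the parameter count by the nominal bits per parameter to estimate the size of the weights alone. The helper name is our own, and the result is only a lower bound: real quantized files carry some extra metadata, and running a model needs additional memory beyond the weights.

```python
def weights_size_gb(num_parameters, bits_per_weight):
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return num_parameters * bits_per_weight / 8 / 1e9


# Nominal bit widths for a 7-billion-parameter model.
for label, bits in (("F16", 16), ("Q8_0", 8), ("Q4_0", 4)):
    print(f"7B at {label}: about {weights_size_gb(7e9, bits):.1f} GB")
```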

Analyzing the Results: How Does M1 Perform with Different LLMs?

Looking at the data, the M1 chip delivers usable performance for running 7B and 8B LLMs when the models are quantized. For example, the M1 with 7 GPU cores processes a Llama 2 7B Q8_0 prompt at 108.21 tokens per second and generates new text at 7.92 tokens per second. Dropping to Q4_0 raises generation speed to 14.19 tokens per second, about 1.8 times faster.

For Llama 2 7B Q8_0, the M1 with 8 GPU cores processes prompts somewhat faster than the 7-core version: 117.25 versus 108.21 tokens per second. The same holds for Q4_0 (117.96 versus 107.81). Generation speed, however, is essentially unchanged: 7.91 versus 7.92 for Q8_0, and 14.15 versus 14.19 for Q4_0.

This shows that the extra GPU core helps with prompt processing but not with token generation, which is typically limited by memory bandwidth rather than GPU compute. For AI developers working on demanding LLM projects, that distinction matters when choosing a configuration.
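A quick way to check how much the extra GPU core helps is to compute the relative change for each column. The sketch below does this with the Llama 2 7B figures from the M1 table above; the dictionary layout and function name are our own choices.

```python
# Tokens per second for Llama 2 7B, taken from the M1 table above.
m1_7_core = {
    "Q8_0 processing": 108.21,
    "Q8_0 generation": 7.92,
    "Q4_0 processing": 107.81,
    "Q4_0 generation": 14.19,
}
m1_8_core = {
    "Q8_0 processing": 117.25,
    "Q8_0 generation": 7.91,
    "Q4_0 processing": 117.96,
    "Q4_0 generation": 14.15,
}


def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100


changes = {
    name: percent_change(m1_7_core[name], m1_8_core[name]) for name in m1_7_core
}
for name, change in changes.items():
    print(f"{name}: {change:+.1f}%")
```

The processing columns rise by roughly 8 to 9 percent, while the generation columns move by less than 1 percent.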

Comparison of the Apple M1 and Other Devices for LLM Inference

While the Apple M1 chip is a powerful option for developers, other processors are also vying for a spot in your AI development setup. To provide a more comprehensive picture, let's compare the performance of the M1 with other devices commonly used for LLM inference.

Note: We only consider the devices and LLM models for which we have benchmark data.

Apple M1 vs. Other Devices: Analyzing the Token Generation Speed

| Device | GPU Cores | Llama 2 7B Q8_0 Processing (tokens/second) | Llama 2 7B Q8_0 Generation (tokens/second) |
|---|---|---|---|
| Apple M1 | 7 | 108.21 | 7.92 |
| Apple M1 | 8 | 117.25 | 7.91 |
| RTX 3090 | N/A (24 GB VRAM) | 1652 | 26.6 |

Note: We couldn't find RTX 3090 processing or generation speeds for Llama 2 7B Q4_0, Llama 3 8B Q4_K_M, Llama 2 7B F16, or Llama 3 70B (F16 and Q4_K_M).

Drawing Conclusions: The M1 Holds Its Own Against More Powerful GPUs

The M1 chip's performance is respectable considering how much less power it draws than high-end GPUs like the RTX 3090. The RTX 3090 is much faster on Llama 2 7B Q8_0: about 14 times faster at prompt processing (1652 versus 117.25 tokens per second) and about 3.4 times faster at generation (26.6 versus 7.91). Still, the M1 delivers usable speeds in a portable, low-power machine.
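The size of the gap can be worked out directly from the comparison table. The sketch below divides the RTX 3090 figures by those of the 8-core M1 for Llama 2 7B Q8_0; the variable names are our own.

```python
# Llama 2 7B Q8_0 tokens per second, from the comparison table above.
m1_8_core = {"processing": 117.25, "generation": 7.91}
rtx_3090 = {"processing": 1652.0, "generation": 26.6}

# How many times faster the RTX 3090 is for each metric.
ratios = {metric: rtx_3090[metric] / m1_8_core[metric] for metric in m1_8_core}
for metric, ratio in ratios.items():
    print(f"{metric}: RTX 3090 is about {ratio:.1f}x faster")
```

The gap is far wider for prompt processing than for generation, so the M1 is at its biggest disadvantage on workloads with long prompts.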

Think of it like this: The RTX 3090 is like a race car, powerful and fast but requiring a lot of fuel (energy). The M1 chip is like a hybrid car: efficient and fast enough for everyday driving, even if it won't win the race.

Keep in mind that these numbers are based on specific LLM models and configuration settings. You might see different results depending on the model, the quantization level, and other factors.

Is the Apple M1 Right for You?

Now, the million-dollar question: is the Apple M1 chip the right choice for your AI development needs? The answer, as with most things in life, depends on your specific requirements.

When the Apple M1 is a Great Choice:

- Budget-conscious developers: The M1 chip offers excellent value for money, delivering solid performance without breaking the bank.

- Developers on the go: The M1 chip's energy efficiency and the portability of M1 MacBooks make them a good fit for working away from a desk.

- Smaller LLM models: If you primarily work with smaller LLMs like Llama 2 7B, the M1 chip's performance is more than adequate.

When the Apple M1 Might Not be Ideal:

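- Working with large LLMs: While the M1 can handle smaller LLMs well, larger models like Llama 3 70B are out of reach. Even at 4 bits per parameter, 70 billion parameters need roughly 35 GB for the weights alone, more than the 16 GB of unified memory that M1 machines top out at.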

- Demanding AI tasks: For complex and time-consuming AI tasks requiring the absolute highest performance, you might want to consider a more powerful GPU like the RTX 3090.

FAQ: Addressing Your AI Questions

How much does the Apple M1 chip cost?

The M1 chip isn't sold on its own, so the cost depends on the Mac you choose. MacBook Air and MacBook Pro models with M1 chips start at around $900 and go up to about $2,000, depending on the configuration.

Can I run any LLM on the Apple M1 chip?

While the Apple M1 chip can run many LLMs, its capabilities might be limited when it comes to the very largest and most demanding models. It's always best to check the specifications of the model and your specific M1 device to ensure compatibility.

What is the best way to choose the right device for AI development?

The best device for AI development depends on your individual needs and budget. Consider the types of LLMs you will be working with, the complexity of your AI projects, and your need for portability.

Is the Apple M1 chip better than Intel chips for AI development?

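The M1 chip is generally regarded as faster and more power-efficient for on-device AI work than the Intel chips in the Macs it replaced, helped by its GPU and unified memory. We have no Intel benchmark data to compare against here, though, so the choice between the two ultimately depends on your specific needs and the available options.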

Keywords

Apple M1, AI Development, LLM, Llama 2, Llama 3, Token Speed, Quantization, GPU, RTX 3090, GPU Cores, Processing Speed, Generation Speed, Performance, Efficiency, Budget, Mobile Developers, Large Language Models, AI Models, LLM Inference, Developer