Apple M3 Pro 150gb 14cores vs. NVIDIA A100 PCIe 80GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models emerging and improving at an unprecedented pace. These models, capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way, are becoming increasingly powerful and demanding in terms of computational resources.

To run these LLMs effectively, you need powerful hardware. Two popular choices are the Apple M3 Pro chip and the NVIDIA A100 PCIe 80GB.

This article will dive deep into a benchmark analysis comparing the performance of these two devices in generating tokens for LLMs, specifically focusing on token generation speed. We'll explore different LLM models and quantization techniques, using real data to illuminate the strengths and weaknesses of each device.

Let's get started!

Apple M1 Token Speed Generation: A Detailed Look

The Apple M3 Pro, with its 14 cores (or 18 cores in some configurations) and 150 GB memory bandwidth, offers a compelling option for running LLMs locally.

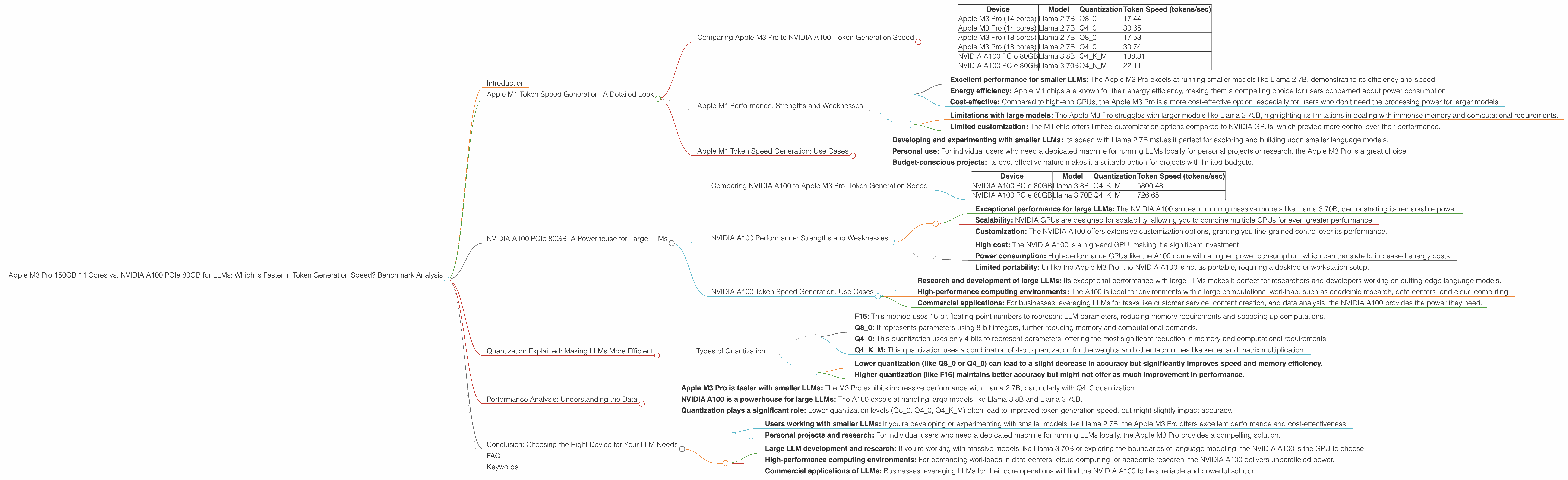

Comparing Apple M3 Pro to NVIDIA A100: Token Generation Speed

*The Apple M3 Pro shines in generating tokens for smaller LLMs. * For example, with the Llama 2 7B model, the Apple M3 Pro with 14 cores delivers 17.44 tokens/sec (Q80 quantization) and 30.65 tokens/sec (Q40 quantization) for generation.

The NVIDIA A100, however, demonstrates its true power with larger models. It excels at generating tokens for Llama 3 models, pushing out 138.31 tokens/sec for the Llama 3 8B model (Q4KM quantization) and 22.11 tokens/sec for the Llama 3 70B (Q4KM quantization).

Here's a table that summarizes the key findings:

| Device | Model | Quantization | Token Speed (tokens/sec) |

|---|---|---|---|

| Apple M3 Pro (14 cores) | Llama 2 7B | Q8_0 | 17.44 |

| Apple M3 Pro (14 cores) | Llama 2 7B | Q4_0 | 30.65 |

| Apple M3 Pro (18 cores) | Llama 2 7B | Q8_0 | 17.53 |

| Apple M3 Pro (18 cores) | Llama 2 7B | Q4_0 | 30.74 |

| NVIDIA A100 PCIe 80GB | Llama 3 8B | Q4KM | 138.31 |

| NVIDIA A100 PCIe 80GB | Llama 3 70B | Q4KM | 22.11 |

Note: This performance comparison does not include F16 quantization for certain combinations due to lack of data.

Apple M1 Performance: Strengths and Weaknesses

Strengths:

- Excellent performance for smaller LLMs: The Apple M3 Pro excels at running smaller models like Llama 2 7B, demonstrating its efficiency and speed.

- Energy efficiency: Apple M1 chips are known for their energy efficiency, making them a compelling choice for users concerned about power consumption.

- Cost-effective: Compared to high-end GPUs, the Apple M3 Pro is a more cost-effective option, especially for users who don't need the processing power for larger models.

Weaknesses:

- Limitations with large models: The Apple M3 Pro struggles with larger models like Llama 3 70B, highlighting its limitations in dealing with immense memory and computational requirements.

- Limited customization: The M1 chip offers limited customization options compared to NVIDIA GPUs, which provide more control over their performance.

Apple M1 Token Speed Generation: Use Cases

The Apple M3 Pro is ideal for:

- Developing and experimenting with smaller LLMs: Its speed with Llama 2 7B makes it perfect for exploring and building upon smaller language models.

- Personal use: For individual users who need a dedicated machine for running LLMs locally for personal projects or research, the Apple M3 Pro is a great choice.

- Budget-conscious projects: Its cost-effective nature makes it a suitable option for projects with limited budgets.

NVIDIA A100 PCIe 80GB: A Powerhouse for Large LLMs

The NVIDIA A100 PCIe 80GB is a powerful GPU specifically designed for demanding workloads, making it a prime candidate for running large LLMs.

Comparing NVIDIA A100 to Apple M3 Pro: Token Generation Speed

The NVIDIA A100 excels at processing massive volumes of data, allowing it to handle large models with ease.

Here’s an example: The NVIDIA A100 PCIe 80GB generates tokens at a remarkable speed of 5800.48 tokens/sec for Llama 3 8B (Q4KM quantization) and 726.65 tokens/sec for Llama 3 70B using the same quantization.

This table highlights the key differences:

| Device | Model | Quantization | Token Speed (tokens/sec) |

|---|---|---|---|

| NVIDIA A100 PCIe 80GB | Llama 3 8B | Q4KM | 5800.48 |

| NVIDIA A100 PCIe 80GB | Llama 3 70B | Q4KM | 726.65 |

NVIDIA A100 Performance: Strengths and Weaknesses

Strengths:

- Exceptional performance for large LLMs: The NVIDIA A100 shines in running massive models like Llama 3 70B, demonstrating its remarkable power.

- Scalability: NVIDIA GPUs are designed for scalability, allowing you to combine multiple GPUs for even greater performance.

- Customization: The NVIDIA A100 offers extensive customization options, granting you fine-grained control over its performance.

Weaknesses:

- High cost: The NVIDIA A100 is a high-end GPU, making it a significant investment.

- Power consumption: High-performance GPUs like the A100 come with a higher power consumption, which can translate to increased energy costs.

- Limited portability: Unlike the Apple M3 Pro, the NVIDIA A100 is not as portable, requiring a desktop or workstation setup.

NVIDIA A100 Token Speed Generation: Use Cases

The NVIDIA A100 is ideal for:

- Research and development of large LLMs: Its exceptional performance with large LLMs makes it perfect for researchers and developers working on cutting-edge language models.

- High-performance computing environments: The A100 is ideal for environments with a large computational workload, such as academic research, data centers, and cloud computing.

- Commercial applications: For businesses leveraging LLMs for tasks like customer service, content creation, and data analysis, the NVIDIA A100 provides the power they need.

Quantization Explained: Making LLMs More Efficient

Quantization is a technique used to reduce the memory footprint and computational requirements of LLMs, making them easier to run on hardware with limited resources.

Think of it as downsizing your LLM's wardrobe - instead of carrying around a massive suitcase packed with every possible word, you pack a slimmer, more efficient wardrobe.

Here's a simple analogy:

Imagine your LLM is a big chef with a massive cookbook. Each recipe represents a word, and the ingredients are the specific parameters that define the word's meaning. Quantization is like replacing precise ingredients (like a specific type of flour) with simpler, more generic ingredients (like "flour"). It might not be as precise, but it works well enough and takes up less space in the cookbook.

Types of Quantization:

- F16: This method uses 16-bit floating-point numbers to represent LLM parameters, reducing memory requirements and speeding up computations.

- Q8_0: It represents parameters using 8-bit integers, further reducing memory and computational demands.

- Q4_0: This quantization uses only 4 bits to represent parameters, offering the most significant reduction in memory and computational requirements.

- Q4KM: This quantization uses a combination of 4-bit quantization for the weights and other techniques like kernel and matrix multiplication.

The choice of quantization depends on the trade-off between accuracy and efficiency:

- Lower quantization (like Q80 or Q40) can lead to a slight decrease in accuracy but significantly improves speed and memory efficiency.

- Higher quantization (like F16) maintains better accuracy but might not offer as much improvement in performance.

Performance Analysis: Understanding the Data

The data presented in this article showcases the token generation speed of different LLMs running on Apple M3 Pro and NVIDIA A100.

Here's a summary of the key observations:

- Apple M3 Pro is faster with smaller LLMs: The M3 Pro exhibits impressive performance with Llama 2 7B, particularly with Q4_0 quantization.

- NVIDIA A100 is a powerhouse for large LLMs: The A100 excels at handling large models like Llama 3 8B and Llama 3 70B.

- Quantization plays a significant role: Lower quantization levels (Q80, Q40, Q4KM) often lead to improved token generation speed, but might slightly impact accuracy.

Conclusion: Choosing the Right Device for Your LLM Needs

The choice between the Apple M3 Pro and the NVIDIA A100 PCIe 80GB ultimately depends on your specific requirements and budget.

The Apple M3 Pro is an excellent choice for:

- Users working with smaller LLMs: If you're developing or experimenting with smaller models like Llama 2 7B, the Apple M3 Pro offers excellent performance and cost-effectiveness.

- Personal projects and research: For individual users who need a dedicated machine for running LLMs locally, the Apple M3 Pro provides a compelling solution.

The NVIDIA A100 PCIe 80GB is the go-to choice for:

- Large LLM development and research: If you're working with massive models like Llama 3 70B or exploring the boundaries of language modeling, the NVIDIA A100 is the GPU to choose.

- High-performance computing environments: For demanding workloads in data centers, cloud computing, or academic research, the NVIDIA A100 delivers unparalleled power.

- Commercial applications of LLMs: Businesses leveraging LLMs for their core operations will find the NVIDIA A100 to be a reliable and powerful solution.

FAQ

Q. What are the best devices for running LLMs?

A. It depends on the size of the LLM. For smaller models like Llama 2 7B, the Apple M3 Pro can be a good choice due to its speed and efficiency. However, for larger models like Llama 3 70B, the NVIDIA A100 PCIe 80GB is the more powerful option.

Q. How does quantization improve LLM performance?

A. Quantization reduces the memory footprint and computational requirements of LLMs by simplifying the representation of parameters. Think of it as downsizing your LLM's wardrobe to make it more efficient. This can significantly improve speed and reduce energy consumption.

Q. What is the difference between F16, Q80, Q40, and Q4KM quantization?

A. These are different levels of quantization, representing parameters with varying levels of precision. F16 uses 16 bits, Q80 uses 8 bits, Q40 uses 4 bits, and Q4KM uses 4 bits for weights and other techniques for kernel and matrix multiplication. Lower quantization levels (like Q80 and Q40) offer greater efficiency but might slightly impact accuracy.

Q. What are the trade-offs between accuracy and efficiency in LLMs?

A. There's always a trade-off between accuracy and efficiency in LLMs. Higher precision (like F16) generally results in better accuracy but requires more resources. Lower precision (like Q80 or Q40) can improve speed and memory efficiency but might lead to a slight decrease in accuracy.

Q. Can I combine multiple GPUs to run LLMs?

A. Yes, you can create clusters of GPUs to enhance the performance of LLMs. This allows you to distribute the workload across multiple GPUs and potentially improve token generation speed and overall performance.

Keywords

Apple M3 Pro, NVIDIA A100, LLM, token generation speed, performance benchmark, Llama 2, Llama 3, quantization, F16, Q80, Q40, Q4KM, GPU, CPU, deep learning, natural language processing, AI, artificial intelligence, machine learning.