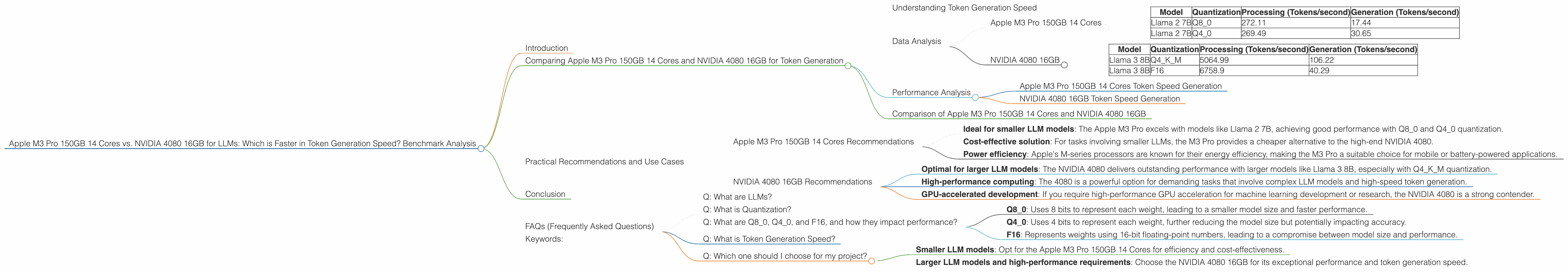

Apple M3 Pro 150GB 14 Cores vs. NVIDIA 4080 16GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

In the rapidly evolving landscape of large language models (LLMs), inference speed determines how responsive a model feels in practice, and the hardware it runs on largely determines that speed. This article compares two contenders: the Apple M3 Pro (the configuration with 14 GPU cores and 150 GB/s of memory bandwidth, which is what the 150GB label in the device name refers to) and the NVIDIA 4080 16GB graphics card. The focus is on their token generation speeds across different LLM models and quantization levels.

Think of it this way: imagine a race between a cheetah and a race car. The cheetah, representing the Apple M3 Pro, is nimble and efficient for smaller tasks, while the race car, resembling the NVIDIA 4080, is powerful and built for speed on a larger scale. We will analyze their strengths and weaknesses to identify the ideal device for your LLM tasks.

Comparing Apple M3 Pro 150GB 14 Cores and NVIDIA 4080 16GB for Token Generation

This section presents a comprehensive comparison of the Apple M3 Pro and NVIDIA 4080, focusing on their token generation speeds for various LLM models and quantization levels. The data presented is based on the publicly available benchmarks listed below.

Understanding Token Generation Speed

Token generation speed refers to the rate at which a device can process and output tokens, which are the building blocks of text in LLMs. A faster token generation speed implies a smoother and more responsive experience when interacting with LLMs, especially for tasks involving text generation, translation, or summarization.
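To make the metric concrete, here is a minimal sketch that converts a tokens-per-second figure into the time a user would wait for a reply. The two speeds are the 4-bit generation figures from the benchmark tables in this article; the 500-token reply length and the helper name `seconds_to_generate` are assumptions chosen purely for illustration.

```python
def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Return the time needed to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Generation speeds taken from the benchmark tables in this article.
generation_speeds = {
    "Apple M3 Pro, Llama 2 7B Q4_0": 30.65,
    "NVIDIA 4080, Llama 3 8B Q4_K_M": 106.22,
}

# Hypothetical reply length, used only to illustrate the conversion.
reply_tokens = 500

for setup, speed in generation_speeds.items():
    wait = seconds_to_generate(reply_tokens, speed)
    print(f"{setup}: {wait:.1f} s for {reply_tokens} tokens")
```

The calculation ignores prompt processing time, so real end-to-end latency would be somewhat higher, especially for long prompts.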

Data Analysis

Apple M3 Pro 150GB 14 Cores

| Model | Quantization | Prompt processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 272.11 | 17.44 |
| Llama 2 7B | Q4_0 | 269.49 | 30.65 |

NVIDIA 4080 16GB

| Model | Quantization | Prompt processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 5064.99 | 106.22 |
| Llama 3 8B | F16 | 6758.9 | 40.29 |

Note: Data for Llama 2 7B F16, Llama 3 70B Q4_K_M, and Llama 3 70B F16 is unavailable.
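The ratios between these figures can be worked out directly. The sketch below does that with the numbers from the two tables; the helper name `speedup` is an illustrative choice. Because the devices were measured on different models (Llama 2 7B versus Llama 3 8B), the cross-device ratios are indicative only.

```python
# (prompt processing, generation) in tokens/second, copied from the tables above.
m3_pro = {
    "Q8_0": (272.11, 17.44),
    "Q4_0": (269.49, 30.65),
}
rtx_4080 = {
    "Q4_K_M": (5064.99, 106.22),
    "F16": (6758.9, 40.29),
}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


# Cross-device comparison at 4-bit quantization (different models, so indicative only).
prompt_ratio = speedup(rtx_4080["Q4_K_M"][0], m3_pro["Q4_0"][0])
generation_ratio = speedup(rtx_4080["Q4_K_M"][1], m3_pro["Q4_0"][1])

# Within-device effect of using a smaller quantization format.
m3_q4_vs_q8 = speedup(m3_pro["Q4_0"][1], m3_pro["Q8_0"][1])
rtx_q4_vs_f16 = speedup(rtx_4080["Q4_K_M"][1], rtx_4080["F16"][1])

print(f"4080 vs M3 Pro, prompt processing: {prompt_ratio:.1f}x")
print(f"4080 vs M3 Pro, generation:        {generation_ratio:.1f}x")
print(f"M3 Pro, Q4_0 vs Q8_0 generation:   {m3_q4_vs_q8:.1f}x")
print(f"4080, Q4_K_M vs F16 generation:    {rtx_q4_vs_f16:.1f}x")
```

At 4-bit quantization the 4080 comes out roughly 19 times faster in prompt processing and about 3.5 times faster in generation, so the gap is much wider when reading the prompt than when producing output.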

Performance Analysis

Apple M3 Pro 150GB 14 Cores Token Generation Speed

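The Apple M3 Pro handles Llama 2 7B at both Q8_0 and Q4_0. Prompt processing runs at roughly 270 tokens/second in either format, while generation reaches 17.44 tokens/second at Q8_0 and 30.65 tokens/second at Q4_0. Generation is far slower than prompt processing because output tokens are produced one at a time, and each one requires reading the model weights from memory. Generation therefore tends to be limited by memory bandwidth rather than compute, which is also why the smaller Q4_0 model generates faster.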
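The gap between processing and generation speed is consistent with a memory-bandwidth limit. As a rough sketch, a common rule of thumb bounds single-stream generation speed by memory bandwidth divided by model size, since every weight is read once per token. Several inputs here are assumptions rather than figures from the benchmark source: the 150 GB/s bandwidth (reading the 150GB label as bandwidth), a round 7 billion parameters for Llama 2 7B, and bits-per-weight values of 4.5 for Q4_0 and 8.5 for Q8_0, which follow from blocks of 32 weights that each store one 16-bit scale.

```python
def model_size_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def bandwidth_bound_tokens_per_second(bandwidth_gb_s: float, size_gb: float) -> float:
    """Upper bound on generation speed if every token reads all weights once."""
    return bandwidth_gb_s / size_gb


# Assumptions, not benchmark data: bandwidth, parameter count, bits per weight.
BANDWIDTH_GB_S = 150.0
NUM_PARAMS = 7e9
BITS_PER_WEIGHT = {"Q4_0": 4.5, "Q8_0": 8.5}

# Measured generation speeds for the M3 Pro, from the table above.
measured = {"Q4_0": 30.65, "Q8_0": 17.44}

for fmt, bits in BITS_PER_WEIGHT.items():
    size = model_size_gb(NUM_PARAMS, bits)
    bound = bandwidth_bound_tokens_per_second(BANDWIDTH_GB_S, size)
    share = measured[fmt] / bound
    print(f"{fmt}: ~{size:.2f} GB, bound ~{bound:.1f} tok/s, "
          f"measured {measured[fmt]} tok/s ({share:.0%} of bound)")
```

Under these assumptions the bounds come out near 38 and 20 tokens/second, against measured values of 30.65 and 17.44, which suggests the M3 Pro is running close to what its memory bandwidth allows. The estimate ignores the KV cache and other overheads, so it should be read as a sanity check rather than a prediction.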

NVIDIA 4080 16GB Token Generation Speed

The NVIDIA 4080 generates 106.22 tokens/second with Llama 3 8B at Q4_K_M and 40.29 tokens/second at F16, and it processes prompts at more than 5,000 tokens/second in both cases. These figures suggest the 4080 is well suited to tasks requiring rapid text output, particularly with 4-bit quantization.

Comparison of Apple M3 Pro 150GB 14 Cores and NVIDIA 4080 16GB

The two devices were benchmarked on different models (Llama 2 7B on the M3 Pro, Llama 3 8B on the 4080), so the figures are not a like-for-like comparison. Even so, the gap is large. With 4-bit quantization, the 4080 generates 106.22 tokens/second on the slightly larger Llama 3 8B, compared with 30.65 tokens/second for the M3 Pro on Llama 2 7B, which is about 3.5 times faster. Its prompt processing is roughly 19 times faster (5064.99 vs. 269.49 tokens/second). The M3 Pro remains a viable option for 7B-class models, with efficient processing and an acceptable generation speed, but the 4080 clearly leads in both processing and token generation.

Practical Recommendations and Use Cases

Apple M3 Pro 150GB 14 Cores Recommendations

- Ideal for smaller LLM models: The Apple M3 Pro excels with models like Llama 2 7B, achieving good performance with Q8_0 and Q4_0 quantization.

- Cost-effective solution: For tasks involving smaller LLMs, the M3 Pro provides a cheaper alternative to the high-end NVIDIA 4080.

- Power efficiency: Apple's M-series processors are known for their energy efficiency, making the M3 Pro a suitable choice for mobile or battery-powered applications.

NVIDIA 4080 16GB Recommendations

- Optimal for larger LLM models: The NVIDIA 4080 delivers outstanding performance with larger models like Llama 3 8B, especially with Q4_K_M quantization.

- High-performance computing: The 4080 is a powerful option for demanding tasks that involve complex LLM models and high-speed token generation.

- GPU-accelerated development: If you require high-performance GPU acceleration for machine learning development or research, the NVIDIA 4080 is a strong contender.

Conclusion

The choice between the Apple M3 Pro 150GB 14 Cores and NVIDIA 4080 16GB depends mainly on your specific needs and the type of LLMs you are working with. For smaller models and tasks requiring moderate token generation speed, the Apple M3 Pro offers a cost-effective and energy-efficient solution. However, for larger models and demanding applications requiring faster token generation, the NVIDIA 4080 shines with its powerful performance.

FAQs (Frequently Asked Questions)

Q: What are LLMs?

LLMs, or Large Language Models, are powerful AI systems that can understand and generate human-like text. They learn from vast amounts of text data, enabling them to perform a wide range of tasks like writing, translating, and answering questions.

Q: What is Quantization?

Quantization is a technique that reduces the size of LLM models by representing their weights with fewer bits. This smaller size allows for faster processing and lower memory consumption, making LLMs accessible even on less powerful devices.
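The idea can be illustrated with a minimal sketch of symmetric block quantization: a group of weights shares one floating-point scale, and each weight is stored as a small integer. This is a simplified illustration in the spirit of block formats such as Q8_0, not the actual implementation used by any particular library; the function names and sample weights are made up for the example.

```python
def quantize_block(weights: list[float], bits: int = 8) -> tuple[float, list[int]]:
    """Quantize a block of weights to signed integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(w) for w in weights)
    # Map the largest magnitude in the block to qmax; avoid dividing by zero.
    scale = amax / qmax if amax > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return scale, quantized


def dequantize_block(scale: float, quantized: list[int]) -> list[float]:
    """Recover approximate weights from the integers and the shared scale."""
    return [scale * q for q in quantized]


# Made-up weights, for illustration only.
block = [0.12, -0.50, 0.33, 0.0, -0.07, 0.41]

for bits in (8, 4):
    scale, q = quantize_block(block, bits)
    restored = dequantize_block(scale, q)
    max_error = max(abs(a - b) for a, b in zip(block, restored))
    print(f"{bits}-bit: integers={q}, max error={max_error:.4f}")
```

Each restored weight differs from the original by at most half a scale step, and the step is much larger with 4 bits than with 8. That is the trade-off behind quantization: fewer bits mean a smaller model and less data to move, at the cost of coarser weights.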

Q: What are Q8_0, Q4_0, and F16, and how do they affect performance?

These terms refer to different weight formats:

- Q8_0: Uses about 8 bits to represent each weight, giving a smaller model and faster generation than F16.

- Q4_0: Uses about 4 bits to represent each weight, further reducing the model size and speeding up generation, but potentially reducing accuracy.

- F16: Stores each weight as a 16-bit floating-point number. This is half precision rather than quantization in the strict sense. It stays closest to the original weights but produces the largest model and, on the NVIDIA 4080, much slower generation than Q4_K_M (40.29 vs. 106.22 tokens/second).

Q: What is Token Generation Speed?

Token generation speed refers to the rate at which a device can process and output tokens, which are the building blocks of text in LLMs. A faster token generation speed translates to a smoother and more responsive user experience.

Q: Which one should I choose for my project?

The best choice depends on your specific needs:

- Smaller LLM models: Opt for the Apple M3 Pro 150GB 14 Cores for efficiency and cost-effectiveness.

- Larger LLM models and high-performance requirements: Choose the NVIDIA 4080 16GB for its exceptional performance and token generation speed.
