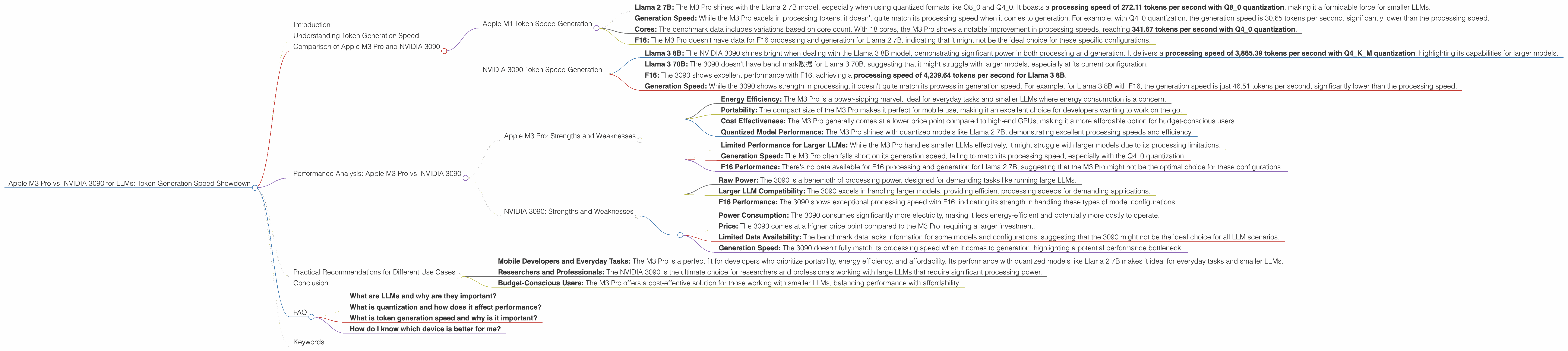

Apple M3 Pro 150gb 14cores vs. NVIDIA 3090 24GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, with new models like Llama 2 and Llama 3 capturing the imagination of developers and sparking innovation. But choosing the right hardware for running these models locally becomes a crucial decision. Today, we're diving into the performance battleground between two popular contenders: the Apple M3 Pro 150GB 14 cores and the NVIDIA 3090 24GB.

These are both powerful contenders, but their strengths lie in different areas. Which one reigns supreme when it comes to token generation speed? Let's find out by dissecting benchmark data and revealing their hidden talents.

Understanding Token Generation Speed

Before we dive into the specifics, let's clarify what we mean by "token generation speed." Think of tokens as the building blocks of text for LLMs. They're like puzzle pieces that form words, sentences, and eventually, complete text.

Token generation speed reflects how fast a device can process these tokens, ultimately determining how quickly the LLM can generate text. Imagine you're creating a story – the faster your device processes tokens, the quicker you can build the narrative, word by word.

Comparison of Apple M3 Pro and NVIDIA 3090

Here's where things get interesting. The Apple M3 Pro and the NVIDIA 3090 are like two athletes with different specialties. The M3 Pro shines in its energy efficiency and compact size, making it ideal for everyday tasks and smaller LLMs. The NVIDIA 3090, on the other hand, packs a powerful punch for larger LLMs, excelling in scenarios where brute force is needed.

Apple M1 Token Speed Generation

Let's start with the Apple M3 Pro. This beast of a chip is designed with a focus on energy efficiency and portability, making it a fantastic choice for everyday users and developers.

Here are some key takeaways from the benchmark data:

- Llama 2 7B: The M3 Pro shines with the Llama 2 7B model, especially when using quantized formats like Q80 and Q40. It boasts a processing speed of 272.11 tokens per second with Q8_0 quantization, making it a formidable force for smaller LLMs.

- Generation Speed: While the M3 Pro excels in processing tokens, it doesn't quite match its processing speed when it comes to generation. For example, with Q4_0 quantization, the generation speed is 30.65 tokens per second, significantly lower than the processing speed.

- Cores: The benchmark data includes variations based on core count. With 18 cores, the M3 Pro shows a notable improvement in processing speeds, reaching 341.67 tokens per second with Q4_0 quantization.

- F16: The M3 Pro doesn't have data for F16 processing and generation for Llama 2 7B, indicating that it might not be the ideal choice for these specific configurations.

Overall, the Apple M3 Pro demonstrates exceptional processing speed with quantized models like Llama 2 7B, showcasing its efficiency and speed for everyday tasks and smaller LLMs.

NVIDIA 3090 Token Speed Generation

Now, let's turn our attention to the NVIDIA 3090, a true powerhouse known for its muscle and raw processing power. This GPU is typically favored by gamers and professionals who demand the highest level of performance.

Here's a breakdown of its performance based on the benchmark data:

- Llama 3 8B: The NVIDIA 3090 shines bright when dealing with the Llama 3 8B model, demonstrating significant power in both processing and generation. It delivers a processing speed of 3,865.39 tokens per second with Q4KM quantization, highlighting its capabilities for larger models.

- Llama 3 70B: The 3090 doesn't have benchmark数据 for Llama 3 70B, suggesting that it might struggle with larger models, especially at its current configuration.

- F16: The 3090 shows excellent performance with F16, achieving a processing speed of 4,239.64 tokens per second for Llama 3 8B.

- Generation Speed: While the 3090 shows strength in processing, it doesn't quite match its prowess in generation speed. For example, for Llama 3 8B with F16, the generation speed is just 46.51 tokens per second, significantly lower than the processing speed.

The NVIDIA 3090 emerges as a champion for larger LLMs like Llama 3 8B, showcasing its powerful processing capabilities and ability to handle complex models. However, it struggles with certain model and quantization combinations, highlighting its need for optimization.

Performance Analysis: Apple M3 Pro vs. NVIDIA 3090

When it comes to token generation speed, the comparison is more nuanced than a simple "winner takes all" contest. Both devices offer excellent performance, but their strengths lie in different areas, making the choice depend on your LLM needs.

Apple M3 Pro: Strengths and Weaknesses

Strengths:

- Energy Efficiency: The M3 Pro is a power-sipping marvel, ideal for everyday tasks and smaller LLMs where energy consumption is a concern.

- Portability: The compact size of the M3 Pro makes it perfect for mobile use, making it an excellent choice for developers wanting to work on the go.

- Cost Effectiveness: The M3 Pro generally comes at a lower price point compared to high-end GPUs, making it a more affordable option for budget-conscious users.

- Quantized Model Performance: The M3 Pro shines with quantized models like Llama 2 7B, demonstrating excellent processing speeds and efficiency.

Weaknesses:

- Limited Performance for Larger LLMs: While the M3 Pro handles smaller LLMs effectively, it might struggle with larger models due to its processing limitations.

- Generation Speed: The M3 Pro often falls short on its generation speed, failing to match its processing speed, especially with the Q4_0 quantization.

- F16 Performance: There's no data available for F16 processing and generation for Llama 2 7B, suggesting that the M3 Pro might not be the optimal choice for these configurations.

NVIDIA 3090: Strengths and Weaknesses

Strengths:

- Raw Power: The 3090 is a behemoth of processing power, designed for demanding tasks like running large LLMs.

- Larger LLM Compatibility: The 3090 excels in handling larger models, providing efficient processing speeds for demanding applications.

- F16 Performance: The 3090 shows exceptional processing speed with F16, indicating its strength in handling these types of model configurations.

Weaknesses:

- Power Consumption: The 3090 consumes significantly more electricity, making it less energy-efficient and potentially more costly to operate.

- Price: The 3090 comes at a higher price point compared to the M3 Pro, requiring a larger investment.

- Limited Data Availability: The benchmark data lacks information for some models and configurations, suggesting that the 3090 might not be the ideal choice for all LLM scenarios.

- Generation Speed: The 3090 doesn't fully match its processing speed when it comes to generation, highlighting a potential performance bottleneck.

Practical Recommendations for Different Use Cases

Here's a breakdown of how to choose between the M3 Pro and the 3090 based on your needs:

- Mobile Developers and Everyday Tasks: The M3 Pro is a perfect fit for developers who prioritize portability, energy efficiency, and affordability. Its performance with quantized models like Llama 2 7B makes it ideal for everyday tasks and smaller LLMs.

- Researchers and Professionals: The NVIDIA 3090 is the ultimate choice for researchers and professionals working with large LLMs that require significant processing power.

- Budget-Conscious Users: The M3 Pro offers a cost-effective solution for those working with smaller LLMs, balancing performance with affordability.

Conclusion

The race between the Apple M3 Pro and the NVIDIA 3090 for LLM performance is not a straightforward one. Both devices offer unique strengths and weaknesses, making the optimal choice dependent on your specific LLM needs, desired performance, and budget. The M3 Pro shines with its efficiency and portability, making it ideal for everyday tasks and smaller LLMs. The NVIDIA 3090, on the other hand, provides unmatched raw power for larger LLMs, but at a higher cost.

Ultimately, understanding your requirements and carefully evaluating the strengths and weaknesses of each device will guide you towards the perfect LLM companion for your coding adventures.

FAQ

What are LLMs and why are they important?

LLMs are like super-smart computer programs that understand and generate human-like text. They're used for tasks like translating languages, writing stories, and even answering your questions. They're fundamentally changing how we interact with technology.

What is quantization and how does it affect performance?

Think of quantization as a way to make LLMs more nimble and use less memory. It's like simplifying the instructions for the model, making it work faster but with a slight trade-off in accuracy. The M3 Pro excels with quantized models, whereas the 3090 shows greater power with specific configurations that don't require quantization.

What is token generation speed and why is it important?

Token generation speed is how fast a device can process the building blocks of text for LLMs. Faster processing speeds mean quicker responses and more efficient interactions with your LLM.

How do I know which device is better for me?

The best device depends on your LLM needs. If you're working with smaller models and value portability and energy efficiency, the M3 Pro might be suitable. For larger models and demanding tasks, the 3090's raw power is likely a better choice.

Keywords

Apple M3 Pro, NVIDIA 3090, LLM, Llama 2, Llama 3, token generation speed, benchmark, performance, processing, generation, quantization, F16, Q40, Q80, use cases, strengths, weaknesses, recommendations, development, AI, machine learning, natural language processing.