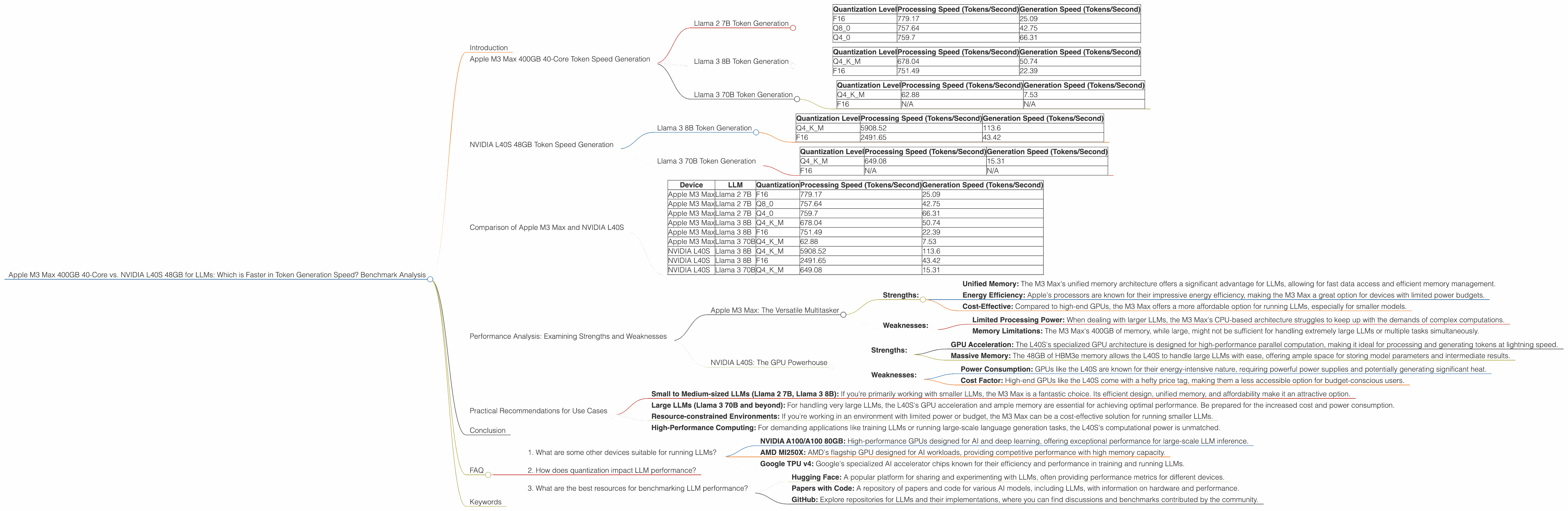

Apple M3 Max (400GB/s, 40-Core GPU) vs. NVIDIA L40S 48GB for LLMs: Which Is Faster at Token Generation? A Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is constantly evolving, with new models and hardware advancements emerging at a rapid pace. For developers and researchers working with LLMs, the choice of hardware can significantly affect performance and efficiency. In this article, we compare two very different machines: the Apple M3 Max (40-core GPU, 400GB/s memory bandwidth) and the NVIDIA L40S 48GB GPU, focusing on their token generation speed for several LLMs.

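Think of token generation like a conversation in which the model adds one piece of text at a time. Each piece is a "token" from the LLM's vocabulary: often a whole word, but just as often a word fragment, a punctuation mark, or a word with its leading space. Generating tokens quickly is crucial for smooth and responsive interactions with LLMs, especially for applications like chatbots, text generation, and code completion.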
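To make "word fragment" concrete, here is a toy greedy longest-match tokenizer. It is only an illustration under stated assumptions: the vocabulary and the function name `greedy_tokenize` are hypothetical, and real LLM tokenizers (such as the BPE-style tokenizers used by Llama models) are learned from data and work differently in detail.

```python
def greedy_tokenize(text: str, vocab: set[str]) -> list[str]:
    """Split text by repeatedly taking the longest vocabulary entry that matches."""
    tokens: list[str] = []
    i = 0
    while i < len(text):
        # Try candidate substrings from longest to shortest, starting at position i.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # No vocabulary entry matched: fall back to a single-character token.
            tokens.append(text[i])
            i += 1
    return tokens


# Hypothetical toy vocabulary; real LLM vocabularies hold tens of thousands of entries or more.
vocab = {"token", "iz", "ation", " is", " fast", "!"}
print(greedy_tokenize("tokenization is fast!", vocab))
```

With this vocabulary, "tokenization" becomes three tokens ("token", "iz", "ation"), which is why token counts are usually higher than word counts.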

We'll analyze the benchmark results to see which device reigns supreme in the token generation speed race and explore the strengths and weaknesses of each option. Buckle up, because this is going to be a wild ride!

Apple M3 Max (40-Core GPU, 400GB/s) Token Generation Speed

The Apple M3 Max packs a punch: this configuration has a 40-core GPU and 400GB/s of memory bandwidth, shared by the CPU and GPU through up to 128GB of unified memory. It is designed for demanding tasks like video editing, 3D rendering, and, yes, you guessed it, running LLMs! Let's see how it performs in our token generation speed tests.

Llama 2 7B Token Generation

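We'll start with the Llama 2 7B model, a popular choice thanks to its balance between performance and size. In the tables below, "processing" means prompt processing (the model reads the whole input prompt in parallel), and "generation" means producing new tokens one at a time. Here's how the M3 Max performs with different quantization levels: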

| Quantization Level | Prompt Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| F16 | 779.17 | 25.09 |
| Q8_0 | 757.64 | 42.75 |
| Q4_0 | 759.7 | 66.31 |

Observations:

- Processing speed: Prompt processing holds steady at roughly 760 to 780 tokens/s regardless of quantization level, so shrinking the weights barely affects this compute-bound phase.

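- Generation speed: Generation is far slower than prompt processing. A prompt can be processed as one large parallel batch, which keeps the GPU's compute units busy, but generation produces one token at a time, and each new token requires reading essentially all of the model's weights from memory. Generation is therefore limited mainly by memory bandwidth, which is why the smaller Q4_0 weights generate about 2.6x faster than F16.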
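The bandwidth argument can be turned into a rough upper bound: if every weight is read once per generated token, tokens/s cannot exceed memory bandwidth divided by the size of the weights. The sketch below applies this to the Llama 2 7B results. The parameter count (about 6.74B) and the bits-per-weight figures are our own approximations, not numbers from the benchmark, and the bound ignores KV-cache reads and any tensors stored at a different precision.

```python
M3_MAX_BANDWIDTH_GB_S = 400.0  # memory bandwidth of this M3 Max configuration
LLAMA2_7B_PARAMS = 6.74e9      # approximate parameter count (assumption)

# Approximate bits stored per weight (assumption): Q8_0 and Q4_0 add one
# 16-bit scale per block of 32 weights, giving 8.5 and 4.5 bits per weight.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds (tokens/s), copied from the table above.
MEASURED_TG = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def max_tokens_per_second(bandwidth_gb_s: float, n_params: float, bits_per_weight: float) -> float:
    """Upper bound on generation speed if all weights are read once per token."""
    model_gb = n_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / model_gb


for quant, bpw in BITS_PER_WEIGHT.items():
    bound = max_tokens_per_second(M3_MAX_BANDWIDTH_GB_S, LLAMA2_7B_PARAMS, bpw)
    measured = MEASURED_TG[quant]
    print(f"{quant:5s} bound ~{bound:6.1f} tok/s, measured {measured:6.2f} tok/s "
          f"({measured / bound:.0%} of bound)")
```

Every measured speed sits below its bound, and the gap widens as the weights shrink, which suggests that at 4 bits other costs (such as dequantization work) start to matter alongside raw bandwidth.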

Llama 3 8B Token Generation

Now, let's see how the M3 Max handles the larger Llama 3 8B model:

| Quantization Level | Prompt Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Q4_K_M | 678.04 | 50.74 |
| F16 | 751.49 | 22.39 |

Observations:

- Processing speed: Prompt processing stays in the same range as Llama 2 7B (678 to 751 tokens/s); F16 is actually slightly faster than Q4_K_M here.

- Generation speed: Q4_K_M generates 50.74 tokens/s, about 2.3x the F16 rate of 22.39 tokens/s, since F16 weights take roughly three times as many bytes to read for every token.
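The pattern across both models is easier to see as ratios against F16. This sketch uses only the M3 Max numbers from the two tables above; the dictionary layout and the helper name `quant_speedup` are our own illustrative choices.

```python
# (prompt processing tok/s, generation tok/s) on the M3 Max, from the tables above.
m3_max = {
    ("Llama 2 7B", "F16"): (779.17, 25.09),
    ("Llama 2 7B", "Q4_0"): (759.7, 66.31),
    ("Llama 3 8B", "F16"): (751.49, 22.39),
    ("Llama 3 8B", "Q4_K_M"): (678.04, 50.74),
}


def quant_speedup(model: str, quant: str, baseline: str = "F16") -> tuple[float, float]:
    """Return (processing, generation) speed of `quant` relative to `baseline`."""
    pp_q, tg_q = m3_max[(model, quant)]
    pp_b, tg_b = m3_max[(model, baseline)]
    return pp_q / pp_b, tg_q / tg_b


for model, quant in [("Llama 2 7B", "Q4_0"), ("Llama 3 8B", "Q4_K_M")]:
    pp, tg = quant_speedup(model, quant)
    print(f"{model} {quant} vs F16: processing x{pp:.2f}, generation x{tg:.2f}")
```

On the M3 Max, 4-bit quantization more than doubles generation speed for both models while leaving prompt processing slightly slower than F16, consistent with generation being bandwidth-bound and prompt processing being compute-bound.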

Llama 3 70B Token Generation

Finally, we'll challenge the M3 Max with the behemoth Llama 3 70B model:

| Quantization Level | Prompt Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Q4_K_M | 62.88 | 7.53 |
| F16 | N/A | N/A |

Observations:

- Processing speed: Prompt processing falls to 62.88 tokens/s, about a tenth of the 8B Q4_K_M rate. That drop is roughly in line with the model having almost nine times as many parameters.

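- Generation speed: Generation falls to 7.53 tokens/s, compared with 50.74 tokens/s for Llama 3 8B at the same quantization.

- Missing Data: No F16 result is reported for the 70B model. At 16 bits per weight, 70B parameters need about 140GB for the weights alone, more than the M3 Max's maximum of 128GB of unified memory.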
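A quick footprint check shows why F16 is out of reach for 70B on both machines while Q4_K_M is not. The parameter count (about 70.6B) and the average of about 4.85 bits per weight for Q4_K_M are approximations we assume here; Q4_K_M mixes several block formats, so its real size varies slightly. The check also ignores the KV cache and runtime buffers, which need extra headroom.

```python
LLAMA3_70B_PARAMS = 70.6e9  # approximate parameter count (assumption)

# Approximate average bits per weight (assumption for Q4_K_M).
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Memory capacity in GB: 128 is the largest M3 Max configuration, 48 is the L40S.
DEVICE_MEMORY_GB = {"Apple M3 Max (128GB)": 128.0, "NVIDIA L40S": 48.0}


def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Size of the weights alone, ignoring KV cache and runtime buffers."""
    return n_params * bits_per_weight / 8 / 1e9


for quant, bpw in BITS_PER_WEIGHT.items():
    size = weights_gb(LLAMA3_70B_PARAMS, bpw)
    fits = [name for name, mem in DEVICE_MEMORY_GB.items() if size < mem]
    print(f"{quant}: ~{size:.0f} GB of weights; fits in: {fits or 'neither device'}")
```

F16 comes to roughly 141GB, which fits in neither device, while Q4_K_M at roughly 43GB fits in both, although it leaves only a few gigabytes of headroom on a 48GB card.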

Key Takeaways for Apple M3 Max:

- Strengths: The M3 Max handles smaller models (like Llama 2 7B and Llama 3 8B) well, generating up to 66 tokens/s with 4-bit quantization. Its unified memory lets the GPU use a large share of system RAM, so it can even load Llama 3 70B at Q4_K_M.

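- Weaknesses: Speed falls sharply on larger models like Llama 3 70B. The likely bottleneck is memory bandwidth rather than the CPU: every generated token requires reading roughly 40GB of Q4_K_M weights through a 400GB/s memory system, which caps generation at under 10 tokens/s.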

NVIDIA L40S 48GB Token Generation Speed

Now, let's shift gears to the NVIDIA L40S 48GB, a data-center GPU built on NVIDIA's Ada Lovelace architecture for AI, graphics, and other compute-heavy workloads. With 48GB of GDDR6 memory (864GB/s of bandwidth) and fourth-generation Tensor Cores, the L40S is a formidable contender in the LLM speed arena.

Llama 3 8B Token Generation

Let's start with the Llama 3 8B model, which the L40S handles with ease (no Llama 2 7B results are available for the L40S):

| Quantization Level | Prompt Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Q4_K_M | 5908.52 | 113.6 |
| F16 | 2491.65 | 43.42 |

Observations:

- Processing speed: The L40S processes prompts far faster than the M3 Max: about 8.7x faster at Q4_K_M (5908.52 vs 678.04 tokens/s) and 3.3x faster at F16 (2491.65 vs 751.49 tokens/s), reflecting the much higher compute throughput of a discrete data-center GPU.

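- Generation speed: The L40S also generates faster, by about 2.2x at Q4_K_M (113.6 vs 50.74 tokens/s) and 1.9x at F16 (43.42 vs 22.39 tokens/s). That gap is close to the ratio of the two devices' memory bandwidths (864GB/s vs 400GB/s, about 2.2x), which fits the idea that generation is bandwidth-bound.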

Llama 3 70B Token Generation

Next, we'll see how the L40S tackles the challenging Llama 3 70B model:

| Quantization Level | Prompt Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Q4_K_M | 649.08 | 15.31 |
| F16 | N/A | N/A |

Observations:

- Processing speed: Prompt processing drops to 649.08 tokens/s, about a ninth of the L40S's 8B rate, but that is still roughly 10x the M3 Max's 62.88 tokens/s on the same model.

- Generation speed: At 15.31 tokens/s, the L40S generates about twice as fast as the M3 Max (7.53 tokens/s) on the 70B model.

- Missing Data: No F16 result is reported for the 70B model on the L40S, which is expected: about 140GB of F16 weights cannot fit in 48GB of memory.
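The same bandwidth bound used for smaller models can be applied to Llama 3 70B at Q4_K_M on both devices. The sketch assumes a weight size of about 42.8GB (70.6B parameters at roughly 4.85 bits per weight, both approximations of ours) and uses the published peak bandwidths of 400GB/s (M3 Max, 40-core GPU) and 864GB/s (L40S).

```python
# Approximate Q4_K_M weight size for Llama 3 70B in GB (assumption).
LLAMA3_70B_Q4KM_GB = 70.6e9 * 4.85 / 8 / 1e9

# Peak memory bandwidth in GB/s.
BANDWIDTH_GB_S = {"Apple M3 Max": 400.0, "NVIDIA L40S": 864.0}

# Measured 70B Q4_K_M generation speeds (tokens/s), from the tables above.
MEASURED_TG = {"Apple M3 Max": 7.53, "NVIDIA L40S": 15.31}

for device, bw in BANDWIDTH_GB_S.items():
    bound = bw / LLAMA3_70B_Q4KM_GB  # every weight read once per token
    print(f"{device}: bound ~{bound:.1f} tok/s, measured {MEASURED_TG[device]} tok/s")

bw_ratio = BANDWIDTH_GB_S["NVIDIA L40S"] / BANDWIDTH_GB_S["Apple M3 Max"]
tg_ratio = MEASURED_TG["NVIDIA L40S"] / MEASURED_TG["Apple M3 Max"]
print(f"bandwidth ratio x{bw_ratio:.2f}, generation ratio x{tg_ratio:.2f}")
```

Both devices land at roughly three quarters of their bound, and the measured 2.03x generation advantage is close to the 2.16x bandwidth ratio, so memory bandwidth explains most of the gap on this model.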

Key Takeaways for NVIDIA L40S:

- Strengths: The L40S is faster than the M3 Max in every configuration tested, for both the 8B and 70B models, thanks to its higher compute throughput and memory bandwidth.

- Weaknesses: Its 48GB of memory is tight for large models: Llama 3 70B fits only in quantized form, and generation drops from 113.6 tokens/s (8B) to 15.31 tokens/s (70B) at Q4_K_M.

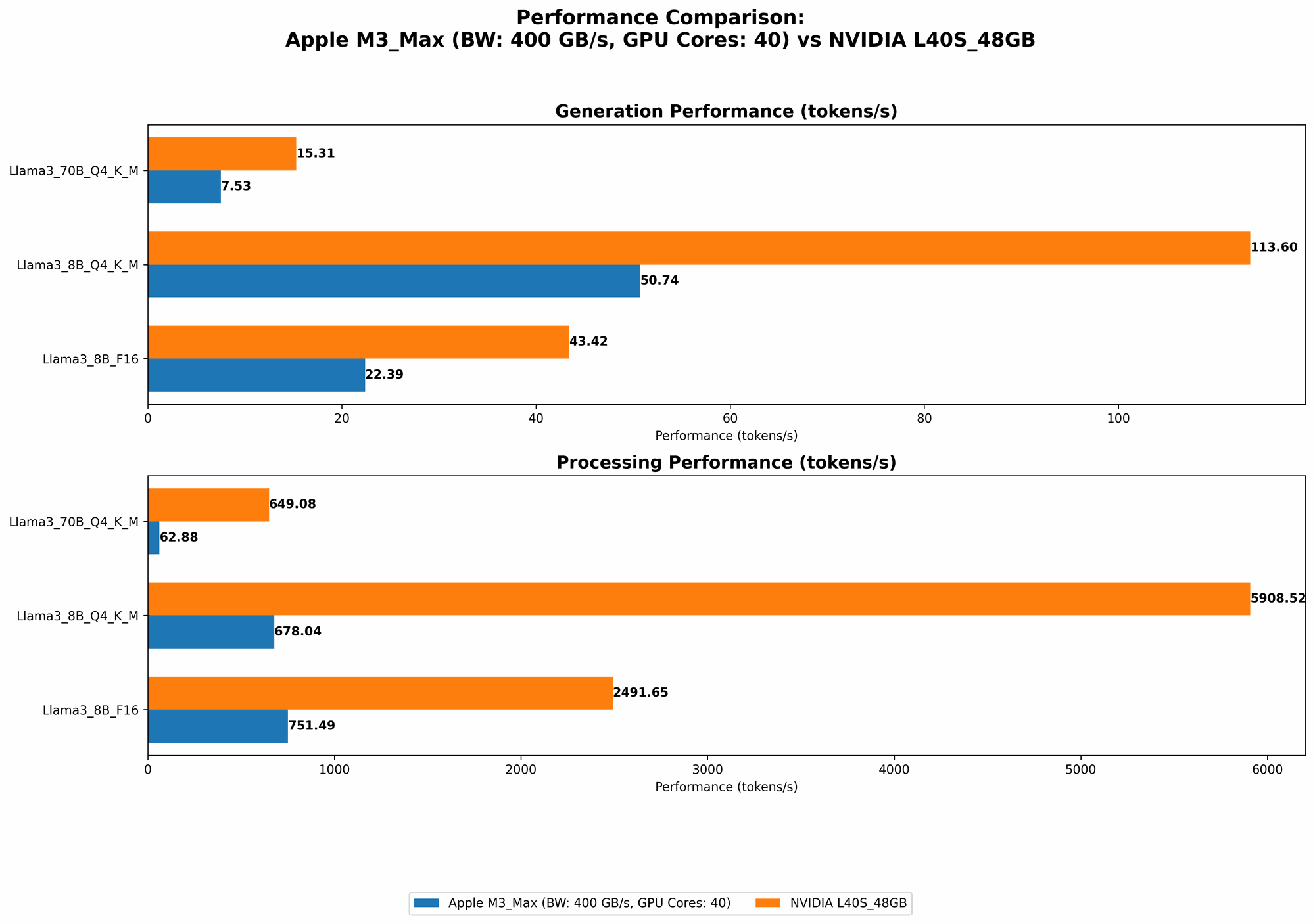

Comparison of Apple M3 Max and NVIDIA L40S

Let's summarize the token generation speeds and performance characteristics:

| Device | LLM | Quantization | Prompt Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|---|---|
| Apple M3 Max | Llama 2 7B | F16 | 779.17 | 25.09 |
| Apple M3 Max | Llama 2 7B | Q8_0 | 757.64 | 42.75 |
| Apple M3 Max | Llama 2 7B | Q4_0 | 759.7 | 66.31 |
| Apple M3 Max | Llama 3 8B | Q4_K_M | 678.04 | 50.74 |
| Apple M3 Max | Llama 3 8B | F16 | 751.49 | 22.39 |
| Apple M3 Max | Llama 3 70B | Q4_K_M | 62.88 | 7.53 |
| NVIDIA L40S | Llama 3 8B | Q4_K_M | 5908.52 | 113.6 |
| NVIDIA L40S | Llama 3 8B | F16 | 2491.65 | 43.42 |
| NVIDIA L40S | Llama 3 70B | Q4_K_M | 649.08 | 15.31 |

In a Nutshell:

- For smaller LLMs (Llama 3 8B): The NVIDIA L40S outperforms the M3 Max in both prompt processing (3.3x to 8.7x) and generation (1.9x to 2.2x). There are no L40S results for Llama 2 7B, so that model can't be compared directly.

- For larger LLMs (Llama 3 70B): The L40S still leads. Its generation advantage shrinks slightly (about 2.0x vs 2.2x at Q4_K_M), while its prompt processing advantage actually grows (about 10x vs 8.7x).

- Quantization: Moving from F16 to Q4_K_M speeds up Llama 3 8B generation by about 2.6x on the L40S and 2.3x on the M3 Max. On the L40S, quantization also speeds up prompt processing by about 2.4x, while on the M3 Max F16 prompt processing is slightly faster than Q4_K_M.
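The ratios quoted above can be reproduced by joining the summary table on (model, quantization) for the configurations measured on both devices. This is a small sketch over the table's own numbers; the `results` layout and the helper name `speedups` are illustrative choices of ours.

```python
# (device, model, quantization) -> (prompt processing tok/s, generation tok/s), from the table above.
results = {
    ("Apple M3 Max", "Llama 3 8B", "Q4_K_M"): (678.04, 50.74),
    ("Apple M3 Max", "Llama 3 8B", "F16"): (751.49, 22.39),
    ("Apple M3 Max", "Llama 3 70B", "Q4_K_M"): (62.88, 7.53),
    ("NVIDIA L40S", "Llama 3 8B", "Q4_K_M"): (5908.52, 113.6),
    ("NVIDIA L40S", "Llama 3 8B", "F16"): (2491.65, 43.42),
    ("NVIDIA L40S", "Llama 3 70B", "Q4_K_M"): (649.08, 15.31),
}


def speedups(fast: str, slow: str) -> dict[tuple[str, str], tuple[float, float]]:
    """Ratio fast/slow for every (model, quantization) pair measured on both devices."""
    out = {}
    for (device, model, quant), (pp, tg) in results.items():
        other = results.get((slow, model, quant))
        if device == fast and other is not None:
            out[(model, quant)] = (pp / other[0], tg / other[1])
    return out


for (model, quant), (pp, tg) in speedups("NVIDIA L40S", "Apple M3 Max").items():
    print(f"{model} {quant}: processing x{pp:.1f}, generation x{tg:.1f}")
```

Configurations measured on only one device (such as the M3 Max's Llama 2 7B runs) are skipped automatically, which keeps the comparison like-for-like.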

Performance Analysis: Examining Strengths and Weaknesses

Apple M3 Max: The Versatile Multitasker

- Strengths:

- Unified Memory: The M3 Max's unified memory (up to 128GB) is shared by the CPU and GPU, so it can load models that would not fit in a 48GB GPU, though it runs them at lower speed.

- Energy Efficiency: Apple's processors are known for their impressive energy efficiency, making the M3 Max a great option for devices with limited power budgets.

- Cost-Effective: Compared to high-end GPUs, the M3 Max offers a more affordable option for running LLMs, especially for smaller models.

- Weaknesses:

- Limited Processing Power: The M3 Max's integrated GPU has far less compute throughput and memory bandwidth than a data-center GPU, so both prompt processing and generation slow down sharply on larger models.

- Memory Limitations: Even the largest M3 Max configuration tops out at 128GB of unified memory, which is not enough for a 70B model at F16 (about 140GB of weights) or for much larger models.

NVIDIA L40S: The GPU Powerhouse

- Strengths:

- GPU Acceleration: The L40S's specialized GPU architecture is designed for high-performance parallel computation, making it ideal for processing and generating tokens at lightning speed.

- High Memory Bandwidth: The L40S's 48GB of GDDR6 memory delivers 864GB/s, more than twice the M3 Max's 400GB/s, which drives its faster generation. 48GB is enough for Llama 3 70B at Q4_K_M, though not at F16.

- Weaknesses:

- Power Consumption: GPUs like the L40S are known for their energy-intensive nature, requiring powerful power supplies and potentially generating significant heat.

- Cost Factor: High-end GPUs like the L40S come with a hefty price tag, making them a less accessible option for budget-conscious users.

Practical Recommendations for Use Cases

Here's a guide to help you select the best device for your LLM needs:

- Small to Medium-sized LLMs (Llama 2 7B, Llama 3 8B): The M3 Max is a solid choice if 50 to 66 tokens/s of 4-bit generation is fast enough for you. Its efficient design, unified memory, and affordability make it an attractive option, although the L40S is still about twice as fast on Llama 3 8B.

- Large LLMs (Llama 3 70B): The L40S generates about twice as fast (15.31 vs 7.53 tokens/s at Q4_K_M), but its 48GB holds only the quantized model, and models much larger than 70B will not fit on a single L40S even at 4 bits. Be prepared for the increased cost and power consumption.

- Resource-constrained Environments: If you're working in an environment with limited power or budget, the M3 Max can be a cost-effective solution for running smaller LLMs.

- High-Performance Computing: For demanding applications like fine-tuning LLMs or large-scale language generation, the L40S's much higher compute (3x to 10x faster prompt processing in these benchmarks) makes it the stronger choice.

Conclusion

The choice between the Apple M3 Max and NVIDIA L40S for running LLMs depends largely on the size of the model, your budget, and your specific requirements. The M3 Max is a versatile and cost-effective option for smaller models, while the L40S was faster in every configuration tested: roughly 2x in generation and 3x to 10x in prompt processing.

Remember, both devices offer their own unique strengths and weaknesses, so carefully consider your needs before making a decision.

FAQ

1. What are some other devices suitable for running LLMs?

Beyond the M3 Max and L40S, other powerful options include:

- NVIDIA A100 (40GB and 80GB): High-performance GPUs designed for AI and deep learning, offering exceptional performance for large-scale LLM inference.

- AMD Instinct MI250X: An AMD data-center accelerator designed for HPC and AI workloads, with 128GB of HBM2e memory.

- Google TPU v4: Google's specialized AI accelerator chips known for their efficiency and performance in training and running LLMs.

2. How does quantization impact LLM performance?

Quantization reduces the memory footprint of LLMs by storing the model's weights with fewer bits, for example 8 or about 4.5 bits per weight instead of 16. Because generation must read the weights for every token, smaller weights usually mean faster generation, as the benchmarks above show. The trade-off is some loss of accuracy, so finding the right balance between speed and quality is crucial.
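As a minimal sketch of how block quantization works, the code below stores each block of 32 weights as one float scale plus 32 small integers in [-127, 127]. It is written in the spirit of llama.cpp's Q8_0 format (blocks of 32 weights, one 16-bit scale each), but it is a simplified pure-Python illustration, not llama.cpp's implementation; the function names are hypothetical and the scale is kept as a Python float here.

```python
import random

BLOCK_SIZE = 32  # weights per block; llama.cpp's Q8_0 also groups 32 weights per scale


def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    """Symmetric 8-bit quantization: one scale plus one int in [-127, 127] per weight."""
    amax = max(abs(x) for x in block)
    scale = amax / 127 if amax > 0 else 0.0
    q = [round(x / scale) if scale else 0 for x in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate weights by multiplying each integer by the block scale."""
    return [scale * v for v in q]


def quantize(weights: list[float]) -> list[tuple[float, list[int]]]:
    """Quantize a flat weight list block by block."""
    return [quantize_block(weights[i:i + BLOCK_SIZE]) for i in range(0, len(weights), BLOCK_SIZE)]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4 * BLOCK_SIZE)]
blocks = quantize(weights)
restored = [x for scale, q in blocks for x in dequantize_block(scale, q)]
max_err = max(abs(a - b) for a, b in zip(weights, restored))

# 32 one-byte integers + one 16-bit scale per block -> 8.5 bits per weight (vs 16 for F16).
bits_per_weight = (BLOCK_SIZE * 8 + 16) / BLOCK_SIZE
print(f"bits/weight: {bits_per_weight}, max abs error: {max_err:.6f}")
```

Each restored weight is off by at most half of its block's scale, which is the accuracy cost; in exchange, the weights take roughly half the bytes of F16, which is what speeds up bandwidth-bound generation.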

3. What are the best resources for benchmarking LLM performance?

You can find useful benchmarks and performance data from:

- Hugging Face: A popular platform for sharing and experimenting with LLMs; model cards and community discussions sometimes include performance figures for different devices.

- Papers with Code: A repository of papers and code for various AI models, including LLMs, with information on hardware and performance.

- GitHub: Explore repositories for LLMs and their implementations, where you can find discussions and benchmarks contributed by the community.

Keywords

LLMs, Apple M3 Max, NVIDIA L40S, Token Generation Speed, Benchmark Analysis, Performance Comparison, Quantization, GPU Acceleration, Unified Memory, AI, Deep Learning, Inference, Performance Optimization, Hardware Selection, Hugging Face, Papers with Code, GitHub.