Apple M3 Max 400gb 40cores vs. NVIDIA A100 SXM 80GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, with new models and applications emerging daily. One key concern for developers and researchers is how fast a model runs, particularly for text generation. This article compares two powerful devices: the Apple M3 Max (40-core GPU, 400 GB/s memory bandwidth) and the NVIDIA A100 SXM 80GB. We'll look at their throughput across several Llama models and quantization formats, noting where the available data permits a direct comparison and where it does not.

Think of token generation speed as the number of tokens (roughly, words or word fragments) a device can produce per second. Just as a faster reader gets through more words per minute, a device with a higher token generation speed returns your LLM's output sooner.

Apple M3 Max 400GB 40Cores: Token Generation Speed

The Apple M3 Max tested here has a 40-core GPU and 400 GB/s of memory bandwidth; the "400GB" in the device label refers to that bandwidth, not to memory capacity. Let's examine its throughput for various LLMs:

Llama 2 7B: M3 Max vs. A100 SXM 80GB

| Setting | Apple M3 Max (Tokens/second) | NVIDIA A100 SXM 80GB (Tokens/second) |
|---|---|---|
| Llama 2 7B F16 Processing | 779.17 | N/A |
| Llama 2 7B F16 Generation | 25.09 | N/A |
| Llama 2 7B Q8_0 Processing | 757.64 | N/A |
| Llama 2 7B Q8_0 Generation | 42.75 | N/A |
| Llama 2 7B Q4_0 Processing | 759.7 | N/A |
| Llama 2 7B Q4_0 Generation | 66.31 | N/A |

Observations:

- The M3 Max processes prompts at over 750 tokens per second for Llama 2 7B in F16 (half-precision floating point) as well as in the quantized Q8_0 and Q4_0 formats.

- Generation speed rises as precision drops: from 25.09 tokens per second in F16 to 42.75 in Q8_0 and 66.31 in Q4_0.

- We lack A100 data for this model, so no direct comparison between the two devices is possible here.
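The effect of quantization on generation speed can be read straight off the M3 Max column. Here is a minimal Python sketch that uses only the Llama 2 7B generation figures from the table above; the function name `speedup_over` is purely illustrative.

```python
# Generation throughput (tokens/second) for Llama 2 7B on the M3 Max,
# copied from the table above.
generation_tps = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def speedup_over(baseline: str, rates: dict) -> dict:
    """Return each format's throughput relative to the baseline format."""
    base = rates[baseline]
    return {fmt: tps / base for fmt, tps in rates.items()}


speedups = speedup_over("F16", generation_tps)
for fmt, factor in speedups.items():
    print(f"{fmt}: {factor:.2f}x the F16 generation speed")
```

On these numbers, Q8_0 comes out at roughly 1.7 times and Q4_0 at roughly 2.6 times the F16 generation speed.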

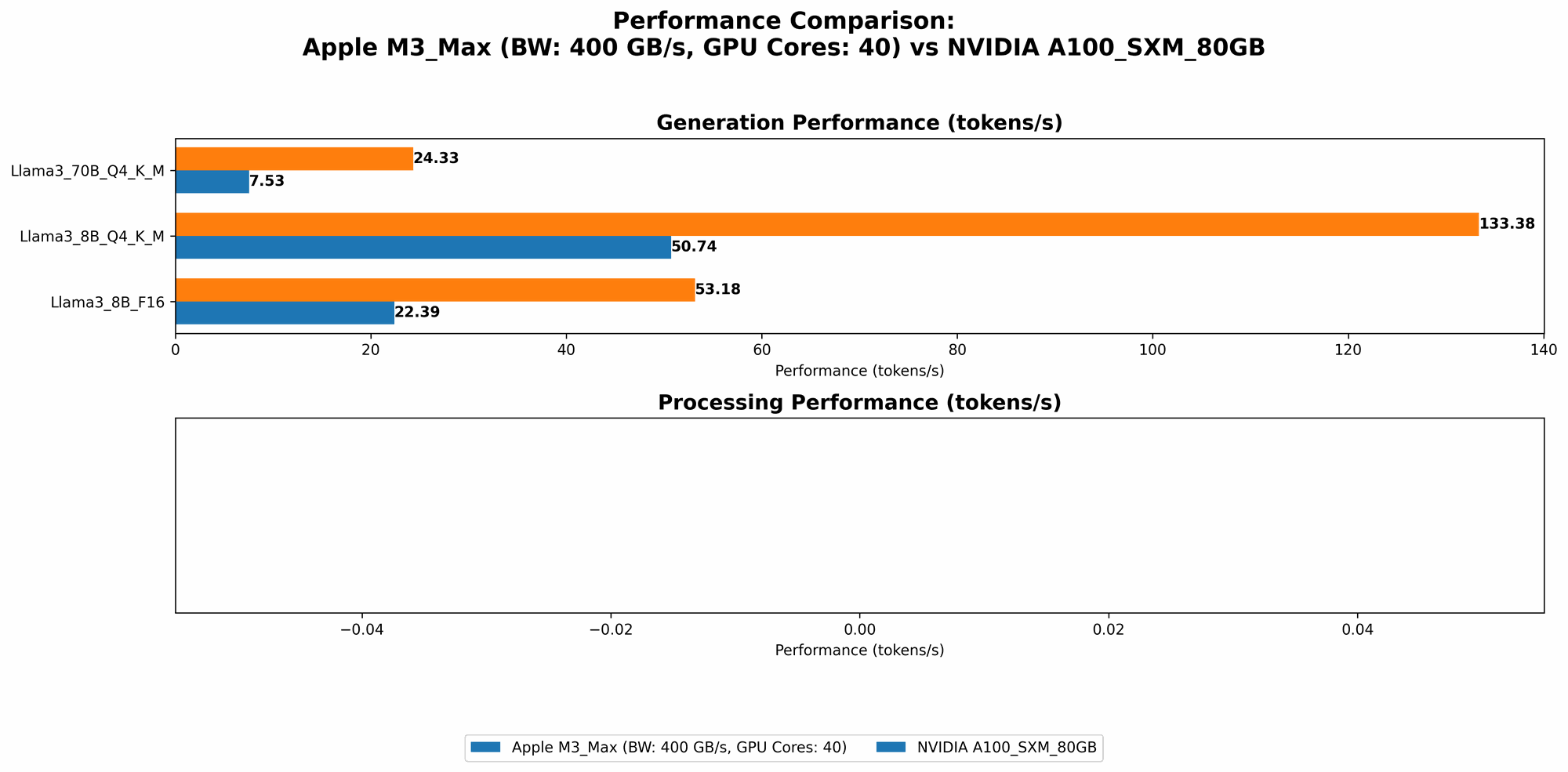

Llama 3 8B: M3 Max vs. A100 SXM 80GB

| Setting | Apple M3 Max (Tokens/second) | NVIDIA A100 SXM 80GB (Tokens/second) |
|---|---|---|
| Llama 3 8B Q4KM Processing | 678.04 | N/A |
| Llama 3 8B Q4KM Generation | 50.74 | 133.38 |
| Llama 3 8B F16 Processing | 751.49 | N/A |
| Llama 3 8B F16 Generation | 22.39 | 53.18 |

Observations:

- The M3 Max processes prompts at 678.04 tokens per second for Llama 3 8B Q4KM and 751.49 for F16. We have no A100 processing data for this model, so the two devices cannot be compared on that metric.

- The A100 SXM 80GB leads clearly in token generation: 133.38 versus 50.74 tokens per second for the Q4KM model (about 2.6 times faster) and 53.18 versus 22.39 for the F16 model (about 2.4 times faster).

- A likely contributor is memory bandwidth. Token generation is typically limited by how fast the model weights can be read from memory, and the A100 SXM 80GB offers roughly 2 TB/s against the M3 Max's 400 GB/s.

Llama 3 70B: M3 Max vs. A100 SXM 80GB

| Setting | Apple M3 Max (Tokens/second) | NVIDIA A100 SXM 80GB (Tokens/second) |
|---|---|---|
| Llama 3 70B Q4KM Processing | 62.88 | N/A |
| Llama 3 70B Q4KM Generation | 7.53 | 24.33 |
| Llama 3 70B F16 Processing | N/A | N/A |
| Llama 3 70B F16 Generation | N/A | N/A |

Observations:

- The M3 Max's throughput drops sharply for the larger Llama 3 70B model: 62.88 tokens per second in processing and 7.53 in generation for Q4KM. No A100 processing data is available, so processing speed cannot be compared.

- The A100 generates 24.33 tokens per second for the Q4KM model, about 3.2 times the M3 Max's rate.

- The A100 SXM 80GB is a data-center GPU built for high-performance computing tasks such as deep learning inference, which is consistent with its lead on a large and demanding model like Llama 3 70B.

- F16 data for Llama 3 70B is missing for both the A100 and the M3 Max, so that configuration cannot be compared.
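The generation figures that exist for both devices can be reduced to a single ratio per configuration. The following Python sketch does this with the numbers from the two Llama 3 tables in this article; the dictionary layout and the function name `a100_advantage` are illustrative choices, not part of any benchmark tool.

```python
# Generation throughput (tokens/second) from the Llama 3 tables in this article.
# Each value is a pair: (Apple M3 Max, NVIDIA A100 SXM 80GB).
generation_tps = {
    "Llama 3 8B Q4KM": (50.74, 133.38),
    "Llama 3 8B F16": (22.39, 53.18),
    "Llama 3 70B Q4KM": (7.53, 24.33),
}


def a100_advantage(rates: dict) -> dict:
    """Return the A100's generation speed as a multiple of the M3 Max's."""
    return {name: a100 / m3 for name, (m3, a100) in rates.items()}


ratios = a100_advantage(generation_tps)
for name, ratio in ratios.items():
    print(f"{name}: A100 generates {ratio:.2f}x as many tokens per second")
```

The ratio is largest for the 70B model, which suggests the gap widens with model size, although three data points are too few to establish a trend.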

Performance Analysis: Apple M3 Max 400GB 40Cores vs. NVIDIA A100 SXM 80GB

Strengths and Weaknesses

Apple M3 Max:

- Strengths:

  - Prompt processing: It delivers several hundred tokens per second on smaller models like Llama 2 7B and Llama 3 8B. Without A100 processing data, though, we cannot say how it ranks against the A100 on this metric.

  - Memory capacity: The M3 Max uses unified memory shared between CPU and GPU, configurable up to 128 GB, which can hold models too large for a single 80 GB GPU. (The 400 GB/s in the device label is memory bandwidth, not capacity.)

- Weaknesses:

  - Token generation: The M3 Max lags behind the A100 in token generation in every configuration where both were measured, most of all on Llama 3 70B.

  - Specialized workloads: It is a laptop and desktop system-on-chip rather than a dedicated deep learning accelerator, and its memory bandwidth is well below that of data-center GPUs.

NVIDIA A100 SXM 80GB:

- Strengths:

  - Token generation speed: The A100 SXM 80GB generates tokens 2.4 to 3.2 times faster than the M3 Max in the measured configurations, with the largest lead on Llama 3 70B. This is likely due to its much higher memory bandwidth and an architecture geared towards deep learning workloads.

  - Deep learning inference: It is designed for sustained, intensive inference, and on the generation data available it outperforms the M3 Max.

- Weaknesses:

  - Memory capacity: Its 80 GB of memory limits the models that fit on a single device. Llama 3 70B in F16, for example, needs roughly 140 GB for its weights alone.

  - Cost: The A100 is typically considerably more expensive than an M3 Max system, which matters for developers on a budget.
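Whether a model fits in a given amount of memory can be estimated from its parameter count and the bits stored per weight. This Python sketch is a back-of-the-envelope calculation under stated assumptions: it uses nominal parameter counts (8 and 70 billion), counts weights only (no KV cache or activations), and treats "4-bit" as exactly 4 bits per weight, whereas real quantized formats add some overhead for scales.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


A100_MEMORY_GB = 80  # memory capacity of the A100 SXM 80GB

# (label, nominal parameters in billions, assumed bits per weight)
configs = [
    ("Llama 3 8B F16", 8, 16),
    ("Llama 3 70B F16", 70, 16),
    ("Llama 3 70B 4-bit", 70, 4),
]

for label, params, bits in configs:
    size = weight_memory_gb(params, bits)
    verdict = "fits in" if size <= A100_MEMORY_GB else "exceeds"
    print(f"{label}: about {size:.0f} GB of weights, {verdict} {A100_MEMORY_GB} GB")
```

Under these assumptions, Llama 3 70B in F16 exceeds a single A100's memory, while a 4-bit version fits with room to spare.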

Practical Recommendations

For developers working with smaller LLMs:

- The M3 Max is a solid choice for local work. With 4-bit quantization it generates roughly 50 to 66 tokens per second on Llama 3 8B and Llama 2 7B, and its unified memory leaves room for the model and its context.

For researchers and developers working with larger LLMs:

- The A100 SXM 80GB is a strong contender if token generation speed is a priority. It is designed for high-performance computing environments and generated about 3.2 times as many tokens per second as the M3 Max on Llama 3 70B Q4KM.

Key Takeaway: Both the Apple M3 Max and NVIDIA A100 SXM 80GB are powerful devices, each with its own strengths and weaknesses. The best choice ultimately depends on the specific LLM and the application's needs.

Conclusion

Wherever both devices were measured, the A100 SXM 80GB generated tokens 2.4 to 3.2 times faster than the M3 Max, with the widest gap on Llama 3 70B. Prompt processing could not be compared because no A100 figures were available. The M3 Max posts solid numbers on smaller models and can be configured with more memory than the A100's 80 GB, while the A100 is limited to what fits in that 80 GB. If you mainly run smaller models locally, the M3 Max is a sensible choice. If your focus is large models and rapid token generation, the A100 SXM 80GB is a strong contender.

FAQ

Q: What are LLMs?

A: LLMs, or Large Language Models, are powerful AI systems trained on massive amounts of text data. They can understand, generate, and manipulate text in various ways, including translation, summarization, and creative writing.

Q: What is token generation speed?

A: Token generation speed is the number of tokens (individual units of text, often words or word fragments) that a device can produce per second while writing its output. A higher token generation speed means quicker results.

Q: What is quantization?

A: Quantization is a technique for reducing the size of an LLM by representing its weights with lower-precision numbers, for example 8 or 4 bits instead of 16. This cuts memory usage and usually speeds up token generation, at some cost in accuracy.
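To make the idea concrete, here is a minimal Python sketch of symmetric 8-bit quantization applied to one small block of weights. It is a simplified illustration of the general technique, not the exact storage layout of the Q8_0, Q4_0, or Q4KM formats benchmarked above, and the weight values are made up for the example.

```python
def quantize_block(weights: list) -> tuple:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return scale, quantized


def dequantize_block(scale: float, quantized: list) -> list:
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.50, 0.33, 0.01, -0.27, 0.44, -0.08, 0.19]

scale, quantized = quantize_block(weights)
restored = dequantize_block(scale, quantized)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("scale:", scale)
print("quantized:", quantized)
print("largest round-trip error:", max_error)
```

Each weight is stored as a small integer plus a shared scale, so the block takes about half the space of 16-bit floats, and the round-trip error stays within half a quantization step.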

Q: What is the difference between processing and generation?

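A: Processing (also called prompt processing) measures how quickly the LLM reads and encodes the input tokens, while generation measures how quickly it produces output tokens one after another. Processing handles many tokens in parallel, which is why its tokens-per-second figures are much higher than the generation figures.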
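The two rates combine into a rough end-to-end estimate: time to read the prompt plus time to write the answer. This Python sketch uses the M3 Max figures for Llama 3 8B Q4KM from this article; the prompt and output lengths are hypothetical, and the function `estimate_latency_seconds` is an illustrative helper. Real latency also depends on context length, batching, and software overhead.

```python
def estimate_latency_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> tuple:
    """Return (prompt processing time, generation time) in seconds."""
    processing_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return processing_time, generation_time


# M3 Max, Llama 3 8B Q4KM (tokens/second, from the table in this article).
PROCESSING_TPS = 678.04
GENERATION_TPS = 50.74

# Hypothetical request: a 1024-token prompt and a 256-token answer.
processing_time, generation_time = estimate_latency_seconds(
    1024, 256, PROCESSING_TPS, GENERATION_TPS
)
total_time = processing_time + generation_time

print(f"prompt processing: {processing_time:.2f} s")
print(f"generation: {generation_time:.2f} s")
print(f"total: {total_time:.2f} s")
```

Even though this prompt is four times longer than the answer, generation accounts for most of the estimated time, which is why generation speed tends to dominate the perceived responsiveness of a chat-style workload.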

Q: Why are some combinations of models and devices missing data?

A: The data is based on publicly available benchmarks and may not cover all possible combinations. Some combinations may not have been tested or results may not be readily available.

Keywords

Large language models, LLM, Apple M3 Max, NVIDIA A100 SXM 80GB, token generation speed, processing speed, inference speed, Llama 2, Llama 3, quantization, F16, Q8, Q4, performance comparison, benchmark analysis, deep learning, AI hardware, GPU, CPU, memory capacity, cost analysis.