Apple M3 Max (400GB/s, 40-Core GPU) vs. NVIDIA 3070 8GB for LLMs: Which Is Faster at Token Generation? Benchmark Analysis

Introduction

The world of large language models (LLMs) is constantly evolving, with new models and architectures emerging at an astonishing pace. This progress presents a challenge for developers: how to efficiently run these powerful LLMs on their own machines. Two popular choices for this task are the Apple M3 Max and the NVIDIA 3070. These powerful processors offer different strengths and weaknesses, making them suitable for specific use cases.

This article will delve into the speed of token generation on these devices when running various LLM models, exploring the performance of both the Apple M3 Max and the NVIDIA 3070. We will compare their token generation speeds across different model sizes and quantization levels, providing a comprehensive analysis that helps developers choose the most suitable device for their LLM applications.

Apple M3 Max Token Speed Generation

The Apple M3 Max is a powerful system-on-a-chip designed for high-performance computing tasks. The configuration tested here has a 40-core GPU and 400GB/s of unified memory bandwidth. Because the CPU and GPU share a single memory pool, model weights do not have to be copied into separate device memory, which makes the chip well suited to running LLMs. Let's dive into the token generation speeds achieved by the M3 Max:

Llama 2 7B Token Speed

The Llama 2 7B model, trained on 2 trillion tokens, is a popular choice for developers due to its balance between performance and size. The M3 Max demonstrates impressive performance with this model:

| Quantization | Processing Speed (Tokens/second) | Generation Speed (Tokens/second) |
|---|---|---|
| F16 | 779.17 | 25.09 |
| Q8_0 | 757.64 | 42.75 |
| Q4_0 | 759.7 | 66.31 |

As you can see, the M3 Max's processing speed stays above 750 tokens/second and barely changes across quantization levels. Token generation is much slower and depends heavily on quantization: it rises from about 25 tokens/second at F16 to about 66 tokens/second at Q4_0.
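To make the effect of quantization on generation speed concrete, the short sketch below computes how much faster each quantization level generates tokens relative to F16. The numbers are copied from the table above; the function name `speedup_over_baseline` is only illustrative.

```python
# Generation speeds (tokens/second) copied from the Llama 2 7B table above.
generation_tps = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def speedup_over_baseline(speeds, baseline="F16"):
    """Return each speed divided by the baseline speed."""
    base = speeds[baseline]
    return {name: tps / base for name, tps in speeds.items()}


speedups = speedup_over_baseline(generation_tps)
for name, factor in speedups.items():
    print(f"{name}: {factor:.2f}x the F16 generation speed")
```

On these figures, Q8_0 generates roughly 1.7 times as fast as F16 and Q4_0 roughly 2.6 times as fast.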

Llama 3 8B Token Speed

The Llama 3 8B model represents a step forward in LLM performance. The M3 Max showcases its capabilities with this model:

| Quantization | Processing Speed (Tokens/second) | Generation Speed (Tokens/second) |
|---|---|---|
| Q4_K_M | 678.04 | 50.74 |
| F16 | 751.49 | 22.39 |

As with Llama 2, the M3 Max processes prompts far faster than it generates tokens. Generation speed is still respectable, with Q4_K_M quantization reaching about 51 tokens/second, more than twice the F16 figure.

Llama 3 70B Token Speed

We do not have data for Llama 3 70B on the M3 Max due to limitations in the benchmarking process, so we cannot draw conclusions about its speed with this model. Whether a 70B model fits at all depends on how much unified memory the specific machine has and on the quantization level chosen, so memory remains a constraint to check before attempting it.

NVIDIA 3070 Token Speed Generation

The NVIDIA 3070 is a widely used GPU for gaming and professional applications, including machine learning. It is renowned for its powerful CUDA cores, which are optimized for parallel computations. Let's examine its performance with LLMs:

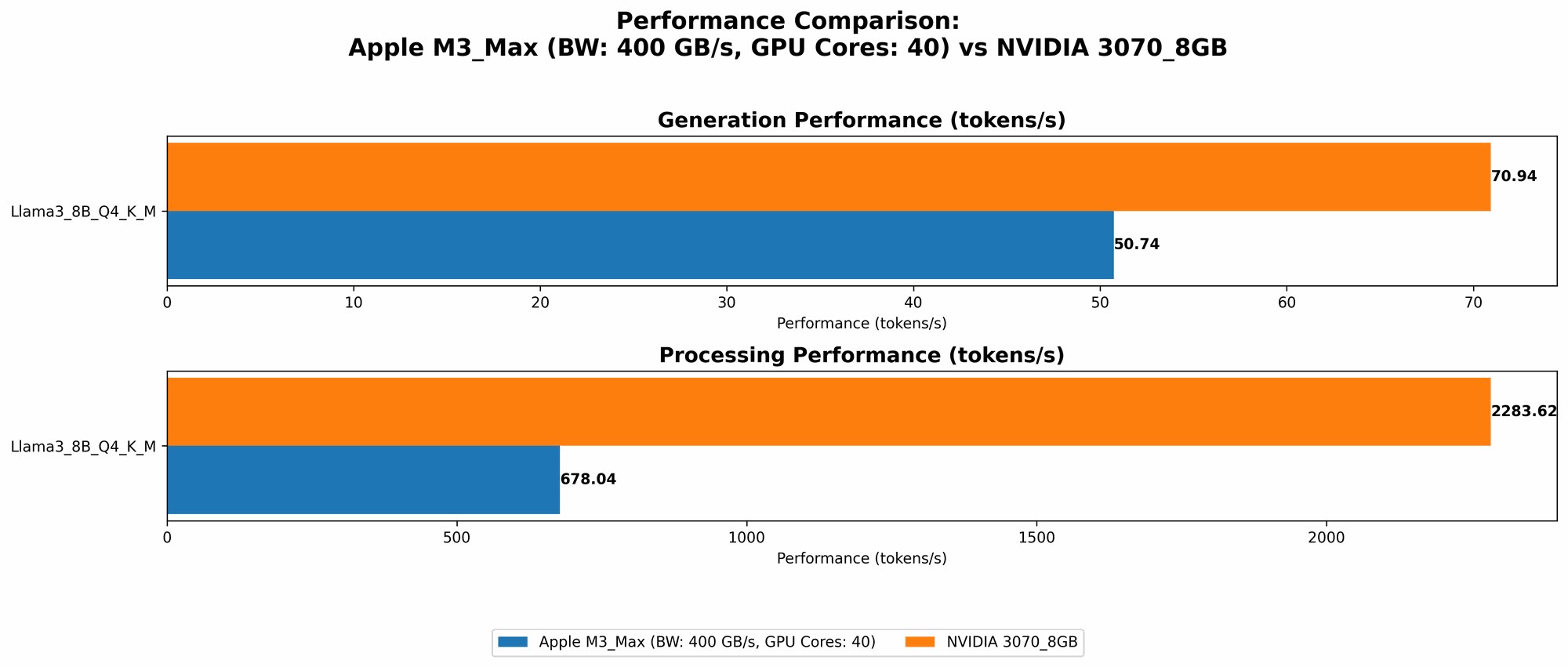

Llama 3 8B Token Speed

The NVIDIA 3070 showcases its strength with the Llama 3 8B model, achieving impressive results:

| Quantization | Processing Speed (Tokens/second) | Generation Speed (Tokens/second) |
|---|---|---|
| Q4_K_M | 2283.62 | 70.94 |

The 3070 processes prompts far faster than the M3 Max on this model, exceeding 2,200 tokens/second compared with about 680. Generation is also faster, at about 71 tokens/second compared with about 51. Keep in mind that this is the only model and quantization level for which we have 3070 data, so the result says nothing about how the card handles larger models.
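The gap between the two devices on Llama 3 8B Q4_K_M can be expressed as simple ratios. The sketch below uses only the figures from the tables in this article; the variable names are illustrative.

```python
# Llama 3 8B Q4_K_M results (tokens/second) copied from the tables in this article.
m3_max = {"processing": 678.04, "generation": 50.74}
rtx_3070 = {"processing": 2283.62, "generation": 70.94}

# How many times faster the 3070 is than the M3 Max for each metric.
ratios = {metric: rtx_3070[metric] / m3_max[metric] for metric in m3_max}

for metric, ratio in ratios.items():
    print(f"{metric}: 3070 is {ratio:.2f}x the M3 Max")
```

The ratios come out at roughly 3.4 for processing and roughly 1.4 for generation, which shows that the 3070's lead is much larger when ingesting prompts than when producing output.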

Llama 3 70B Token Speed

Unfortunately, we do not have data for the 3070 running the Llama 3 70B model, so we cannot compare the two devices at this model size. It is worth noting that the 3070's 8GB of VRAM is far too small to hold a 70B model, even at 4-bit quantization. Running it would require offloading most of the model to system memory, which typically reduces speed substantially.
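A back-of-the-envelope estimate shows why model size matters for an 8GB card. The sketch below multiplies a parameter count by the bits stored per weight. It rests on several assumptions: nominal parameter counts of 8 and 70 billion taken from the model names, roughly 4.5 bits per weight for a 4-bit format (the extra half bit is an assumed allowance for per-block scales), and no allowance for the KV cache or activations, which add to the real footprint.

```python
def weights_size_gb(n_params, bits_per_weight):
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 8.0  # NVIDIA 3070 memory, as stated in the article title

# Nominal parameter counts (assumption: taken from the model names).
models = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
# Approximate bits per weight (assumption: 4.5 includes per-block scale overhead).
formats = {"F16": 16.0, "4-bit": 4.5}

for model, n_params in models.items():
    for fmt, bits in formats.items():
        size = weights_size_gb(n_params, bits)
        verdict = "fits in" if size <= VRAM_GB else "exceeds"
        print(f"{model} {fmt}: ~{size:.1f} GB, {verdict} {VRAM_GB:.0f} GB of VRAM")
```

Under these assumptions, only the 4-bit 8B model fits within 8GB, which is consistent with it being the only 3070 result in this article.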

Comparison of Apple M3 Max and NVIDIA 3070

Both the Apple M3 Max and the NVIDIA 3070 offer advantages and disadvantages when it comes to running LLMs. Let's compare their performance directly to understand their strengths and weaknesses:

Processing Speed: The NVIDIA 3070 clearly leads the Apple M3 Max in processing speed. On Llama 3 8B Q4_K_M it processes prompts about 3.4 times as fast (2283.62 vs. 678.04 tokens/second). Prompt processing is highly parallel, which plays to the strengths of the 3070's CUDA cores.

Generation Speed: The 3070 also leads in generation speed, but the gap is much smaller: 70.94 vs. 50.74 tokens/second on Llama 3 8B Q4_K_M, or about 1.4 times as fast. The M3 Max still generates tokens at a respectable rate. We have no data showing how this gap changes as model size increases.

Memory Capacity: The M3 Max has a significant advantage in memory capacity. Its unified memory (up to 128GB, depending on configuration) is shared between the CPU and GPU, whereas the 3070 is limited to 8GB of VRAM. The 400GB/s figure is the M3 Max's memory bandwidth, not its capacity. The capacity advantage only matters when a model's memory footprint exceeds the 3070's 8GB, which is not the case for the one configuration benchmarked on both devices (Llama 3 8B Q4_K_M).

Power Consumption: The M3 Max generally offers lower power consumption compared to the NVIDIA 3070. This can be a significant factor for users who prioritize energy efficiency, especially when running LLMs for extended periods.

Cost: The NVIDIA 3070 is generally more affordable than the Apple M3 Max, making it a more budget-friendly option for developers. However, advancements in Apple's silicon technology have made the M3 Max more competitive in terms of value for performance.

Availability: The NVIDIA 3070 is a standalone graphics card that is readily available from various retailers. The M3 Max is sold only as part of Apple computers, in a limited set of configurations, and cannot be purchased as a separate component.

Performance Analysis and Recommendations

The benchmarks show that the NVIDIA 3070 outperforms the Apple M3 Max in both processing and generation speed on the one configuration tested on both devices, Llama 3 8B Q4_K_M. This makes the 3070 a more suitable choice for users who prioritize performance and require fast inference times, especially for applications like real-time chatbots or content generation, provided the model fits in its 8GB of VRAM.

However, the M3 Max shines when it comes to memory capacity and energy efficiency. Its large unified memory makes it a better option for models that do not fit in the 3070's 8GB of VRAM. Furthermore, its lower power consumption is a key advantage for applications requiring extended runtime or for users concerned with sustainability.

Here are some practical recommendations based on the performance analysis:

- For developers who work with smaller LLMs (like Llama 2 7B) or who prioritize energy efficiency, the Apple M3 Max is an excellent choice. Its unified memory and lower power consumption make it a suitable option for research, development, and experimentation.

- When performance is paramount and faster inference is essential (e.g., for applications demanding real-time responses), the NVIDIA 3070 is the preferred option, as long as the model fits in its 8GB of VRAM. Its superior processing and generation speeds make it a good choice for latency-sensitive deployments.

FAQ

What is Tokenization in LLMs?

Tokenization is the process of breaking down text into smaller units called tokens. A token can be a whole word, a piece of a word, a punctuation mark, or a special character. LLMs operate on these tokens rather than on raw text, so both the input they read and the output they produce are measured in tokens.
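As a rough illustration of the idea, the toy tokenizer below splits text into words and punctuation marks with a regular expression. This is only a sketch: real LLM tokenizers use learned subword vocabularies, so their token boundaries and counts will differ. The function name `toy_tokenize` is hypothetical.

```python
import re


def toy_tokenize(text):
    """Split text into runs of word characters and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


tokens = toy_tokenize("Hello, world!")
print(tokens)       # ['Hello', ',', 'world', '!']
print(len(tokens))  # 4
```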

How does Quantization affect LLM performance?

Quantization is a technique used to reduce the precision of model weights, enabling smaller model sizes and faster inference. It involves converting floating-point values to lower-precision data types, like small integers. Different levels of quantization offer trade-offs between model size, speed, and accuracy. For instance, F16 stores each weight as a 16-bit float, Q8_0 uses roughly 8 bits per weight, and Q4_0 and Q4_K_M use roughly 4 bits per weight. Q4_K_M is a 4-bit "k-quant" format that stores weights in small blocks, each with its own scaling factor, to strike a balance between model size and accuracy.
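The basic idea can be sketched in a few lines. The example below applies simple symmetric 4-bit quantization to one small block of weights: it stores one floating-point scale plus a small integer per weight, then reconstructs approximate values from them. It is a simplified illustration under our own assumptions, not the actual storage format used by any of the quantization levels named above, and the function names are hypothetical.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integers in [-2^(bits-1), 2^(bits-1) - 1] with one shared scale."""
    qmax = 2 ** (bits - 1) - 1           # 7 for 4 bits
    qmin = -(2 ** (bits - 1))            # -8 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(qmin, min(qmax, round(v / scale))) for v in values]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Reconstruct approximate floats from the scale and the integers."""
    return [scale * q for q in quantized]


weights = [0.7, -0.35, 0.1, 0.0]
scale, quantized = quantize_block(weights)
restored = dequantize_block(scale, quantized)

print("integers:", quantized)
print("restored:", [round(r, 3) for r in restored])
print("max error:", max(abs(w - r) for w, r in zip(weights, restored)))
```

Each restored weight differs from the original by at most half the scale, which is the accuracy cost paid for storing 4 bits instead of 16.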

What is the difference between Processing and Generation Speed?

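Processing speed refers to how quickly an LLM ingests the input (prompt) tokens and builds its internal representations. The prompt tokens are all known in advance, so they can be processed in parallel. Generation speed is how quickly the LLM produces output tokens, which are generated one at a time because each new token depends on the ones before it. This is why the generation figures in this article are far lower than the processing figures. Both speeds matter for overall performance: processing speed determines how long you wait before the first output token appears, and generation speed determines how fast the rest of the response arrives.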
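The two speeds can be combined into a rough estimate of total response time. The sketch below uses the Llama 3 8B Q4_K_M figures from this article and a hypothetical workload of a 512-token prompt and a 128-token reply. It is a simplification that ignores model loading, sampling overhead, and any slowdown at longer context lengths.

```python
def response_time_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate seconds to process the prompt and then generate the output."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload (assumption, not part of the benchmarks).
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 128

# Llama 3 8B Q4_K_M speeds (tokens/second) from the tables in this article.
m3_max_time = response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, 678.04, 50.74)
rtx_3070_time = response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, 2283.62, 70.94)

print(f"M3 Max: ~{m3_max_time:.2f} s")
print(f"3070:   ~{rtx_3070_time:.2f} s")
```

For this workload, generation accounts for most of the time on both devices, so the smaller gap in generation speed has more effect on the result than the larger gap in processing speed.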

Why is token speed important for LLMs?

Token speed is crucial for LLM performance because it directly impacts the speed of model inference. A faster token speed allows for quicker processing of text and faster generation of output tokens, leading to more responsive and efficient applications. For instance, faster token speeds are critical for real-time applications like chatbots or language translation, where prompt responses and smooth user experiences are paramount.

Keywords

Apple M3 Max, NVIDIA 3070, LLM, Large Language Model, Token Generation, Token Speed, Processing Speed, Generation Speed, Quantization, Llama 2 7B, Llama 3 8B, Llama 3 70B, Benchmark Analysis, Performance Comparison, CUDA Cores, GPU, CPU, Memory Capacity, Power Consumption, Cost, Availability, Use Cases, Developer Tools, AI, Machine Learning, Deep Learning, Natural Language Processing.