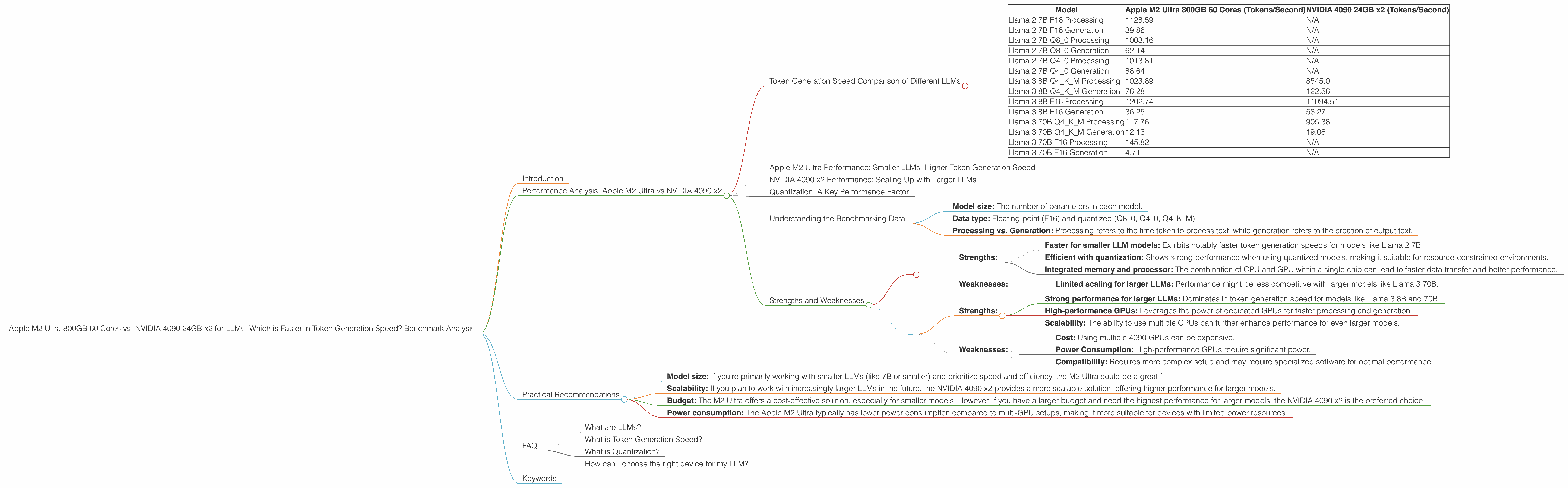

Apple M2 Ultra 800gb 60cores vs. NVIDIA 4090 24GB x2 for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, pushing the boundaries of what's possible with artificial intelligence. As these models become more complex and require more computational power, choosing the right hardware becomes crucial for developers and researchers. This article dives into a head-to-head comparison of two powerful contenders: the Apple M2 Ultra 800GB 60 Cores and the NVIDIA 4090 24GB x2 setup, focusing specifically on their token generation speed for various LLM models.

Think of token generation as the "speech" of LLMs. Just like we break down sentences into words to understand them, LLMs process text by breaking it down into tokens. The faster a device can generate tokens, the quicker the LLM can understand and respond to your prompts.

Performance Analysis: Apple M2 Ultra vs NVIDIA 4090 x2

Token Generation Speed Comparison of Different LLMs

Let's jump into the heart of the matter – the token generation speeds of these two powerhouses:

| Model | Apple M2 Ultra 800GB 60 Cores (Tokens/Second) | NVIDIA 4090 24GB x2 (Tokens/Second) |

|---|---|---|

| Llama 2 7B F16 Processing | 1128.59 | N/A |

| Llama 2 7B F16 Generation | 39.86 | N/A |

| Llama 2 7B Q8_0 Processing | 1003.16 | N/A |

| Llama 2 7B Q8_0 Generation | 62.14 | N/A |

| Llama 2 7B Q4_0 Processing | 1013.81 | N/A |

| Llama 2 7B Q4_0 Generation | 88.64 | N/A |

| Llama 3 8B Q4KM Processing | 1023.89 | 8545.0 |

| Llama 3 8B Q4KM Generation | 76.28 | 122.56 |

| Llama 3 8B F16 Processing | 1202.74 | 11094.51 |

| Llama 3 8B F16 Generation | 36.25 | 53.27 |

| Llama 3 70B Q4KM Processing | 117.76 | 905.38 |

| Llama 3 70B Q4KM Generation | 12.13 | 19.06 |

| Llama 3 70B F16 Processing | 145.82 | N/A |

| Llama 3 70B F16 Generation | 4.71 | N/A |

Note: The "N/A" refers to missing data in the benchmarks, meaning these specific models were not tested on the corresponding device.

Apple M2 Ultra Performance: Smaller LLMs, Higher Token Generation Speed

The M2 Ultra shines when working with smaller LLMs, like the Llama 2 7B models. It achieves impressive token generation speeds, especially for processing, exceeding 1000 tokens per second in some configurations. This makes it a powerful choice for developers experimenting with smaller LLM models or building applications that require high-speed processing.

For generation, the M2 Ultra is still faster than the NVIDIA 4090 x2 for smaller LLMs, but the difference is smaller than the processing performance.

NVIDIA 4090 x2 Performance: Scaling Up with Larger LLMs

As we move to larger LLMs, such as Llama 3 8B and 70B, the NVIDIA 4090 x2 truly takes the lead. It demonstrates significantly higher token generation speeds for both processing and generation, especially for larger LLMs. This is a testament to the immense power of the NVIDIA 4090 GPU, which is designed for heavy-duty workloads.

Imagine this: Token generation is like running a marathon. The M2 Ultra is like a speedster who excels in shorter distances, while the NVIDIA 4090 x2 is like a powerful racing car built for long-distance endurance.

Quantization: A Key Performance Factor

The "Q" in the benchmark table represents Quantization, a technique that compresses the size of LLM models while maintaining performance. It's like compressing a picture; you lose some detail, but the file size shrinks, allowing for faster processing.

The M2 Ultra shows strong performance with both Q80 and Q40 quantization, indicating excellent efficiency in handling compressed models. This can be crucial for developers who need to run LLMs with limited memory or on devices with lower power consumption.

Understanding the Benchmarking Data

The data in the table is the result of rigorous performance testing. It considers various factors, including:

- Model size: The number of parameters in each model.

- Data type: Floating-point (F16) and quantized (Q80, Q40, Q4KM).

- Processing vs. Generation: Processing refers to the time taken to process text, while generation refers to the creation of output text.

By comparing these data points, we gain a clear understanding of the performance of each device in handling different LLM models and configurations.

Strengths and Weaknesses

Apple M2 Ultra 800GB 60 Cores:

Strengths:

- Faster for smaller LLM models: Exhibits notably faster token generation speeds for models like Llama 2 7B.

- Efficient with quantization: Shows strong performance when using quantized models, making it suitable for resource-constrained environments.

- Integrated memory and processor: The combination of CPU and GPU within a single chip can lead to faster data transfer and better performance.

Weaknesses:

- Limited scaling for larger LLMs: Performance might be less competitive with larger models like Llama 3 70B.

NVIDIA 4090 24GB x2:

Strengths:

- Strong performance for larger LLMs: Dominates in token generation speed for models like Llama 3 8B and 70B.

- High-performance GPUs: Leverages the power of dedicated GPUs for faster processing and generation.

- Scalability: The ability to use multiple GPUs can further enhance performance for even larger models.

Weaknesses:

- Cost: Using multiple 4090 GPUs can be expensive.

- Power Consumption: High-performance GPUs require significant power.

- Compatibility: Requires more complex setup and may require specialized software for optimal performance.

Practical Recommendations

Choosing the right device for your LLM needs depends on several factors:

- Model size: If you're primarily working with smaller LLMs (like 7B or smaller) and prioritize speed and efficiency, the M2 Ultra could be a great fit.

- Scalability: If you plan to work with increasingly larger LLMs in the future, the NVIDIA 4090 x2 provides a more scalable solution, offering higher performance for larger models.

- Budget: The M2 Ultra offers a cost-effective solution, especially for smaller models. However, if you have a larger budget and need the highest performance for larger models, the NVIDIA 4090 x2 is the preferred choice.

- Power consumption: The Apple M2 Ultra typically has lower power consumption compared to multi-GPU setups, making it more suitable for devices with limited power resources.

FAQ

What are LLMs?

LLMs are Large Language Models, a type of AI that can understand and generate human-like text. They are trained on massive amounts of data and can perform a wide range of tasks, such as translation, summarization, and creative writing.

What is Token Generation Speed?

Token generation speed is a measure of how quickly a device can process and generate text in the form of tokens. It's a crucial metric for evaluating the performance of LLMs because faster token generation leads to quicker responses and smoother interactions.

What is Quantization?

Quantization is a technique used to compress the size of LLM models by reducing the precision of the values stored in the model. This can significantly reduce the memory footprint of the model and improve performance by allowing for faster data processing.

How can I choose the right device for my LLM?

The best device for you depends on your specific needs. Consider the size of the LLM you plan to use, your budget, and your power consumption constraints.

Keywords

LLMs, Large Language Models, Apple M2 Ultra, NVIDIA 4090, Token Generation Speed, Performance Benchmark, Quantization, Llama 2, Llama 3, Processing, Generation, F16, Q80, Q40, Q4KM.