Apple M2 Ultra 800gb 60cores vs. NVIDIA 4070 Ti 12GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models and applications emerging constantly. As LLMs become increasingly powerful, the demand for hardware capable of running them efficiently also grows. This article compares the performance of two popular hardware configurations: Apple M2 Ultra 800GB 60 cores and NVIDIA 4070 Ti 12GB, focusing on their token generation speed for various LLM models.

Understanding Token Generation Speed

Before diving into the benchmark analysis, let's understand what token generation speed means. Think of it like typing speed on a keyboard. The faster you type, the quicker you can generate text. In LLMs, tokens are the fundamental units of text. They can be individual characters, words, or subword units. Token generation speed measures how many tokens a device can process per second, which directly impacts the latency and overall performance of your LLM.

Apple M2 Ultra 800GB 60 Cores Performance

The Apple M2 Ultra 800GB 60 cores is a powerful processor designed for high-performance computing tasks. Here's a breakdown of its performance with various LLMs:

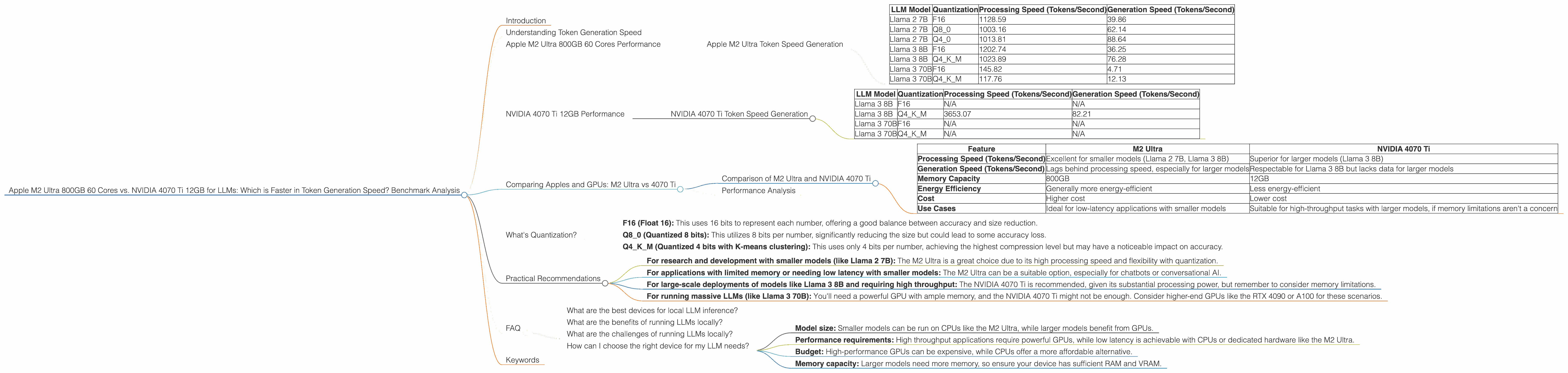

Apple M2 Ultra Token Speed Generation

| LLM Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |

|---|---|---|---|

| Llama 2 7B | F16 | 1128.59 | 39.86 |

| Llama 2 7B | Q8_0 | 1003.16 | 62.14 |

| Llama 2 7B | Q4_0 | 1013.81 | 88.64 |

| Llama 3 8B | F16 | 1202.74 | 36.25 |

| Llama 3 8B | Q4KM | 1023.89 | 76.28 |

| Llama 3 70B | F16 | 145.82 | 4.71 |

| Llama 3 70B | Q4KM | 117.76 | 12.13 |

Observations:

- M2 Ultra demonstrates impressive processing speeds for both Llama 2 7B and Llama 3 8B models across various quantization levels.

- While processing speeds are high, generation speeds lag behind, particularly for larger models like Llama 3 70B. This suggests that the M2 Ultra excels in processing information but may struggle with generating text output efficiently for massive models.

- The M2 Ultra also shows a significant improvement in processing speed with the 76-core configuration compared to 60 cores, especially noticeable with Llama 2 7B.

Strengths:

- High processing speeds, particularly for smaller models (Llama 2 7B and Llama 3 8B)

- Excellent performance across various quantization levels, offering flexibility for optimization.

Weaknesses:

- Generation speed limitations for larger models like Llama 3 70B

- Limited support for GPU-accelerated inference, which is crucial for achieving peak performance with large LLMs.

Use cases:

- Ideal for research and development where you need fast processing speeds for smaller models.

- Suitable for applications requiring low latency, like chatbots or conversational AI, when dealing with smaller models.

NVIDIA 4070 Ti 12GB Performance

The NVIDIA 4070 Ti 12GB is a powerful graphics card specifically designed for gaming and graphics-intensive tasks. However, it can also be used for accelerating LLM inference with significant efficiency gains.

NVIDIA 4070 Ti Token Speed Generation

| LLM Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |

|---|---|---|---|

| Llama 3 8B | F16 | N/A | N/A |

| Llama 3 8B | Q4KM | 3653.07 | 82.21 |

| Llama 3 70B | F16 | N/A | N/A |

| Llama 3 70B | Q4KM | N/A | N/A |

Observations:

- The 4070 Ti excels in processing speeds for Llama 3 8B, surpassing the M2 Ultra significantly.

- Generation speed for Llama 3 8B is respectable, though still behind the processing speed.

- We lack data for the 4070 Ti with Llama 3 70B and F16 quantization for Llama 3 8B, making a comprehensive comparison across all model sizes and quantization levels difficult.

Strengths:

- Outstanding processing power for Llama 3 8B, demonstrating superior performance compared to M2 Ultra.

- Optimized for GPU-accelerated inference, which is essential for high performance with larger LLMs.

Weaknesses:

- Data availability limitations prevent a full comparison across all models and quantization levels.

- The 4070 Ti may struggle with larger models due to limited memory capacity, although its performance for Llama 3 8B is promising.

Use Cases:

- Ideal for running larger models (like Llama 3 8B) with acceptable latency.

- Suitable for applications requiring high throughput for generating text, such as content creation or summarization.

Comparing Apples and GPUs: M2 Ultra vs 4070 Ti

Let's compare the two devices head-to-head, highlighting their strengths and weaknesses in a more intuitive way:

Comparison of M2 Ultra and NVIDIA 4070 Ti

| Feature | M2 Ultra | NVIDIA 4070 Ti |

|---|---|---|

| Processing Speed (Tokens/Second) | Excellent for smaller models (Llama 2 7B, Llama 3 8B) | Superior for larger models (Llama 3 8B) |

| Generation Speed (Tokens/Second) | Lags behind processing speed, especially for larger models | Respectable for Llama 3 8B but lacks data for larger models |

| Memory Capacity | 800GB | 12GB |

| Energy Efficiency | Generally more energy-efficient | Less energy-efficient |

| Cost | Higher cost | Lower cost |

| Use Cases | Ideal for low-latency applications with smaller models | Suitable for high-throughput tasks with larger models, if memory limitations aren't a concern |

Analogies to make the differences clearer:

- M2 Ultra is like a super fast car but with a small trunk. It can breeze through small deliveries, but you'll need multiple trips for larger loads.

- NVIDIA 4070 Ti is like a truck with a powerful engine. It handles large hauls with ease but consumes more fuel.

Performance Analysis

The M2 Ultra shines with smaller LLMs, offering impressive processing speeds and flexibility across different quantization levels. However, its generation speed limitations with larger models make it less suitable for applications demanding high throughput. The NVIDIA 4070 Ti, on the other hand, demonstrates superior processing power for Llama 3 8B, but its limited memory capacity could pose limitations for scaling to even larger models. It's crucial to consider the specific LLM size and the application requirements when choosing a device.

What's Quantization?

Quantization is a technique used to reduce the size of LLM models without sacrificing much accuracy. It works by reducing the number of bits used to represent each number in the model. Imagine you have a photograph with millions of colors. Quantization is like reducing that image to a smaller number of colors, making it less detailed but also much smaller.

- F16 (Float 16): This uses 16 bits to represent each number, offering a good balance between accuracy and size reduction.

- Q8_0 (Quantized 8 bits): This utilizes 8 bits per number, significantly reducing the size but could lead to some accuracy loss.

- Q4KM (Quantized 4 bits with K-means clustering): This uses only 4 bits per number, achieving the highest compression level but may have a noticeable impact on accuracy.

Practical Recommendations

- For research and development with smaller models (like Llama 2 7B): The M2 Ultra is a great choice due to its high processing speed and flexibility with quantization.

- For applications with limited memory or needing low latency with smaller models: The M2 Ultra can be a suitable option, especially for chatbots or conversational AI.

- For large-scale deployments of models like Llama 3 8B and requiring high throughput: The NVIDIA 4070 Ti is recommended, given its substantial processing power, but remember to consider memory limitations.

- For running massive LLMs (like Llama 3 70B): You'll need a powerful GPU with ample memory, and the NVIDIA 4070 Ti might not be enough. Consider higher-end GPUs like the RTX 4090 or A100 for these scenarios.

FAQ

What are the best devices for local LLM inference?

The best device depends on your specific needs. For smaller models, the M2 Ultra offers impressive performance. For larger models, a powerful GPU like the NVIDIA 4070 Ti might be preferred, but consider its memory limitations.

What are the benefits of running LLMs locally?

Local inference provides greater control, privacy, and responsiveness compared to cloud-based solutions. It eliminates reliance on internet connectivity and potential latency issues.

What are the challenges of running LLMs locally?

Local inference can be computationally demanding, requiring powerful hardware. Obtaining and managing these resources might be costly and demanding.

How can I choose the right device for my LLM needs?

Consider these factors:

- Model size: Smaller models can be run on CPUs like the M2 Ultra, while larger models benefit from GPUs.

- Performance requirements: High throughput applications require powerful GPUs, while low latency is achievable with CPUs or dedicated hardware like the M2 Ultra.

- Budget: High-performance GPUs can be expensive, while CPUs offer a more affordable alternative.

- Memory capacity: Larger models need more memory, so ensure your device has sufficient RAM and VRAM.

Keywords

Apple M2 Ultra, NVIDIA 4070 Ti, LLM, token generation speed, Llama 2, Llama 3, benchmark analysis, quantization, F16, Q80, Q4K_M, processing speed, generation speed, performance comparison, GPU, CPU, local inference, AI, machine learning, deep learning.