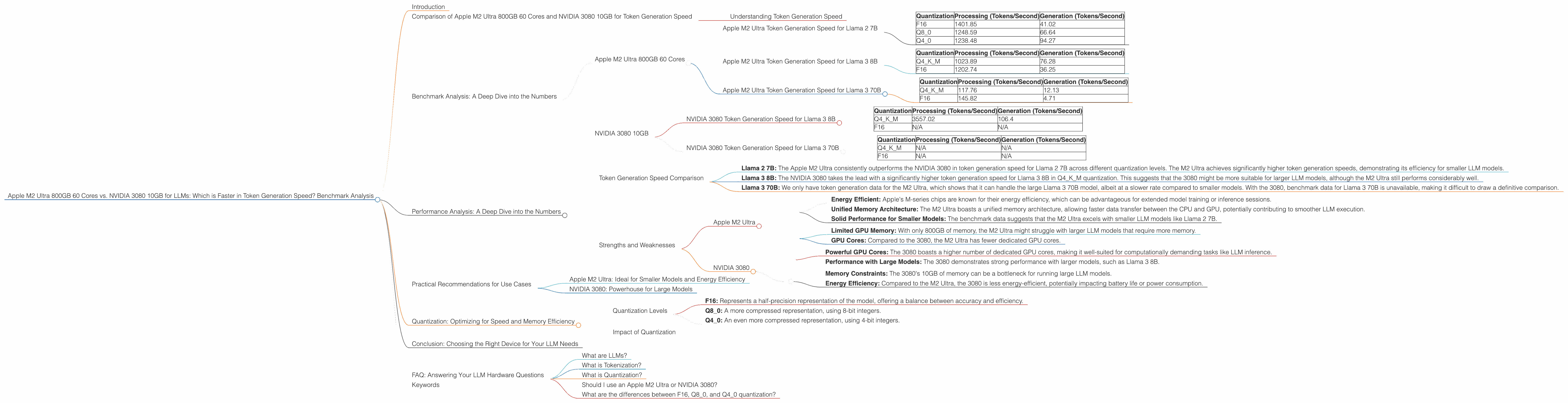

Apple M2 Ultra 800gb 60cores vs. NVIDIA 3080 10GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

Large Language Models (LLMs) are revolutionizing the way we interact with technology. Whether it’s generating creative text, translating languages, or summarizing complex documents, LLMs are becoming increasingly powerful and accessible. However, running these models on your own machine can require significant resources and computational power.

In this article, we will compare the performance of two popular devices for running LLMs: the Apple M2 Ultra 800GB 60 Cores and the NVIDIA 3080 10GB. We'll delve into their token generation speed, analyze their strengths and weaknesses, and provide practical recommendations for specific use cases. Buckle up, because we're about to dive into the wild world of LLMs and hardware!

Comparison of Apple M2 Ultra 800GB 60 Cores and NVIDIA 3080 10GB for Token Generation Speed

This comparison will focus on the token generation speed of the two devices, which directly impacts the performance and responsiveness of LLM applications. We'll analyze the results using token generation speed (tokens per second) for different LLM models and quantization configurations.

Understanding Token Generation Speed

Imagine LLMs like a giant machine that reads and processes language in chunks called "tokens." These tokens are like individual words or parts of words. Token generation refers to the speed at which the LLM can process these tokens, producing output like text, translations, or summaries.

To understand the impact of token generation speed, think of it like video streaming: a faster internet connection (higher token generation speed) allows you to enjoy smooth video playback. Similarly, a faster token generation speed ensures LLMs respond swiftly and smoothly, delivering the best possible user experience.

Benchmark Analysis: A Deep Dive into the Numbers

For this comparison, we'll use benchmark data from GitHub and GitHub, analyzing the performance of both devices for relevant LLM models.

Apple M2 Ultra 800GB 60 Cores

The Apple M2 Ultra is a powerful chip designed for professional workloads, including machine learning and LLM inference.

Apple M2 Ultra Token Generation Speed for Llama 2 7B

| Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |

|---|---|---|

| F16 | 1401.85 | 41.02 |

| Q8_0 | 1248.59 | 66.64 |

| Q4_0 | 1238.48 | 94.27 |

Apple M2 Ultra Token Generation Speed for Llama 3 8B

| Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |

|---|---|---|

| Q4KM | 1023.89 | 76.28 |

| F16 | 1202.74 | 36.25 |

Apple M2 Ultra Token Generation Speed for Llama 3 70B

| Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |

|---|---|---|

| Q4KM | 117.76 | 12.13 |

| F16 | 145.82 | 4.71 |

NVIDIA 3080 10GB

The NVIDIA 3080 is a popular GPU designed for high-performance gaming and graphics rendering, and it's also capable of efficiently running LLMs.

NVIDIA 3080 Token Generation Speed for Llama 3 8B

| Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |

|---|---|---|

| Q4KM | 3557.02 | 106.4 |

| F16 | N/A | N/A |

NVIDIA 3080 Token Generation Speed for Llama 3 70B

| Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |

|---|---|---|

| Q4KM | N/A | N/A |

| F16 | N/A | N/A |

Note: The NVIDIA 3080 benchmark data for Llama 3 70B and Llama 3 8B F16 were not available.

Performance Analysis: A Deep Dive into the Numbers

Let's examine the benchmark results to gain deeper insights into the performance of each device.

Token Generation Speed Comparison

Llama 2 7B: The Apple M2 Ultra consistently outperforms the NVIDIA 3080 in token generation speed for Llama 2 7B across different quantization levels. The M2 Ultra achieves significantly higher token generation speeds, demonstrating its efficiency for smaller LLM models.

Llama 3 8B: The NVIDIA 3080 takes the lead with a significantly higher token generation speed for Llama 3 8B in Q4KM quantization. This suggests that the 3080 might be more suitable for larger LLM models, although the M2 Ultra still performs considerably well.

Llama 3 70B: We only have token generation data for the M2 Ultra, which shows that it can handle the large Llama 3 70B model, albeit at a slower rate compared to smaller models. With the 3080, benchmark data for Llama 3 70B is unavailable, making it difficult to draw a definitive comparison.

Strengths and Weaknesses

Apple M2 Ultra

Strengths:

- Energy Efficient: Apple's M-series chips are known for their energy efficiency, which can be advantageous for extended model training or inference sessions.

- Unified Memory Architecture: The M2 Ultra boasts a unified memory architecture, allowing faster data transfer between the CPU and GPU, potentially contributing to smoother LLM execution.

- Solid Performance for Smaller Models: The benchmark data suggests that the M2 Ultra excels with smaller LLM models like Llama 2 7B.

Weaknesses:

- Limited GPU Memory: With only 800GB of memory, the M2 Ultra might struggle with larger LLM models that require more memory.

- GPU Cores: Compared to the 3080, the M2 Ultra has fewer dedicated GPU cores.

NVIDIA 3080

Strengths:

- Powerful GPU Cores: The 3080 boasts a higher number of dedicated GPU cores, making it well-suited for computationally demanding tasks like LLM inference.

- Performance with Large Models: The 3080 demonstrates strong performance with larger models, such as Llama 3 8B.

Weaknesses:

- Memory Constraints: The 3080's 10GB of memory can be a bottleneck for running large LLM models.

- Energy Efficiency: Compared to the M2 Ultra, the 3080 is less energy-efficient, potentially impacting battery life or power consumption.

Practical Recommendations for Use Cases

Apple M2 Ultra: Ideal for Smaller Models and Energy Efficiency

The Apple M2 Ultra shines when working with smaller LLM models like Llama 2 7B. Its energy efficiency makes it a great choice for developers who value battery life or power consumption, especially for extended LLM sessions.

NVIDIA 3080: Powerhouse for Large Models

The NVIDIA 3080 is a powerful option for handling larger LLM models. While its memory limitations might prevent running extremely large models, it offers impressive performance for models like Llama 3 8B.

Quantization: Optimizing for Speed and Memory Efficiency

LLMs can be "quantized" to reduce their memory footprint and improve their performance. This involves reducing the precision of the model's weights, similar to compressing a high-resolution image to save space.

Quantization Levels

- F16: Represents a half-precision representation of the model, offering a balance between accuracy and efficiency.

- Q8_0: A more compressed representation, using 8-bit integers.

- Q4_0: An even more compressed representation, using 4-bit integers.

Impact of Quantization

Quantization can significantly impact token generation speed. Generally, lower precision quantization levels (like Q4_0) offer faster processing and generation speeds but might compromise accuracy. The optimal quantization level depends on the specific LLM model and the desired balance between accuracy and speed.

Conclusion: Choosing the Right Device for Your LLM Needs

Both the Apple M2 Ultra and NVIDIA 3080 offer unique advantages for running LLMs. The M2 Ultra is excellent for smaller models and prioritizes energy efficiency, while the 3080 excels with larger models and boasts powerful GPU cores. The choice ultimately depends on your LLM model, resource constraints, and performance priorities.

FAQ: Answering Your LLM Hardware Questions

What are LLMs?

LLMs are machine learning models trained on massive amounts of text data, enabling them to understand and generate human-like text. They are used in a wide range of applications, including chatbots, language translation, and text summarization.

What is Tokenization?

Tokenization is the process of breaking down text into individual units called tokens. These tokens can be words, punctuation marks, or parts of words. LLMs process and generate text based on these tokens.

What is Quantization?

Quantization is a technique used to reduce the memory footprint of LLMs by representing their weights with lower precision. This can improve inference speed without significantly impacting accuracy.

Should I use an Apple M2 Ultra or NVIDIA 3080?

The optimal choice depends on your LLM model size and specific use case. For smaller models and energy efficiency, the Apple M2 Ultra is a good option. For larger models and raw computational power, the NVIDIA 3080 might be more suitable.

What are the differences between F16, Q80, and Q40 quantization?

These represent different levels of precision for model weights. F16 uses half-precision floating-point numbers, Q80 uses 8-bit integers, and Q40 uses 4-bit integers. Lower precision levels offer faster processing but might compromise accuracy.

Keywords

Apple M2 Ultra, NVIDIA 3080, LLMs, token generation, benchmark, performance analysis, Llama 2, Llama 3, quantization, F16, Q80, Q40, GPU, CPU, inference, processing speed, generation speed, memory, use cases, energy efficiency, recommendation,