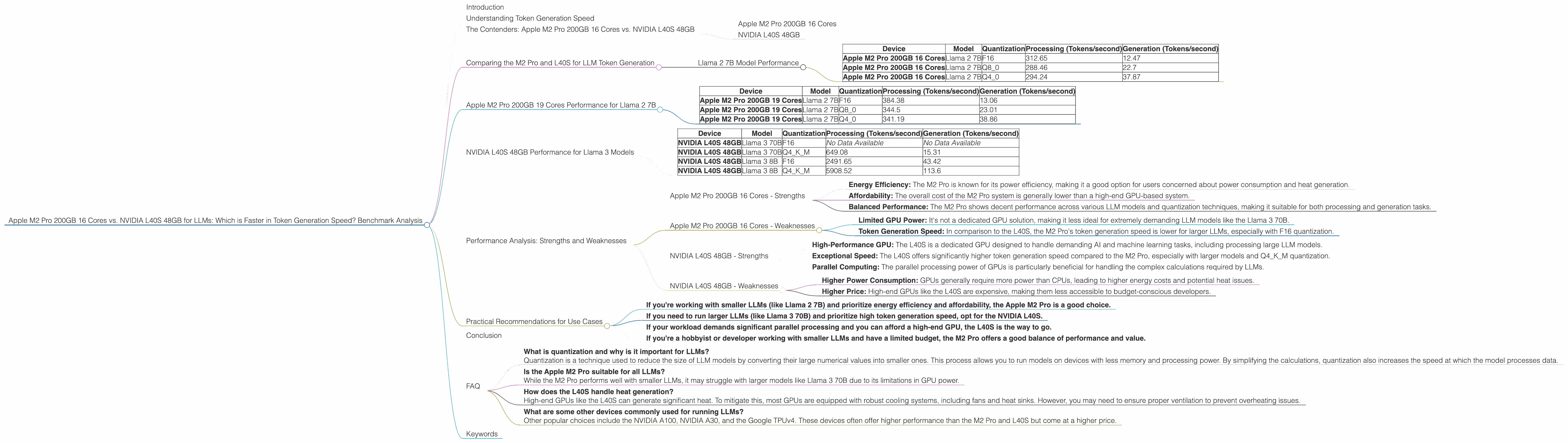

Apple M2 Pro 200gb 16cores vs. NVIDIA L40S 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is evolving rapidly, with new and powerful models emerging frequently. These models, capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way, are pushing the boundaries of what’s possible with AI. But running these models locally requires powerful hardware.

In this article, we’re diving deep into a head-to-head comparison of two popular choices for running LLMs: the Apple M2 Pro 200GB 16 core chip and the NVIDIA L40S 48GB GPU. We’ll analyze their performance in token generation speed for various LLM models and explore their strengths and weaknesses. This analysis will help you make an informed decision about which device best suits your needs for local LLM development or deployment.

Understanding Token Generation Speed

Before we dive into the benchmark results, let's quickly understand what token generation speed is and why it's crucial for LLMs.

Tokens are the building blocks of text in LLMs. Think of them like words or parts of words, but in a more granular way. When you use an LLM, it processes the input text and generates output text, one token at a time. The speed at which it generates these tokens directly impacts the overall performance of the model, determining how quickly it can process information and respond to requests.

Higher token generation speed means faster response times, smoother user experiences, and generally more efficient LLM usage.

The Contenders: Apple M2 Pro 200GB 16 Cores vs. NVIDIA L40S 48GB

Apple M2 Pro 200GB 16 Cores

The Apple M2 Pro is a powerful chip designed for high-performance computing tasks, including machine learning. It offers a significant performance boost compared to its predecessor, the M1 Pro, with 16 cores and up to 200GB of memory. The M2 Pro is known for its exceptional energy efficiency and relatively lower power consumption, making it a popular choice for developers and users who prioritize a balance between performance and affordability.

NVIDIA L40S 48GB

The NVIDIA L40S is a high-end GPU specifically designed for demanding AI workloads and machine learning. It boasts 48 GB of high-bandwidth memory and impressive processing power, making it a powerhouse for running large LLMs. NVIDIA GPUs are known for their superior performance in parallel computing, which makes them highly effective for processing the intricate calculations required by LLMs.

Comparing the M2 Pro and L40S for LLM Token Generation

We’ve gathered data from various benchmark tests to provide a clear picture of how these two devices perform when running different LLM models. Let's dive into the results.

Llama 2 7B Model Performance

| Device | Model | Quantization | Processing (Tokens/second) | Generation (Tokens/second) |

|---|---|---|---|---|

| Apple M2 Pro 200GB 16 Cores | Llama 2 7B | F16 | 312.65 | 12.47 |

| Apple M2 Pro 200GB 16 Cores | Llama 2 7B | Q8_0 | 288.46 | 22.7 |

| Apple M2 Pro 200GB 16 Cores | Llama 2 7B | Q4_0 | 294.24 | 37.87 |

Observations:

- The M2 Pro demonstrates strong performance in processing the Llama 2 7B model, achieving speeds of over 300 tokens/second with F16 quantization.

- The M2 Pro shows a good balance between processing and generation speed, with noticeable improvements in generation performance when using Q80 or Q40 quantization.

- This data suggests that the M2 Pro could be a solid choice for running Llama 2 7B, especially if you prioritize higher token generation speed.

Apple M2 Pro 200GB 19 Cores Performance for Llama 2 7B

| Device | Model | Quantization | Processing (Tokens/second) | Generation (Tokens/second) |

|---|---|---|---|---|

| Apple M2 Pro 200GB 19 Cores | Llama 2 7B | F16 | 384.38 | 13.06 |

| Apple M2 Pro 200GB 19 Cores | Llama 2 7B | Q8_0 | 344.5 | 23.01 |

| Apple M2 Pro 200GB 19 Cores | Llama 2 7B | Q4_0 | 341.19 | 38.86 |

Observations: * The M2 Pro with 19 cores achieves even higher processing speeds compared to the 16-core version. * While generation speed still remains lower than processing speed, the M2 Pro still demonstrates impressive performance for the Llama 2 7B model.

NVIDIA L40S 48GB Performance for Llama 3 Models

| Device | Model | Quantization | Processing (Tokens/second) | Generation (Tokens/second) |

|---|---|---|---|---|

| NVIDIA L40S 48GB | Llama 3 70B | F16 | No Data Available | No Data Available |

| NVIDIA L40S 48GB | Llama 3 70B | Q4KM | 649.08 | 15.31 |

| NVIDIA L40S 48GB | Llama 3 8B | F16 | 2491.65 | 43.42 |

| NVIDIA L40S 48GB | Llama 3 8B | Q4KM | 5908.52 | 113.6 |

Observations:

- The L40S shows impressive performance with the Llama 3 8B model, especially when using Q4KM quantization. It achieves processing speeds exceeding 5000 tokens/second and generation speeds over 100 tokens/second.

- Unfortunately, we don't have data for the L40S running the Llama 3 70B model with F16 quantization. This would have provided a more comprehensive comparison. However, the L40S still demonstrates impressive performance for the Llama 3 8B models, suggesting its capability for handling larger models.

Performance Analysis: Strengths and Weaknesses

Apple M2 Pro 200GB 16 Cores - Strengths

- Energy Efficiency: The M2 Pro is known for its power efficiency, making it a good option for users concerned about power consumption and heat generation.

- Affordability: The overall cost of the M2 Pro system is generally lower than a high-end GPU-based system.

- Balanced Performance: The M2 Pro shows decent performance across various LLM models and quantization techniques, making it suitable for both processing and generation tasks.

Apple M2 Pro 200GB 16 Cores - Weaknesses

- Limited GPU Power: It's not a dedicated GPU solution, making it less ideal for extremely demanding LLM models like the Llama 3 70B.

- Token Generation Speed: In comparison to the L40S, the M2 Pro's token generation speed is lower for larger LLMs, especially with F16 quantization.

NVIDIA L40S 48GB - Strengths

- High-Performance GPU: The L40S is a dedicated GPU designed to handle demanding AI and machine learning tasks, including processing large LLM models.

- Exceptional Speed: The L40S offers significantly higher token generation speed compared to the M2 Pro, especially with larger models and Q4KM quantization.

- Parallel Computing: The parallel processing power of GPUs is particularly beneficial for handling the complex calculations required by LLMs.

NVIDIA L40S 48GB - Weaknesses

- Higher Power Consumption: GPUs generally require more power than CPUs, leading to higher energy costs and potential heat issues.

- Higher Price: High-end GPUs like the L40S are expensive, making them less accessible to budget-conscious developers.

Practical Recommendations for Use Cases

Here are some guidelines for choosing the right device based on your specific needs:

- If you're working with smaller LLMs (like Llama 2 7B) and prioritize energy efficiency and affordability, the Apple M2 Pro is a good choice.

- If you need to run larger LLMs (like Llama 3 70B) and prioritize high token generation speed, opt for the NVIDIA L40S.

- If your workload demands significant parallel processing and you can afford a high-end GPU, the L40S is the way to go.

- If you're a hobbyist or developer working with smaller LLMs and have a limited budget, the M2 Pro offers a good balance of performance and value.

Conclusion

The choice between the Apple M2 Pro and the NVIDIA L40S ultimately depends on your specific needs and priorities. The M2 Pro provides a balanced performance, energy efficiency, and affordability, making it suitable for a wide range of LLM workloads. On the other hand, the L40S is a powerhouse for demanding LLMs, offering exceptional speed and parallel processing capabilities. By carefully considering your needs and budget, you can select the device that best fits your LLM development or deployment requirements.

FAQ

What is quantization and why is it important for LLMs? Quantization is a technique used to reduce the size of LLM models by converting their large numerical values into smaller ones. This process allows you to run models on devices with less memory and processing power. By simplifying the calculations, quantization also increases the speed at which the model processes data.

Is the Apple M2 Pro suitable for all LLMs? While the M2 Pro performs well with smaller LLMs, it may struggle with larger models like Llama 3 70B due to its limitations in GPU power.

How does the L40S handle heat generation? High-end GPUs like the L40S can generate significant heat. To mitigate this, most GPUs are equipped with robust cooling systems, including fans and heat sinks. However, you may need to ensure proper ventilation to prevent overheating issues.

What are some other devices commonly used for running LLMs? Other popular choices include the NVIDIA A100, NVIDIA A30, and the Google TPUv4. These devices often offer higher performance than the M2 Pro and L40S but come at a higher price.

Keywords

Apple M2 Pro, NVIDIA L40S, LLM, Token Generation Speed, Llama 2 7B, Llama 3 8B, Llama 3 70B, Quantization, F16, Q80, Q40, Q4KM, Performance, Benchmark Analysis, GPU, CPU, Processing, Generation, AI, Machine Learning, Development, Deployment, Local, Hardware, Deep Learning