Apple M2 Pro 200gb 16cores vs. NVIDIA A40 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is evolving rapidly, with new models and applications emerging every day. These powerful AI systems offer compelling possibilities for natural language processing, code generation, and creative writing. However, their computational demands are immense, requiring specialized hardware to achieve optimal performance.

Two popular contenders for running LLMs on a local machine are the Apple M2 Pro and the NVIDIA A40. This article delves into a head-to-head comparison of these devices, focusing on their token generation speed for various Llama models, a popular open-source LLM platform. We will analyze the performance data, highlight key strengths and weaknesses, and provide practical recommendations for selecting the right device for your LLM projects.

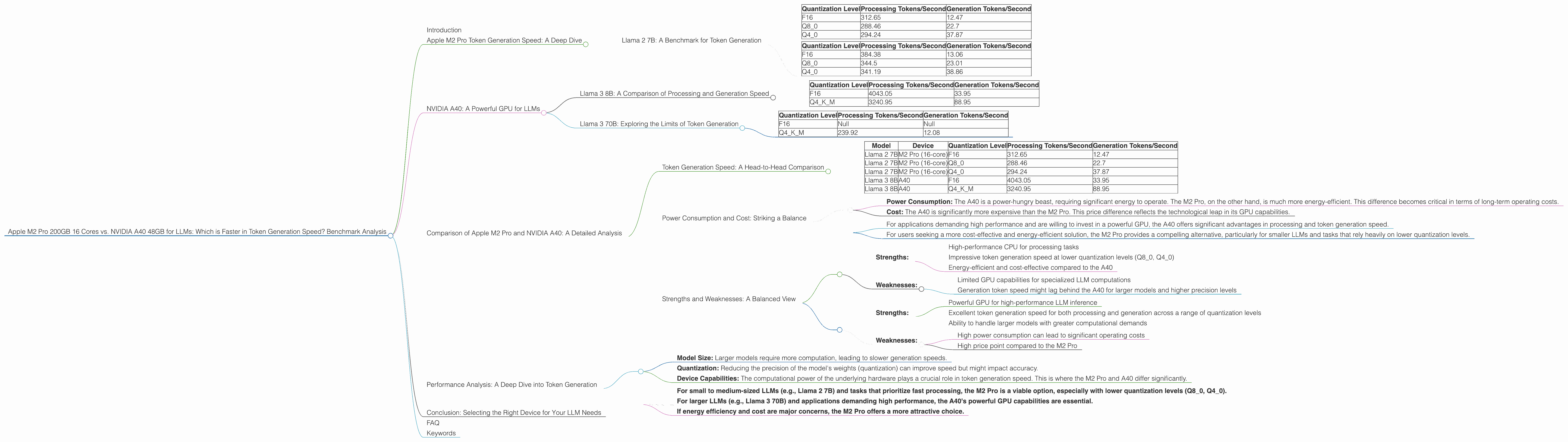

Apple M2 Pro Token Generation Speed: A Deep Dive

The Apple M2 Pro, with its powerful 16-core CPU and 200GB of memory, has emerged as a compelling option for local LLM deployment. Let's examine its token generation speed for different Llama models and quantization levels:

Llama 2 7B: A Benchmark for Token Generation

The Apple M2 Pro demonstrates impressive token generation speeds for Llama 2 7B, particularly at lower precision levels. Here's a breakdown:

| Quantization Level | Processing Tokens/Second | Generation Tokens/Second |

|---|---|---|

| F16 | 312.65 | 12.47 |

| Q8_0 | 288.46 | 22.7 |

| Q4_0 | 294.24 | 37.87 |

Key Observations:

- The M2 Pro excels in processing tokens, achieving impressive speeds across all quantization levels. This suggests its strength in performing the mathematical operations involved in LLM inference.

- When it comes to generation, the device shows more variation. While the Q4_0 configuration achieves the highest generation speed, the F16 configuration lags behind considerably. This highlights that quantization can significantly impact the speed of token generation.

Comparison to Other M2 Pro Configurations

For a 19-core M2 Pro configuration, the token generation speed increases slightly for both processing and generation, although the difference is less pronounced in generation:

| Quantization Level | Processing Tokens/Second | Generation Tokens/Second |

|---|---|---|

| F16 | 384.38 | 13.06 |

| Q8_0 | 344.5 | 23.01 |

| Q4_0 | 341.19 | 38.86 |

Practical Implications:

- For tasks requiring fast processing, the M2 Pro delivers exceptional performance.

- For applications where real-time response is critical, the lower quantization levels (Q80 and Q40) prove advantageous, as they deliver faster token generation without sacrificing too much accuracy.

- However, the M2 Pro's generation speed when using F16 precision might be a concern for applications that demand a rapid response time.

NVIDIA A40: A Powerful GPU for LLMs

The NVIDIA A40, with its massive 48GB of memory and dedicated Tensor Cores, stands as a formidable force in the LLM landscape. While data for Llama 2 is unavailable, we can assess its performance on the Llama 3 models:

Llama 3 8B: A Comparison of Processing and Generation Speed

The A40 demonstrates its power by generating tokens for Llama 3 8B at remarkable speeds. Here's a breakdown:

| Quantization Level | Processing Tokens/Second | Generation Tokens/Second |

|---|---|---|

| F16 | 4043.05 | 33.95 |

| Q4KM | 3240.95 | 88.95 |

Key Observations:

- The A40 excels significantly in processing tokens, showcasing its GPU power.

- In terms of generation, both F16 and Q4KM achieve impressive speeds, indicating its ability to handle intricate calculations involved in token generation.

Llama 3 70B: Exploring the Limits of Token Generation

The A40 also handles the larger Llama 3 70B model effectively, although its performance drops:

| Quantization Level | Processing Tokens/Second | Generation Tokens/Second |

|---|---|---|

| F16 | Null | Null |

| Q4KM | 239.92 | 12.08 |

Key Observations:

- Data for F16 is unavailable, suggesting limitations in handling this larger model with high precision.

- While the Q4KM configuration still showcases remarkable processing speed, the generation speed drops considerably compared to the 8B model. This underscores the challenges in handling larger models with increasing complexity.

Practical Implications:

- The A40 shines in processing large models due to its GPU capabilities.

- Its ability to generate tokens at high speeds for both 8B and 70B models, even with lower precision, makes it a viable solution for various LLM applications.

- The lack of data for F16 on the 70B model suggests that the A40 might struggle with high-precision inference for very large models.

Comparison of Apple M2 Pro and NVIDIA A40: A Detailed Analysis

To better understand the strengths and weaknesses of the M2 Pro and A40, let's compare their performance in token generation:

Token Generation Speed: A Head-to-Head Comparison

Note: The following comparison only includes data available for both devices (Llama 2 7B for M2 Pro and Llama 3 8B for A40).

| Model | Device | Quantization Level | Processing Tokens/Second | Generation Tokens/Second |

|---|---|---|---|---|

| Llama 2 7B | M2 Pro (16-core) | F16 | 312.65 | 12.47 |

| Llama 2 7B | M2 Pro (16-core) | Q8_0 | 288.46 | 22.7 |

| Llama 2 7B | M2 Pro (16-core) | Q4_0 | 294.24 | 37.87 |

| Llama 3 8B | A40 | F16 | 4043.05 | 33.95 |

| Llama 3 8B | A40 | Q4KM | 3240.95 | 88.95 |

Key Observations:

- The A40 outperforms the M2 Pro significantly in processing speed, particularly for the Llama 3 8B model.

- In terms of token generation, the A40 exhibits better performance for Llama 3 8B at both F16 and Q4KM precision levels.

- While the M2 Pro excels in token generation for the Q40 quantization level, the A40 still outperforms it in F16 and Q4K_M.

Power Consumption and Cost: Striking a Balance

- Power Consumption: The A40 is a power-hungry beast, requiring significant energy to operate. The M2 Pro, on the other hand, is much more energy-efficient. This difference becomes critical in terms of long-term operating costs.

- Cost: The A40 is significantly more expensive than the M2 Pro. This price difference reflects the technological leap in its GPU capabilities.

Practical Implications:

- For applications demanding high performance and are willing to invest in a powerful GPU, the A40 offers significant advantages in processing and token generation speed.

- For users seeking a more cost-effective and energy-efficient solution, the M2 Pro provides a compelling alternative, particularly for smaller LLMs and tasks that rely heavily on lower quantization levels.

Strengths and Weaknesses: A Balanced View

Apple M2 Pro: * Strengths: * High-performance CPU for processing tasks * Impressive token generation speed at lower quantization levels (Q80, Q40) * Energy-efficient and cost-effective compared to the A40 * Weaknesses: * Limited GPU capabilities for specialized LLM computations * Generation token speed might lag behind the A40 for larger models and higher precision levels

NVIDIA A40: * Strengths: * Powerful GPU for high-performance LLM inference * Excellent token generation speed for both processing and generation across a range of quantization levels * Ability to handle larger models with greater computational demands * Weaknesses: * High power consumption can lead to significant operating costs * High price point compared to the M2 Pro

Performance Analysis: A Deep Dive into Token Generation

What is Token Generation?

Token generation is the process by which an LLM produces new text based on the input it receives. The speed at which an LLM can generate tokens directly impacts the responsiveness and overall performance of the model.

Factors Influencing Token Generation Speed:

- Model Size: Larger models require more computation, leading to slower generation speeds.

- Quantization: Reducing the precision of the model's weights (quantization) can improve speed but might impact accuracy.

- Device Capabilities: The computational power of the underlying hardware plays a crucial role in token generation speed. This is where the M2 Pro and A40 differ significantly.

Practical Recommendations:

- For small to medium-sized LLMs (e.g., Llama 2 7B) and tasks that prioritize fast processing, the M2 Pro is a viable option, especially with lower quantization levels (Q80, Q40).

- For larger LLMs (e.g., Llama 3 70B) and applications demanding high performance, the A40's powerful GPU capabilities are essential.

- If energy efficiency and cost are major concerns, the M2 Pro offers a more attractive choice.

Conclusion: Selecting the Right Device for Your LLM Needs

The choice between the Apple M2 Pro and the NVIDIA A40 depends on your specific requirements and priorities. If you prioritize high-performance token generation and are willing to invest in a powerful GPU, the A40 is the clear choice. However, if you're working with smaller models and prioritize energy efficiency and cost-effectiveness, the M2 Pro offers a compelling alternative.

Remember that the world of LLMs is constantly evolving, with new models and hardware emerging regularly. Staying informed about the latest benchmarks and technologies is critical for making informed decisions about your LLM infrastructure.

FAQ

Q: What is quantization, and how does it affect LLM performance?

A: Quantization is a technique used to reduce the size of a model's weight parameters by representing them with fewer bits. Lower precision levels (e.g., Q80, Q40) result in smaller models and faster processing but can impact accuracy. While high precision (F16) offers greater accuracy, it requires more memory and can slow down processing.

Q: What are the best use cases for each device?

A: The M2 Pro is ideal for applications involving smaller LLMs (e.g., Llama 2 7B), tasks that prioritize fast processing, and situations where energy efficiency and cost are critical. The A40 excels in handling larger LLMs, tasks demanding high performance, and applications where power consumption is less of a concern.

Q: How can I choose the right device for my LLM project?

A: Consider factors such as the size of your model, your performance requirements, and your budget. If you are unsure, it's always best to experiment with different options and benchmark their performance on your specific tasks.

Keywords

Apple M2 Pro, NVIDIA A40, LLM, Llama 2, Llama 3, Token Generation, Speed, Benchmark, Performance, Quantization, GPU, CPU, Inference, Processing, Generation, Cost, Power Consumption, Use Cases, LLM Inference, Open Source, Open Source LLMs.