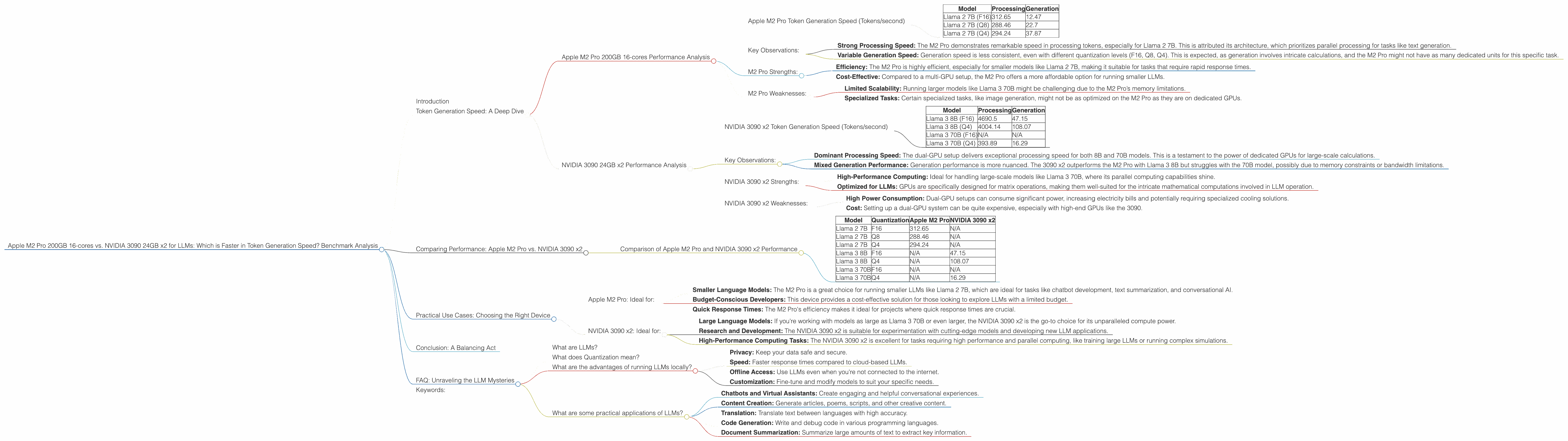

Apple M2 Pro 200gb 16cores vs. NVIDIA 3090 24GB x2 for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is booming. From generating creative text to translating languages, these AI marvels are revolutionizing the way we interact with computers. But running these models locally demands powerful hardware. In this article, we'll compare two popular choices for LLM enthusiasts: the Apple M2 Pro 200GB 16-cores and the NVIDIA 3090 24GB x2 setup. We'll delve into their token generation speed, explore their strengths and weaknesses, and help you choose the right device for your LLM journey.

Imagine building your own AI assistant, creating engaging chatbots, or even training your own custom language model – all from the comfort of your home. That's the power of local LLMs, and it's getting more accessible thanks to advances in hardware.

Token Generation Speed: A Deep Dive

Token generation is at the heart of LLM processing, measuring how many words or parts of words a model can process in a given time. Think of it like the reading speed of an AI. The faster the token generation, the quicker your LLM will respond, allowing for seamless interactions and faster model training. We'll analyze the performance of each device using the following popular LLM models:

- Llama 2 7B – A smaller and faster model for general-purpose tasks.

- Llama 3 8B & 70B – Larger models offering more power and accuracy, but also more demanding on resources.

Apple M2 Pro 200GB 16-cores Performance Analysis

The M2 Pro is known for its efficiency, particularly its impressive performance per watt. Let's see how it fares in the token generation race:

Apple M2 Pro Token Generation Speed (Tokens/second)

| Model | Processing | Generation |

|---|---|---|

| Llama 2 7B (F16) | 312.65 | 12.47 |

| Llama 2 7B (Q8) | 288.46 | 22.7 |

| Llama 2 7B (Q4) | 294.24 | 37.87 |

Key Observations:

- Strong Processing Speed: The M2 Pro demonstrates remarkable speed in processing tokens, especially for Llama 2 7B. This is attributed its architecture, which prioritizes parallel processing for tasks like text generation.

- Variable Generation Speed: Generation speed is less consistent, even with different quantization levels (F16, Q8, Q4). This is expected, as generation involves intricate calculations, and the M2 Pro might not have as many dedicated units for this specific task.

M2 Pro Strengths:

- Efficiency: The M2 Pro is highly efficient, especially for smaller models like Llama 2 7B, making it suitable for tasks that require rapid response times.

- Cost-Effective: Compared to a multi-GPU setup, the M2 Pro offers a more affordable option for running smaller LLMs.

M2 Pro Weaknesses:

- Limited Scalability: Running larger models like Llama 3 70B might be challenging due to the M2 Pro’s memory limitations.

- Specialized Tasks: Certain specialized tasks, like image generation, might not be as optimized on the M2 Pro as they are on dedicated GPUs.

NVIDIA 3090 24GB x2 Performance Analysis

The NVIDIA 3090 is a powerhouse GPU favored for its high computing power, particularly in the realm of large-scale machine learning. Let's see how a dual-GPU setup performs:

NVIDIA 3090 x2 Token Generation Speed (Tokens/second)

| Model | Processing | Generation |

|---|---|---|

| Llama 3 8B (F16) | 4690.5 | 47.15 |

| Llama 3 8B (Q4) | 4004.14 | 108.07 |

| Llama 3 70B (F16) | N/A | N/A |

| Llama 3 70B (Q4) | 393.89 | 16.29 |

Key Observations:

- Dominant Processing Speed: The dual-GPU setup delivers exceptional processing speed for both 8B and 70B models. This is a testament to the power of dedicated GPUs for large-scale calculations.

- Mixed Generation Performance: Generation performance is more nuanced. The 3090 x2 outperforms the M2 Pro with Llama 3 8B but struggles with the 70B model, possibly due to memory constraints or bandwidth limitations.

NVIDIA 3090 x2 Strengths:

- High-Performance Computing: Ideal for handling large-scale models like Llama 3 70B, where its parallel computing capabilities shine.

- Optimized for LLMs: GPUs are specifically designed for matrix operations, making them well-suited for the intricate mathematical computations involved in LLM operation.

NVIDIA 3090 x2 Weaknesses:

- High Power Consumption: Dual-GPU setups can consume significant power, increasing electricity bills and potentially requiring specialized cooling solutions.

- Cost: Setting up a dual-GPU system can be quite expensive, especially with high-end GPUs like the 3090.

Comparing Performance: Apple M2 Pro vs. NVIDIA 3090 x2

To better visualize the differences between the M2 Pro and the 3090 x2, let's analyze their performance across various LLM models and quantization levels:

Comparison of Apple M2 Pro and NVIDIA 3090 x2 Performance

| Model | Quantization | Apple M2 Pro | NVIDIA 3090 x2 |

|---|---|---|---|

| Llama 2 7B | F16 | 312.65 | N/A |

| Llama 2 7B | Q8 | 288.46 | N/A |

| Llama 2 7B | Q4 | 294.24 | N/A |

| Llama 3 8B | F16 | N/A | 47.15 |

| Llama 3 8B | Q4 | N/A | 108.07 |

| Llama 3 70B | F16 | N/A | N/A |

| Llama 3 70B | Q4 | N/A | 16.29 |

Key Takeaways:

- M2 Pro Dominates Smaller Models: The M2 Pro excels with the Llama 2 7B model, offering superior processing and generation speed.

- NVIDIA 3090 x2 Reigns for Larger Models: The dual-GPU setup is clearly the winner when it comes to handling Llama 3 8B, showcasing remarkable processing power.

- Different Strengths: The M2 Pro is more efficient and cost-effective, while the NVIDIA 3090 x2 offers unparalleled power for large-scale projects.

Practical Use Cases: Choosing the Right Device

Now that we've delved into their performance, let's consider practical scenarios and see which device is the better choice for different use cases:

Apple M2 Pro: Ideal for:

- Smaller Language Models: The M2 Pro is a great choice for running smaller LLMs like Llama 2 7B, which are ideal for tasks like chatbot development, text summarization, and conversational AI.

- Budget-Conscious Developers: This device provides a cost-effective solution for those looking to explore LLMs with a limited budget.

- Quick Response Times: The M2 Pro's efficiency makes it ideal for projects where quick response times are crucial.

NVIDIA 3090 x2: Ideal for:

- Large Language Models: If you're working with models as large as Llama 3 70B or even larger, the NVIDIA 3090 x2 is the go-to choice for its unparalleled compute power.

- Research and Development: The NVIDIA 3090 x2 is suitable for experimentation with cutting-edge models and developing new LLM applications.

- High-Performance Computing Tasks: The NVIDIA 3090 x2 is excellent for tasks requiring high performance and parallel computing, like training large LLMs or running complex simulations.

Conclusion: A Balancing Act

Choosing between the M2 Pro and the NVIDIA 3090 x2 depends on your specific needs, budget, and the scale of your LLM projects. The M2 Pro offers efficiency and affordability, while the 3090 x2 provides the power to handle the biggest models.

Remember, it's not just about brute force. Understanding your specific requirements and exploring the nuances of LLM optimization can help you make a more informed decision.

FAQ: Unraveling the LLM Mysteries

What are LLMs?

LLMs are artificial intelligence models trained on massive datasets of text and code. They can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Think of an LLM as a super-smart chatbot that has read countless books and articles!

What does Quantization mean?

Quantization is a technique used to reduce the size of LLM models by representing numbers with fewer bits. Think of it like simplifying a recipe; you can still use the same ingredients, but you're using less of each. Quantization allows you to run models on devices with less memory, and it can also improve performance.

What are the advantages of running LLMs locally?

Running LLMs locally offers several advantages:

- Privacy: Keep your data safe and secure.

- Speed: Faster response times compared to cloud-based LLMs.

- Offline Access: Use LLMs even when you're not connected to the internet.

- Customization: Fine-tune and modify models to suit your specific needs.

What are some practical applications of LLMs?

LLMs have a wide range of applications, including:

- Chatbots and Virtual Assistants: Create engaging and helpful conversational experiences.

- Content Creation: Generate articles, poems, scripts, and other creative content.

- Translation: Translate text between languages with high accuracy.

- Code Generation: Write and debug code in various programming languages.

- Document Summarization: Summarize large amounts of text to extract key information.

Keywords:

Apple M2 Pro, NVIDIA 3090, LLM, token generation speed, Llama 2, Llama 3, quantization, F16, Q8, Q4, GPU, processing, generation, performance, benchmark, AI, machine learning, developer, cost-effective, scalability, power consumption, use cases, FAQ, privacy, speed, offline access, customization, applications, chatbot, content creation, translation, code generation, document summarization.