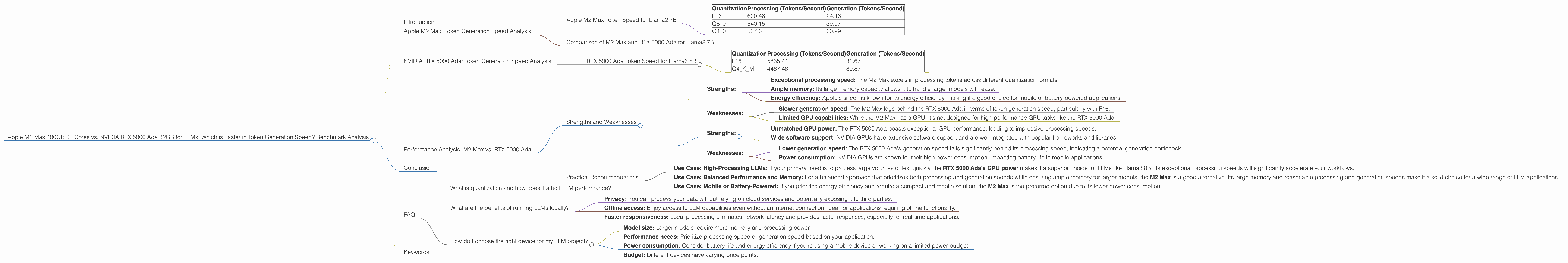

Apple M2 Max (400 GB/s, 30-Core GPU) vs. NVIDIA RTX 5000 Ada 32GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

Large Language Models (LLMs) are changing the way we interact with computers, enabling applications like text generation, translation, and code completion. Running LLMs locally, however, requires hardware that can handle the computationally demanding work involved. Two prominent contenders are Apple's M2 Max, here in its 30-core GPU configuration with 400 GB/s of unified memory bandwidth, and NVIDIA's RTX 5000 Ada, a workstation GPU with 32GB of dedicated memory.

This article delves into a comparative analysis of these two devices, focusing on their speed in generating tokens, the building blocks of text in LLMs. We'll be using benchmark data to objectively assess their performance across different LLM models and quantization schemes. By understanding the strengths and weaknesses of each device, developers can make informed decisions about the best hardware for their LLM projects.

Apple M2 Max: Token Generation Speed Analysis

Apple M2 Max Token Speed for Llama2 7B

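Let's start with the Apple M2 Max, whose CPU and GPU share a single pool of unified memory. We'll examine its performance with the Llama2 7B model in three quantization formats. In the tables, Processing is the speed at which the prompt is ingested and Generation is the speed at which new tokens are produced: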

| Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| F16 | 600.46 | 24.16 |
| Q8_0 | 540.15 | 39.97 |
| Q4_0 | 537.6 | 60.99 |

Key Observations:

- Processing Speed: Prompt processing stays within a narrow band of 537.6 to 600.46 tokens per second. F16 is the fastest, so quantization does not help this stage.

- Generation Speed: Generation is far slower than processing in every format, and slowest for F16 at 24.16 tokens per second.

- Quantization Impact: Quantization clearly improves generation speed. Q4_0 reaches 60.99 tokens per second, about 2.5 times the F16 figure, likely because each generated token requires reading the model weights from memory and smaller weights mean less data to move.

- Memory Bandwidth and Capacity: The 400 in this configuration's name refers to 400 GB/s of memory bandwidth, not 400GB of capacity. The M2 Max is available with up to 96GB of unified memory, which the GPU can address directly, so models too large for a 32GB graphics card can still be loaded.
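To make the link between memory traffic and generation speed concrete, here is a rough back-of-the-envelope sketch. It assumes that generating one token reads every weight once, that the M2 Max delivers 400 GB/s, that Llama2 7B has roughly 6.74 billion parameters, and that the quantized formats cost about 8.5 and 4.5 bits per weight once per-block scales are included. None of these figures come from the benchmark itself, so treat the result as an estimate rather than a measurement.

```python
# Rough bandwidth-bound ceiling for token generation on the M2 Max.
# Assumptions (not from the benchmark): ~6.74e9 parameters for Llama2 7B,
# 400 GB/s memory bandwidth, and approximate bits per weight per format.

BANDWIDTH_BYTES_PER_S = 400e9   # assumed memory bandwidth
PARAMS = 6.74e9                 # assumed approximate parameter count

# Bits per weight, including per-block scale overhead for quantized formats.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds from the table above (tokens/second).
MEASURED = {"F16": 24.16, "Q8_0": 39.97, "Q4_0": 60.99}


def generation_ceiling(bits_per_weight: float) -> float:
    """Upper bound on tokens/s if every weight is read once per token."""
    model_bytes = PARAMS * bits_per_weight / 8
    return BANDWIDTH_BYTES_PER_S / model_bytes


for fmt, bits in BITS_PER_WEIGHT.items():
    ceiling = generation_ceiling(bits)
    share = MEASURED[fmt] / ceiling
    print(f"{fmt}: ceiling {ceiling:.1f} tok/s, "
          f"measured {MEASURED[fmt]} ({share:.0%} of ceiling)")
```

Under these assumptions every measured generation speed sits below its estimated ceiling, which is consistent with generation being limited mainly by how fast the weights can be read rather than by raw compute.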

Comparison of M2 Max and RTX 5000 Ada for Llama2 7B

Unfortunately, we don't have data for Llama2 7B on the RTX 5000 Ada. Therefore, we cannot directly compare the performance of the two devices for this specific model.

NVIDIA RTX 5000 Ada: Token Generation Speed Analysis

RTX 5000 Ada Token Speed for Llama3 8B

Now, let's turn our attention to the NVIDIA RTX 5000 Ada, renowned for its GPU prowess. We'll examine its performance with the Llama3 8B model in different quantization formats:

| Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| F16 | 5835.41 | 32.67 |
| Q4_K_M | 4467.46 | 89.87 |

Key Observations:

- Processing Speed: The RTX 5000 Ada processes prompts very quickly, reaching 5835.41 tokens per second with F16. Prompt tokens can be handled in parallel, which plays to the GPU's compute strength.

- Generation Speed: Generation is far slower than processing, particularly for F16 at 32.67 tokens per second. Tokens are produced one at a time, so this stage is typically limited by how fast the weights can be read from memory rather than by compute.

- Quantization Impact: As with the M2 Max, quantization improves generation speed. The 4-bit Q4_K_M format delivers the best result at 89.87 tokens per second, about 2.75 times the F16 figure, although still far below the processing speed.
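To see how the two columns combine in practice, the sketch below estimates the time for a single request from the RTX 5000 Ada figures in the table above (the 4-bit row is keyed here as Q4_K_M). The prompt and output lengths of 1,000 and 200 tokens are made-up illustration values, and the estimate ignores model loading and any other overhead.

```python
# Estimate end-to-end time for one request from the two benchmark columns.
# Speeds are the RTX 5000 Ada / Llama3 8B figures from the table above;
# the prompt and output lengths are hypothetical illustration values.

SPEEDS = {
    "F16": {"processing": 5835.41, "generation": 32.67},
    "Q4_K_M": {"processing": 4467.46, "generation": 89.87},
}


def request_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Return (prompt_time, generation_time) in seconds."""
    return prompt_tokens / processing_tps, output_tokens / generation_tps


for fmt, s in SPEEDS.items():
    prompt_t, gen_t = request_seconds(1000, 200, s["processing"], s["generation"])
    print(f"{fmt}: prompt {prompt_t:.2f}s + generation {gen_t:.2f}s "
          f"= {prompt_t + gen_t:.2f}s total")
```

For this hypothetical workload the generation phase accounts for almost all of the time in both formats, which is why generation speed usually matters more than processing speed for chat-style use.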

Performance Analysis: M2 Max vs. RTX 5000 Ada

Strengths and Weaknesses

Apple M2 Max:

Strengths:

- Consistent processing speed: The M2 Max processes prompts at roughly 540 to 600 tokens per second regardless of quantization format.

- Unified memory: The CPU and GPU share one memory pool of up to 96GB, so models that exceed a discrete card's 32GB can still be loaded.

- Energy efficiency: Apple's silicon is known for its energy efficiency, making it a good choice for mobile or battery-powered applications.

Weaknesses:

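- Slower generation speed: The M2 Max's generation figures are lower than the RTX 5000 Ada's (24.16 vs. 32.67 tokens per second at F16). The two devices were benchmarked on different models, so this comparison is only indicative.

- Lower GPU throughput: The M2 Max's integrated 30-core GPU processes prompts at roughly a tenth of the RTX 5000 Ada's rate in these benchmarks, again with the caveat that the models differ.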

NVIDIA RTX 5000 Ada:

Strengths:

- Strong GPU compute: The RTX 5000 Ada processes prompts at up to 5835.41 tokens per second in these benchmarks.

- Wide software support: NVIDIA GPUs have extensive software support and are well-integrated with popular frameworks and libraries.

Weaknesses:

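- Generation far below processing speed: The RTX 5000 Ada generates tokens at well under 2% of its processing rate, so its large compute advantage translates into a much smaller advantage during generation.

- Power consumption: As a workstation graphics card it draws considerably more power than the M2 Max and needs a desktop-class system, which rules it out for battery-powered use.

- Fixed memory: Its 32GB of dedicated memory caps the size of model that can be held entirely on the GPU.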

Practical Recommendations

Use Case: High-Processing LLMs: If your primary need is to process large volumes of text quickly, the RTX 5000 Ada's GPU power makes it a superior choice for LLMs like Llama3 8B. Its exceptional processing speeds will significantly accelerate your workflows.

Use Case: Larger Models and Balanced Performance: If you need to load models that do not fit in 32GB, or want reasonable processing and generation speeds in a single machine, the M2 Max is a good alternative. Its unified memory and usable generation speeds, especially with Q4_0, make it a solid choice for a wide range of LLM applications.

Use Case: Mobile or Battery-Powered: If you prioritize energy efficiency and require a compact and mobile solution, the M2 Max is the preferred option due to its lower power consumption.

Conclusion

Both the M2 Max and the RTX 5000 Ada offer distinct advantages for local LLMs. The M2 Max stands out for its unified memory and energy efficiency, making it suited to larger models and portable setups. The RTX 5000 Ada stands out for its GPU compute, processing prompts roughly ten times faster in these benchmarks. Both devices generate tokens far more slowly than they process them, and both benefit substantially from quantization. Because the M2 Max was tested with Llama2 7B and the RTX 5000 Ada with Llama3 8B, the numbers do not support a strict head-to-head ranking.

Ultimately, the best device for your LLM project depends on your specific needs and priorities. If you prioritize sheer processing power, the RTX 5000 Ada stands as the champion. However, if you require a balanced approach with ample memory and a more energy-efficient solution, the M2 Max offers a compelling alternative.

FAQ

What is quantization and how does it affect LLM performance?

Quantization is a technique used to reduce the size of LLM parameters, making them more compact and efficient to run on various devices. This is achieved by representing the parameters with lower precision numbers, which leads to a trade-off between accuracy and performance. While quantization can improve inference speed and memory usage, it may slightly impact model accuracy.
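As a minimal sketch of the idea, the example below applies simple symmetric 8-bit quantization to a handful of made-up weights. It is illustrative only: the function names are hypothetical, and real formats such as Q8_0 or Q4_0 work on small blocks of weights with one scale per block rather than one scale for the whole list.

```python
# Minimal symmetric 8-bit quantization of a small weight vector.
# Illustrative only: production formats quantize in small blocks and
# store one scale per block.

def quantize_int8(weights):
    """Map floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.87, 0.45, 0.03, -0.31]   # made-up example values
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized, f"max rounding error = {max_error:.5f}")
```

Each weight now needs one byte instead of two or four, and the rounding error is bounded by half the scale. That small loss of precision is the accuracy trade-off described above.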

What are the benefits of running LLMs locally?

Running LLMs locally offers several advantages:

- Privacy: You can process your data without relying on cloud services and potentially exposing it to third parties.

- Offline access: Enjoy access to LLM capabilities even without an internet connection, ideal for applications requiring offline functionality.

- Faster responsiveness: Local processing eliminates network latency and provides faster responses, especially for real-time applications.

How do I choose the right device for my LLM project?

Consider these factors:

- Model size: Larger models require more memory and processing power.

- Performance needs: Prioritize processing speed or generation speed based on your application.

- Power consumption: Consider battery life and energy efficiency if you're using a mobile device or working on a limited power budget.

- Budget: Different devices have varying price points.
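For the model-size factor, a quick estimate of the weight footprint helps narrow the choice. The sketch below is a rough check under stated assumptions: parameter counts are rounded, the bits-per-weight values are approximate, and runtime overhead such as the KV cache is modeled as a flat 20% margin that you should adjust for your own workload. The helper names are hypothetical.

```python
# Rough check of whether a model's weights fit in a device's memory.
# All numbers are illustrative assumptions, not benchmark results:
# parameter counts are rounded and runtime overhead (KV cache,
# activations) is modeled as a flat 20% margin.

def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion, bits_per_weight, memory_gb, overhead=0.20):
    """True if weights plus the assumed overhead margin fit in memory."""
    needed = weights_gb(params_billion, bits_per_weight) * (1 + overhead)
    return needed <= memory_gb


CANDIDATES = [
    ("8B at F16", 8.0, 16.0),
    ("8B at ~4.5 bits", 8.0, 4.5),
    ("70B at ~4.5 bits", 70.0, 4.5),
]

for name, params, bits in CANDIDATES:
    size = weights_gb(params, bits)
    print(f"{name}: {size:.1f} GB of weights, "
          f"fits in 32 GB: {fits(params, bits, 32)}")
```

Under these assumptions an 8B model fits in 32GB even at F16, while a 70B model at about 4.5 bits per weight does not, which is the kind of case where a larger unified memory pool becomes the deciding factor.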

Keywords

Large Language Models, LLM, Token Generation, Llama2 7B, Llama3 8B, Apple M2 Max, NVIDIA RTX 5000 Ada, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Benchmark, Processing Speed, Generation Speed, GPU, CPU, Memory, Performance Analysis, Strengths, Weaknesses, Practical Recommendations, FAQ, Local LLMs, Privacy, Offline Access, Faster Responsiveness