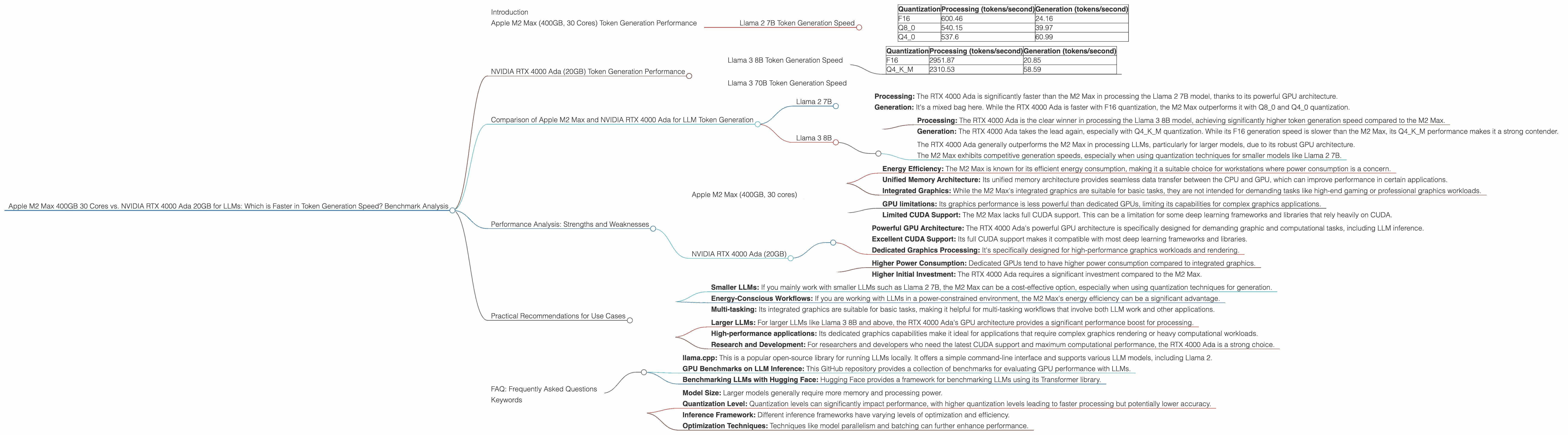

Apple M2 Max 400gb 30cores vs. NVIDIA RTX 4000 Ada 20GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, with new models pushing the boundaries of language understanding and generation. These models are becoming increasingly powerful, but running them locally can be challenging due to their demanding computational requirements. Choosing the right hardware is crucial for optimal performance, particularly when it comes to token generation speed, which directly impacts how quickly an LLM can generate text.

This article delves into a head-to-head comparison between two popular devices: the Apple M2 Max (400GB, 30 cores) and the NVIDIA RTX 4000 Ada (20GB), focusing specifically on their performance in generating tokens with various LLM models. We'll analyze benchmark data to determine which device emerges as the champion in this token generation race.

Apple M2 Max (400GB, 30 Cores) Token Generation Performance

Let's start by examining the M2 Max's prowess in token generation. It's a formidable chip designed for professional workflows and boasts impressive computational capabilities. Here’s how it performs with different LLM models and quantization levels:

Llama 2 7B Token Generation Speed

| Quantization | Processing (tokens/second) | Generation (tokens/second) |

|---|---|---|

| F16 | 600.46 | 24.16 |

| Q8_0 | 540.15 | 39.97 |

| Q4_0 | 537.6 | 60.99 |

Key takeaways:

- The Apple M2 Max demonstrates strong performance with Llama 2 7B, especially in processing. It can process over 500 tokens per second even with Q4_0 quantization.

- Generation speeds are notably slower, especially for F16. However, they improve significantly with Q80 and Q40 quantization.

NVIDIA RTX 4000 Ada (20GB) Token Generation Performance

The NVIDIA RTX 4000 Ada is well-known for its gaming and professional graphics capabilities. Its powerful GPU architecture is also well-suited for deep learning workloads, including LLM inference.

Llama 3 8B Token Generation Speed

| Quantization | Processing (tokens/second) | Generation (tokens/second) |

|---|---|---|

| F16 | 2951.87 | 20.85 |

| Q4KM | 2310.53 | 58.59 |

Key takeaways:

- The RTX 4000 Ada shows exceptional processing speed with both F16 and Q4KM quantization for the Llama 3 8B model. It surpasses the M2 Max significantly.

- Generation performance is surprisingly weaker than the M2 Max for F16 quantization, but it excels in Q4KM quantization.

Llama 3 70B Token Generation Speed

Unfortunately, no benchmark data is available for the RTX 4000 Ada with the Llama 3 70B model. This is due to limitations in the current benchmark study, which doesn't cover all device-model combinations. We'll need to wait for further benchmark results to get a clear picture of the RTX 4000 Ada's performance for larger LLMs.

Comparison of Apple M2 Max and NVIDIA RTX 4000 Ada for LLM Token Generation

Now, let's compare the performance characteristics of the M2 Max and the RTX 4000 Ada across different LLMs:

Llama 2 7B

- Processing: The RTX 4000 Ada is significantly faster than the M2 Max in processing the Llama 2 7B model, thanks to its powerful GPU architecture.

- Generation: It's a mixed bag here. While the RTX 4000 Ada is faster with F16 quantization, the M2 Max outperforms it with Q80 and Q40 quantization.

Llama 3 8B

- Processing: The RTX 4000 Ada is the clear winner in processing the Llama 3 8B model, achieving significantly higher token generation speed compared to the M2 Max.

- Generation: The RTX 4000 Ada takes the lead again, especially with Q4KM quantization. While its F16 generation speed is slower than the M2 Max, its Q4KM performance makes it a strong contender.

Overall:

- The RTX 4000 Ada generally outperforms the M2 Max in processing LLMs, particularly for larger models, due to its robust GPU architecture.

- The M2 Max exhibits competitive generation speeds, especially when using quantization techniques for smaller models like Llama 2 7B.

Performance Analysis: Strengths and Weaknesses

Apple M2 Max (400GB, 30 cores)

Strengths:

- Energy Efficiency: The M2 Max is known for its efficient energy consumption, making it a suitable choice for workstations where power consumption is a concern.

- Unified Memory Architecture: Its unified memory architecture provides seamless data transfer between the CPU and GPU, which can improve performance in certain applications.

- Integrated Graphics: While the M2 Max's integrated graphics are suitable for basic tasks, they are not intended for demanding tasks like high-end gaming or professional graphics workloads.

Weaknesses:

- GPU limitations: Its graphics performance is less powerful than dedicated GPUs, limiting its capabilities for complex graphics applications.

- Limited CUDA Support: The M2 Max lacks full CUDA support. This can be a limitation for some deep learning frameworks and libraries that rely heavily on CUDA.

NVIDIA RTX 4000 Ada (20GB)

Strengths:

- Powerful GPU Architecture: The RTX 4000 Ada's powerful GPU architecture is specifically designed for demanding graphic and computational tasks, including LLM inference.

- Excellent CUDA Support: Its full CUDA support makes it compatible with most deep learning frameworks and libraries.

- Dedicated Graphics Processing: It's specifically designed for high-performance graphics workloads and rendering.

Weaknesses:

- Higher Power Consumption: Dedicated GPUs tend to have higher power consumption compared to integrated graphics.

- Higher Initial Investment: The RTX 4000 Ada requires a significant investment compared to the M2 Max.

Practical Recommendations for Use Cases

Let's break down the ideal scenarios for each device:

Apple M2 Max:

- Smaller LLMs: If you mainly work with smaller LLMs such as Llama 2 7B, the M2 Max can be a cost-effective option, especially when using quantization techniques for generation.

- Energy-Conscious Workflows: If you are working with LLMs in a power-constrained environment, the M2 Max's energy efficiency can be a significant advantage.

- Multi-tasking: Its integrated graphics are suitable for basic tasks, making it helpful for multi-tasking workflows that involve both LLM work and other applications.

NVIDIA RTX 4000 Ada:

- Larger LLMs: For larger LLMs like Llama 3 8B and above, the RTX 4000 Ada's GPU architecture provides a significant performance boost for processing.

- High-performance applications: Its dedicated graphics capabilities make it ideal for applications that require complex graphics rendering or heavy computational workloads.

- Research and Development: For researchers and developers who need the latest CUDA support and maximum computational performance, the RTX 4000 Ada is a strong choice.

FAQ: Frequently Asked Questions

1. What are quantization techniques, and how do they benefit LLM performance?

Quantization is a technique used to reduce the size of LLM models without sacrificing too much accuracy. It essentially converts floating-point numbers (like F16) to smaller data types, like Q80 or Q40, which require less storage space and computational resources. This can lead to faster loading times, reduced memory consumption, and overall improved performance.

Imagine it like compressing a massive photo file—by reducing the number of colors (bits) needed to represent the image, you can significantly reduce the file size without losing too much visual detail. Quantization works similarly with LLMs, reducing the amount of data they need to process.

2. What about the Apple M1 Ultra for LLM performance?

The Apple M1 Ultra is another powerful chip designed for professional workflows. It's also a great choice for LLM workloads, particularly if you need a platform capable of handling multiple smaller LLMs simultaneously. However, its GPU architecture is not as specialized for deep learning as the RTX 4000 Ada, so it may not be the best choice for larger LLMs or demanding deep learning applications.

3. Are there any open-source tools for benchmarking LLM performance on different devices?

Yes, several open-source tools are available for benchmarking LLM performance. These tools provide frameworks to measure token generation speed, inference latency, and other metrics. Some popular options include:

- llama.cpp: This is a popular open-source library for running LLMs locally. It offers a simple command-line interface and supports various LLM models, including Llama 2.

- GPU Benchmarks on LLM Inference: This GitHub repository provides a collection of benchmarks for evaluating GPU performance with LLMs.

- Benchmarking LLMs with Hugging Face: Hugging Face provides a framework for benchmarking LLMs using its Transformer library.

4. Beyond token generation speed, what other factors influence LLM performance?

Several factors influence LLM performance beyond token generation speed. These include:

- Model Size: Larger models generally require more memory and processing power.

- Quantization Level: Quantization levels can significantly impact performance, with higher quantization levels leading to faster processing but potentially lower accuracy.

- Inference Framework: Different inference frameworks have varying levels of optimization and efficiency.

- Optimization Techniques: Techniques like model parallelism and batching can further enhance performance.

Keywords

LLM, Large Language Model, Token Generation, Apple M2 Max, NVIDIA RTX 4000 Ada, GPU, CPU, CUDA, Quantization, Benchmarking, Performance, Llama 2, Llama 3, Inference, Processing, Generation, Speed, Efficiency.