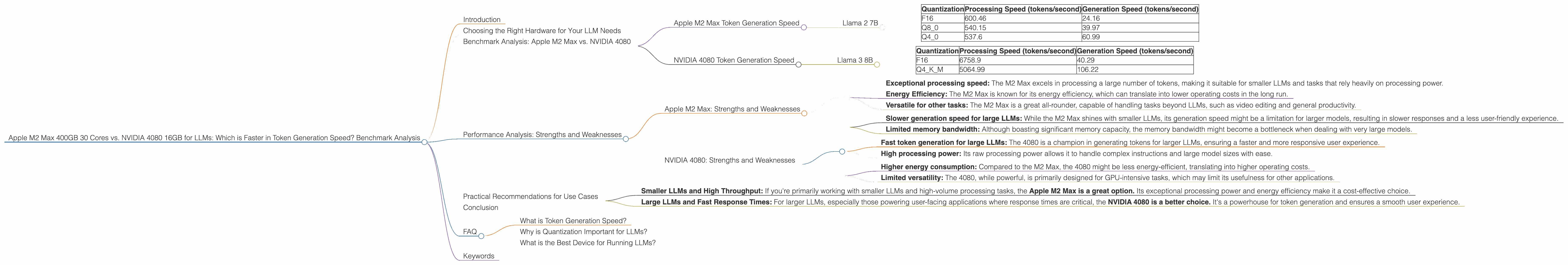

Apple M2 Max (400 GB/s, 30 GPU Cores) vs. NVIDIA 4080 16GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is rapidly evolving, and with it, the need for powerful hardware to run these AI behemoths is growing exponentially. Two popular choices for local LLM deployment are Apple's M2 Max chip and NVIDIA's 4080 GPU. But which reigns supreme when it comes to token generation speed?

This article compares benchmark results for the Apple M2 Max (400 GB/s memory bandwidth, 30 GPU cores) and the NVIDIA 4080 16GB, using Llama 2 7B figures for the M2 Max and Llama 3 8B figures for the 4080. We’ll explore the strengths and weaknesses of each device, providing insights into their suitability for different LLM use cases.

Choosing the Right Hardware for Your LLM Needs

Imagine you're building a house. A powerful crane is essential to lift heavy materials, just like a powerful device is key for running large language models. Both the Apple M2 Max and the NVIDIA 4080 are incredibly powerful, but they excel in different aspects.

The Apple M2 Max is like a well-rounded contractor capable of managing many tasks simultaneously. It combines CPU and GPU cores with high-bandwidth unified memory, making it efficient for various types of workloads, including LLM inference. The NVIDIA 4080, on the other hand, is a specialized construction crew, optimized for heavy-duty tasks like 3D rendering and AI training. It excels at massively parallel arithmetic, making it a strong contender for any model that fits in its 16 GB of memory.

Benchmark Analysis: Apple M2 Max vs. NVIDIA 4080

To understand the performance differences, we'll look at token generation speeds, which directly impact the responsiveness and latency of your LLM application.

Apple M2 Max Token Generation Speed

The Apple M2 Max demonstrates solid performance in token generation, particularly when dealing with smaller models like Llama 2 7B.

Here's a breakdown of its performance based on the provided data:

Llama 2 7B

| Quantization | Prompt Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| F16 | 600.46 | 24.16 |
| Q8_0 | 540.15 | 39.97 |
| Q4_0 | 537.6 | 60.99 |

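As you can see, the M2 Max holds its prompt processing speed fairly steady, at roughly 540 to 600 tokens/second across all three quantization levels. Generation speed depends far more on quantization, rising from 24.16 tokens/second at F16 to 60.99 at Q4_0. Even so, its generation speed is lower than the 4080 figures in the next section, although those were measured on a different model.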
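To make the effect of quantization concrete, the short sketch below divides each generation speed in the table by the F16 figure. The numbers are copied from the table; the function and variable names are only illustrative.

```python
# Generation speeds (tokens/second) for Llama 2 7B on the M2 Max,
# copied from the table above.
m2_max_generation = {"F16": 24.16, "Q8_0": 39.97, "Q4_0": 60.99}


def speedup_vs_baseline(speeds, baseline="F16"):
    """Return each entry's speed divided by the baseline entry's speed."""
    base = speeds[baseline]
    return {name: tps / base for name, tps in speeds.items()}


speedups = speedup_vs_baseline(m2_max_generation)
for name, factor in speedups.items():
    print(f"{name}: {factor:.2f}x the F16 generation speed")
```

On these figures, Q8_0 generates roughly 1.65 times as fast as F16, and Q4_0 roughly 2.5 times as fast.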

NVIDIA 4080 Token Generation Speed

The NVIDIA 4080, a powerhouse in the GPU world, is a force to be reckoned with when handling larger LLMs. Let's examine its capabilities:

Llama 3 8B

| Quantization | Prompt Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| F16 | 6758.9 | 40.29 |
| Q4_K_M | 5064.99 | 106.22 |

The 4080 processes Llama 3 8B prompts at more than 5,000 tokens/second at both precision levels. Its generation speed is also noteworthy, rising from 40.29 tokens/second at F16 to 106.22 with Q4_K_M quantization, about 2.6 times faster.

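Important Note: The M2 Max figures above are for Llama 2 7B, while the 4080 figures are for Llama 3 8B, so the comparison is indicative rather than like-for-like. The data we have for the NVIDIA 4080 also doesn't cover Llama 3 70B models, so we cannot compare the two devices on that larger LLM.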
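To see how these two speeds combine into what a user actually waits for, here is a rough sketch that estimates response time as prompt processing time plus generation time. The speeds come from the two tables above. The prompt and output lengths are hypothetical, and the model ignores load time, sampling overhead, and any slowdown at longer context, so treat the results as ballpark figures only.

```python
def response_time_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate latency as prompt processing time plus token generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing tokens/s, generation tokens/s) from the tables above.
# Note that the two devices were measured on different models.
devices = {
    "M2 Max, Llama 2 7B, Q4_0": (537.6, 60.99),
    "4080, Llama 3 8B, 4-bit (Q4_K_M)": (5064.99, 106.22),
}

PROMPT_TOKENS = 1000  # hypothetical request size
OUTPUT_TOKENS = 250   # hypothetical response size

estimates = {
    name: response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    for name, (pp, tg) in devices.items()
}
for name, seconds in estimates.items():
    print(f"{name}: about {seconds:.1f} s")
```

For this hypothetical request, the estimate comes to roughly 6 seconds on the M2 Max and roughly 2.6 seconds on the 4080, with generation time dominating in both cases.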

Performance Analysis: Strengths and Weaknesses

Apple M2 Max: Strengths and Weaknesses

Strengths:

- Consistent processing speed: The M2 Max processes Llama 2 7B prompts at roughly 540 to 600 tokens/second at every quantization level tested, making it suitable for smaller LLMs and moderate prompt sizes.

- Energy Efficiency: The M2 Max is known for its energy efficiency, which can translate into lower operating costs in the long run.

- Versatile for other tasks: The M2 Max is a great all-rounder, capable of handling tasks beyond LLMs, such as video editing and general productivity.

Weaknesses:

- Slower generation speed for large LLMs: While the M2 Max shines with smaller LLMs, its generation speed might be a limitation for larger models, resulting in slower responses and a less user-friendly experience.

- Limited memory bandwidth: Although the M2 Max offers a large unified memory capacity, its 400 GB/s memory bandwidth can become the bottleneck with very large models, because generating each token means reading the model weights from memory.
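The bandwidth point can be checked with a back-of-the-envelope calculation. If every generated token requires reading all model weights once, generation speed cannot exceed memory bandwidth divided by the size of the weights. The sketch below rests on several assumptions that are not in the benchmark data: a bandwidth of 400 GB/s (reading the "400" in the device name as GB/s), about 6.74 billion parameters for Llama 2 7B, and effective bits per weight of 16, 8.5, and 4.5 for F16, Q8_0, and Q4_0.

```python
BANDWIDTH_GB_PER_S = 400.0  # assumed M2 Max memory bandwidth
PARAMS = 6.74e9             # approximate Llama 2 7B parameter count (assumption)

# Assumed effective bits per weight, including per-block scale factors.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds (tokens/second) from the M2 Max table.
MEASURED_TPS = {"F16": 24.16, "Q8_0": 39.97, "Q4_0": 60.99}


def bandwidth_ceiling_tps(params, bits_per_weight, bandwidth_gb_per_s):
    """Upper bound on tokens/second if each token reads every weight once."""
    weight_bytes = params * bits_per_weight / 8
    return bandwidth_gb_per_s * 1e9 / weight_bytes


ceilings = {
    quant: bandwidth_ceiling_tps(PARAMS, bits, BANDWIDTH_GB_PER_S)
    for quant, bits in BITS_PER_WEIGHT.items()
}
for quant, ceiling in ceilings.items():
    share = MEASURED_TPS[quant] / ceiling
    print(f"{quant}: ceiling {ceiling:.1f} tok/s, "
          f"measured {MEASURED_TPS[quant]} ({share:.0%} of ceiling)")
```

Under these assumptions, the measured F16 speed sits at about four fifths of the ceiling, which is consistent with token generation being limited mainly by memory bandwidth rather than compute.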

NVIDIA 4080: Strengths and Weaknesses

Strengths:

- Fast token generation: The 4080 reached 106.22 tokens/second on Llama 3 8B with Q4_K_M quantization, giving a fast, responsive user experience for models that fit in its 16 GB of memory.

- High processing power: Its prompt processing speed exceeded 5,000 tokens/second on Llama 3 8B, so long prompts are handled with ease.

Weaknesses:

- Higher energy consumption: Compared to the M2 Max, the 4080 might be less energy-efficient, translating into higher operating costs.

- Limited versatility: The 4080, while powerful, is primarily designed for GPU-intensive tasks, which may limit its usefulness for other applications.

Practical Recommendations for Use Cases

The choice between the Apple M2 Max and the NVIDIA 4080 depends on your specific needs and use case:

- Smaller LLMs and High Throughput: If you're primarily working with smaller LLMs and high-volume processing tasks, the Apple M2 Max is a great option. Its exceptional processing power and energy efficiency make it a cost-effective choice.

- Large LLMs and Fast Response Times: For larger LLMs, especially those powering user-facing applications where response times are critical, the NVIDIA 4080 is a better choice, provided the model fits in its 16 GB of memory. It posted the higher token generation speeds in this data and ensures a smooth user experience.

Conclusion

The Apple M2 Max and NVIDIA 4080 are both powerful devices with distinct advantages. The M2 Max offers steady processing speed and energy efficiency for smaller LLMs, while the 4080 posts the higher token generation speeds in this data. Because the two devices were benchmarked on different models, treat the numbers as indicative. The decision ultimately comes down to your specific LLM use case and the priorities for your application.

FAQ

What is Token Generation Speed?

Token generation speed refers to how quickly a device can produce a sequence of tokens, which are the basic units of text in an LLM. Think of it like the speed at which a typewriter can output characters. A faster token generation speed results in faster LLM responses and a more seamless user experience.
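As a rough illustration of how this number is obtained, the sketch below counts the tokens produced by a stream and divides by the elapsed time. The `fake_model` generator is a hypothetical stand-in for a real model, and this is not the method used to produce the benchmark figures in this article.

```python
import time


def tokens_per_second(token_stream, clock=time.perf_counter):
    """Consume a token stream and return (token count, tokens per second)."""
    start = clock()
    count = sum(1 for _ in token_stream)  # pulls tokens one by one
    elapsed = clock() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_model(n_tokens, delay_s=0.001):
    """Hypothetical stand-in for an LLM: yields tokens with a fixed delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


count, tps = tokens_per_second(fake_model(50))
print(f"Generated {count} tokens at {tps:.0f} tokens/second")
```

The `clock` parameter exists only so the function can be checked with a fixed clock. In normal use the default timer is enough.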

Why is Quantization Important for LLMs?

Quantization is a technique used to reduce the size of an LLM by converting its weights from 16- or 32-bit floating-point numbers (such as F16) to lower-precision formats like Q8_0 and Q4_0. This results in smaller model sizes, faster loading times, and faster token generation, usually at the cost of a small loss in output quality.
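The size reduction is simple arithmetic: the parameter count times the bits stored per weight. The sketch below assumes effective sizes of 16, 8.5, and 4.5 bits per weight for F16, Q8_0, and Q4_0 (the extra half bit covers per-block scale factors) and uses nominal parameter counts of 7 and 70 billion. It covers weights only, leaving out the KV cache and runtime overhead.

```python
# Assumed effective bits per weight for each format.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_size_gib(params, bits_per_weight):
    """Approximate size of the model weights in GiB (weights only)."""
    return params * bits_per_weight / 8 / 2**30


for label, params in (("7B", 7e9), ("70B", 70e9)):  # nominal parameter counts
    for quant, bits in BITS_PER_WEIGHT.items():
        print(f"{label} {quant}: about {weights_size_gib(params, bits):.1f} GiB")
```

With these assumptions, a 7B model shrinks from about 13 GiB at F16 to under 4 GiB at Q4_0, while a 70B model still needs well over 16 GiB even at Q4_0.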

What is the Best Device for Running LLMs?

The best device for running LLMs depends on the model size and specific application requirements. There is no singular "best" device, as various factors like processing speed, memory, power consumption, and cost need to be considered.

Keywords

Apple M2 Max, NVIDIA 4080, LLM, Llama model, Token generation speed, Benchmark analysis, Performance, Quantization, Use cases, GPU, Deep learning, NLP, AI, Machine learning