Apple M2 Max 400gb 30cores vs. Apple M3 100gb 10cores for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction to LLM Token Generation Speed and Device Comparison

If you've ever wondered how fast your Mac can dance with a large language model (LLM), you're in the right place! In this article, we'll compare the token generation speeds of two Apple chips: the Apple M2 Max 400gb 30cores and the Apple M3 100gb 10cores.

Think of token generation as the language model's "typing speed": the faster it generates tokens (the building blocks of text), the quicker it can respond to your prompts. We'll compare both chips using the popular Llama 2 family, which ranges from a lightweight 7B to a beefy 70B model; the benchmarks below cover the 7B model only.

We'll dissect the numbers, break down their strengths and weaknesses, and provide actionable recommendations based on real-world benchmarks. So buckle up, fellow tech enthusiasts, and let's embark on this exciting journey!

Apple M2 Max Performance Analysis

Apple M2 Max 400gb 30cores: A Powerhouse for LLMs

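The Apple M2 Max is a strong chip for running LLMs locally. A note on the label: "400gb" most likely refers to memory bandwidth (Apple specifies the M2 Max at 400 GB/s) rather than memory capacity, which tops out at 96 GB on this chip, and "30cores" most likely refers to its GPU core count.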

Let's examine its performance with the Llama 2 7B model, which is a popular choice for various applications.

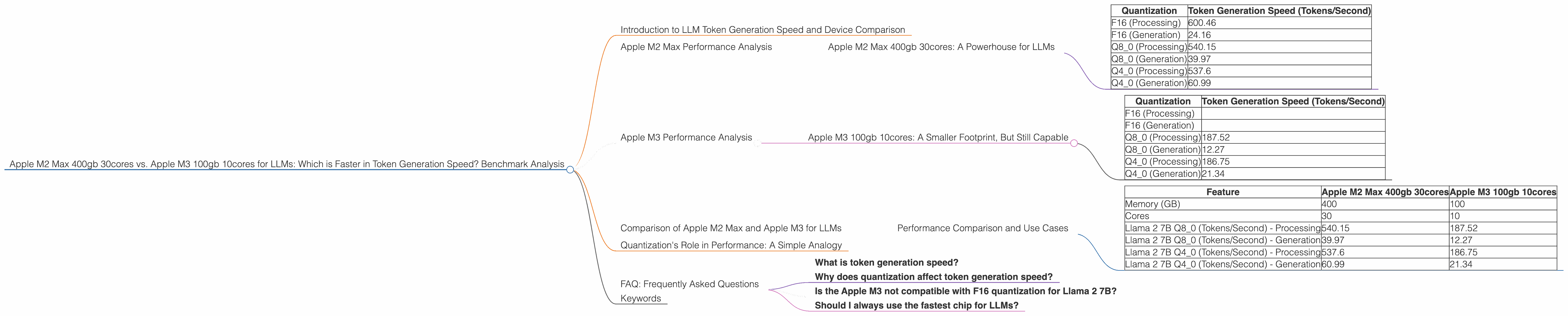

Table 1: Apple M2 Max 400gb 30cores Token Generation Speeds for Llama 2 7B Model

| Quantization (Phase) | Speed (Tokens/Second) |
|---|---|
| F16 (Processing) | 600.46 |
| F16 (Generation) | 24.16 |
| Q8_0 (Processing) | 540.15 |
| Q8_0 (Generation) | 39.97 |
| Q4_0 (Processing) | 537.6 |
| Q4_0 (Generation) | 60.99 |

Insights from the Benchmark:

- The M2 Max excels at processing the prompt, with speeds over 500 tokens/second at every quantization level.

- Token generation is far slower than processing. F16 is the slowest at 24.16 tokens/second, roughly 25 times slower than its processing rate.

- Q8_0 and Q4_0 raise generation to 39.97 and 60.99 tokens/second, but that is still well below the processing rate.
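The gap described in the bullets above can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the Table 1 figures; the function names are illustrative and not part of any benchmark tool.

```python
# Table 1 figures for the M2 Max, in tokens/second.
M2_MAX = {
    "F16": {"processing": 600.46, "generation": 24.16},
    "Q8_0": {"processing": 540.15, "generation": 39.97},
    "Q4_0": {"processing": 537.6, "generation": 60.99},
}


def processing_to_generation(speeds):
    """How many times faster processing is than generation."""
    return speeds["processing"] / speeds["generation"]


def generation_gain_over_f16(table, quant):
    """Generation speed of `quant` relative to F16 (1.0 means no change)."""
    return table[quant]["generation"] / table["F16"]["generation"]


for quant, speeds in M2_MAX.items():
    gap = processing_to_generation(speeds)
    gain = generation_gain_over_f16(M2_MAX, quant)
    print(f"{quant}: processing is {gap:.1f}x generation; "
          f"generation is {gain:.2f}x the F16 rate")
```

On these numbers, Q4_0 generates about 2.5 times as fast as F16, while processing still outpaces generation by roughly 9 to 1.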

Strengths:

- Fast prompt processing, at over 500 tokens/second for every quantization level tested.

- High memory bandwidth (400 GB/s), which helps when running larger models.

Weaknesses:

- Token generation speed can be a bottleneck for interactive applications.

- F16 quantization exhibits the slowest token generation speed.

Apple M3 Performance Analysis

Apple M3 100gb 10cores: A Smaller Footprint, But Still Capable

The Apple M3 is a more modest chip than the M2 Max but is still usable for LLM tasks. As with the M2 Max, the label is easy to misread: "100gb" most likely refers to memory bandwidth (Apple specifies the base M3 at 100 GB/s) rather than memory capacity, and "10cores" most likely refers to its GPU core count. It might be a more budget-friendly option for developers exploring LLMs.

Let's compare its performance with the same Llama 2 7B model.

Table 2: Apple M3 100gb 10cores Token Generation Speeds for Llama 2 7B Model

| Quantization (Phase) | Speed (Tokens/Second) |
|---|---|
| F16 (Processing) | No data |
| F16 (Generation) | No data |
| Q8_0 (Processing) | 187.52 |
| Q8_0 (Generation) | 12.27 |
| Q4_0 (Processing) | 186.75 |
| Q4_0 (Generation) | 21.34 |

Insights from the Benchmark:

- There is no data for F16 on the Apple M3 with Llama 2 7B. The benchmark does not say why; one plausible explanation is memory, since the F16 weights of a 7B model take roughly 14 GB.

- The M3 processes tokens far more slowly than the M2 Max: with both Q8_0 and Q4_0 it reaches about 35% of the M2 Max's speed.

- The M3's token generation is also much slower: about 31% of the M2 Max's speed with Q8_0 and about 35% with Q4_0.
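The percentages in this list come straight from dividing the M3 figures by the M2 Max figures. A short sketch, using only the numbers in Tables 1 and 2 (the helper name is illustrative):

```python
# Llama 2 7B figures from Tables 1 and 2, in tokens/second.
M2_MAX = {
    "Q8_0": {"processing": 540.15, "generation": 39.97},
    "Q4_0": {"processing": 537.6, "generation": 60.99},
}
M3 = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}


def m3_share_of_m2_max(quant, phase):
    """M3 speed as a fraction of the M2 Max speed for one table row."""
    return M3[quant][phase] / M2_MAX[quant][phase]


for quant in M3:
    for phase in ("processing", "generation"):
        share = m3_share_of_m2_max(quant, phase)
        print(f"{quant} {phase}: M3 runs at {share:.0%} of the M2 Max")
```

F16 is left out because the benchmark has no M3 result to compare.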

Strengths:

- More affordable option than the M2 Max.

- Generation at 21.34 tokens/second with Q4_0 is still workable for a 7B model.

Weaknesses:

- Processing and generation both run at roughly a third of the M2 Max's speed.

- No F16 data for the M3 with Llama 2 7B, so F16 performance on this chip is unknown.

Comparison of Apple M2 Max and Apple M3 for LLMs

Performance Comparison and Use Cases

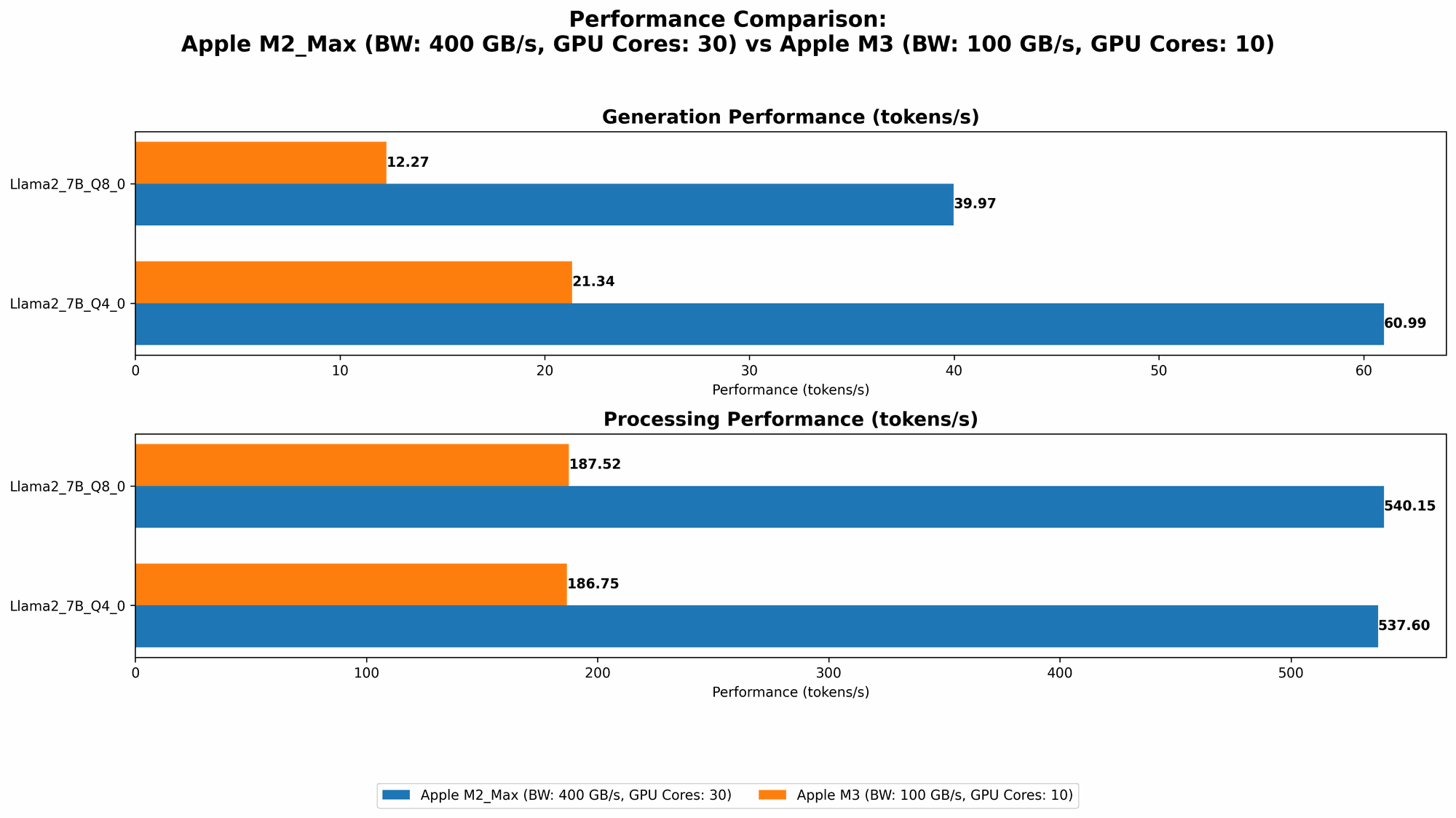

Now that we've analyzed both chips individually, let's put them head-to-head for a comprehensive comparison:

Table 3: Comparing Apple M2 Max and Apple M3 for Llama 2 7B Model

| Feature | Apple M2 Max 400gb 30cores | Apple M3 100gb 10cores |
|---|---|---|
| Memory bandwidth (GB/s) | 400 | 100 |
| GPU cores | 30 | 10 |
| Llama 2 7B Q8_0 (Tokens/Second) - Processing | 540.15 | 187.52 |
| Llama 2 7B Q8_0 (Tokens/Second) - Generation | 39.97 | 12.27 |
| Llama 2 7B Q4_0 (Tokens/Second) - Processing | 537.6 | 186.75 |
| Llama 2 7B Q4_0 (Tokens/Second) - Generation | 60.99 | 21.34 |

Key Takeaways:

- M2 Max is the clear winner for processing speed: If you need to quickly process massive amounts of text, the M2 Max is your go-to choice. Its generation is far slower than its own processing, but still about three times as fast as the M3's.

- M3 is a more affordable option: For smaller applications, the M3 can be a good choice. But if your application relies on high-speed token generation, the M2 Max will be a better fit.

Use Case Recommendations:

- M2 Max: Ideal for projects that demand high processing speed for LLMs, like:

  - Large-scale text analysis: Processing vast amounts of text data for sentiment analysis, summarization, or topic extraction.

  - Batch inference: Running many prompts through a model offline, where prompt processing accounts for most of the work. (These benchmarks measure inference only and say nothing about training.)

- M3: A reasonable fit for projects where budget is a concern and token generation speed isn't critical:

  - Simple chatbots: For basic conversational interactions.

  - Text summarization for smaller documents: Summarizing short articles or documents.

Quantization's Role in Performance: A Simple Analogy

Quantization is like resizing a photo. Imagine you have a high-resolution image (F16): it has a lot of detail but takes up a lot of memory. When you resize it (Q8_0 or Q4_0), you reduce the file size (memory) but lose some detail.

In LLMs, quantization reduces the model's size, allowing it to fit on smaller storage devices and consume less memory. However, this comes at the cost of slightly reduced accuracy.
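To put rough numbers on the size reduction, here is a back-of-the-envelope sketch. It rests on two assumptions that are not stated in the benchmark: a nominal 7 billion parameters, and bits-per-weight values of 16, 8.5, and 4.5, which are the figures for llama.cpp's F16, Q8_0, and Q4_0 block formats. Real model files will differ somewhat.

```python
# Assumption: nominal parameter count for a "7B" model.
PARAMETERS = 7e9

# Assumption: approximate bits per weight, including per-block scale factors.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def estimated_size_gb(parameters, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


for quant, bits in BITS_PER_WEIGHT.items():
    size = estimated_size_gb(PARAMETERS, bits)
    print(f"{quant}: about {size:.1f} GB of weights")
```

Under these assumptions, the weights shrink from about 14 GB at F16 to about 4 GB at Q4_0.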

FAQ: Frequently Asked Questions

What is token generation speed?

Token generation speed is how fast a language model can produce text (in the form of tokens). It's like the model's typing speed, determining how quickly it can respond to your prompts.
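To see what these speeds mean for a single reply, the sketch below estimates the response time as prompt processing time plus generation time. The Q4_0 speeds come from the tables above; the prompt and reply lengths (512 and 128 tokens) are hypothetical values chosen for illustration, and the estimate ignores model loading and other overhead.

```python
def estimate_response_seconds(prompt_tokens, output_tokens,
                              processing_tps, generation_tps):
    """Time to read the prompt plus time to write the reply, in seconds."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload: a 512-token prompt and a 128-token reply.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 128

# Q4_0 speeds from the benchmark tables, in tokens/second.
m2_max_seconds = estimate_response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS,
                                           537.6, 60.99)
m3_seconds = estimate_response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS,
                                       186.75, 21.34)

print(f"M2 Max: about {m2_max_seconds:.1f} s")
print(f"M3: about {m3_seconds:.1f} s")
```

Even though processing is much faster than generation, the reply itself takes most of the time on both chips, which is why generation speed matters so much for interactive use.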

Why does quantization affect token generation speed?

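Quantization shrinks the model by using fewer bits to represent each weight. To generate a token, the chip has to read the model's weights from memory, so a smaller model means less data to move per token and, typically, faster generation. In Table 1, generation on the M2 Max rises from 24.16 tokens/second with F16 to 39.97 with Q8_0 and 60.99 with Q4_0. The trade-off is a slight loss of accuracy.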
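One way to sanity-check the link between model size and generation speed is to multiply the two: if every weight is read once per generated token, the product approximates how much data the chip moves per second. The sketch below does this for the M2 Max figures in Table 1. The parameter count (7e9) and bits-per-weight values (16, 8.5, 4.5) are assumptions, not benchmark data, and the "read once per token" model is a simplification.

```python
# Assumptions: nominal 7B parameters and approximate bits per weight.
PARAMETERS = 7e9
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# M2 Max generation speeds from Table 1, in tokens/second.
M2_MAX_GENERATION = {"F16": 24.16, "Q8_0": 39.97, "Q4_0": 60.99}


def weights_gb(quant):
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return PARAMETERS * BITS_PER_WEIGHT[quant] / 8 / 1e9


def implied_traffic_gb_s(quant, tokens_per_second):
    """Data read per second if all weights are read once per token."""
    return weights_gb(quant) * tokens_per_second


for quant, speed in M2_MAX_GENERATION.items():
    traffic = implied_traffic_gb_s(quant, speed)
    print(f"{quant}: about {traffic:.0f} GB/s of weight reads")
```

The three results fall between roughly 240 and 340 GB/s. If the "400gb" in the chip's label is read as 400 GB/s of memory bandwidth, that would be consistent with generation being limited mainly by how fast weights can be read from memory.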

Is the Apple M3 not compatible with F16 quantization for Llama 2 7B?

It's unclear. The benchmark has no F16 results for the Apple M3, but missing data is not proof of incompatibility. The gap could come from limits in the tools or hardware used for testing; for example, the F16 weights of a 7B model need roughly 14 GB of memory. Consult the relevant hardware and software documentation for specific compatibility details.

Should I always use the fastest chip for LLMs?

Not necessarily. The best chip for your LLM application depends on your specific requirements and budget. A faster chip might be overkill for smaller projects, leading to unnecessary cost.

Keywords

Apple M2 Max, Apple M3, LLM, Llama 2, token generation speed, processing speed, quantization, F16, Q8_0, Q4_0, benchmark, comparison, performance analysis, use cases, development, large language models, AI, natural language processing, NLP.