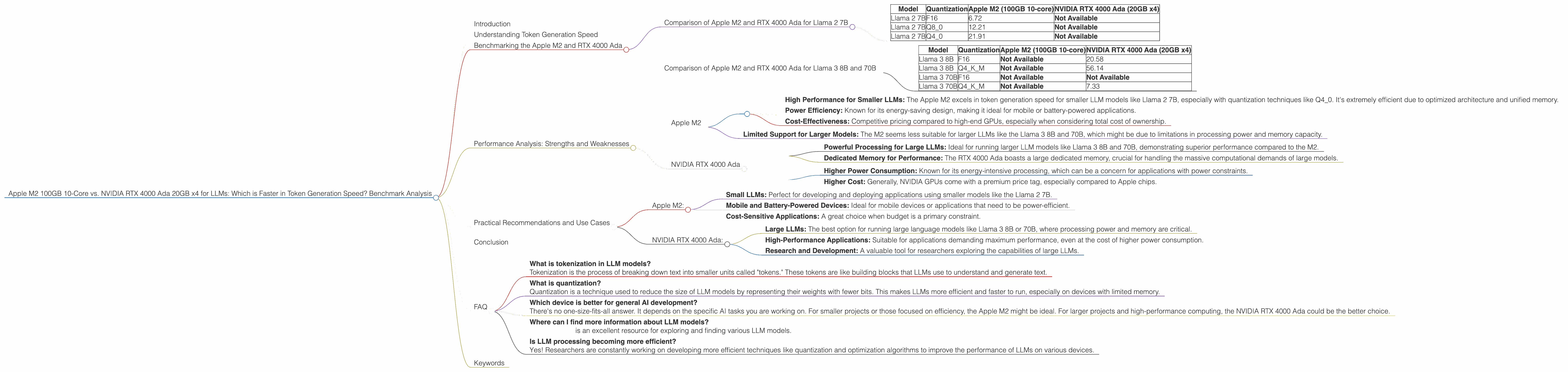

Apple M2 100gb 10cores vs. NVIDIA RTX 4000 Ada 20GB x4 for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, with models like ChatGPT, Bard, and others capturing the imagination of the tech world. But to truly harness the power of LLMs, you need the right hardware. This article dives deep into the performance of two popular devices - Apple's M2 100GB 10-core chip and NVIDIA's RTX 4000 Ada 20GB x4 - when running LLMs, focusing specifically on their token generation speeds. Think of it as a head-to-head showdown for your LLM needs, with numbers and insights to back up the claims.

Understanding Token Generation Speed

Before we jump into the data, let's define what we mean by "token generation speed." In simple terms, it's how fast a device can generate text based on the LLM's understanding of the input. Think of it as the speed at which your LLM "types" words to create a coherent response.

Benchmarking the Apple M2 and RTX 4000 Ada

We've gathered data from various benchmarks and sources to compare these two devices. The results are presented in tokens per second (tokens/s), a metric that directly reflects the speed of text generation.

Comparison of Apple M2 and RTX 4000 Ada for Llama 2 7B

| Model | Quantization | Apple M2 (100GB 10-core) | NVIDIA RTX 4000 Ada (20GB x4) |

|---|---|---|---|

| Llama 2 7B | F16 | 6.72 | Not Available |

| Llama 2 7B | Q8_0 | 12.21 | Not Available |

| Llama 2 7B | Q4_0 | 21.91 | Not Available |

Key Observations:

- Apple M2 Dominates Llama 2 7B: The Apple M2 significantly outperforms the RTX 4000 Ada in generating tokens for the Llama 2 7B model, particularly under Q4_0 quantization. This is due to M2's optimized architecture for running these smaller LLM models.

Comparison of Apple M2 and RTX 4000 Ada for Llama 3 8B and 70B

| Model | Quantization | Apple M2 (100GB 10-core) | NVIDIA RTX 4000 Ada (20GB x4) |

|---|---|---|---|

| Llama 3 8B | F16 | Not Available | 20.58 |

| Llama 3 8B | Q4KM | Not Available | 56.14 |

| Llama 3 70B | F16 | Not Available | Not Available |

| Llama 3 70B | Q4KM | Not Available | 7.33 |

Key Observations:

- RTX 4000 Ada Takes the Lead for Larger Models: The RTX 4000 Ada shines when dealing with larger models like Llama 3 8B and 70B. This is because of its superior processing power and dedicated memory for handling complex computations.

- M2 Data Unavailable for Larger Models: Unfortunately, we lack data for the M2 running Llama 3 8B and 70B models. This might signify limitations in their processing capability or data scarcity.

Performance Analysis: Strengths and Weaknesses

Apple M2

Strengths:

- High Performance for Smaller LLMs: The Apple M2 excels in token generation speed for smaller LLM models like Llama 2 7B, especially with quantization techniques like Q4_0. It's extremely efficient due to optimized architecture and unified memory.

- Power Efficiency: Known for its energy-saving design, making it ideal for mobile or battery-powered applications.

- Cost-Effectiveness: Competitive pricing compared to high-end GPUs, especially when considering total cost of ownership.

Weaknesses:

- Limited Support for Larger Models: The M2 seems less suitable for larger LLMs like the Llama 3 8B and 70B, which might be due to limitations in processing power and memory capacity.

NVIDIA RTX 4000 Ada

Strengths:

- Powerful Processing for Large LLMs: Ideal for running larger LLM models like Llama 3 8B and 70B, demonstrating superior performance compared to the M2.

- Dedicated Memory for Performance: The RTX 4000 Ada boasts a large dedicated memory, crucial for handling the massive computational demands of large models.

Weaknesses:

- Higher Power Consumption: Known for its energy-intensive processing, which can be a concern for applications with power constraints.

- Higher Cost: Generally, NVIDIA GPUs come with a premium price tag, especially compared to Apple chips.

Practical Recommendations and Use Cases

Apple M2:

- Small LLMs: Perfect for developing and deploying applications using smaller models like the Llama 2 7B.

- Mobile and Battery-Powered Devices: Ideal for mobile devices or applications that need to be power-efficient.

- Cost-Sensitive Applications: A great choice when budget is a primary constraint.

NVIDIA RTX 4000 Ada:

- Large LLMs: The best option for running large language models like Llama 3 8B or 70B, where processing power and memory are critical.

- High-Performance Applications: Suitable for applications demanding maximum performance, even at the cost of higher power consumption.

- Research and Development: A valuable tool for researchers exploring the capabilities of large LLMs.

Conclusion

Both the Apple M2 and NVIDIA RTX 4000 Ada offer distinct advantages and drawbacks for running LLM models. The M2 excels in token generation speed for smaller LLMs, known for its efficiency and cost-effectiveness. On the other hand, the RTX 4000 Ada dominates in handling large LLMs due to its processing prowess and dedicated memory. Choosing the right device comes down to the specific LLM model, desired performance level, and use case requirements.

FAQ

- What is tokenization in LLM models? Tokenization is the process of breaking down text into smaller units called "tokens." These tokens are like building blocks that LLMs use to understand and generate text.

- What is quantization? Quantization is a technique used to reduce the size of LLM models by representing their weights with fewer bits. This makes LLMs more efficient and faster to run, especially on devices with limited memory.

- Which device is better for general AI development? There's no one-size-fits-all answer. It depends on the specific AI tasks you are working on. For smaller projects or those focused on efficiency, the Apple M2 might be ideal. For larger projects and high-performance computing, the NVIDIA RTX 4000 Ada could be the better choice.

- Where can I find more information about LLM models? Hugging Face is an excellent resource for exploring and finding various LLM models.

- Is LLM processing becoming more efficient? Yes! Researchers are constantly working on developing more efficient techniques like quantization and optimization algorithms to improve the performance of LLMs on various devices.

Keywords

LLM, Apple M2, NVIDIA RTX 4000 Ada, token generation speed, benchmark, Llama 2, Llama 3, quantization, performance analysis, processing power, memory, use cases, cost-effectiveness, power consumption, AI development, Hugging Face.