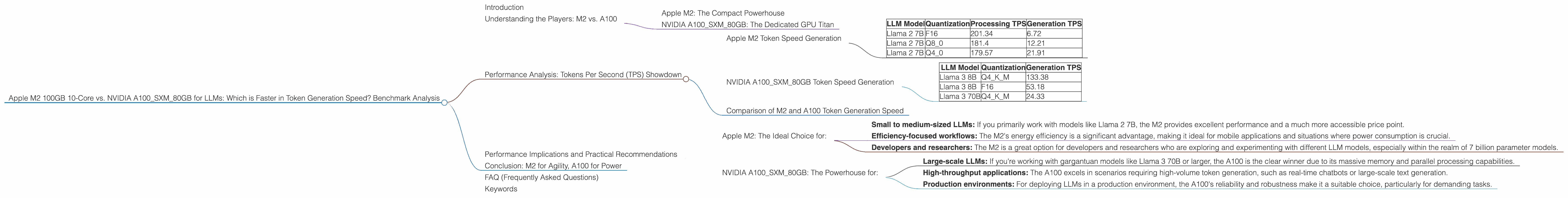

Apple M2 (100 GB/s, 10 GPU Cores) vs. NVIDIA A100 SXM 80GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

In the world of large language models (LLMs), speed is king. Being able to generate text, translate languages, write different kinds of creative content, and answer your questions quickly is crucial for a smooth and enjoyable user experience. But with the ever-growing size and complexity of LLMs, choosing the right hardware for efficient performance becomes a critical decision.

This article compares two very different options for running LLMs on your own hardware: the Apple M2 (the variant with a 10-core GPU and 100 GB/s of memory bandwidth) and the NVIDIA A100 SXM 80GB GPU. We'll analyze their token generation speed across several LLM models, highlight their strengths and weaknesses, and help you determine the best fit for your specific use case.

Understanding the Players: M2 vs. A100

Apple M2: The Compact Powerhouse

The Apple M2 is a system-on-a-chip designed for both performance and efficiency. The configuration discussed here has a 10-core GPU and roughly 100 GB/s of unified memory bandwidth. Note that the 100 figure is bandwidth, not capacity: the base M2 is offered with at most 24GB of unified memory. Because the CPU and GPU share that memory, the M2 can run quantized LLMs without a discrete graphics card, and its low power draw makes it a good fit for laptops and compact desktops.

NVIDIA A100 SXM 80GB: The Dedicated GPU Titan

The NVIDIA A100 SXM 80GB, on the other hand, is a data-center graphics processing unit (GPU) designed for deep learning and high-performance computing. With 80GB of HBM2e memory and dedicated Tensor Cores, the A100 excels at the parallel matrix arithmetic that LLM inference depends on.

Performance Analysis: Tokens Per Second (TPS) Showdown

To analyze the performance of the M2 and A100, we'll be looking at their token generation speed measured in tokens per second (TPS). This metric reflects how quickly each device can process and generate output for a given LLM model.
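As a rough illustration of how a TPS figure is obtained, the sketch below times a generation call and divides the token count by the elapsed wall-clock time. The `generate` callable and the `fake_generate` stand-in are hypothetical placeholders, not part of any benchmark tool used for the tables in this article.

```python
import time
from typing import Callable


def tokens_per_second(num_tokens: int, elapsed_seconds: float) -> float:
    """Return throughput in tokens per second."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


def measure_tps(generate: Callable[[int], int], num_tokens: int) -> float:
    """Time one call to `generate` and convert it to tokens per second.

    `generate` is a hypothetical callable that produces up to `num_tokens`
    tokens and returns how many it actually produced.
    """
    start = time.perf_counter()
    produced = generate(num_tokens)
    elapsed = time.perf_counter() - start
    return tokens_per_second(produced, elapsed)


def fake_generate(num_tokens: int) -> int:
    """Stand-in for a real model: sleeps briefly, then reports the count."""
    time.sleep(0.01)
    return num_tokens


if __name__ == "__main__":
    print(f"{measure_tps(fake_generate, 128):.1f} tokens/s")
```

Real benchmarks usually report prompt processing and generation separately, because the two phases stress the hardware differently.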

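Note: The data used in this analysis comes from various benchmarks and community contributions. We compare the two devices on the same benchmarks wherever possible. The figures available here cover different models on each device (Llama 2 7B on the M2, Llama 3 8B and 70B on the A100), so the comparison is indirect.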

Apple M2 Token Generation Speed

| LLM Model | Quantization | Prompt Processing (TPS) | Generation (TPS) |
|---|---|---|---|
| Llama 2 7B | F16 | 201.34 | 6.72 |
| Llama 2 7B | Q8_0 | 181.4 | 12.21 |
| Llama 2 7B | Q4_0 | 179.57 | 21.91 |

As the table shows, prompt processing on the M2 stays in the 180 to 200 TPS range across quantization levels for the Llama 2 7B model, while generation speed climbs from 6.72 TPS at F16 to 21.91 TPS at Q4_0.

Quantization: Think of quantization as a way to compress the LLM's parameters by storing each weight with fewer bits, making the model smaller while still achieving good output quality.

- F16: The standard 16-bit floating-point format commonly used for LLM weights. It serves as the unquantized baseline here.

- Q8_0: An 8-bit quantization format, roughly half the size of F16.

- Q4_0: A 4-bit quantization format, roughly a quarter the size of F16.
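To make the idea of compressing parameters concrete, here is a minimal sketch of symmetric block quantization: a group of weights shares one floating-point scale, and each weight is stored as a small signed integer. This is a simplified illustration under assumed conventions (the function names and the rounding scheme are hypothetical) and is not the exact on-disk layout of Q8_0 or Q4_0.

```python
from typing import List, Tuple


def quantize_block(values: List[float], bits: int = 4) -> Tuple[float, List[int]]:
    """Quantize one block of weights to signed integers with a shared scale."""
    qmax = 2 ** (bits - 1) - 1  # largest positive code, e.g. 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    # An all-zero block gets a dummy scale so the division below is safe.
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate weights from the integer codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    weights = [0.7, -0.3, 0.12, 0.0]
    scale, codes = quantize_block(weights, bits=4)
    restored = dequantize_block(scale, codes)
    for original, approx in zip(weights, restored):
        print(f"{original:+.3f} -> {approx:+.3f}")
```

The restored values differ slightly from the originals, which is the accuracy cost that lower-precision formats trade for size and speed.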

The M2's generation speed benefits most from lower-precision quantization: Q4_0 generates tokens about 3.3 times faster than F16 (21.91 vs. 6.72 TPS), and Q8_0 about 1.8 times faster. Lower precision can cost a little accuracy, but the speed gain is often worth it, as these numbers show.
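The relationship between model size and generation speed can be checked with a little arithmetic. The sketch below compares the measured speedup over F16 with the reduction in bits per weight. The bits-per-weight values are assumptions: 8.5 and 4.5 correspond to a block of 32 weights plus one 16-bit scale, which is how these formats are commonly described, so treat them as approximations.

```python
# Generation TPS for Llama 2 7B on the M2, copied from the table above.
generation_tps = {"F16": 6.72, "Q8_0": 12.21, "Q4_0": 21.91}

# Assumed storage cost per weight in bits (approximate, see lead-in).
bits_per_weight = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# How much faster each format generates tokens compared with F16.
speedup = {q: tps / generation_tps["F16"] for q, tps in generation_tps.items()}

# How much smaller each format is compared with F16.
shrink = {q: bits_per_weight["F16"] / b for q, b in bits_per_weight.items()}

if __name__ == "__main__":
    for quant in generation_tps:
        print(f"{quant}: {speedup[quant]:.2f}x faster, {shrink[quant]:.2f}x smaller")
```

On these figures the speedup tracks the size reduction fairly closely (roughly 3.3x faster for a model about 3.6x smaller at Q4_0), which is consistent with token generation being limited mainly by how quickly the weights can be read from memory.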

NVIDIA A100 SXM 80GB Token Generation Speed

| LLM Model | Quantization | Generation (TPS) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 133.38 |
| Llama 3 8B | F16 | 53.18 |
| Llama 3 70B | Q4_K_M | 24.33 |

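The A100, as expected, posts much higher generation speeds: 133.38 TPS for Llama 3 8B with 4-bit quantization, compared with 21.91 TPS for the M2 on the slightly smaller Llama 2 7B at Q4_0. It also runs Llama 3 70B at 24.33 TPS, a model for which no M2 figures are available in this data.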

- Q4_K_M (written Q4KM in some benchmark listings): A 4-bit "k-quant" format from llama.cpp, where the M denotes the medium variant. Despite the name, it does not refer to k-means clustering. It is widely used as a balance between accuracy and model size.

Comparison of M2 and A100 Token Generation Speed

The M2 handles smaller LLMs like Llama 2 7B at usable interactive speeds, especially with lower-precision quantization, and it does so at a fraction of the A100's power draw and cost. That efficiency, rather than raw speed, is where it shines.

In absolute terms, however, the A100 is faster on every figure reported here, and it is the only one of the two with results for a model as large as Llama 3 70B, demonstrating its raw horsepower and memory capacity.

To illustrate the difference, think of it like this: the M2 is like a nimble sports car, quick on the streets and agile in tight corners, while the A100 is a powerful race car built for speed on the track. Both are great vehicles, but each excels in different scenarios.
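To put numbers on the gap, the sketch below divides the A100 generation figures by the closest M2 figures from the two tables. The pairing is an assumption made for illustration: the models (Llama 3 8B versus Llama 2 7B) and the 4-bit formats differ, so the ratios are indicative only.

```python
from typing import List, Tuple

# (label, A100 generation TPS, M2 generation TPS), values from the tables above.
# The A100 rows are Llama 3 8B and the M2 rows are Llama 2 7B.
pairs: List[Tuple[str, float, float]] = [
    ("F16", 53.18, 6.72),
    ("4-bit", 133.38, 21.91),
]


def throughput_ratio(a100_tps: float, m2_tps: float) -> float:
    """Return how many times faster the A100 figure is than the M2 figure."""
    return a100_tps / m2_tps


ratios = {label: throughput_ratio(a100, m2) for label, a100, m2 in pairs}

if __name__ == "__main__":
    for label, ratio in ratios.items():
        print(f"{label}: A100 is about {ratio:.1f}x the M2 generation speed")
```

With these inputs the A100 comes out roughly six to eight times faster at generation, even though it is running a slightly larger model in each pair.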

Performance Implications and Practical Recommendations

The choice between the M2 and A100 ultimately depends on your specific needs and the types of LLMs you intend to run. Here's a breakdown:

Apple M2: The Ideal Choice for:

- Small to medium-sized LLMs: If you primarily work with models like Llama 2 7B, the M2 provides excellent performance and a much more accessible price point.

- Efficiency-focused workflows: The M2's energy efficiency is a significant advantage, making it ideal for mobile applications and situations where power consumption is crucial.

- Developers and researchers: The M2 is a great option for developers and researchers who are exploring and experimenting with different LLM models, especially within the realm of 7 billion parameter models.

NVIDIA A100 SXM 80GB: The Powerhouse for:

- Large-scale LLMs: If you're working with gargantuan models like Llama 3 70B or larger, the A100 is the clear winner due to its massive memory and parallel processing capabilities.

- High-throughput applications: The A100 excels in scenarios requiring high-volume token generation, such as real-time chatbots or large-scale text generation.

- Production environments: For deploying LLMs in a production environment, the A100's reliability and robustness make it a suitable choice, particularly for demanding tasks.
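The memory point in the list above can be made concrete with a back-of-the-envelope estimate of how much space a model's weights need. The sketch below is a simplification under stated assumptions: it counts weights only (no KV cache, activations, or runtime overhead), uses round parameter counts, and takes 4.5 bits per weight as a rough stand-in for 4-bit formats. The 24GB device is a hypothetical example.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Estimate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_memory(num_params: float, bits_per_weight: float, device_gb: float) -> bool:
    """Check whether the weights alone fit in the given device memory."""
    return weight_memory_gb(num_params, bits_per_weight) <= device_gb


if __name__ == "__main__":
    models = {"7B": 7e9, "70B": 70e9}
    formats = {"F16": 16.0, "4-bit (assumed 4.5 bpw)": 4.5}
    devices = {"A100 80GB": 80.0, "hypothetical 24GB machine": 24.0}

    for model_name, params in models.items():
        for format_name, bits in formats.items():
            size = weight_memory_gb(params, bits)
            for device_name, capacity in devices.items():
                verdict = "fits" if fits_in_memory(params, bits, capacity) else "does not fit"
                print(f"{model_name} {format_name}: {size:.1f} GB, {verdict} on {device_name}")
```

By this estimate a 70B model at F16 needs about 140 GB for weights alone, more than a single 80GB card holds, while a 4-bit version comes in around 39 GB, which is one reason large models are usually benchmarked in quantized form.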

Conclusion: M2 for Agility, A100 for Power

The choice between the Apple M2 and the NVIDIA A100 SXM 80GB for running LLMs boils down to a balancing act between performance, efficiency, and cost. The M2 is a fantastic choice for developers and researchers who want a capable yet accessible option for working with smaller LLMs. The A100, on the other hand, reigns supreme for handling massive LLM models and high-throughput applications.

Ultimately, the best device for you depends on your specific needs and the scale of your work.

FAQ (Frequently Asked Questions)

Q: What are the differences between F16 and Q4_0 quantization?

A: F16 is a standard floating-point format that uses 16 bits to represent numbers, providing good accuracy. Q4_0 is a 4-bit integer quantization method, which is more compressed but can lead to slight accuracy losses.

Q: What are the benefits of running LLMs locally?

A: Running LLMs locally keeps your data on your own hardware, which helps with privacy and security. You also avoid the network round trips of cloud-hosted models, although the actual inference speed still depends on the hardware you run on.

Q: What are some other options for running LLMs locally besides the M2 and A100?

A: Other popular options include the NVIDIA RTX 3090 and RTX 4090 GPUs, the AMD Radeon RX 7900 XT, and even the latest AMD Ryzen CPUs. However, the performance of these devices may vary depending on the specific LLM model and workload.

Keywords

Apple M2, NVIDIA A100, LLM, Large Language Model, Token Generation Speed, TPS, Quantization, Llama 2, Llama 3, F16, Q4_0, Q8_0, Q4_K_M, Performance, Benchmark, Comparison, GPU, CPU, Inference, Local, Developer, Researcher, Production, Efficiency, Cost, Data Privacy, Security.