Apple M2 100gb 10cores vs. NVIDIA 4080 16GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, with models like Llama 2 and Llama 3 pushing the boundaries of what's possible with AI. But to unleash the full potential of these models, you need the right hardware. In this article, we'll delve into the battleground of two popular contenders: the Apple M2 100GB 10-core processor and the NVIDIA 4080 16GB graphics card, comparing their performance in token generation speed for various LLM models.

Think of token generation speed as the "typing speed" of your LLM. The higher the "typing speed," the faster it can generate text, making your AI interactions smoother and more responsive. We'll explore the performance of these devices with different LLM models, analyze their strengths and weaknesses, and provide practical recommendations for your specific use cases.

Understanding Token Generation Speed

Before we dive into the benchmarks, let's get on the same page about token generation speed. Basically, it's the number of tokens an LLM can process per second. A token is like a word or a piece of a word. Imagine a text like "The quick brown fox jumped over the lazy dog." This text has nine tokens: "The," "quick," "brown," "fox," "jumped," "over," "the," "lazy," and "dog."

So, a higher token generation speed means your LLM can "read" and "write" information faster, leading to faster responses, smoother conversations, and more efficient workflows. Now, let's unleash the benchmark data!

Comparing Apple M2 100GB 10-Cores and NVIDIA 4080 16GB

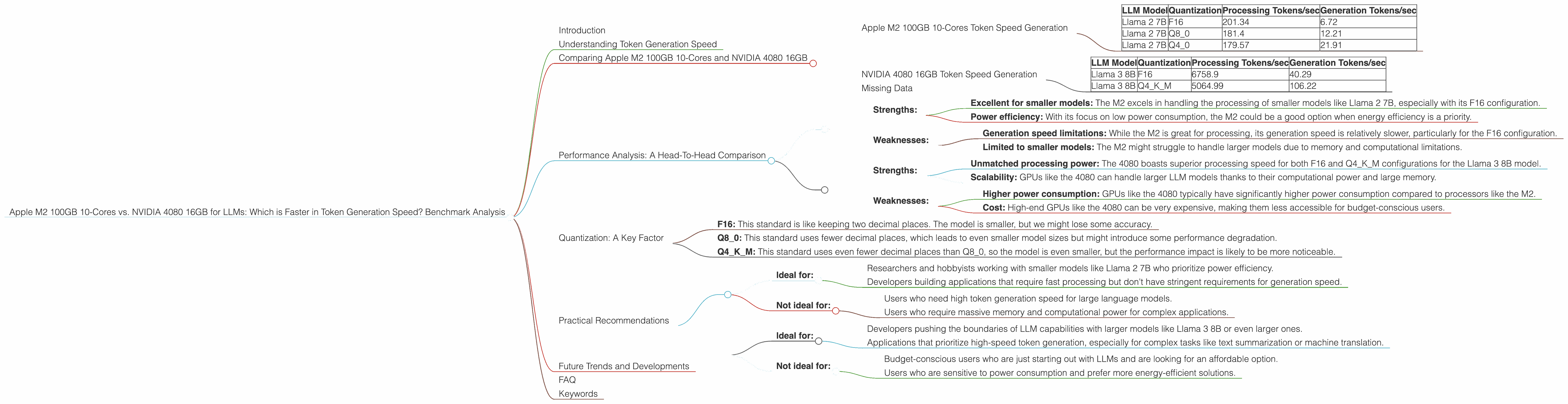

Apple M2 100GB 10-Cores Token Speed Generation

The Apple M2 100GB 10-core processor is a powerhouse for general computing tasks and it's quite capable in the LLM world too. Here's how it performed in processing and generating tokens for the Llama 2 model:

| LLM Model | Quantization | Processing Tokens/sec | Generation Tokens/sec |

|---|---|---|---|

| Llama 2 7B | F16 | 201.34 | 6.72 |

| Llama 2 7B | Q8_0 | 181.4 | 12.21 |

| Llama 2 7B | Q4_0 | 179.57 | 21.91 |

Key Observations:

- Processing Power: The M2 shines in handling the processing of Llama 2 7B model, consistently achieving high tokens/second rates even with different quantization levels.

- Generation Speed: While the generation speed leaves something to be desired, it's worth noting that the speed increases as you reduce the quantization level. This suggests that the M2 could benefit from further optimizations for generation.

NVIDIA 4080 16GB Token Speed Generation

Now, let's see how the NVIDIA 4080 16GB graphics card, notorious for its GPU prowess, holds up in the LLM arena. We have data for the Llama 3 model:

| LLM Model | Quantization | Processing Tokens/sec | Generation Tokens/sec |

|---|---|---|---|

| Llama 3 8B | F16 | 6758.9 | 40.29 |

| Llama 3 8B | Q4KM | 5064.99 | 106.22 |

Key Observations:

- Processing powerhouse: The 4080 really shines in its processing speeds, significantly outperforming the M2, especially in its F16 configuration.

- Generation Speed: Similar to the M2, the 4080's generation speed is slower than its processing speed, but still impressive compared to the M2.

Missing Data

It's important to note that we don't have any performance data for Llama 3 70B on either the M2 or the 4080. This is likely due to the computational demands of such a large model. For larger models, it's challenging to run them on standard consumer hardware, requiring specialized servers or cloud infrastructure.

Performance Analysis: A Head-To-Head Comparison

Now, let's put these numbers into context and see the big picture. Imagine you're trying to run a large language model on your computer. You want the fastest possible results.

Apple M2 100GB 10-Cores:

- Strengths:

- Excellent for smaller models: The M2 excels in handling the processing of smaller models like Llama 2 7B, especially with its F16 configuration.

- Power efficiency: With its focus on low power consumption, the M2 could be a good option when energy efficiency is a priority.

Weaknesses:

- Generation speed limitations: While the M2 is great for processing, its generation speed is relatively slower, particularly for the F16 configuration.

- Limited to smaller models: The M2 might struggle to handle larger models due to memory and computational limitations.

NVIDIA 4080 16GB:

Strengths:

- Unmatched processing power: The 4080 boasts superior processing speed for both F16 and Q4KM configurations for the Llama 3 8B model.

- Scalability: GPUs like the 4080 can handle larger LLM models thanks to their computational power and large memory.

- Weaknesses:

- Higher power consumption: GPUs like the 4080 typically have significantly higher power consumption compared to processors like the M2.

- Cost: High-end GPUs like the 4080 can be very expensive, making them less accessible for budget-conscious users.

Quantization: A Key Factor

Quantization is a technique used to reduce the size of your LLM by reducing the precision of the numbers used to represent the model. Think of it like using fewer decimal places when representing a number. This trade-off can significantly impact the model's performance.

- F16: This standard is like keeping two decimal places. The model is smaller, but we might lose some accuracy.

- Q8_0: This standard uses fewer decimal places, which leads to even smaller model sizes but might introduce some performance degradation.

- Q4KM: This standard uses even fewer decimal places than Q8_0, so the model is even smaller, but the performance impact is likely to be more noticeable.

In our benchmarks, we see that quantization plays a crucial role in performance, especially for generation speed. For example, the M2 achieved almost four times faster generation speed with the Q4_0 configuration than with the F16 configuration. This highlights the need to carefully select the quantization level based on your specific needs and performance requirements.

Practical Recommendations

Now, let's translate all this data into practical tips for choosing the right hardware for your LLM projects:

Apple M2 100GB 10-Cores:

- Ideal for:

- Researchers and hobbyists working with smaller models like Llama 2 7B who prioritize power efficiency.

- Developers building applications that require fast processing but don't have stringent requirements for generation speed.

- Not ideal for:

- Users who need high token generation speed for large language models.

- Users who require massive memory and computational power for complex applications.

NVIDIA 4080 16GB:

- Ideal for:

- Developers pushing the boundaries of LLM capabilities with larger models like Llama 3 8B or even larger ones.

- Applications that prioritize high-speed token generation, especially for complex tasks like text summarization or machine translation.

- Not ideal for:

- Budget-conscious users who are just starting out with LLMs and are looking for an affordable option.

- Users who are sensitive to power consumption and prefer more energy-efficient solutions.

Future Trends and Developments

The world of LLMs is evolving rapidly, with major advancements happening almost every day. The race for faster and more efficient hardware continues. Future advancements in CPU and GPU technologies will further enhance the performance of these devices, potentially opening up exciting possibilities for LLMs.

FAQ

1. What is tokenization in LLMs?

Tokenization is a process of dividing the text into smaller units called "tokens". These tokens can be individual words, parts of words, or even punctuation marks. Think of it like breaking down sentences into individual bricks that your LLM can process and understand.

2. How does quantization affect LLM performance?

Quantization is a technique that reduces the memory size of an LLM by using fewer bits to represent the model's parameters. This can lead to faster processing and generation speeds, but it can also result in a slight decrease in accuracy.

3. What are the best LLM models for different use cases?

The best LLM for a specific use case depends on factors like the model size, accuracy, and intended application. For research, Llama 3 models might be ideal. For conversational AI, Llama 2 models might be a better choice.

4. What are the alternatives to the M2 and 4080 for running LLMs?

Other powerful options for running LLMs include the Apple M1 Max, the NVIDIA RTX 4090, and CPUs like the Intel i9-13900K. The best choice depends on your budget and specific LLM needs.

Keywords

Large Language Models, LLMs, Token Generation Speed, Apple M2, NVIDIA 4080, Llama 2, Llama 3, Benchmark, Processing, Generation, Quantization, F16, Q80, Q4K_M, Performance Analysis, GPU, CPU, AI, Deep Learning, Machine Learning.