Apple M2 (100 GB/s, 10 GPU Cores) vs. NVIDIA 4070 Ti 12GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is booming, with new models like Llama 2 and Llama 3 being released at a rapid pace. These LLMs are capable of impressive feats like generating text, translating languages, and writing different kinds of creative content. But all this power comes at a cost: running these models locally requires significant computational resources.

This article dives deep into the performance of two popular devices for running LLMs: Apple's M2 chip with 10 GPU cores and 100 GB/s of memory bandwidth, and the NVIDIA 4070 Ti with 12GB of VRAM. We'll analyze their token generation speed for different LLMs and explore their strengths and weaknesses. Whether you're a developer looking to build a local LLM application or just curious about the capabilities of these devices, this analysis will provide valuable insights.

Comparing Apple M2 and NVIDIA 4070 Ti for Token Generation Speed

Let's cut to the chase: which device reigns supreme in token generation speed? We'll analyze the token generation speed of both devices for various Llama models to find out.

Performance Analysis: Apple M2 vs. NVIDIA 4070 Ti

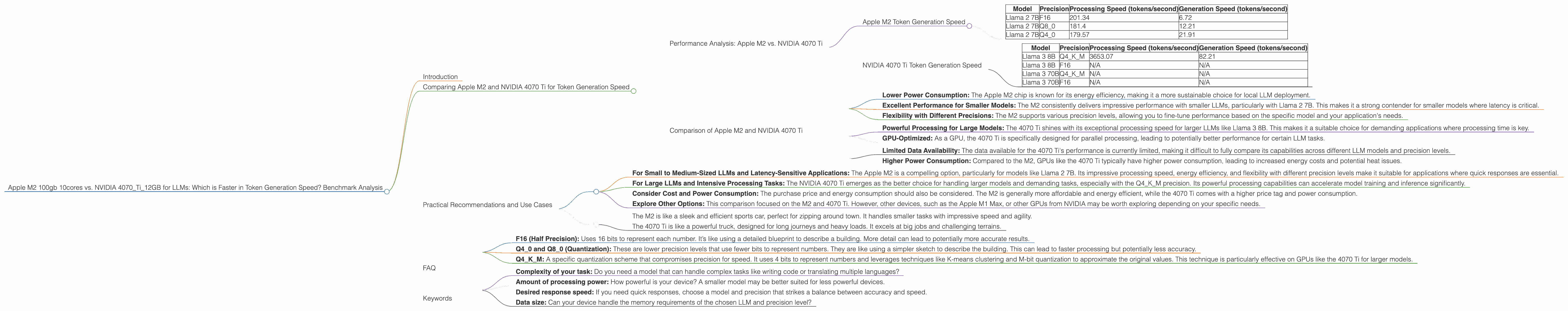

Apple M2 Token Generation Speed

The Apple M2 is known for its impressive performance in processing and generation tasks. Let's break down its performance in the context of LLMs:

| Model | Precision | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama 2 7B | F16 | 201.34 | 6.72 |
| Llama 2 7B | Q8_0 | 181.4 | 12.21 |
| Llama 2 7B | Q4_0 | 179.57 | 21.91 |

Highlights:

- Faster Processing at F16: The M2 processes prompts fastest at F16 precision (201.34 tokens/second). Q8_0 and Q4_0 are roughly 10% slower at this stage.

- Q4_0 Precision Benefits Generation: Although Q4_0 processes prompts about 11% slower than F16, it more than triples generation speed (21.91 vs. 6.72 tokens/second, roughly 3.3x). This indicates that the M2 benefits from lower precision models, particularly in generation tasks where latency matters.
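The ratios behind these highlights can be checked with a few lines of Python. The sketch below also compares each measured generation speed with a rough memory-bandwidth ceiling. The parameter count (about 6.74 billion), the bits-per-weight values, and the 100 GB/s bandwidth figure are assumptions that do not come from the table, and the ceiling ignores KV-cache reads and other overhead.

```python
# Benchmark figures for Llama 2 7B on the M2, copied from the table above.
M2_RESULTS = {
    "F16": {"processing": 201.34, "generation": 6.72},
    "Q8_0": {"processing": 181.40, "generation": 12.21},
    "Q4_0": {"processing": 179.57, "generation": 21.91},
}

# Assumptions (not from the table): approximate parameter count, storage
# cost per weight for each format, and the M2's memory bandwidth.
PARAMS = 6.74e9
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
BANDWIDTH_GB_S = 100.0


def speedup_vs_f16(precision, metric):
    """Ratio of a precision's speed to the F16 speed for the same metric."""
    return M2_RESULTS[precision][metric] / M2_RESULTS["F16"][metric]


def bandwidth_ceiling(precision):
    """Rough upper bound on tokens/second, assuming every generated token
    requires reading all weights from memory once."""
    weight_gb = PARAMS * BITS_PER_WEIGHT[precision] / 8 / 1e9
    return BANDWIDTH_GB_S / weight_gb


for name in M2_RESULTS:
    print(
        f"{name}: generation x{speedup_vs_f16(name, 'generation'):.2f}, "
        f"processing x{speedup_vs_f16(name, 'processing'):.2f}, "
        f"ceiling ~{bandwidth_ceiling(name):.1f} tokens/s"
    )
```

Under these assumptions the measured generation speeds land at about 83% to 91% of the ceiling, which would be consistent with generation being limited mainly by memory bandwidth rather than by compute.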

NVIDIA 4070 Ti Token Generation Speed

The NVIDIA 4070 Ti is a powerful GPU designed for demanding tasks like gaming and machine learning. Let's see its potential for running LLMs:

| Model | Precision | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 3653.07 | 82.21 |
| Llama 3 8B | F16 | N/A | N/A |
| Llama 3 70B | Q4_K_M | N/A | N/A |
| Llama 3 70B | F16 | N/A | N/A |

Key Observations:

- Excellent Processing Power: The 4070 Ti exhibits exceptional processing speed for the Llama 3 8B model with Q4_K_M precision: 3653.07 tokens/second for processing and 82.21 tokens/second for generation.

- Limited Generation Speed Data: The only available result is for the Llama 3 8B model with Q4_K_M precision. No data is available for F16 or for the 70B model. A likely reason is memory: 8 billion parameters at 16 bits each need about 16 GB for the weights alone, which exceeds the card's 12GB of VRAM.

Comparison of Apple M2 and NVIDIA 4070 Ti

Strengths of the Apple M2:

- Lower Power Consumption: The Apple M2 chip is known for its energy efficiency, making it a more sustainable choice for local LLM deployment.

- Solid Performance for Smaller Models: With Llama 2 7B at Q4_0, the M2 generates about 22 tokens/second, which makes it a reasonable choice for interactive use with smaller models.

- Flexibility with Different Precisions: The benchmark includes M2 results at F16, Q8_0, and Q4_0, so you can trade accuracy for speed based on the specific model and your application's needs.

Strengths of the NVIDIA 4070 Ti:

- Powerful Processing: The 4070 Ti processes prompts for Llama 3 8B at 3653.07 tokens/second, roughly 20 times the M2's Q4_0 figure for Llama 2 7B, and generates tokens almost four times as fast (82.21 vs. 21.91 tokens/second). The two devices were tested on different models, so treat these ratios as approximate.

- GPU-Optimized: As a GPU, the 4070 Ti is specifically designed for parallel processing, leading to potentially better performance for certain LLM tasks.

Weaknesses:

- Limited Data Availability: The data available for the 4070 Ti's performance is currently limited, making it difficult to fully compare its capabilities across different LLM models and precision levels.

- Higher Power Consumption: Compared to the M2, GPUs like the 4070 Ti typically have higher power consumption, leading to increased energy costs and potential heat issues.

Practical Recommendations and Use Cases

Choosing between the Apple M2 and NVIDIA 4070 Ti depends on your specific needs and the models you intend to run. Here are some practical recommendations based on the analysis:

- For Small to Medium-Sized LLMs Where Efficiency Matters: The Apple M2 is a compelling option, particularly for models like Llama 2 7B. Its energy efficiency and flexibility with different precision levels make it suitable for applications where about 22 tokens/second at Q4_0 is fast enough.

- For Intensive Processing Tasks: The NVIDIA 4070 Ti emerges as the better choice when raw speed matters, especially with the Q4_K_M precision. Its processing and generation speeds are several times higher in this benchmark, which covers inference only. Keep in mind that 12GB of VRAM limits which models and precision levels will fit.

- Consider Cost and Power Consumption: The purchase price and energy consumption should also be considered. The M2 is generally more affordable and energy efficient, while the 4070 Ti comes with a higher price tag and power consumption.

- Explore Other Options: This comparison focused on the M2 and 4070 Ti. However, other devices, such as the Apple M1 Max, or other GPUs from NVIDIA may be worth exploring depending on your specific needs.
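To turn the benchmark numbers into something closer to user experience, a rough estimate of response time can help. The sketch below assumes a hypothetical workload (a 512-token prompt and a 256-token reply) and uses the 4-bit rows from the tables above; the function name and workload are illustrative, and because the two rows use different models the result is indicative only.

```python
def response_time_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate end-to-end time: read the prompt, then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing tokens/s, generation tokens/s) from the benchmark tables.
DEVICES = {
    "Apple M2, Llama 2 7B Q4_0": (179.57, 21.91),
    "NVIDIA 4070 Ti, Llama 3 8B Q4_K_M": (3653.07, 82.21),
}

# Hypothetical workload: a 512-token prompt and a 256-token reply.
for name, (processing_tps, generation_tps) in DEVICES.items():
    seconds = response_time_seconds(512, 256, processing_tps, generation_tps)
    print(f"{name}: {seconds:.1f} s")
```

For this workload the estimate is about 14.5 seconds on the M2 and about 3.3 seconds on the 4070 Ti, with generation rather than prompt processing accounting for most of the time on both devices.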

Think of it this way:

- The M2 is like a sleek and efficient sports car, perfect for zipping around town. It handles smaller tasks with impressive speed and agility.

- The 4070 Ti is like a powerful truck, designed for long journeys and heavy loads. It excels at big jobs and challenging terrains.

FAQ

1. What is token generation speed and why is it important?

Token generation speed refers to how many “tokens” (words or sub-words) a device can produce per second once it has read the prompt. Processing speed, by contrast, measures how quickly it reads the prompt itself. Think of generation speed as the speed limit on a highway for text generation. A faster token generation speed means that the device can translate languages, write different kinds of creative content, and answer your questions more quickly.
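As a rough illustration of how this number can be measured, the sketch below times a stream of tokens and reports tokens per second. The `fake_model` generator is a hypothetical stand-in for a real model's streaming output, not an API from any particular library.

```python
import time


def measure_generation_speed(token_stream):
    """Consume an iterable of tokens and return (token_count, tokens_per_second).

    Timing starts when the first token arrives, so the time spent
    processing the prompt is excluded from the measurement.
    """
    iterator = iter(token_stream)
    try:
        next(iterator)
    except StopIteration:
        return 0, 0.0
    start = time.perf_counter()
    count = 1
    for _ in iterator:
        count += 1
    elapsed = time.perf_counter() - start
    # Only the tokens after the first were produced during `elapsed`.
    rate = (count - 1) / elapsed if elapsed > 0 else 0.0
    return count, rate


def fake_model(n_tokens, delay_s):
    """Hypothetical stand-in for a real model: yields one token per delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


count, rate = measure_generation_speed(fake_model(20, 0.01))
print(f"{count} tokens at about {rate:.0f} tokens/second")
```

Starting the clock at the first token is a design choice that separates generation from prompt processing, mirroring the two columns in the benchmark tables.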

2. What are the different precision levels (F16, Q8_0, Q4_0, Q4_K_M) and how do they affect performance?

Precision levels relate to how much detail is used in the mathematical calculations for the LLM.

- F16 (Half Precision): Uses 16 bits to represent each number. It’s like using a detailed blueprint to describe a building. More detail can lead to potentially more accurate results.

- Q4_0 and Q8_0 (Quantization): These lower precision formats store each weight in roughly 4 or 8 bits, plus a shared scale factor for each small block of weights. They are like using a simpler sketch to describe the building. Smaller weights mean less data to move through memory, which speeds up generation at the cost of some accuracy.

- Q4_K_M: A 4-bit “k-quant” format from llama.cpp. The K refers to the k-quant family, which groups blocks of weights into larger super-blocks with their own scale factors, and the M stands for the medium variant, which keeps some tensors at higher precision to limit accuracy loss. It is the only format with 4070 Ti results in this benchmark.
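The core idea behind these quantized formats can be sketched in a few lines. The example below is a simplified symmetric 4-bit scheme for a single block of weights, intended only to show the scale-plus-small-integers idea; it is not the exact storage layout used by Q4_0 or Q4_K_M, and the function names and sample weights are made up for illustration.

```python
def quantize_block(values, bits=4):
    """Symmetric quantization of one block: a shared scale plus small integers."""
    levels = 2 ** (bits - 1) - 1  # 7 for 4-bit, so integers lie in [-7, 7]
    max_abs = max(abs(v) for v in values)
    scale = max_abs / levels if max_abs > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate weights from the scale and the integers."""
    return [q * scale for q in quants]


# Made-up example weights for one block.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]
scale, quants = quantize_block(weights, bits=4)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("integers:", quants)
print(f"scale: {scale:.4f}, max error: {max_error:.4f}")
```

Each weight is replaced by a small integer, and the rounding error is at most half the scale. That bounded error is the accuracy given up in exchange for a smaller, faster model.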

3. How do I choose the right LLM and precision level for my application?

The best LLM and precision level depend on your specific needs. Smaller models like Llama 2 7B might be sufficient for basic tasks, while larger models like Llama 3 8B or 70B offer more capabilities.

Consider the following factors:

- Complexity of your task: Do you need a model that can handle complex tasks like writing code or translating multiple languages?

- Amount of processing power: How powerful is your device? A smaller model may be better suited for less powerful devices.

- Desired response speed: If you need quick responses, choose a model and precision that strikes a balance between accuracy and speed.

- Data size: Can your device handle the memory requirements of the chosen LLM and precision level?
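The memory question in particular lends itself to a quick estimate. The sketch below picks the highest precision whose weights fit a given memory budget. The bits-per-weight values are approximations (the Q4_K_M figure especially, since that format mixes precisions), and `headroom_gb` is a hypothetical allowance for the KV cache and runtime buffers, so treat the output as a first guess rather than a guarantee.

```python
# Approximate storage cost per weight. Q4_K_M mixes formats, so its value
# is an estimate (an assumption, not a figure from the benchmark data).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85, "Q4_0": 4.5}


def weights_gb(params_billion, precision):
    """Estimated size of the model weights in GB (10^9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[precision] / 8


def highest_precision_that_fits(params_billion, memory_gb, headroom_gb=1.5):
    """Return the most precise format whose weights fit, or None.

    headroom_gb is a hypothetical allowance for the KV cache and runtime
    buffers; adjust it for your context length.
    """
    budget = memory_gb - headroom_gb
    for precision in sorted(BITS_PER_WEIGHT, key=BITS_PER_WEIGHT.get, reverse=True):
        if weights_gb(params_billion, precision) <= budget:
            return precision
    return None


for size in (8, 70):
    choice = highest_precision_that_fits(size, memory_gb=12)
    print(f"{size}B on a 12 GB card: {choice}")
```

With these assumptions an 8B model at F16 (about 16 GB) does not fit in 12 GB and none of the listed formats fits a 70B model, which is consistent with the N/A rows in the 4070 Ti table.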

4. What are the trade-offs between speed and accuracy?

It's like a balancing act. Higher precision levels offer more accuracy, like getting more details on a map, but need more memory and generate tokens more slowly. Lower precision levels are like getting a quick overview of the map: faster to read, but they might miss some fine details.

Keywords

LLMs, Llama 2, Llama 3, Token Generation Speed, Apple M2, NVIDIA 4070 Ti, GPU, CPU, Precision Levels, F16, Q8_0, Q4_0, Q4_K_M, Quantization, Performance Benchmark, Processing Speed, Generation Speed, Local LLM, Inference, Application Development, Developer, Geek