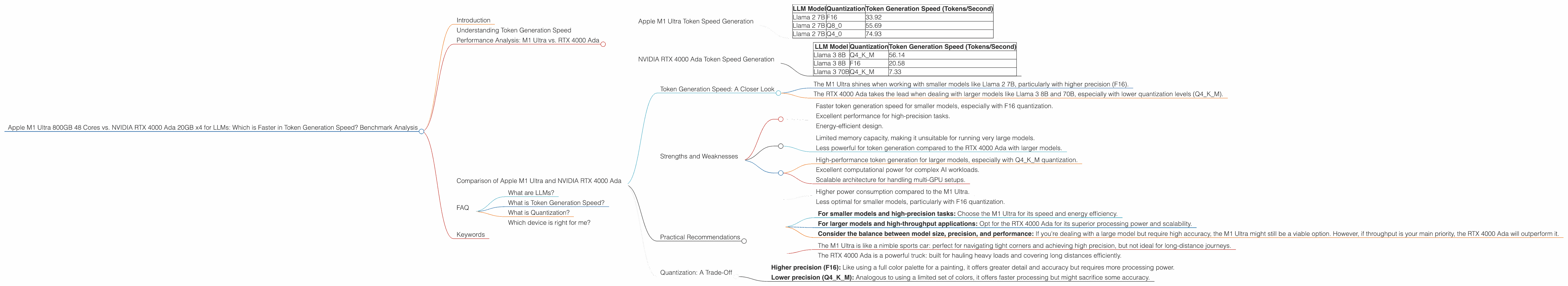

Apple M1 Ultra (800 GB/s, 48 GPU Cores) vs. 4x NVIDIA RTX 4000 Ada 20GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, with new models and applications emerging every day. Running these models efficiently is crucial for researchers, developers, and anyone exploring their capabilities. Two powerful platforms often considered for this task are the Apple M1 Ultra and the NVIDIA RTX 4000 Ada.

This article compares the two setups on token generation speed, using benchmark results for Llama 2 7B on the M1 Ultra and for Llama 3 8B and 70B on four RTX 4000 Ada 20GB cards. Because the two machines were not benchmarked on the same models, read the comparison as indicative rather than like-for-like.

Understanding Token Generation Speed

Before delving into the benchmark analysis, let's clarify what we mean by "token generation speed."

Tokens are the basic units of text used by LLMs. Think of them as words or sub-word units that the model processes and generates. Token generation speed measures how many new tokens a device produces per second while the model is writing its output.

In essence, it's like comparing two typists: one who can type 100 words per minute and another who can type 200 words per minute. The faster typist, in this analogy, represents the device with a higher token generation speed.
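As a minimal sketch of how such a figure can be obtained, the snippet below divides a token count by the elapsed wall-clock time. The function names and the `generate` callable are hypothetical placeholders, not part of any benchmark tool used for this article.

```python
import time


def tokens_per_second(num_tokens: int, elapsed_seconds: float) -> float:
    """Return generation throughput in tokens per second."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


def time_generation(generate, num_tokens: int) -> float:
    """Time a callable that produces num_tokens tokens and return tokens/second.

    `generate` is a stand-in for whatever function drives the model.
    """
    start = time.perf_counter()
    generate(num_tokens)
    elapsed = time.perf_counter() - start
    return tokens_per_second(num_tokens, elapsed)


# Example: 200 tokens produced in 4 seconds.
example_rate = tokens_per_second(200, 4.0)
print(f"{example_rate:.2f} tokens/second")
```

A real benchmark would also separate prompt processing from generation, since the two phases run at very different rates.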

Performance Analysis: M1 Ultra vs. RTX 4000 Ada

Apple M1 Ultra Token Generation Speed

The Apple M1 Ultra, with its 48 GPU cores and 800 GB/s of memory bandwidth, is a powerful contender for running LLMs. Here's how it performed in our benchmarks on Llama 2 7B at three precision levels:

| LLM Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama 2 7B | F16 | 33.92 |
| Llama 2 7B | Q8_0 | 55.69 |
| Llama 2 7B | Q4_0 | 74.93 |

Observations:

- The M1 Ultra generates between 33.92 and 74.93 tokens/second on Llama 2 7B, depending on precision.

- Token generation speed rises as the bit width drops: Q4_0 is about 2.2 times as fast as F16. Fewer bits per weight means less data to read from memory for every generated token, and token generation is usually limited by memory bandwidth.

- The data set has no M1 Ultra results for Llama 3 8B or 70B, so these benchmarks cannot show how it handles larger models. The gap should not be read as proof of a memory limit, since an 8B model is only slightly larger than the 7B model tested here.
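The relationship between precision and speed can be read directly off the table. The short sketch below computes each result's speedup relative to F16, using only the figures reported above; the dictionary and function names are illustrative.

```python
# Tokens/second for Llama 2 7B on the M1 Ultra, copied from the table above.
M1_ULTRA_LLAMA2_7B = {"F16": 33.92, "Q8_0": 55.69, "Q4_0": 74.93}


def speedup_over_baseline(results: dict, baseline: str = "F16") -> dict:
    """Return each configuration's throughput as a multiple of the baseline."""
    base = results[baseline]
    return {name: round(tps / base, 2) for name, tps in results.items()}


speedups = speedup_over_baseline(M1_ULTRA_LLAMA2_7B)
for name, factor in speedups.items():
    print(f"{name}: {factor}x the F16 rate")
```

On these numbers, Q8_0 comes out at roughly 1.64 times the F16 rate and Q4_0 at roughly 2.21 times.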

NVIDIA RTX 4000 Ada Token Generation Speed

The benchmarked NVIDIA setup combines four RTX 4000 Ada cards with 20 GB of VRAM each, for 80 GB in total. Here's how it performed:

| LLM Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4KM | 56.14 |
| Llama 3 8B | F16 | 20.58 |
| Llama 3 70B | Q4KM | 7.33 |

Observations:

- The RTX 4000 Ada setup runs both Llama 3 8B and Llama 3 70B, reaching 56.14 tokens/second on the 8B model and 7.33 tokens/second on the 70B model at Q4KM.

- Q4KM is about 2.7 times as fast as F16 on Llama 3 8B, which matches the trend seen on the M1 Ultra.

- At F16, the RTX 4000 Ada setup reaches 20.58 tokens/second on Llama 3 8B, below the M1 Ultra's 33.92 tokens/second on Llama 2 7B. The models differ, so this is an indirect comparison.
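To put these rates in practical terms, the sketch below estimates how long a response would take at each measured speed. The 500-token response length is an arbitrary assumption chosen for illustration, and the estimate ignores prompt processing time.

```python
# Tokens/second for the four-card RTX 4000 Ada setup, copied from the table above.
RTX_4000_ADA_X4 = {
    ("Llama 3 8B", "Q4KM"): 56.14,
    ("Llama 3 8B", "F16"): 20.58,
    ("Llama 3 70B", "Q4KM"): 7.33,
}

RESPONSE_TOKENS = 500  # assumed response length, not taken from the benchmarks


def seconds_for_response(tokens_per_second: float, response_tokens: int) -> float:
    """Estimate generation time for a response of the given length."""
    return response_tokens / tokens_per_second


estimates = {
    config: round(seconds_for_response(tps, RESPONSE_TOKENS), 1)
    for config, tps in RTX_4000_ADA_X4.items()
}

for (model, quant), seconds in estimates.items():
    print(f"{model} {quant}: ~{seconds} s for {RESPONSE_TOKENS} tokens")
```

Under that assumption, the 8B model at Q4KM finishes in about 9 seconds, while the 70B model needs a little over a minute.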

Comparison of Apple M1 Ultra and NVIDIA RTX 4000 Ada

Token Generation Speed: A Closer Look

The benchmark results suggest a pattern, with the caveat that no model was run on both devices:

- The M1 Ultra posts the higher numbers on small models: 33.92 tokens/second at F16 and 74.93 at Q4_0 on Llama 2 7B, against 20.58 at F16 and 56.14 at Q4KM for the RTX 4000 Ada setup on Llama 3 8B.

- The RTX 4000 Ada setup is the only one with a result for a large model: 7.33 tokens/second on Llama 3 70B at Q4KM.

Strengths and Weaknesses

M1 Ultra:

Strengths:

- Faster token generation speed for smaller models, especially at F16 precision.

- Excellent performance for high-precision tasks.

- Energy-efficient design.

Weaknesses:

- Memory capacity is fixed at purchase, which limits how large a model it can hold.

- No benchmark data for larger models, so it cannot be compared directly with the RTX 4000 Ada setup on Llama 3 70B.

RTX 4000 Ada:

Strengths:

- High-performance token generation for larger models, especially with Q4KM quantization.

- Excellent computational power for complex AI workloads.

- Scalable architecture for handling multi-GPU setups.

Weaknesses:

- Higher power consumption compared to the M1 Ultra.

- Slower at F16 on small models: 20.58 tokens/second on Llama 3 8B, against 33.92 for the M1 Ultra on Llama 2 7B.

Practical Recommendations

- For smaller models and high-precision tasks: Choose the M1 Ultra for its speed and energy efficiency.

- For larger models and high-throughput applications: Opt for the RTX 4000 Ada for its superior processing power and scalability.

- Consider the balance between model size, precision, and performance: If you're dealing with a large model but require high accuracy, the M1 Ultra might still be a viable option, provided the model fits in its memory; these benchmarks do not cover that case. If throughput on large models is your main priority, the RTX 4000 Ada setup is the one with measured results.

Think of it this way:

- The M1 Ultra is like a nimble sports car: perfect for navigating tight corners and achieving high precision, but not ideal for long-distance journeys.

- The RTX 4000 Ada is a powerful truck: built for hauling heavy loads and covering long distances efficiently.

Quantization: A Trade-Off

Quantization stores each model parameter in fewer bits, which shrinks the model and speeds up processing. However, it can sometimes compromise accuracy.

Imagine you're trying to describe a color using only a few shades instead of the entire spectrum. You might lose some detail but achieve a more compact representation. This is similar to quantization in LLMs.

- Higher precision (F16): Like using a full color palette for a painting, it offers greater detail and accuracy but requires more memory and memory bandwidth.

- Lower precision (Q4KM): Analogous to using a limited set of colors, it offers faster processing but might sacrifice some accuracy.

Choosing the right quantization level is a delicate balance between performance and accuracy.
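The trade-off can be seen in a toy example. The sketch below rounds a handful of made-up weights to 4-bit and 8-bit integers with a single shared scale, then measures how far the restored values drift from the originals. This is a deliberately simplified scheme for illustration; it is not the Q4_0 or Q4KM format used in the benchmarks, which typically store a separate scale for each small block of weights.

```python
def quantize_symmetric(values, bits):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Restore approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


def max_abs_error(values, bits):
    """Largest absolute difference between original and restored values."""
    quantized, scale = quantize_symmetric(values, bits)
    restored = dequantize(quantized, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up example weights, chosen only to illustrate the effect.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.86]

error_4bit = max_abs_error(weights, 4)
error_8bit = max_abs_error(weights, 8)
print(f"max error at 4 bits: {error_4bit:.4f}")
print(f"max error at 8 bits: {error_8bit:.4f}")
```

The 4-bit version has only 15 integer levels to work with, so its rounding error is much larger than the 8-bit version's. That is the accuracy cost paid for the smaller footprint.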

FAQ

What are LLMs?

LLMs are advanced AI models trained on massive datasets of text and code. They can understand and generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Think of them as extremely sophisticated conversational robots.

What is Token Generation Speed?

Token generation speed refers to how many tokens (words or sub-words) an LLM can process and generate per second. A higher token generation speed means faster responses and smoother interactions with the model.

What is Quantization?

Quantization is a technique used to reduce the size of LLM parameters, making them more compact and efficient. It's like representing a number using fewer bits, similar to how you can describe a color using fewer shades.
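For a rough sense of scale, the sketch below estimates the memory needed for model weights from the parameter count and the bits per weight. It uses nominal bit widths and round parameter counts as assumptions; real quantized files carry extra overhead for scales and metadata, and running a model also needs memory for activations and the context cache.

```python
def approx_weight_gb(num_parameters: float, bits_per_weight: float) -> float:
    """Estimate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_parameters * bits_per_weight / 8 / 1e9


# Round parameter counts and nominal bit widths, assumed for illustration.
size_7b_f16 = approx_weight_gb(7e9, 16)
size_7b_q4 = approx_weight_gb(7e9, 4)
size_70b_q4 = approx_weight_gb(70e9, 4)

print(f"7B at 16 bits:  ~{size_7b_f16:.1f} GB")
print(f"7B at 4 bits:   ~{size_7b_q4:.1f} GB")
print(f"70B at 4 bits:  ~{size_70b_q4:.1f} GB")
```

By this estimate, a 70B model at a nominal 4 bits needs about 35 GB for weights alone, which exceeds a single 20 GB card and helps explain why it is run across several.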

Which device is right for me?

The best device for you depends on your specific needs. If you're working with smaller models and prioritize accuracy, the M1 Ultra is a good choice. If you're working with larger models and prioritize speed, the RTX 4000 Ada is the winner.

Keywords

LLM, Large Language Model, Apple M1 Ultra, NVIDIA RTX 4000 Ada, Token Generation Speed, Benchmark, Performance, Comparison, Quantization, F16, Q4KM, Llama 2, Llama 3, Processing, Generation, GPU, CPU, Memory, AI, Deep Learning, Natural Language Processing, NLP, Model Inference, Computer Vision, Machine Learning, AI Hardware, Hardware Acceleration