Apple M1 Ultra (800 GB/s, 48 GPU Cores) vs. Apple M3 (100 GB/s, 10 GPU Cores) for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is going bonkers, and each new release seems to raise the bar. From writing poems to generating code, LLMs are changing the game.

But running these hungry models can be a challenge. You need serious hardware, and that's where Apple's M-series chips come into play. Today, we're diving deep into a head-to-head showdown between the mighty M1 Ultra (800 GB/s memory bandwidth, 48 GPU cores) and the nimble M3 (100 GB/s memory bandwidth, 10 GPU cores) to see which one reigns supreme in token generation speed.

We'll be testing these chips with Llama 2 7B, one of the hottest LLMs out there, and exploring different precision and quantization levels (F16, Q8_0, and Q4_0) to see how they impact performance. So, grab your coffee, buckle up, and let's get ready to rumble!

Apple M1 Ultra Token Generation Speed

The Apple M1 Ultra is a beast of a chip, known for its high performance and very wide memory interface. Its 48 GPU cores and 800 GB/s of memory bandwidth make it a top contender for running LLMs.

Llama 2 Performance on M1 Ultra

Let's dissect the numbers for the Llama 2 7B model running on the M1 Ultra. Both columns are in tokens per second: processing speed is how fast the chip works through the input prompt, and generation speed is how fast it produces new output tokens.

| Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| F16 | 875.81 | 33.92 |
| Q8_0 | 783.45 | 55.69 |
| Q4_0 | 772.24 | 74.93 |

As you can see, the M1 Ultra is a powerhouse when it comes to Llama 2 7B.

Here's the breakdown:

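- F16: This is half-precision floating point, storing each weight in 16 bits. It is the least compressed and most accurate of the three formats tested here. The M1 Ultra processes prompts fastest with F16 (875.81 tokens/second), but its generation speed is the lowest of the three (33.92 tokens/second): generating each token requires reading the model's weights from memory, and F16 weights are the largest.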

- Q8_0: Quantization is a technique that reduces the size of the model by using fewer bits to represent the weights. Q8_0 stores each weight in 8 bits, plus one shared scale factor per block of weights. With Q8_0, the M1 Ultra reaches a processing speed of 783.45 tokens/second and a generation speed of 55.69 tokens/second.

- Q4_0: This quantization level uses only 4 bits per weight (again with a shared per-block scale). Processing speed dips slightly to 772.24 tokens/second, while generation speed climbs further to 74.93 tokens/second.
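To make the block idea concrete, here is a minimal Python sketch modeled on the idea behind llama.cpp's Q8_0 format: weights are grouped into blocks of 32, and each block stores one scale (max |w| / 127) plus one signed 8-bit integer per weight. The function names are hypothetical, Python floats stand in for the half-precision scale, and this is an illustration rather than llama.cpp's actual code. The `bits_per_weight` helper shows why the shared scale makes Q8_0 and Q4_0 cost slightly more than 8 and 4 bits per weight.

```python
import math

BLOCK_SIZE = 32  # Q8_0 and Q4_0 group weights into blocks of 32


def quantize_block_q8(weights):
    """Quantize one block of floats to int8 values plus a single scale.

    Simplified Q8_0-style scheme: scale = max|w| / 127, q = round(w / scale).
    Python floats stand in for the 16-bit scale a real file would store.
    """
    if len(weights) != BLOCK_SIZE:
        raise ValueError(f"expected {BLOCK_SIZE} weights, got {len(weights)}")
    amax = max(abs(w) for w in weights)
    scale = amax / 127 if amax > 0 else 0.0
    inv_scale = 1.0 / scale if scale > 0 else 0.0
    quants = [int(round(w * inv_scale)) for w in weights]  # each in [-127, 127]
    return scale, quants


def dequantize_block_q8(scale, quants):
    """Reconstruct approximate floats from the scale and the int8 values."""
    return [scale * q for q in quants]


def bits_per_weight(bits_per_quant, scale_bits=16, block_size=BLOCK_SIZE):
    """Storage cost per weight, including the per-block scale's share."""
    return bits_per_quant + scale_bits / block_size


if __name__ == "__main__":
    block = [math.sin(i) * 0.05 for i in range(BLOCK_SIZE)]
    scale, q = quantize_block_q8(block)
    restored = dequantize_block_q8(scale, q)
    max_err = max(abs(a - b) for a, b in zip(block, restored))
    print(f"scale={scale:.6f} max_error={max_err:.6f}")
    print("Q8_0-style bits/weight:", bits_per_weight(8))
    print("Q4_0-style bits/weight:", bits_per_weight(4))
```

With a 16-bit scale shared by 32 weights, the overhead is half a bit per weight, which gives roughly 8.5 bits for Q8_0 and 4.5 bits for Q4_0.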

In a nutshell: The M1 Ultra shines with Llama 2 7B, especially at the Q8_0 and Q4_0 quantization levels, where generation is about 1.6x and 2.2x faster than with F16.

Apple M3 Token Generation Speed: A Closer Look

The Apple M3, although it has far fewer GPU cores and one-eighth of the M1 Ultra's memory bandwidth, is still a capable player in the LLM arena.

Llama 2 Performance on M3

Let's analyze the performance of Llama 2 7B on the M3.

| Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| F16 | No data | No data |
| Q8_0 | 187.52 | 12.27 |
| Q4_0 | 186.75 | 21.34 |

We have no data for F16, so the two chips can't be compared at that precision. However, the M3 still shows its strength with Q8_0 and Q4_0.

Let's break down the numbers:

- Q8_0: The M3 achieves a processing speed of 187.52 tokens/second and a generation speed of 12.27 tokens/second, roughly a quarter of the M1 Ultra's figures for both.

- Q4_0: Processing speed is nearly unchanged at 186.75 tokens/second, while generation speed improves to 21.34 tokens/second. Again, both numbers lag well behind the M1 Ultra.

The key takeaway: While the M3 runs Llama 2 7B effectively, the M1 Ultra outpaces it, particularly in generation speed. This gap is likely due to the M1 Ultra's higher GPU core count (48 vs. 10) and its eight times greater memory bandwidth (800 GB/s vs. 100 GB/s).
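One way to sanity-check the bandwidth explanation is a back-of-the-envelope ceiling. When generating one token at a time, the chip has to read roughly all of the model's weights for every new token, so generation speed can't exceed memory bandwidth divided by model size. The sketch below assumes about 6.74 billion parameters for Llama 2 7B, approximate bits per weight (16 for F16, about 8.5 for Q8_0, about 4.5 for Q4_0), and the advertised peak bandwidths. It ignores the KV cache and all other overhead, so treat the output as a loose upper bound, not a prediction.

```python
# Rough upper bound on generation speed for a memory-bandwidth-bound decoder.
# Assumptions: ~6.74e9 parameters for Llama 2 7B, approximate bits per weight,
# advertised peak bandwidth, and no KV-cache or other overhead.
PARAMS_7B = 6.74e9

BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

BANDWIDTH_GB_S = {"M1 Ultra": 800.0, "M3": 100.0}


def model_size_gb(params: float, bits: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9


def generation_ceiling(bandwidth_gb_s: float, size_gb: float) -> float:
    """Tokens/s if every token required streaming all weights once at peak bandwidth."""
    return bandwidth_gb_s / size_gb


for chip, bandwidth in BANDWIDTH_GB_S.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = model_size_gb(PARAMS_7B, bits)
        ceiling = generation_ceiling(bandwidth, size)
        print(f"{chip:<8} {fmt:<5} ~{size:5.2f} GB -> at most ~{ceiling:6.1f} tokens/s")
```

Set against the measured tables, the M3's Q4_0 result (21.34 tokens/second) sits fairly close to its ceiling of about 26, while the M1 Ultra's 74.93 is well below its ceiling of about 211. Bandwidth therefore looks like the main limit on the M3, whereas the M1 Ultra does not come close to its advertised 800 GB/s, which helps explain why the measured gap is closer to 4x than 8x.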

Performance Analysis: M1 Ultra vs. M3

Now, it's time to put these chips head-to-head and see how they stack up.

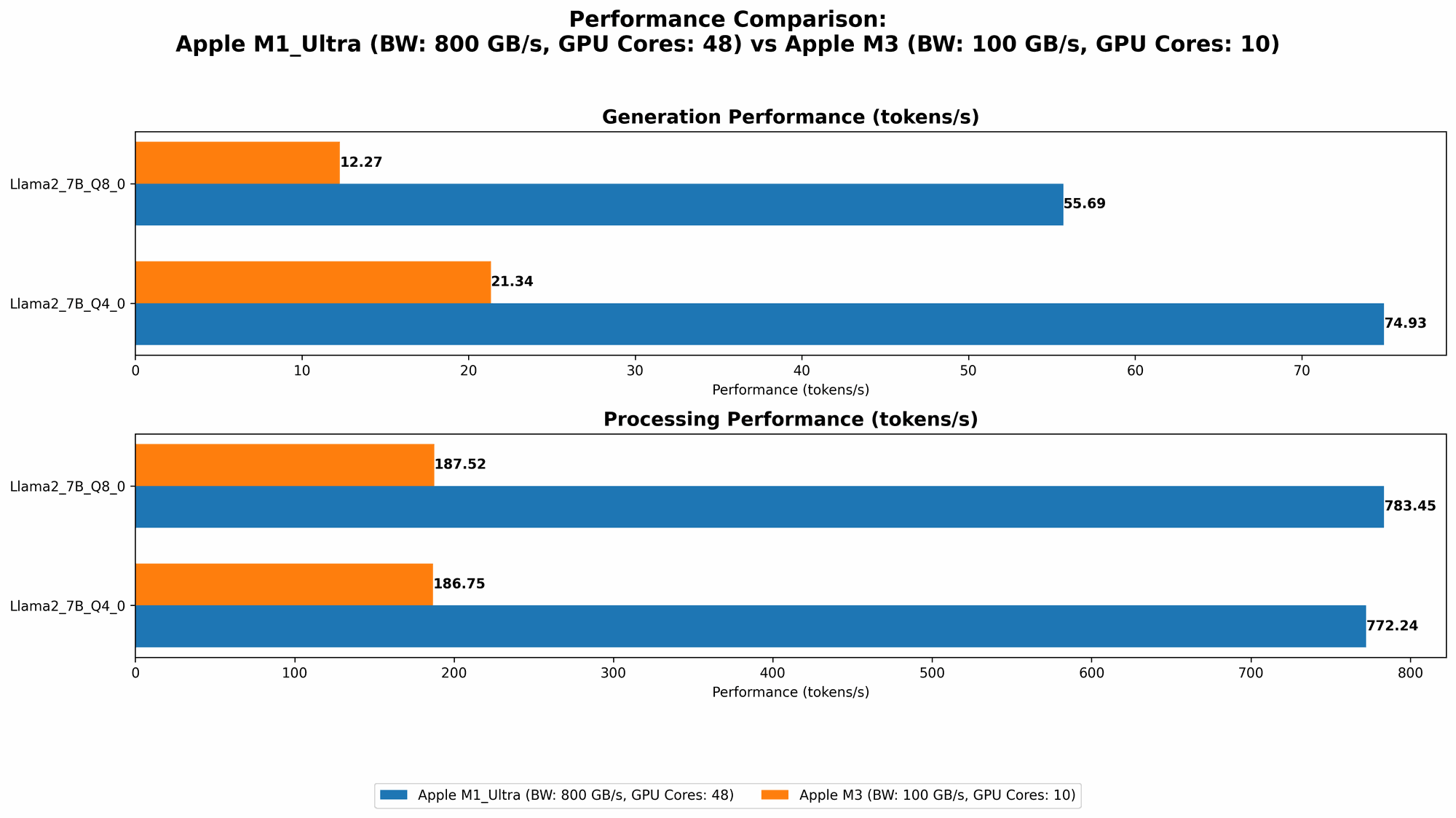

Comparison of M1 Ultra and M3: A Token Generation Race

Here are the speeds of both chips side by side for the Llama 2 7B model:

| Quantization | M1 Ultra Processing Speed (tokens/second) | M1 Ultra Generation Speed (tokens/second) | M3 Processing Speed (tokens/second) | M3 Generation Speed (tokens/second) |
|---|---|---|---|---|
| F16 | 875.81 | 33.92 | No data | No data |
| Q8_0 | 783.45 | 55.69 | 187.52 | 12.27 |
| Q4_0 | 772.24 | 74.93 | 186.75 | 21.34 |

The M1 Ultra leads by a wide margin in both processing and generation speed. At both quantization levels where M3 data exist, it processes prompts about 4.1 to 4.2 times faster and generates tokens about 3.5 to 4.5 times faster.
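The size of the gap can be computed directly from the comparison table. This short Python sketch divides the M1 Ultra's speeds by the M3's for the two quantization levels that have data for both chips; the dictionary layout is just a convenient container for the published numbers.

```python
# Llama 2 7B results (tokens/second) as (processing, generation) pairs.
# F16 is omitted because there is no M3 data for it.
RESULTS = {
    "Q8_0": {"M1 Ultra": (783.45, 55.69), "M3": (187.52, 12.27)},
    "Q4_0": {"M1 Ultra": (772.24, 74.93), "M3": (186.75, 21.34)},
}


def speedups(results, fast="M1 Ultra", slow="M3"):
    """Return {quantization: (processing_ratio, generation_ratio)}."""
    out = {}
    for quant, chips in results.items():
        (pp_fast, tg_fast), (pp_slow, tg_slow) = chips[fast], chips[slow]
        out[quant] = (pp_fast / pp_slow, tg_fast / tg_slow)
    return out


for quant, (pp, tg) in speedups(RESULTS).items():
    print(f"{quant}: processing x{pp:.2f}, generation x{tg:.2f}")
```

It reports about 4.18x (processing) and 4.54x (generation) for Q8_0, and about 4.14x and 3.51x for Q4_0.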

Imagine this: You're trying to build a ChatGPT-like chatbot. Going by these numbers, the M1 Ultra generates responses roughly 3.5 to 4.5 times faster than the M3, so users get their answers sooner, leading to a smoother and more enjoyable experience.
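To put the chatbot scenario in seconds, a rough model of one reply is the time to read the prompt at the processing speed plus the time to write the reply at the generation speed. The sketch below uses the Q4_0 numbers from the comparison table and a hypothetical 500-token prompt with a 250-token reply. It assumes speeds stay constant regardless of context length and ignores model loading and sampling overhead, so real latencies will differ.

```python
def response_time_s(prompt_tokens, output_tokens, pp_tok_s, tg_tok_s):
    """Rough end-to-end latency: process the prompt, then generate the reply."""
    return prompt_tokens / pp_tok_s + output_tokens / tg_tok_s


# Q4_0 figures for Llama 2 7B as (processing, generation) in tokens/second.
CHIPS = {"M1 Ultra": (772.24, 74.93), "M3": (186.75, 21.34)}

PROMPT_TOKENS = 500  # hypothetical prompt length (chat history + question)
OUTPUT_TOKENS = 250  # hypothetical reply length

for chip, (pp, tg) in CHIPS.items():
    seconds = response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    print(f"{chip}: ~{seconds:.1f} s per reply")
```

Under those assumptions the M1 Ultra answers in about 4 seconds and the M3 in about 14 seconds, with most of the time spent generating rather than reading the prompt.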

Strengths and Weaknesses of Each Chip

- M1 Ultra:

  - Strengths: Much higher processing power than the M3, 800 GB/s of memory bandwidth, and a clear edge in token generation speed for Llama 2 7B.

  - Weaknesses: Higher price point compared to the M3.

- M3:

  - Strengths: Much more affordable than the M1 Ultra, and a solid performer for smaller models like Llama 2 7B.

  - Weaknesses: Fewer GPU cores (10) and lower memory bandwidth (100 GB/s), which limit its throughput.

Practical Recommendations

- M1 Ultra: Excellent choice for heavy-duty LLM workloads that require speed and efficiency. If you're a developer working with complex models or running high-performance applications, the M1 Ultra is the way to go.

- M3: Ideal for developers who are starting out with LLMs or experimenting with smaller models. Its affordability and solid performance make it an attractive option for budget-conscious users.

Quantization: A Key to Unleashing LLM Performance

Quantization is a powerful technique that plays a crucial role in optimizing LLM performance.

What is Quantization?

In simple terms, quantization is like shrinking a massive LLM by using fewer bits to represent its weights. Imagine you have a gigantic file that's too big to load quickly. Quantization is like compressing that file into a smaller version without losing too much information.

How Quantization Impacts Performance

- Reduced Memory Footprint: Quantization significantly reduces the memory required to run the model, because each weight takes fewer bits to store. This is particularly advantageous for devices with limited memory, like the M3.

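- Improved Performance: A smaller model moves less data through memory for each generated token, so generation gets faster. The benchmarks bear this out: on the M1 Ultra, generation rises from 33.92 tokens/second with F16 to 74.93 with Q4_0, and on the M3 from 12.27 with Q8_0 to 21.34 with Q4_0. Prompt processing speed, by contrast, barely changes and even dips slightly.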
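The benchmark tables also let us check how closely the speedup follows the size reduction. This Python sketch compares how much smaller each format is with how much generation speed actually improved. It assumes approximate bits per weight (16 for F16, about 8.5 for Q8_0, about 4.5 for Q4_0) and that every tensor uses the same format, which real model files don't always do.

```python
# Approximate storage cost per weight for each format (assumption).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds (tokens/second) for Llama 2 7B.
GENERATION = {
    "M1 Ultra": {"F16": 33.92, "Q8_0": 55.69, "Q4_0": 74.93},
    "M3": {"Q8_0": 12.27, "Q4_0": 21.34},
}


def compare(chip: str, base: str, target: str) -> tuple[float, float]:
    """Return (how many times smaller target is, how many times faster it generates)."""
    size_ratio = BITS_PER_WEIGHT[base] / BITS_PER_WEIGHT[target]
    speed_ratio = GENERATION[chip][target] / GENERATION[chip][base]
    return size_ratio, speed_ratio


for chip, base, target in [
    ("M1 Ultra", "F16", "Q8_0"),
    ("M1 Ultra", "F16", "Q4_0"),
    ("M3", "Q8_0", "Q4_0"),
]:
    size_ratio, speed_ratio = compare(chip, base, target)
    print(f"{chip} {base}->{target}: {size_ratio:.2f}x smaller, "
          f"{speed_ratio:.2f}x faster generation")
```

Going from F16 to Q4_0 on the M1 Ultra shrinks the weights about 3.56x but speeds up generation only about 2.21x, and Q8_0 to Q4_0 on the M3 gives about 1.89x smaller for about 1.74x faster. Quantization clearly helps generation, but in these numbers the gain is less than proportional to the size reduction.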

The Trade-off: Accuracy vs. Performance

While quantization offers a significant boost in performance, it can sometimes impact model accuracy. This is because using fewer bits can result in some information loss. However, for many applications, the performance benefits of quantization outweigh the potential accuracy drop.
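The accuracy side of the trade-off can be illustrated by quantizing the same weights at two bit widths and measuring the round-trip error. This sketch uses plain symmetric absmax quantization (scale = max |w| / (2^(b-1) - 1)), a simplified stand-in rather than llama.cpp's exact Q8_0 or Q4_0 schemes, applied to one block of 32 synthetic Gaussian weights generated with a fixed seed.

```python
import random


def quantize_roundtrip(weights, bits):
    """Symmetric absmax quantization to `bits` bits, then back to floats."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(w) for w in weights)
    scale = amax / qmax if amax > 0 else 1.0
    return [max(-qmax, min(qmax, round(w / scale))) * scale for w in weights]


def mean_abs_error(original, restored):
    """Average absolute difference between two equal-length lists."""
    return sum(abs(x - y) for x, y in zip(original, restored)) / len(original)


rng = random.Random(0)  # fixed seed so the synthetic weights are repeatable
block = [rng.gauss(0.0, 0.02) for _ in range(32)]  # one 32-weight block

for bits in (8, 4):
    err = mean_abs_error(block, quantize_roundtrip(block, bits))
    print(f"{bits}-bit: mean absolute error = {err:.2e}")
```

The 4-bit version has far fewer levels to round to (7 on each side of zero instead of 127), so its reconstruction error comes out much larger than the 8-bit one. How much that extra error matters for a real model's output depends on the model and the task.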

Choosing the Right Quantization Level

The choice of quantization level depends on your specific needs.

- F16 (half-precision floating point): The most accurate of the three options, but also the largest and, in these benchmarks, the slowest at generation. A good baseline when you have memory to spare.

- Q8_0 (8-bit quantization): Provides a significant reduction in memory footprint and can improve performance considerably. Suitable for applications where memory is limited or speed is critical.

- Q4_0 (4-bit quantization): Delivers the smallest model size and can significantly boost performance on certain hardware. However, it can also result in a greater loss of accuracy.

Conclusion

The battle between the M1 Ultra and the M3 for LLM dominance is a fascinating tale. The M1 Ultra, with its 48 GPU cores and 800 GB/s of memory bandwidth, emerges as the champion in token generation speed for the Llama 2 7B model, especially with Q8_0 and Q4_0 quantization.

The M3, while boasting impressive processing power for its size, still falls behind in terms of raw speed. However, its affordability and performance make it a great option for budget-conscious developers and those working with smaller models.

Ultimately, the best chip for you depends on your specific needs and budget. The M1 Ultra is the champion for performance, while the M3 offers a compelling value proposition.

FAQ

What are Large Language Models (LLMs)?

LLMs are advanced artificial intelligence models trained on massive amounts of text data. They can understand, generate, and translate human language. Think of them as programs that can talk, write, and even code.

What is Token Generation Speed?

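Token generation speed measures how quickly an LLM produces output tokens, usually reported in tokens per second. It is distinct from prompt processing speed, which measures how fast the model works through its input. A higher token generation speed means faster responses and a more responsive AI experience.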
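Measuring tokens per second comes down to counting tokens and dividing by elapsed time. Below is a minimal Python sketch; `fake_generate` is a hypothetical stand-in that sleeps for a fixed time per token, and you would swap in a real model's streaming generator. Because the timer starts before the first token is requested, any prompt processing done inside the generator is included; for a figure comparable to the generation column in the tables, start timing only after the prompt has been processed.

```python
import time
from typing import Callable, Iterable, Iterator


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return the average tokens generated per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 20, delay_s: float = 0.01) -> Iterator[str]:
    """Hypothetical stand-in for a model's streaming output."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # pretend each token takes delay_s seconds to decode
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate):.1f} tokens/s")
```

With the defaults, the reported rate should be roughly 100 tokens/s or slightly less, since each fake token sleeps for at least 10 ms.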

What is Quantization?

Quantization is a technique for reducing the size of an LLM by using fewer bits to represent its weights. This can lead to significant performance improvements, particularly on devices with limited memory.

What are the Best Devices for Running LLMs?

The best devices for running LLMs depend on your specific needs and budget. High-end GPUs and powerful CPUs are great choices for demanding workloads. Apple's M-series chips, such as the M1 Ultra and M3 tested here, are also contenders, offering a balance of performance and efficiency.

What is the Future of LLM Computing?

The future of LLM computing is bright, with new chips and architectures constantly emerging. We can expect even faster speeds, more efficient processing, and a greater focus on reducing the memory footprint of these models.
