Apple M1 Ultra (800 GB/s, 48 GPU Cores) vs. Apple M2 Ultra (800 GB/s, 60 GPU Cores) for LLMs: Which Is Faster at Token Generation? Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, and with it comes the need for powerful hardware to run them. Apple's M1 Ultra and M2 Ultra chips are both designed to handle demanding workloads, including machine learning. But which one is better for running LLMs, specifically for token generation speed? This article compares the Apple M1 Ultra (800 GB/s memory bandwidth, 48 GPU cores) and the Apple M2 Ultra (800 GB/s memory bandwidth, 60 GPU cores) on Llama 2 7B, with additional M2 Ultra results for Llama 3 8B and 70B.

Why Token Generation Speed Matters

If you're a developer working with LLMs, token generation speed is critical. Think of tokens as the building blocks of text: typically whole words or word fragments. The faster your device can generate tokens, the faster your LLM can answer questions, translate languages, write code, and more. It's like having a super-fast typist: the quicker the typing, the sooner the final text is complete.

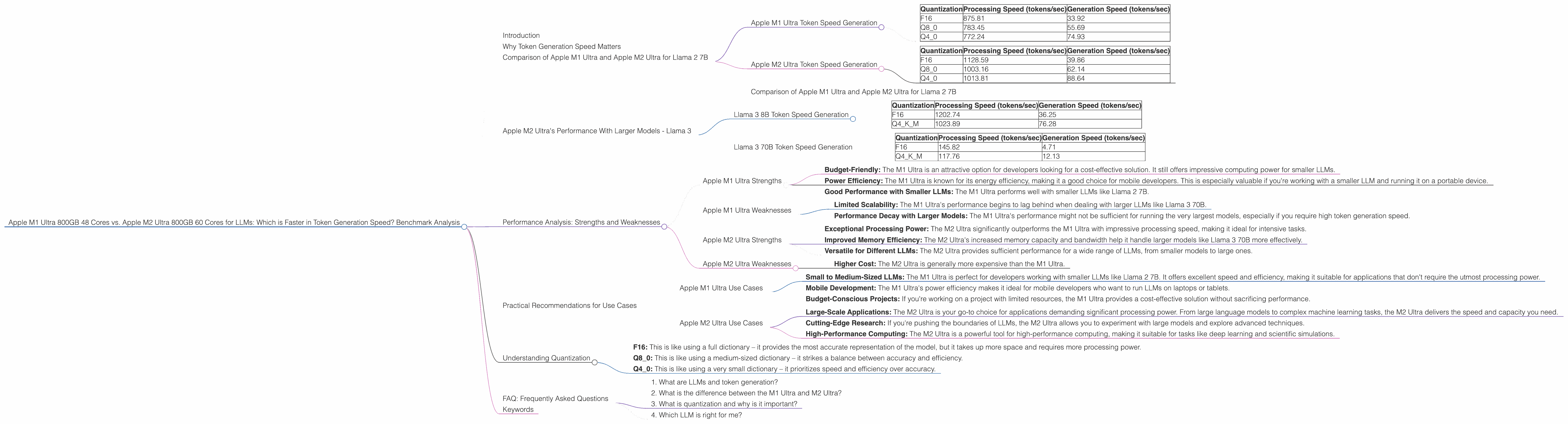

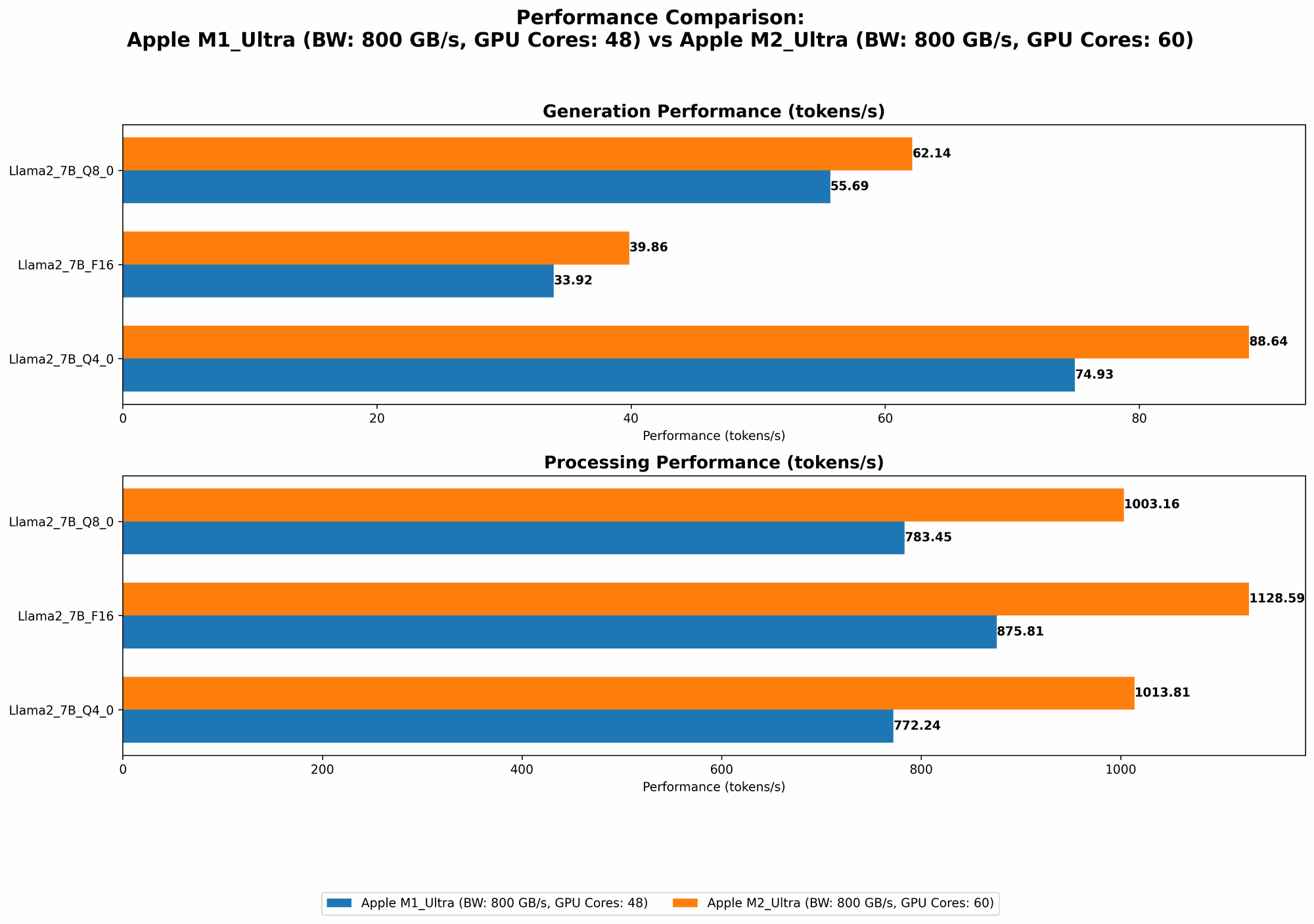

Comparison of Apple M1 Ultra and Apple M2 Ultra for Llama 2 7B

Apple M1 Ultra Token Speed Generation

The Apple M1 Ultra (800 GB/s memory bandwidth, 48 GPU cores) is a powerful chip, but it's starting to show its age compared to the M2 Ultra. Let's take a look at how it performs with the Llama 2 7B model:

| Quantization | Processing Speed (tokens/sec) | Generation Speed (tokens/sec) |
|---|---|---|
| F16 | 875.81 | 33.92 |
| Q8_0 | 783.45 | 55.69 |
| Q4_0 | 772.24 | 74.93 |

As you can see, the M1 Ultra handles Llama 2 7B comfortably. Processing speed stays above 770 tokens/sec at every quantization level, while generation speed more than doubles from 33.92 tokens/sec at F16 to 74.93 tokens/sec at Q4_0.
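To get a feel for what these two columns mean in practice, here is a rough latency sketch. It assumes a simple model in which the prompt is processed at the processing speed and the reply is produced at the generation speed; the 512-token prompt and 256-token reply are hypothetical values chosen for illustration, and real runs add overhead this ignores.

```python
# Measured Llama 2 7B speeds on the M1 Ultra, copied from the table above
# as (processing tokens/sec, generation tokens/sec).
M1_ULTRA_LLAMA2_7B = {
    "F16": (875.81, 33.92),
    "Q8_0": (783.45, 55.69),
    "Q4_0": (772.24, 74.93),
}


def estimate_latency(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Return (prompt_seconds, generation_seconds) for one request.

    Simplified model: the two phases run back to back at constant speed.
    """
    prompt_seconds = prompt_tokens / processing_tps
    generation_seconds = output_tokens / generation_tps
    return prompt_seconds, generation_seconds


# Hypothetical workload: a 512-token prompt and a 256-token reply.
for quant, (pp, tg) in M1_ULTRA_LLAMA2_7B.items():
    prompt_s, gen_s = estimate_latency(512, 256, pp, tg)
    print(f"{quant}: prompt {prompt_s:.2f}s + generation {gen_s:.2f}s "
          f"= {prompt_s + gen_s:.2f}s")
```

Under these assumptions the generation phase takes several times longer than the prompt phase at every quantization level, which is why generation speed is the number to watch for interactive use.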

Apple M2 Ultra Token Speed Generation

The Apple M2 Ultra (800 GB/s memory bandwidth, 60 GPU cores) builds on the M1 Ultra with a newer architecture and 12 more GPU cores. Here are the processing and generation numbers for Llama 2 7B:

| Quantization | Processing Speed (tokens/sec) | Generation Speed (tokens/sec) |
|---|---|---|
| F16 | 1128.59 | 39.86 |
| Q8_0 | 1003.16 | 62.14 |
| Q4_0 | 1013.81 | 88.64 |

The M2 Ultra outperforms the M1 Ultra in both processing and generation speed at every quantization level. The gain in generation speed is largest at Q4_0, where the M2 Ultra is about 18% faster (88.64 vs. 74.93 tokens/sec).

Llama 2 7B Summary: Apple M1 Ultra vs. Apple M2 Ultra

The M2 Ultra clearly leads in processing speed, running roughly 28% to 31% faster than the M1 Ultra depending on quantization. Both chips generate tokens at usable rates, but the M2 Ultra keeps the edge with about 12% to 18% faster generation; the gap is widest at Q4_0 and narrowest at Q8_0.
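The relative gains can be recomputed directly from the two Llama 2 7B tables. The sketch below does only that arithmetic; the dictionary and function names are illustrative.

```python
# (processing tokens/sec, generation tokens/sec) from the two Llama 2 7B tables.
M1_ULTRA = {"F16": (875.81, 33.92), "Q8_0": (783.45, 55.69), "Q4_0": (772.24, 74.93)}
M2_ULTRA = {"F16": (1128.59, 39.86), "Q8_0": (1003.16, 62.14), "Q4_0": (1013.81, 88.64)}


def percent_faster(new, old):
    """How much faster `new` is than `old`, in percent."""
    return (new / old - 1.0) * 100.0


# Per quantization level: (processing gain %, generation gain %).
speedups = {}
for quant, (m1_pp, m1_tg) in M1_ULTRA.items():
    m2_pp, m2_tg = M2_ULTRA[quant]
    speedups[quant] = (percent_faster(m2_pp, m1_pp), percent_faster(m2_tg, m1_tg))

for quant, (pp_gain, tg_gain) in speedups.items():
    print(f"{quant}: processing +{pp_gain:.1f}%, generation +{tg_gain:.1f}%")
```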

Apple M2 Ultra's Performance With Larger Models - Llama 3

The M2 Ultra doesn't just excel with Llama 2 7B; it also runs Llama 3 8B at similar speeds and can even handle the much larger Llama 3 70B.

Llama 3 8B Token Speed Generation

Let's see how the M2 Ultra performs with the Llama 3 8B model:

| Quantization | Processing Speed (tokens/sec) | Generation Speed (tokens/sec) |
|---|---|---|
| F16 | 1202.74 | 36.25 |
| Q4_K_M | 1023.89 | 76.28 |

The M2 Ultra delivers impressive performance with the Llama 3 8B model: processing speed exceeds 1,000 tokens/sec at both quantization levels, and generation reaches 76.28 tokens/sec with 4-bit quantization.

Llama 3 70B Token Speed Generation

This is the most demanding test in the set. Let's take a look:

| Quantization | Processing Speed (tokens/sec) | Generation Speed (tokens/sec) |
|---|---|---|
| F16 | 145.82 | 4.71 |
| Q4_K_M | 117.76 | 12.13 |

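The M2 Ultra's processing speed remains usable for Llama 3 70B, but its token generation speed is far lower than with the smaller models: 4.71 tokens/sec at F16 and 12.13 tokens/sec with 4-bit quantization. Generating each token requires reading essentially all of the model's weights from memory, so the likely bottleneck is memory bandwidth rather than compute. Still, it's important to remember that running a 70B model locally is an ambitious task!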
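A back-of-the-envelope check helps put the 70B generation numbers in context. The sketch below assumes that every generated token requires streaming all model weights from memory once, so the ceiling is roughly bandwidth divided by model size. The 800 GB/s figure is the bandwidth named in the title; the 70 billion parameter count is nominal, and the 4.8 bits per weight for the 4-bit K-quant row is an approximation, not a measured value.

```python
BANDWIDTH_GB_PER_S = 800.0   # memory bandwidth named in the article title
PARAMS_BILLIONS = 70.0       # nominal parameter count (assumption)

# Approximate bits stored per weight. The Q4_K_M value is a rough assumption.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}

# Measured generation speeds from the Llama 3 70B table above
# (Q4_K_M is the 4-bit K-quant row).
MEASURED_TPS = {"F16": 4.71, "Q4_K_M": 12.13}


def bandwidth_bound_tps(params_billions, bits_per_weight, bandwidth_gb_per_s):
    """Upper bound on tokens/sec if each token reads all weights once."""
    model_gb = params_billions * bits_per_weight / 8.0
    return bandwidth_gb_per_s / model_gb


for quant, bits in BITS_PER_WEIGHT.items():
    bound = bandwidth_bound_tps(PARAMS_BILLIONS, bits, BANDWIDTH_GB_PER_S)
    share = MEASURED_TPS[quant] / bound
    print(f"{quant}: bound ~{bound:.1f} tok/s, measured {MEASURED_TPS[quant]} "
          f"({share:.0%} of bound)")
```

Under these assumptions the measured speeds sit below the estimated ceiling but in the same range, which is consistent with generation being limited mainly by how fast weights can be read from memory.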

Performance Analysis: Strengths and Weaknesses

Apple M1 Ultra Strengths

- Budget-Friendly: The M1 Ultra is an attractive option for developers looking for a cost-effective solution. It still offers impressive computing power for smaller LLMs.

- Power Efficiency: The M1 Ultra is known for its energy efficiency, making it a good choice for a workstation that runs local inference for long stretches. Note that it ships in the Mac Studio desktop, not in portable devices.

- Good Performance with Smaller LLMs: The M1 Ultra performs well with smaller LLMs like Llama 2 7B.

Apple M1 Ultra Weaknesses

- Limited Scalability: The M1 Ultra is likely to fall further behind with larger LLMs like Llama 3 70B, although this comparison includes no M1 Ultra measurements for that model.

- Performance Decay with Larger Models: The M1 Ultra's performance might not be sufficient for running the very largest models, especially if you require high token generation speed.

Apple M2 Ultra Strengths

- Exceptional Processing Power: The M2 Ultra significantly outperforms the M1 Ultra with impressive processing speed, making it ideal for intensive tasks.

- Larger Memory Capacity: The M2 Ultra can be configured with more unified memory than the M1 Ultra (up to 192 GB versus 128 GB), which gives larger models like Llama 3 70B more room. Memory bandwidth is the same 800 GB/s on both chips.

- Versatile for Different LLMs: The M2 Ultra provides sufficient performance for a wide range of LLMs, from smaller models to large ones.

Apple M2 Ultra Weaknesses

- Higher Cost: The M2 Ultra is generally more expensive than the M1 Ultra.

Practical Recommendations for Use Cases

Apple M1 Ultra Use Cases

- Small to Medium-Sized LLMs: The M1 Ultra is perfect for developers working with smaller LLMs like Llama 2 7B. It offers excellent speed and efficiency, making it suitable for applications that don't require the utmost processing power.

- Efficient Desktop Development: The M1 Ultra's power efficiency makes it a good fit for developers who want to run LLMs locally on a desktop workstation. It is a Mac Studio chip and is not available in laptops or tablets.

- Budget-Conscious Projects: If you're working on a project with limited resources, the M1 Ultra provides a cost-effective solution without sacrificing performance.

Apple M2 Ultra Use Cases

- Large-Scale Applications: The M2 Ultra is your go-to choice for applications demanding significant processing power. From large language models to complex machine learning tasks, the M2 Ultra delivers the speed and capacity you need.

- Cutting-Edge Research: If you're pushing the boundaries of LLMs, the M2 Ultra allows you to experiment with large models and explore advanced techniques.

- High-Performance Computing: The M2 Ultra is a powerful tool for high-performance computing, making it suitable for tasks like deep learning and scientific simulations.

Understanding Quantization

Quantization plays a major role in LLM performance. It's like using a smaller dictionary: a lighter dictionary is easier to carry and faster to search. The technique stores each model weight with fewer bits, which shrinks the model and, in the benchmarks above, raises generation speed considerably.

- F16: This is like using a full dictionary. Weights are stored as 16-bit floating-point numbers, which gives the most accurate representation of the three but takes up the most space and memory bandwidth.

- Q8_0: This is like using a medium-sized dictionary. Weights are stored in about 8 bits each, striking a balance between accuracy and efficiency.

- Q4_0: This is like using a very small dictionary. Weights are stored in about 4 bits each, prioritizing speed and size over accuracy.
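In concrete terms, the three formats differ in how many bits they spend per weight. The sketch below estimates the size of the weights for a 7B model under each format. The parameter count is nominal, and the 8.5 and 4.5 bits per weight assume the usual block layout for these formats (32 weights plus one 16-bit scale per block), so treat the results as approximate.

```python
NUM_PARAMS = 7e9  # nominal parameter count for a "7B" model (assumption)

# Approximate bits stored per weight, including per-block scale overhead.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_size_gb(num_params, bits_per_weight):
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8.0 / 1e9


for quant, bits in BITS_PER_WEIGHT.items():
    print(f"{quant}: ~{weights_size_gb(NUM_PARAMS, bits):.1f} GB")
```

A smaller file means fewer bytes to read from memory for every generated token, which is why generation speed rises as the bit width drops.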

FAQ: Frequently Asked Questions

1. What are LLMs and token generation?

LLMs (large language models) are AI systems trained on massive amounts of text data. They can understand and generate human-like text. Tokens are the units of text, typically words or word fragments, that an LLM reads and writes. Splitting text into tokens is called tokenization; token generation is the step where the model produces new tokens one at a time to build its output.

2. What is the difference between the M1 Ultra and M2 Ultra?

The M2 Ultra is the newer generation of Apple's Ultra chips. Compared with the M1 Ultra, it offers more GPU cores (60 versus 48 in the configurations tested here), a higher maximum memory capacity, and better performance. Memory bandwidth is 800 GB/s on both.

3. What is quantization and why is it important?

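Quantization is a technique that compresses LLM weights by storing them with fewer bits. In the benchmarks above, this raises token generation speed substantially (for example, from 33.92 to 74.93 tokens/sec on the M1 Ultra when going from F16 to Q4_0), while processing speed drops slightly.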
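To make the idea concrete, here is a toy round trip through 8-bit quantization. It is a simplified sketch of one common approach (a single shared scale per block of weights, chosen from the largest absolute value), not the exact scheme behind any of the formats benchmarked here; the function names and sample weights are made up for illustration.

```python
def quantize_block(weights):
    """Map floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero block, where any scale works.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quants = [round(w / scale) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quants]


# Hypothetical block of weights.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

scale, quants = quantize_block(block)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("integers:", quants)
print(f"largest round-trip error: {max_error:.5f}")
```

Each weight now needs one small integer instead of a 16-bit float, at the cost of a rounding error no larger than half the scale. Using fewer bits shrinks the storage further and makes that error larger.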

4. Which LLM is right for me?

The best LLM for you depends on your specific needs. Smaller LLMs like Llama 2 7B are great for personal use, while larger LLMs like Llama 3 70B are more suitable for research and advanced applications.

Keywords

Apple M1 Ultra, Apple M2 Ultra, LLM, token generation, Llama 2 7B, Llama 3 8B, Llama 3 70B, GPU cores, memory bandwidth, F16, Q8_0, Q4_0, Q4_K_M, quantization, performance, benchmark, comparison, speed, efficiency, practical recommendations, use cases, developer, geeky, funny.