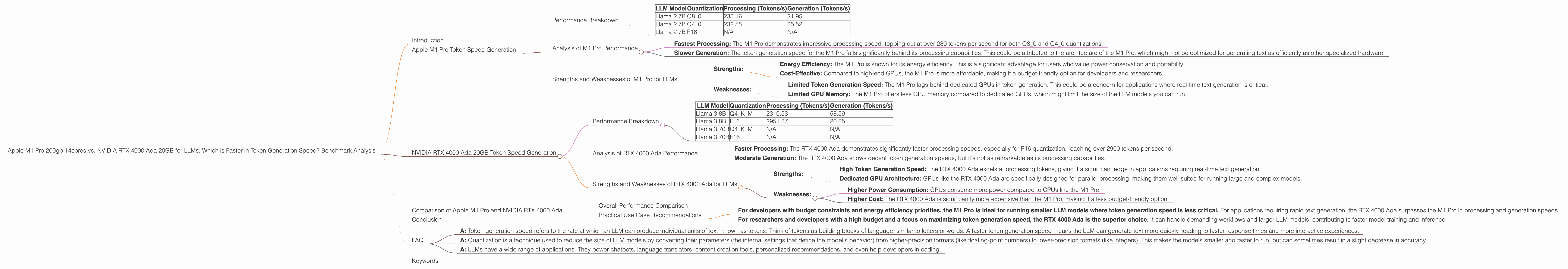

Apple M1 Pro (200 GB/s, 14 GPU Cores) vs. NVIDIA RTX 4000 Ada 20GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

For large language models (LLMs), token generation speed largely determines how responsive an application feels. LLMs can generate human-like text, translate languages, write creative content, and answer questions, but how fast they do so depends heavily on the hardware they run on. This article compares two popular devices for running LLMs: the Apple M1 Pro (200 GB/s memory bandwidth, 14 GPU cores) and the NVIDIA RTX 4000 Ada 20GB GPU. We'll analyze their prompt processing and token generation speeds on the Llama models for which benchmark data is available, and explore the pros and cons of each device for LLM workloads.

Think of LLMs as sophisticated robots that understand and generate text. The better the computer, the faster and smoother these robots can work. We'll see which computer-brain, the M1 Pro or the RTX 4000 Ada, is better equipped for tackling the intricacies of LLMs.

Apple M1 Pro Token Generation Speed

The Apple M1 Pro is a powerful processor that offers a compelling blend of performance and efficiency. We'll examine its capabilities with various LLM models, focusing on token generation speed.

Performance Breakdown

Let's dive into the numbers. The benchmark data for the M1 Pro chip is as follows:

| LLM Model | Quantization | Processing (Tokens/s) | Generation (Tokens/s) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 235.16 | 21.95 |
| Llama 2 7B | Q4_0 | 232.55 | 35.52 |
| Llama 2 7B | F16 | N/A | N/A |

Note: No F16 data is available for Llama 2 7B on the M1 Pro, so we'll focus on the Q8_0 and Q4_0 results.

Analysis of M1 Pro Performance

- Fast Processing: The M1 Pro processes prompts at over 230 tokens per second with both Q8_0 and Q4_0 quantization.

- Slower Generation: Token generation is far slower than prompt processing: 21.95 tokens per second with Q8_0 and 35.52 with Q4_0. Generation produces one token at a time and has to read the model weights for each one, so it tends to be limited by memory bandwidth rather than raw compute. That would also explain why the smaller Q4_0 model generates faster than Q8_0.
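One common way to reason about generation speed is a memory-bandwidth ceiling: if every generated token requires reading all model weights once, tokens per second cannot exceed bandwidth divided by model size. The sketch below applies that rule of thumb to the M1 Pro numbers. It assumes the 200 figure in the device name is memory bandwidth in GB/s, and the model file sizes are approximate values that do not come from the benchmark table.

```python
# Rough ceiling on generation speed when decoding is memory-bandwidth bound:
# each generated token requires reading (roughly) all model weights once.

GIB = 1024 ** 3  # bytes per GiB


def max_tokens_per_second(bandwidth_gb_s: float, model_size_gib: float) -> float:
    """Bandwidth-bound ceiling: bytes available per second / bytes read per token."""
    bytes_per_second = bandwidth_gb_s * 1e9
    bytes_per_token = model_size_gib * GIB
    return bytes_per_second / bytes_per_token


# Assumption: 200 GB/s nominal memory bandwidth for the M1 Pro.
M1_PRO_BANDWIDTH_GB_S = 200.0

# Assumption: approximate Llama 2 7B file sizes in GiB (not from the table).
MODEL_SIZE_GIB = {"Q8_0": 6.67, "Q4_0": 3.56}

# Measured generation speeds from the table above (tokens/s).
MEASURED = {"Q8_0": 21.95, "Q4_0": 35.52}

CEILINGS = {
    quant: max_tokens_per_second(M1_PRO_BANDWIDTH_GB_S, size)
    for quant, size in MODEL_SIZE_GIB.items()
}

for quant, ceiling in CEILINGS.items():
    share = MEASURED[quant] / ceiling
    print(f"{quant}: ceiling ~{ceiling:.1f} tok/s, "
          f"measured {MEASURED[quant]} tok/s ({share:.0%} of ceiling)")
```

Under these assumptions the measured speeds sit below, but within reach of, the estimated ceiling, which is consistent with generation being bandwidth-limited. It does not prove it; the estimate ignores the KV cache, compute time, and framework overhead.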

Strengths and Weaknesses of M1 Pro for LLMs

- Strengths:

  - Energy Efficiency: The M1 Pro is known for its energy efficiency. This is a significant advantage for users who value power conservation and portability.

  - Cost-Effective: Compared to high-end GPUs, the M1 Pro is more affordable, making it a budget-friendly option for developers and researchers.

- Weaknesses:

  - Limited Token Generation Speed: In the 4-bit benchmarks here, the M1 Pro generates 35.52 tokens per second, compared with 58.59 for the RTX 4000 Ada (measured on a slightly larger model). This could be a concern for applications where real-time text generation is critical.

  - Limited GPU Memory: The M1 Pro has no dedicated GPU memory. Its GPU shares unified memory with the CPU and the rest of the system, which might limit the size of the LLM models you can run.

NVIDIA RTX 4000 Ada 20GB Token Generation Speed

The NVIDIA RTX 4000 Ada 20GB is a powerful GPU designed for high-performance computing tasks, including LLM inference. Let's see how it stacks up in terms of token generation speed.

Performance Breakdown

Here's a breakdown of the benchmark data for the RTX 4000 Ada:

| LLM Model | Quantization | Processing (Tokens/s) | Generation (Tokens/s) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 2310.53 | 58.59 |
| Llama 3 8B | F16 | 2951.87 | 20.85 |
| Llama 3 70B | Q4_K_M | N/A | N/A |
| Llama 3 70B | F16 | N/A | N/A |

Note: Data for Llama 3 70B on the RTX 4000 Ada is not available.
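The source does not say why the 70B results are missing, but a quick size estimate suggests one plausible reason: the weights alone would exceed the card's 20 GB. The sketch below is back-of-the-envelope arithmetic only. The parameter counts are nominal, and the figure of about 4.85 bits per weight for Q4_K_M is an assumed average, not a value from the benchmark.

```python
def weights_size_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10^9 bytes).

    Ignores the KV cache, activations, and runtime overhead, so real
    memory use is higher than this estimate.
    """
    return n_params_billion * bits_per_weight / 8


VRAM_GB = 20.0  # RTX 4000 Ada 20GB

# Assumptions: nominal parameter counts; ~4.85 bits/weight for Q4_K_M.
CASES = [
    ("Llama 3 8B", 8.0, "Q4_K_M", 4.85),
    ("Llama 3 8B", 8.0, "F16", 16.0),
    ("Llama 3 70B", 70.0, "Q4_K_M", 4.85),
    ("Llama 3 70B", 70.0, "F16", 16.0),
]

for model, params_b, quant, bits in CASES:
    size = weights_size_gb(params_b, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{model} {quant}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

By this estimate both 8B variants fit within 20 GB, while both 70B variants do not, which matches the pattern of available and missing rows in the table.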

Analysis of RTX 4000 Ada Performance

- Faster Processing: The RTX 4000 Ada demonstrates significantly faster processing speeds, especially at F16 precision, where it reaches over 2,900 tokens per second.

- Moderate Generation: The RTX 4000 Ada generates 58.59 tokens per second with Q4_K_M and 20.85 with F16. These are decent speeds, but far less remarkable than its processing throughput.

Strengths and Weaknesses of RTX 4000 Ada for LLMs

- Strengths:

  - High Throughput: The RTX 4000 Ada excels at prompt processing and also generates tokens faster than the M1 Pro at 4-bit quantization, giving it an edge in applications requiring real-time text generation.

  - Dedicated GPU Architecture: GPUs like the RTX 4000 Ada are specifically designed for parallel processing, making them well-suited for running large and complex models.

- Weaknesses:

  - Higher Power Consumption: A discrete workstation GPU typically draws more power than an integrated system-on-a-chip like the M1 Pro.

  - Higher Cost: The RTX 4000 Ada is significantly more expensive than the M1 Pro, making it a less budget-friendly option.

Comparison of Apple M1 Pro and NVIDIA RTX 4000 Ada

Overall Performance Comparison

Let's compare the performance of the two devices:

Processing: The RTX 4000 Ada outperforms the M1 Pro by a large margin, processing prompts roughly ten times faster at 4-bit quantization (2310.53 vs. 232.55 tokens per second). This translates to faster prompt ingestion and a shorter wait before the first token appears. Keep in mind that the two devices were benchmarked on different models (Llama 3 8B vs. Llama 2 7B), so the comparison is indicative rather than exact.

Generation: At 4-bit quantization, the RTX 4000 Ada generates 58.59 tokens per second against the M1 Pro's 35.52, about 1.65 times faster. This advantage matters for applications requiring rapid text generation, such as real-time chatbots or content creation tools.

Quantization: The two devices were tested at different precision levels, and on both, lower-bit quantization speeds up generation. The M1 Pro goes from 21.95 tokens per second with Q8_0 to 35.52 with Q4_0, while the RTX 4000 Ada goes from 20.85 with F16 to 58.59 with Q4_K_M for Llama 3 8B.
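The ratios behind this comparison can be reproduced directly from the two benchmark tables. The snippet below is a small sketch that does so for the 4-bit rows; the dictionary layout and variable names are illustrative choices, and the comparison remains cross-model (Llama 2 7B on the M1 Pro, Llama 3 8B on the RTX 4000 Ada).

```python
# (device, model, quantization) -> (processing tok/s, generation tok/s)
# Values copied from the two benchmark tables in this article.
BENCHMARKS = {
    ("M1 Pro", "Llama 2 7B", "Q8_0"): (235.16, 21.95),
    ("M1 Pro", "Llama 2 7B", "Q4_0"): (232.55, 35.52),
    ("RTX 4000 Ada", "Llama 3 8B", "Q4_K_M"): (2310.53, 58.59),
    ("RTX 4000 Ada", "Llama 3 8B", "F16"): (2951.87, 20.85),
}

m1_processing, m1_generation = BENCHMARKS[("M1 Pro", "Llama 2 7B", "Q4_0")]
rtx_processing, rtx_generation = BENCHMARKS[("RTX 4000 Ada", "Llama 3 8B", "Q4_K_M")]

# How many times faster the RTX 4000 Ada is in each phase (4-bit rows).
processing_ratio = rtx_processing / m1_processing
generation_ratio = rtx_generation / m1_generation

print(f"Processing: RTX 4000 Ada is {processing_ratio:.1f}x the M1 Pro")
print(f"Generation: RTX 4000 Ada is {generation_ratio:.2f}x the M1 Pro")
```

The gap is much wider for prompt processing than for generation, so how much the faster device helps in practice depends on whether a workload is dominated by long prompts or long outputs.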

Practical Use Case Recommendations

- For developers with budget constraints and energy efficiency priorities, the M1 Pro is a good fit for running smaller LLM models where token generation speed is less critical.

- For researchers and developers with a higher budget and a focus on maximizing token generation speed, the RTX 4000 Ada is the stronger choice. Its faster prompt processing and generation suit demanding inference workflows. Note that these benchmarks cover inference only, not training, and include no results for models larger than 8B on this card.

Conclusion

Choosing the right hardware for your LLM workloads depends on your specific requirements and budget. The Apple M1 Pro offers an efficient and cost-effective solution for running smaller models, while the NVIDIA RTX 4000 Ada delivers much faster prompt processing and noticeably faster generation for demanding inference workloads. Ultimately, the best device for you comes down to finding the balance between performance, cost, and energy efficiency.

FAQ

Q: What is token generation speed?

A: Token generation speed refers to the rate at which an LLM can produce individual units of text, known as tokens. Think of tokens as building blocks of language, typically words or pieces of words. A faster token generation speed means the LLM can generate text more quickly, leading to faster response times and more interactive experiences.
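To see what these speeds mean for a user, the sketch below turns tokens per second into an approximate response time: the prompt is processed at the processing speed, then the answer is produced at the generation speed. The prompt and output lengths (512 and 256 tokens) are hypothetical values chosen for illustration, and the model ignores load time and other overhead.

```python
def response_time_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimated time to ingest the prompt and then generate the full answer."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical request size, chosen only for illustration.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256

# Speeds taken from the 4-bit rows of the benchmark tables (tokens/s).
m1_seconds = response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 232.55, 35.52)
rtx_seconds = response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 2310.53, 58.59)

print(f"M1 Pro (Llama 2 7B, Q4_0):         ~{m1_seconds:.1f} s")
print(f"RTX 4000 Ada (Llama 3 8B, Q4_K_M): ~{rtx_seconds:.1f} s")
```

For a request of this shape, most of the time on both devices goes to generation rather than prompt processing, which is why generation speed is the headline number for chat-style use.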

Q: What is quantization?

A: Quantization is a technique used to reduce the size of LLM models by converting their parameters (the internal settings that define the model's behavior) from higher-precision formats (like floating-point numbers) to lower-precision formats (like integers). This makes the models smaller and faster to run, but can sometimes result in a slight decrease in accuracy.
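As a rough illustration of the idea, the sketch below quantizes a small block of weights to 8-bit integers with one shared scale and then restores them. It is a simplified example, not the actual implementation used by any particular runtime, and the weight values are made up. Block-based formats such as Q8_0 follow a similar principle of storing integer codes plus a per-block scale.

```python
def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Symmetric 8-bit quantization: one shared scale plus integer codes."""
    max_abs = max(abs(v) for v in values)
    # Map the largest magnitude to 127; guard against an all-zero block.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floating-point values from the integer codes."""
    return [scale * c for c in codes]


# Made-up example weights for one block.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.05]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:    ", codes)
print("restored: ", [round(r, 4) for r in restored])
print(f"max error: {max_error:.5f} (at most half a step, step = {scale:.5f})")
```

Each weight now needs 8 bits instead of 16 or 32, at the cost of a rounding error of at most half a quantization step. Using fewer bits per weight shrinks the model further and increases that error.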

Q: What are some real-world applications of LLMs?

A: LLMs have a wide range of applications. They power chatbots, language translators, content creation tools, personalized recommendations, and even help developers in coding.

Keywords

LLM, token generation, speed, performance, Apple M1 Pro, NVIDIA RTX 4000 Ada, benchmark, comparison, Llama 2, Llama 3, quantization, F16, Q8_0, Q4_0, Q4_K_M, processing, generation, GPU, CPU, cost, energy efficiency, developer, researcher, use case, recommendation, chatbot, content creation, real-time, inference, model, training, accuracy, efficiency.