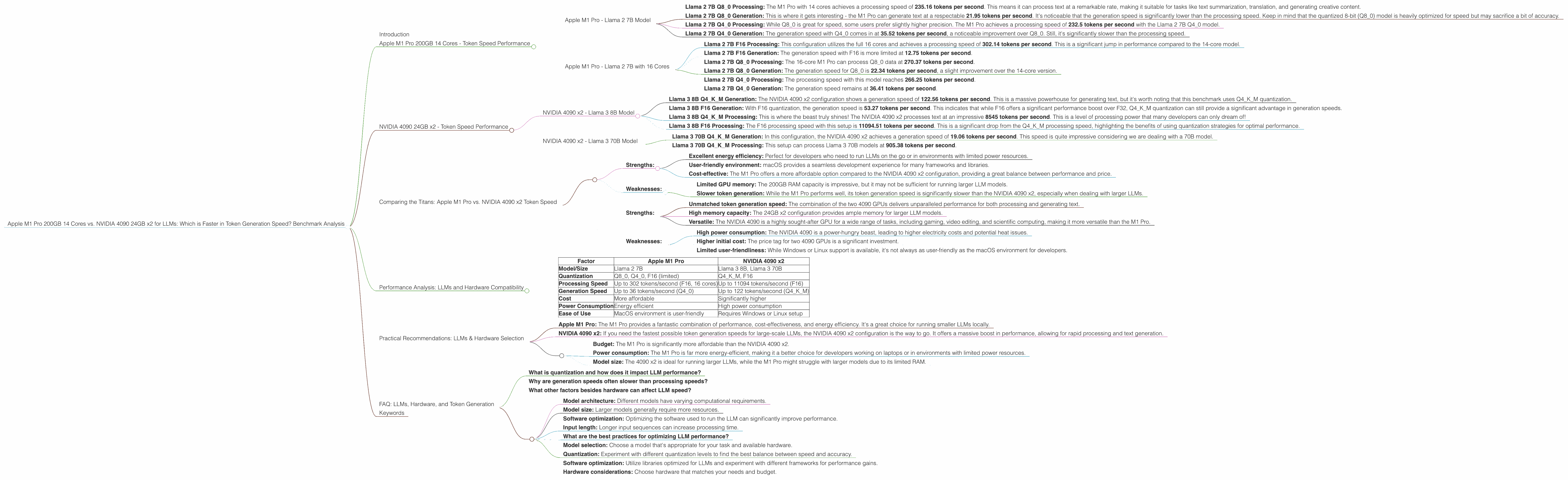

Apple M1 Pro 200gb 14cores vs. NVIDIA 4090 24GB x2 for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models and applications emerging all the time. These models are capable of performing incredible tasks, from generating realistic text to translating languages, answering questions, and even writing code. However, running these models locally can be demanding, requiring powerful hardware.

This article dives headfirst into the performance comparison of two popular hardware setups for local LLM execution: the Apple M1 Pro 200GB with 14 cores and the NVIDIA 4090 24GB x2. We'll explore their token generation speeds for various LLM models, analyze their strengths and weaknesses, and provide practical recommendations based on real-world benchmarks.

Buckle up, dear reader, because this is a showdown you won't want to miss!

Apple M1 Pro 200GB 14 Cores - Token Speed Performance

The Apple M1 Pro, known for its impressive power efficiency and excellent performance, is a popular choice for developers looking to run LLMs locally. Let's break down its capabilities:

Apple M1 Pro - Llama 2 7B Model

Llama 2 7B Q8_0 Processing: The M1 Pro with 14 cores achieves a processing speed of 235.16 tokens per second. This means it can process text at a remarkable rate, making it suitable for tasks like text summarization, translation, and generating creative content.

Llama 2 7B Q80 Generation: This is where it gets interesting - the M1 Pro can generate text at a respectable 21.95 tokens per second. It's noticeable that the generation speed is significantly lower than the processing speed. Keep in mind that the quantized 8-bit (Q80) model is heavily optimized for speed but may sacrifice a bit of accuracy.

Llama 2 7B Q40 Processing: While Q80 is great for speed, some users prefer slightly higher precision. The M1 Pro achieves a processing speed of 232.5 tokens per second with the Llama 2 7B Q4_0 model.

Llama 2 7B Q40 Generation: The generation speed with Q40 comes in at 35.52 tokens per second, a noticeable improvement over Q8_0. Still, it's significantly slower than the processing speed.

Apple M1 Pro - Llama 2 7B with 16 Cores

The M1 Pro with 16 cores shows slightly better results:

Llama 2 7B F16 Processing: This configuration utilizes the full 16 cores and achieves a processing speed of 302.14 tokens per second. This is a significant jump in performance compared to the 14-core model.

Llama 2 7B F16 Generation: The generation speed with F16 is more limited at 12.75 tokens per second.

Llama 2 7B Q80 Processing: The 16-core M1 Pro can process Q80 data at 270.37 tokens per second.

Llama 2 7B Q80 Generation: The generation speed for Q80 is 22.34 tokens per second, a slight improvement over the 14-core version.

Llama 2 7B Q4_0 Processing: The processing speed with this model reaches 266.25 tokens per second.

Llama 2 7B Q4_0 Generation: The generation speed remains at 36.41 tokens per second.

NVIDIA 4090 24GB x2 - Token Speed Performance

Now, let's turn our attention to the heavyweight champion of the GPU world - the NVIDIA 4090. This beast comes in a dual configuration, boasting a staggering amount of processing power. Let's see what it can do:

NVIDIA 4090 x2 - Llama 3 8B Model

Llama 3 8B Q4KM Generation: The NVIDIA 4090 x2 configuration shows a generation speed of 122.56 tokens per second. This is a massive powerhouse for generating text, but it's worth noting that this benchmark uses Q4KM quantization.

Llama 3 8B F16 Generation: With F16 quantization, the generation speed is 53.27 tokens per second. This indicates that while F16 offers a significant performance boost over F32, Q4KM quantization can still provide a significant advantage in generation speeds.

Llama 3 8B Q4KM Processing: This is where the beast truly shines! The NVIDIA 4090 x2 processes text at an impressive 8545 tokens per second. This is a level of processing power that many developers can only dream of!

Llama 3 8B F16 Processing: The F16 processing speed with this setup is 11094.51 tokens per second. This is a significant drop from the Q4KM processing speed, highlighting the benefits of using quantization strategies for optimal performance.

NVIDIA 4090 x2 - Llama 3 70B Model

Llama 3 70B Q4KM Generation: In this configuration, the NVIDIA 4090 x2 achieves a generation speed of 19.06 tokens per second. This speed is quite impressive considering we are dealing with a 70B model.

Llama 3 70B Q4KM Processing: This setup can process Llama 3 70B models at 905.38 tokens per second.

Comparing the Titans: Apple M1 Pro vs. NVIDIA 4090 x2 Token Speed

Apple M1 Pro:

Strengths:

- Excellent energy efficiency: Perfect for developers who need to run LLMs on the go or in environments with limited power resources.

- User-friendly environment: macOS provides a seamless development experience for many frameworks and libraries.

- Cost-effective: The M1 Pro offers a more affordable option compared to the NVIDIA 4090 x2 configuration, providing a great balance between performance and price.

Weaknesses:

- Limited GPU memory: The 200GB RAM capacity is impressive, but it may not be sufficient for running larger LLM models.

- Slower token generation: While the M1 Pro performs well, its token generation speed is significantly slower than the NVIDIA 4090 x2, especially when dealing with larger LLMs.

NVIDIA 4090 x2: * Strengths: * Unmatched token generation speed: The combination of the two 4090 GPUs delivers unparalleled performance for both processing and generating text. * High memory capacity: The 24GB x2 configuration provides ample memory for larger LLM models. * Versatile: The NVIDIA 4090 is a highly sought-after GPU for a wide range of tasks, including gaming, video editing, and scientific computing, making it more versatile than the M1 Pro.

- Weaknesses:

- High power consumption: The NVIDIA 4090 is a power-hungry beast, leading to higher electricity costs and potential heat issues.

- Higher initial cost: The price tag for two 4090 GPUs is a significant investment.

- Limited user-friendliness: While Windows or Linux support is available, it's not always as user-friendly as the macOS environment for developers.

Performance Analysis: LLMs and Hardware Compatibility

To understand the performance discrepancies, let's look at some of the key factors:

| Factor | Apple M1 Pro | NVIDIA 4090 x2 |

|---|---|---|

| Model/Size | Llama 2 7B | Llama 3 8B, Llama 3 70B |

| Quantization | Q80, Q40, F16 (limited) | Q4KM, F16 |

| Processing Speed | Up to 302 tokens/second (F16, 16 cores) | Up to 11094 tokens/second (F16) |

| Generation Speed | Up to 36 tokens/second (Q4_0) | Up to 122 tokens/second (Q4KM) |

| Cost | More affordable | Significantly higher |

| Power Consumption | Energy efficient | High power consumption |

| Ease of Use | MacOS environment is user-friendly | Requires Windows or Linux setup |

Key Takeaways:

- The NVIDIA 4090 x2 dominates in token generation speed, particularly with larger models like Llama 3 70B. If you're working with massive models and require lightning-fast generation speeds, the NVIDIA 4090 x2 is the clear winner.

- The Apple M1 Pro provides a cost-effective option with respectable token generation speeds, but its performance might be limited for larger models. It excels with smaller models like Llama 2 7B, making it ideal for developers on a budget or with limited power resources.

- The choice of quantization plays a vital role in performance. Q4KM and Q8_0 are optimized for speed, while F16 offers slightly higher accuracy but may come with a performance penalty.

Practical Recommendations: LLMs & Hardware Selection

For developers working with smaller LLMs (like Llama 2 7B) or for tasks that prioritize energy efficiency:

- Apple M1 Pro: The M1 Pro provides a fantastic combination of performance, cost-effectiveness, and energy efficiency. It's a great choice for running smaller LLMs locally.

For developers working with large LLMs (like Llama 3 70B) or for tasks demanding maximum speed:

- NVIDIA 4090 x2: If you need the fastest possible token generation speeds for large-scale LLMs, the NVIDIA 4090 x2 configuration is the way to go. It offers a massive boost in performance, allowing for rapid processing and text generation.

Considerations for choosing between these two titans:

- Budget: The M1 Pro is significantly more affordable than the NVIDIA 4090 x2.

- Power consumption: The M1 Pro is far more energy-efficient, making it a better choice for developers working on laptops or in environments with limited power resources.

- Model size: The 4090 x2 is ideal for running larger LLMs, while the M1 Pro might struggle with larger models due to its limited RAM.

FAQ: LLMs, Hardware, and Token Generation

- What is quantization and how does it impact LLM performance?

Quantization is a technique used to reduce the size of a model by using lower precision data types. This can significantly boost performance because it involves fewer calculations and less memory usage. Imagine reducing a detailed photo to a lower-resolution image: you lose some quality but gain speed and efficiency.

- Why are generation speeds often slower than processing speeds?

Generating text requires the model to predict the next tokens, which is more computationally demanding than simply processing text. The model needs to consider context, language rules, and probabilities, leading to a slower pace. Think of it like a writer struggling to find the perfect word for their story: it takes more time and effort than just reading a pre-written story.

- What other factors besides hardware can affect LLM speed?

Several factors beyond hardware influence LLM speed:

- Model architecture: Different models have varying computational requirements.

- Model size: Larger models generally require more resources.

- Software optimization: Optimizing the software used to run the LLM can significantly improve performance.

Input length: Longer input sequences can increase processing time.

What are the best practices for optimizing LLM performance?

Model selection: Choose a model that's appropriate for your task and available hardware.

- Quantization: Experiment with different quantization levels to find the best balance between speed and accuracy.

- Software optimization: Utilize libraries optimized for LLMs and experiment with different frameworks for performance gains.

- Hardware considerations: Choose hardware that matches your needs and budget.

Keywords

Large language models, LLMs, Apple M1 Pro, NVIDIA 4090, token generation, speed, performance, benchmark, comparison, Llama 2, Llama 3, quantization, processing, generation, cost, power consumption, ease of use, practical recommendations, FAQ, software optimization, hardware considerations.