Apple M1 Pro (200 GB/s, 14 GPU Cores) vs. NVIDIA 4090 24GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is booming, with new models popping up every day. But the real challenge is running these models locally. Think about it like this: Imagine you're hosting a massive party for your LLM, but you need the right equipment. Do you have a cozy living room (Apple M1 Pro), or a massive warehouse (NVIDIA 4090)? Today, we're diving into the token generation speed showdown between two popular contenders – Apple's M1 Pro and NVIDIA's 4090 – and we're going to see who wins the "LLM party."

This article will unpack the advantages of each device for running LLMs, specifically looking at how fast they generate tokens. We'll analyze their strengths and weaknesses, and help you decide which device is best for your LLM needs. Don't worry, no coding background required – think of this as a fun and informative guide to understanding the differences in these silicon superstars!

Performance Analysis: M1 Pro vs. 4090 for Token Generation

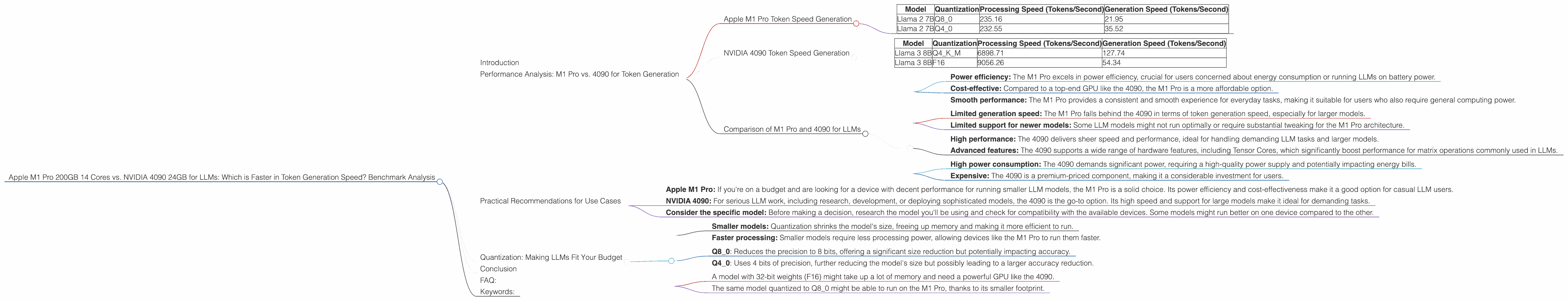

Apple M1 Pro Token Generation Speed

The Apple M1 Pro tested here, with its 14 GPU cores and 200 GB/s of memory bandwidth, is a solid choice for running LLMs. It's known for its power efficiency, making it a great option if you're looking for a balanced system without high power consumption.

Here's what we see in the benchmark data:

| Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 235.16 | 21.95 |
| Llama 2 7B | Q4_0 | 232.55 | 35.52 |

As you can see, the M1 Pro is a solid performer at processing tokens with both Q8_0 and Q4_0 quantization. With Q8_0, for example, it processes 235.16 tokens per second, which is quite impressive for a chip with an integrated GPU rather than a discrete graphics card.

However, when it comes to generating tokens, the M1 Pro cannot match a high-end discrete GPU. Its best result here is 35.52 tokens per second with Q4_0, which falls well behind the 4090's figures in the next section.

NVIDIA 4090 Token Generation Speed

Now, let's talk about the beast – the NVIDIA 4090. With its 24 GB of VRAM and dedicated GPU power, it's like a Ferrari in the world of LLM hardware. The 4090 is built for speed and performance, and it shows in the benchmark data.

Here's a breakdown of the 4090's performance with Llama 3 8B at two precision levels:

| Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 6898.71 | 127.74 |
| Llama 3 8B | F16 | 9056.26 | 54.34 |

The 4090's advantage is clear. It's a powerhouse for both processing and generating tokens. It handles Llama 3 8B with relative ease, processing over 6,800 tokens per second using Q4_K_M quantization. In generation it reaches 127.74 tokens per second with Q4_K_M, well ahead of the M1 Pro's best result of 35.52. Keep in mind that the two devices were benchmarked on different models (Llama 3 8B versus Llama 2 7B), so the comparison is approximate.
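To put the gap in numbers, the ratios can be worked out directly from the two benchmark tables. The following is a minimal Python sketch; the variable and function names are made up for illustration, and since the two devices were measured on different models, the ratios are only a rough guide.

```python
# Tokens/second copied from the 4-bit rows of the two benchmark tables.
# Names below are illustrative only, not part of any library.
M1_PRO_Q4 = {"processing": 232.55, "generation": 35.52}      # Llama 2 7B
RTX_4090_Q4 = {"processing": 6898.71, "generation": 127.74}  # Llama 3 8B


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


generation_ratio = speedup(RTX_4090_Q4["generation"], M1_PRO_Q4["generation"])
processing_ratio = speedup(RTX_4090_Q4["processing"], M1_PRO_Q4["processing"])

print(f"Generation: 4090 is about {generation_ratio:.1f}x the M1 Pro")
print(f"Processing: 4090 is about {processing_ratio:.1f}x the M1 Pro")
```

With these figures the gap works out to roughly 3.6x for generation and close to 30x for processing, so the 4090's lead is much larger when reading a prompt than when writing a reply.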

Comparison of M1 Pro and 4090 for LLMs

Strengths of M1 Pro:

- Power efficiency: The M1 Pro excels in power efficiency, crucial for users concerned about energy consumption or running LLMs on battery power.

- Cost-effective: Compared to a top-end GPU like the 4090, the M1 Pro is a more affordable option.

- Smooth performance: The M1 Pro provides a consistent and smooth experience for everyday tasks, making it suitable for users who also require general computing power.

Weaknesses of M1 Pro:

- Limited generation speed: The M1 Pro falls behind the 4090 in terms of token generation speed, especially for larger models.

- Limited support for newer models: Some LLM models might not run optimally or require substantial tweaking for the M1 Pro architecture.

Strengths of NVIDIA 4090:

- High performance: The 4090 delivers sheer speed and performance, ideal for handling demanding LLM tasks and larger models.

- Advanced features: The 4090 supports a wide range of hardware features, including Tensor Cores, which significantly boost performance for matrix operations commonly used in LLMs.

Weaknesses of NVIDIA 4090:

- High power consumption: The 4090 demands significant power, requiring a high-quality power supply and potentially impacting energy bills.

- Expensive: The 4090 is a premium-priced component, making it a considerable investment for users.

Practical Recommendations for Use Cases

For budget-conscious users:

- Apple M1 Pro: If you're on a budget and are looking for a device with decent performance for running smaller LLM models, the M1 Pro is a solid choice. Its power efficiency and cost-effectiveness make it a good option for casual LLM users.

For professional users and researchers:

- NVIDIA 4090: For serious LLM work, including research, development, or deploying sophisticated models, the 4090 is the go-to option. Its high speed and support for large models make it ideal for demanding tasks.

For users working with specific models:

- Consider the specific model: Before making a decision, research the model you'll be using and check for compatibility with the available devices. Some models might run better on one device compared to the other.

Quantization: Making LLMs Fit Your Budget

Imagine you're trying to fit a giant puzzle into a small box. It's too big! But with quantization, you can make the pieces smaller, allowing it to fit. It's the same with LLMs and their weights.

Quantization is a way to reduce the size of LLM models by reducing the precision of the numbers (weights) used to represent them. Think of it like using fewer decimals when measuring something – it makes the numbers smaller but still gives you a good approximation.

How Quantization Helps

- Smaller models: Quantization shrinks the model's size, freeing up memory and making it more efficient to run.

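- Faster generation: Generating each token means reading the model's weights from memory, so a smaller model means less data to move per token. In the M1 Pro results above, Q4_0 generates 35.52 tokens per second against 21.95 for Q8_0, while processing speed stays almost the same.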

Types of Quantization

- Q8_0: Reduces the precision to 8 bits, offering a significant size reduction but potentially impacting accuracy.

- Q4_0: Uses 4 bits of precision, further reducing the model's size but possibly leading to a larger accuracy reduction.
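The trade-off between bits and accuracy can be seen in a toy example. This is a simplified sketch, not how any particular library implements Q8_0 or Q4_0: it assumes one shared scale for the whole list, whereas real formats typically store a scale per small block of weights. The function names and sample weights are invented for illustration.

```python
def quantize(weights, bits):
    """Map floats to signed integers using one shared scale (toy symmetric scheme)."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs else 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Turn the stored integers back into approximate floats."""
    return [v * scale for v in q]


def round_trip_error(weights, bits):
    """Largest absolute difference after quantizing and restoring the weights."""
    q, scale = quantize(weights, bits)
    restored = dequantize(q, scale)
    return max(abs(a - b) for a, b in zip(weights, restored))


weights = [0.12, -0.53, 0.98, -0.07, 0.31]  # made-up sample values
for bits in (8, 4):
    print(f"{bits}-bit max error: {round_trip_error(weights, bits):.4f}")
```

Running it shows the 4-bit round trip losing noticeably more detail than the 8-bit one, which is the accuracy cost paid for the smaller size.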

Example:

- A model with 16-bit weights (F16) takes up a lot of memory and may need a GPU with plenty of VRAM, like the 4090.

- The same model quantized to Q8_0 might be able to run on the M1 Pro, thanks to its smaller footprint.
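A back-of-the-envelope size estimate makes the example concrete. This sketch rests on stated assumptions: a nominal 7 billion parameters, and roughly 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (the 8 or 4 bits per weight plus one 16-bit scale shared by each block of 32 weights, as in llama.cpp's format). It ignores file metadata and the extra memory needed for context.

```python
# Approximate bits stored per weight. The Q8_0 and Q4_0 values assume
# blocks of 32 weights that each carry one extra 16-bit scale.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

N_PARAMS = 7e9  # nominal "7B"; the exact parameter count differs slightly


def estimate_size_gb(n_params: float, fmt: str) -> float:
    """Estimate weight storage in gigabytes (10**9 bytes), ignoring metadata."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


for fmt in BITS_PER_WEIGHT:
    print(f"{fmt}: ~{estimate_size_gb(N_PARAMS, fmt):.1f} GB")
```

Under these assumptions a 7B model needs roughly 14 GB in F16, about 7.4 GB in Q8_0, and about 3.9 GB in Q4_0.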

Choosing the Right Quantization Level

The choice of quantization level depends on your needs and the specific model. Lower precision (Q8_0, Q4_0) might be suitable for tasks where slight accuracy drops are acceptable, while higher precision (F16) is generally favored for tasks requiring high accuracy.

Conclusion

The choice between the Apple M1 Pro and NVIDIA 4090 for running LLMs depends on specific needs and considerations. While the M1 Pro excels in power efficiency and cost-effectiveness, the NVIDIA 4090 reigns supreme in speed and performance, particularly for larger models. Ultimately, the right device for you will depend on your LLM project and your budget!

FAQ:

Q. What is token generation speed?

A. Token generation speed refers to how fast a device can generate new tokens, which are essentially the building blocks of text in LLMs. The higher the token generation speed, the faster the model can produce text outputs.
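For the curious, the measurement itself is simple: count the tokens a model produces and divide by the time it took. The sketch below is illustrative only; `fake_model` is a hypothetical stand-in that sleeps between tokens, and any real model that yields tokens one at a time could be passed in its place.

```python
import time
from typing import Callable, Iterable, Iterator


def tokens_per_second(
    token_stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Count tokens from a stream and divide by the elapsed wall-clock time."""
    start = clock()
    count = sum(1 for _ in token_stream)
    elapsed = clock() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_model(n_tokens: int, delay_s: float = 0.01) -> Iterator[str]:
    """Hypothetical stand-in for a real model: yields one token per `delay_s`."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


print(f"{tokens_per_second(fake_model(50)):.1f} tokens/second")
```

The `clock` parameter is there only so the timing source can be swapped out, for example when checking the arithmetic with fixed timestamps.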

Q. Quantization: friend or foe?

A. Quantization is like a double-edged sword. It helps make LLMs more efficient but can slightly impact accuracy. The trade-off is generally worth it, especially for smaller devices like the M1 Pro.

Q. What are some use cases for LLMs?

A. LLMs have a wide range of applications, including text generation (e.g., writing articles, poems), translation, code completion, chatbot development, and more!

Q. What are the best LLMs for different tasks?

A. The "best" LLM depends on the specific task. For example, some models excel at code completion, while others are better at creative writing. It's always best to research and compare models for your specific objective.

Keywords:

Apple M1 Pro, NVIDIA 4090, LLM, token generation speed, benchmark analysis, quantization, LLMs, AI, machine learning, deep learning, text generation, language models