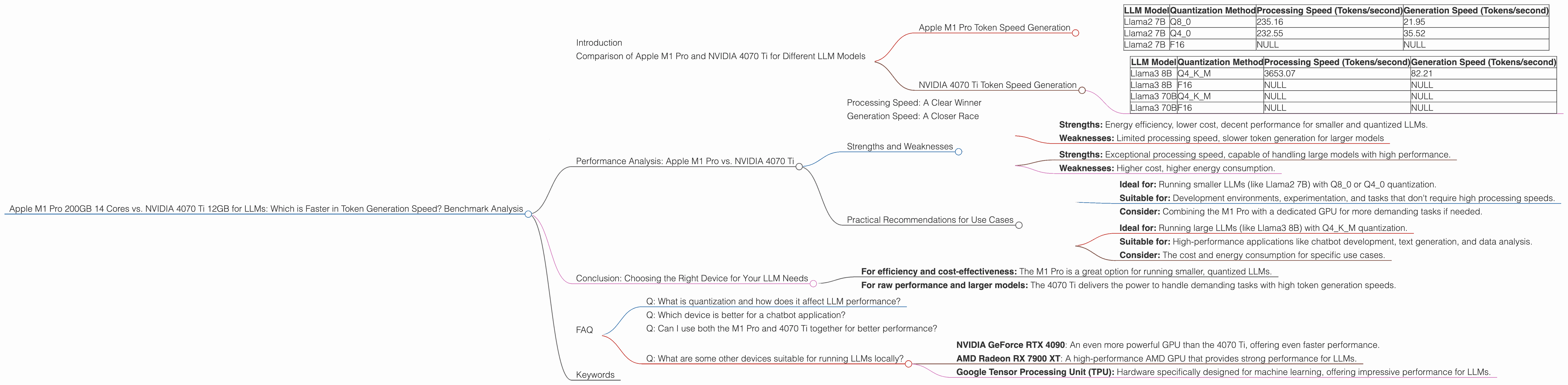

Apple M1 Pro 200gb 14cores vs. NVIDIA 4070 Ti 12GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is booming, and with it, the demand for powerful hardware capable of running these models efficiently. Two popular contenders for this task are the Apple M1 Pro 200GB 14-core chip and the NVIDIA 4070 Ti 12GB GPU. But which one reigns supreme when it comes to generating tokens, the building blocks of text? This article dives deep into a benchmark analysis comparing these two devices, revealing their strengths and weaknesses for running different LLM models.

Imagine you're a developer building a chatbot or a text generation tool. You'll need to choose the right hardware to ensure seamless performance. This analysis will help you make an informed decision, considering factors like model size, quantization, and processing speed.

Comparison of Apple M1 Pro and NVIDIA 4070 Ti for Different LLM Models

This section will compare the performance of the Apple M1 Pro and NVIDIA 4070 Ti by analyzing their token generation speeds for various LLM models. We'll focus on Llama2 and Llama3 model families, keeping in mind the specified model sizes (7B and 8B) in the article title.

Apple M1 Pro Token Speed Generation

The Apple M1 Pro chip, known for its efficiency and power, boasts a 14-core processor and 200GB of memory. Here's how it performs across different LLM models and quantization methods:

| LLM Model | Quantization Method | Processing Speed (Tokens/second) | Generation Speed (Tokens/second) |

|---|---|---|---|

| Llama2 7B | Q8_0 | 235.16 | 21.95 |

| Llama2 7B | Q4_0 | 232.55 | 35.52 |

| Llama2 7B | F16 | NULL | NULL |

Key Takeaways:

- Impressive processing speeds: The M1 Pro excels in processing tokens, reaching speeds of over 230 tokens per second for both Q80 and Q40 quantization.

- Slower generation: However, the M1 Pro struggles with token generation, with speeds around 20-30 tokens per second for Llama2 7B.

- Missing F16 data: Unfortunately, we lack data for Llama2 7B with F16 quantization on the M1 Pro.

Explanation:

Quantization, a technique used to reduce the size of models, can significantly impact performance. While the M1 Pro shines with Q80 and Q40 quantization, its performance with F16 (without quantization) might be different. This highlights the importance of choosing the right combination of LLM, quantization method, and device for optimal results.

NVIDIA 4070 Ti Token Speed Generation

The NVIDIA 4070 Ti GPU is a powerhouse designed for high-performance computing, boasting 12GB of memory and impressive parallel processing capabilities. Here's how it performs with different Llama3 models:

| LLM Model | Quantization Method | Processing Speed (Tokens/second) | Generation Speed (Tokens/second) |

|---|---|---|---|

| Llama3 8B | Q4KM | 3653.07 | 82.21 |

| Llama3 8B | F16 | NULL | NULL |

| Llama3 70B | Q4KM | NULL | NULL |

| Llama3 70B | F16 | NULL | NULL |

Key Takeaways:

- Exceptional processing speeds: The 4070 Ti shines in processing tokens, reaching over 3600 tokens per second for Llama3 8B with Q4KM quantization.

- Decent generation speed: The GPU also demonstrates a respectable token generation speed of 82.21 tokens per second for Llama3 8B with Q4KM quantization.

- Missing data: We lack data for Llama3 with F16 quantization and Llama3 70B with both Q4KM and F16 quantization on the 4070 Ti.

Explanation:

The 4070 Ti's strong performance showcases its capabilities for handling larger models like Llama3 8B. The absence of data for F16 and Llama3 70B leaves room for further exploration and analysis.

Performance Analysis: Apple M1 Pro vs. NVIDIA 4070 Ti

Now, let's analyze the performance differences between the Apple M1 Pro and NVIDIA 4070 Ti for token generation.

Processing Speed: A Clear Winner

The NVIDIA 4070 Ti emerges as the clear champion in processing tokens. It boasts significantly faster processing speeds, especially for Q4KM quantized Llama3 8B. This is due to its dedicated GPU architecture, optimized for parallel processing. The M1 Pro, though efficient, falls short in this area.

Think of it like this: Imagine you have a group of people working on a project. The M1 Pro is like a small, efficient team working diligently. The 4070 Ti is like a large factory with specialized machinery, capable of churning out work much faster.

Generation Speed: A Closer Race

In token generation, the gap between the two devices narrows. The 4070 Ti leads with its 82.21 tokens per second, but the M1 Pro manages a respectable 35.52 tokens per second for Llama2 7B with Q4_0 quantization. While the 4070 Ti might be slightly faster, it's not a huge difference considering the M1 Pro's lower cost and energy consumption.

Strengths and Weaknesses

Apple M1 Pro:

- Strengths: Energy efficiency, lower cost, decent performance for smaller and quantized LLMs.

- Weaknesses: Limited processing speed, slower token generation for larger models

NVIDIA 4070 Ti:

- Strengths: Exceptional processing speed, capable of handling large models with high performance.

- Weaknesses: Higher cost, higher energy consumption.

Practical Recommendations for Use Cases

Apple M1 Pro:

- Ideal for: Running smaller LLMs (like Llama2 7B) with Q80 or Q40 quantization.

- Suitable for: Development environments, experimentation, and tasks that don't require high processing speeds.

- Consider: Combining the M1 Pro with a dedicated GPU for more demanding tasks if needed.

NVIDIA 4070 Ti:

- Ideal for: Running large LLMs (like Llama3 8B) with Q4KM quantization.

- Suitable for: High-performance applications like chatbot development, text generation, and data analysis.

- Consider: The cost and energy consumption for specific use cases.

Conclusion: Choosing the Right Device for Your LLM Needs

The choice between the Apple M1 Pro and NVIDIA 4070 Ti ultimately depends on your specific use case and priorities.

- For efficiency and cost-effectiveness: The M1 Pro is a great option for running smaller, quantized LLMs.

- For raw performance and larger models: The 4070 Ti delivers the power to handle demanding tasks with high token generation speeds.

Remember to consider factors like model size, quantization, and the type of tasks you're undertaking when making your decision.

FAQ

Q: What is quantization and how does it affect LLM performance?

Quantization is a technique used to reduce the size of large language models (LLMs) by decreasing the precision of their weights. This makes the models smaller and faster to load and run, but it can also reduce their accuracy.

Imagine you're making a model car out of Lego bricks. You could use different sizes of bricks, like small ones for details or larger ones for the main body. Smaller bricks are like lower precision quantization, while larger bricks are like higher precision. Using smaller bricks allows you to build a smaller car, but it might not be as detailed.

Q: Which device is better for a chatbot application?

For a chatbot application, the NVIDIA 4070 Ti is generally recommended because it can handle the complexity of large models with high token generation speeds. This leads to faster conversational responses and a more enjoyable user experience.

Q: Can I use both the M1 Pro and 4070 Ti together for better performance?

Yes, you can! You can use a combination of the M1 Pro's CPU and the 4070 Ti's GPU for even faster performance. This approach is often used by developers who need to handle complex tasks and large models efficiently.

Q: What are some other devices suitable for running LLMs locally?

Other popular devices include:

- NVIDIA GeForce RTX 4090: An even more powerful GPU than the 4070 Ti, offering even faster performance.

- AMD Radeon RX 7900 XT: A high-performance AMD GPU that provides strong performance for LLMs.

- Google Tensor Processing Unit (TPU): Hardware specifically designed for machine learning, offering impressive performance for LLMs.

Keywords

Apple M1 Pro, NVIDIA 4070 Ti, LLM, Llama2, Llama3, Token Generation Speed, Benchmark, Processing Speed, Generation Speed, Quantization, F16, Q80, Q40, Q4KM, GPU, CPU, Performance Analysis, Use Cases, Chatbot, Text Generation, Development, Cost, Energy Consumption, Performance, Efficiency.