Apple M1 Pro (200 GB/s, 14-core GPU) vs. Apple M2 Ultra (800 GB/s, 60-core GPU) for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, offering exciting possibilities for natural language processing tasks. However, running LLMs locally requires powerful hardware capable of handling the demanding computations involved. Choosing the right device can significantly impact performance, especially for token generation speed, which directly affects the fluidity and responsiveness of your LLM applications.

This article delves into a benchmark analysis comparing two potent Apple chips: the M1 Pro with 200 GB/s of memory bandwidth and a 14-core GPU, and the M2 Ultra with 800 GB/s of memory bandwidth and a 60-core GPU. We'll focus on their token generation speed for various LLMs, exploring their strengths and weaknesses to help you make an informed decision.

Understanding Token Generation Speed

Token generation speed, usually measured in tokens per second, refers to how quickly an LLM generates new tokens (the basic units of text, often a word or part of a word) after it has processed the input prompt. Higher token generation speed translates to faster response times and more efficient LLM usage.
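To make tokens per second more tangible, the Python sketch below converts throughput into average per-token latency and into the time needed for a complete reply. The 500-token reply length is an arbitrary assumption, prompt processing time is ignored, and the throughput values are the Llama 2 7B Q4_0 results from the benchmark tables in this article.

```python
def per_token_latency_ms(tokens_per_second: float) -> float:
    """Average time to generate one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def reply_time_s(tokens_per_second: float, reply_tokens: int) -> float:
    """Time to generate a reply of `reply_tokens` tokens (prompt processing not included)."""
    return reply_tokens / tokens_per_second


# Llama 2 7B at Q4_0, token generation speeds from the benchmark tables
for chip, tps in {"M1 Pro": 35.52, "M2 Ultra": 88.64}.items():
    print(f"{chip}: {per_token_latency_ms(tps):.1f} ms/token, "
          f"{reply_time_s(tps, 500):.1f} s for a 500-token reply")
# M1 Pro: 28.2 ms/token, 14.1 s for a 500-token reply
# M2 Ultra: 11.3 ms/token, 5.6 s for a 500-token reply
```

In other words, the same 500-token answer arrives in roughly 14 seconds on the M1 Pro and under 6 seconds on the M2 Ultra.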

Comparison of Apple M1 Pro and M2 Ultra for LLM Token Generation

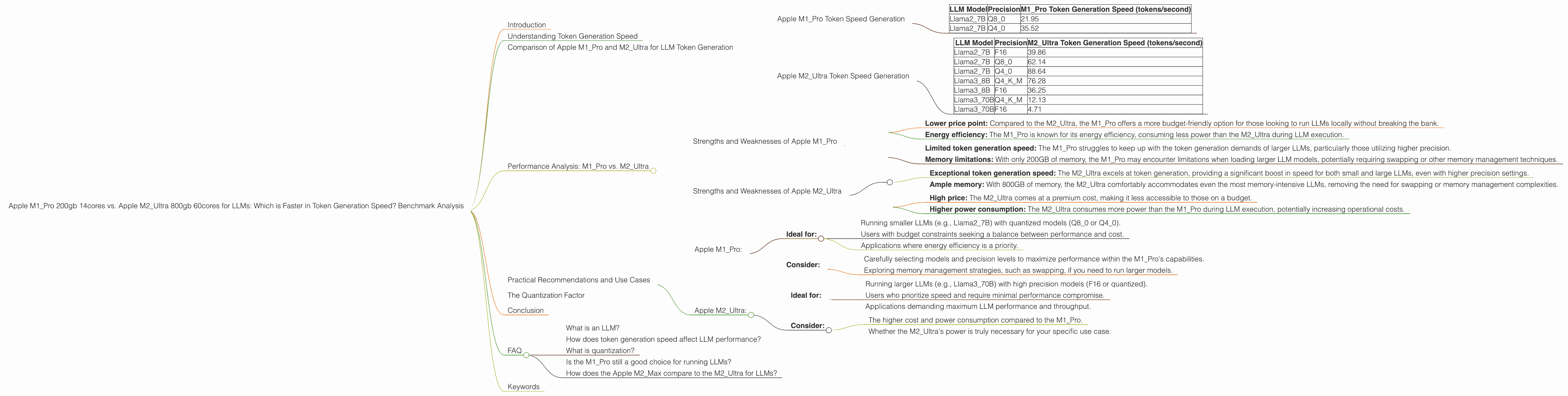

Apple M1 Pro Token Generation Speed

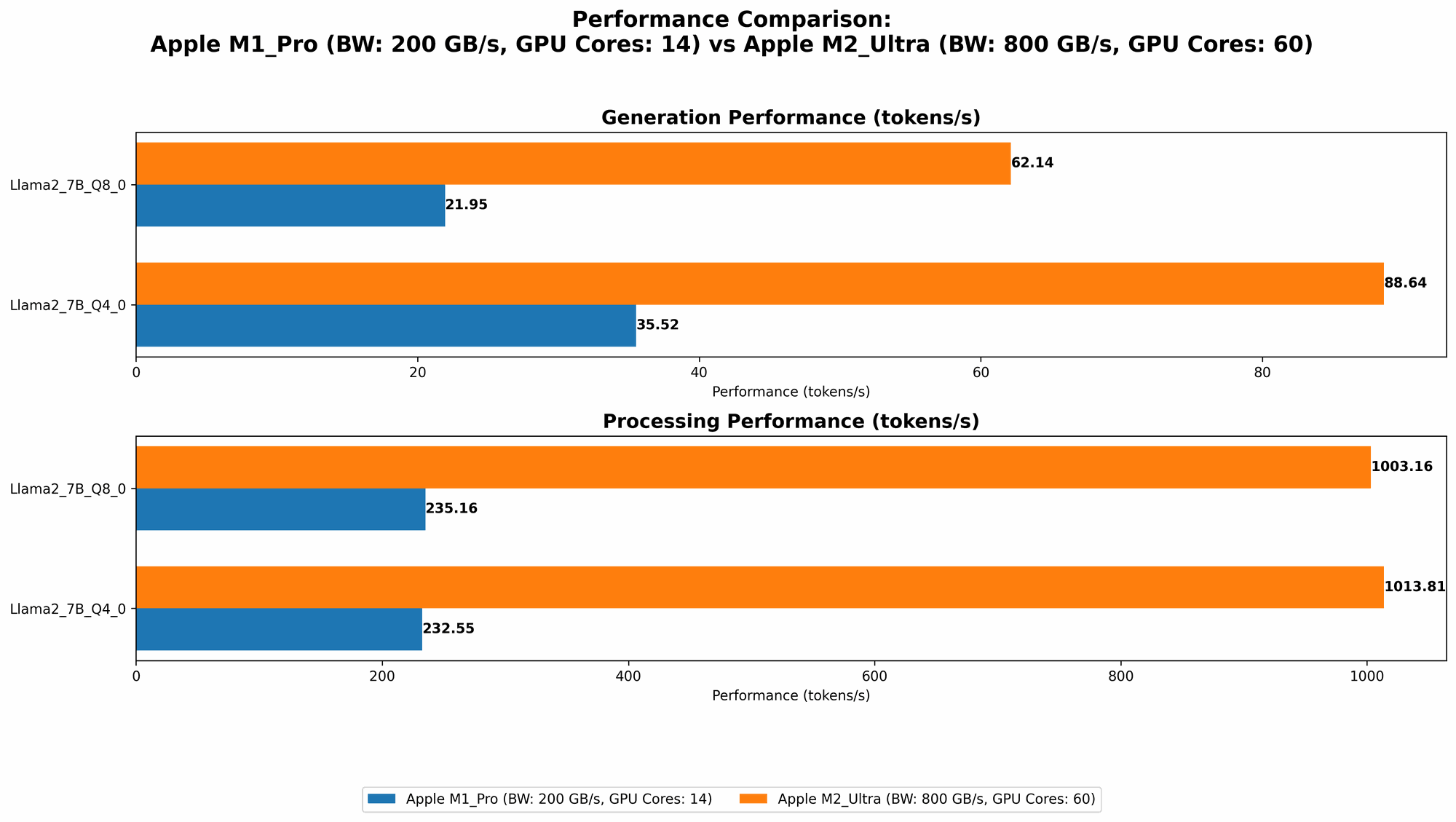

The Apple M1 Pro (200 GB/s memory bandwidth, 14-core GPU) is a solid performer for running LLMs. We'll analyze its token generation speed for Llama 2 7B at different quantization levels.

Important Note: Data for Llama 2 7B at F16 precision is not available for the Apple M1 Pro. The following table only includes data for Q8_0 and Q4_0 quantization.

| LLM Model | Precision | M1 Pro Token Generation Speed (tokens/second) |
|---|---|---|
| Llama 2 7B | Q8_0 | 21.95 |
| Llama 2 7B | Q4_0 | 35.52 |

These figures are well below the M2 Ultra's results for the same model (62.14 tokens/second at Q8_0 and 88.64 at Q4_0), and the M1 Pro falls further behind in relative terms at Q8_0 than at Q4_0. This is expected, given the M1 Pro's smaller GPU (14 cores vs. 60) and one quarter of the memory bandwidth (200 GB/s vs. 800 GB/s).

Apple M2 Ultra Token Generation Speed

The Apple M2 Ultra (800 GB/s memory bandwidth, 60-core GPU) is a powerhouse for running demanding LLMs. Let's examine its token generation speed for Llama 2 7B and Llama 3 (8B and 70B), both at F16 and in quantized versions (Q8_0, Q4_0, Q4_K_M).

| LLM Model | Precision | M2 Ultra Token Generation Speed (tokens/second) |
|---|---|---|
| Llama 2 7B | F16 | 39.86 |
| Llama 2 7B | Q8_0 | 62.14 |
| Llama 2 7B | Q4_0 | 88.64 |
| Llama 3 8B | Q4_K_M | 76.28 |
| Llama 3 8B | F16 | 36.25 |
| Llama 3 70B | Q4_K_M | 12.13 |
| Llama 3 70B | F16 | 4.71 |

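The M2 Ultra delivers fast token generation for both smaller and larger LLMs. Where both chips were tested (Llama 2 7B at Q8_0 and Q4_0), it is about 2.8 times faster than the M1 Pro at Q8_0 and 2.5 times faster at Q4_0, reflecting its larger GPU and higher memory bandwidth, although the gain is smaller than the 4x difference in bandwidth alone would suggest.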
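The speedup for the configurations measured on both chips can be computed directly from the two tables. The short Python sketch below does that arithmetic and, for comparison, the ratio of the chips' nominal memory bandwidths (200 GB/s and 800 GB/s).

```python
# Llama 2 7B token generation speeds (tokens/second), copied from the tables
m1_pro = {"Q8_0": 21.95, "Q4_0": 35.52}
m2_ultra = {"F16": 39.86, "Q8_0": 62.14, "Q4_0": 88.64}

# Ratio of nominal memory bandwidths (800 GB/s vs. 200 GB/s)
bandwidth_ratio = 800 / 200

# Only compare precisions measured on both chips (there is no F16 result for the M1 Pro)
speedups = {q: m2_ultra[q] / m1_pro[q] for q in m1_pro if q in m2_ultra}

for q, s in speedups.items():
    print(f"{q}: M2 Ultra is {s:.2f}x faster (bandwidth ratio {bandwidth_ratio:.0f}x)")
# Q8_0: M2 Ultra is 2.83x faster (bandwidth ratio 4x)
# Q4_0: M2 Ultra is 2.50x faster (bandwidth ratio 4x)
```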

Performance Analysis: M1 Pro vs. M2 Ultra

Strengths and Weaknesses of Apple M1 Pro

Strengths:

- Lower price point: Compared to the M2 Ultra, the M1 Pro offers a more budget-friendly option for those looking to run LLMs locally without breaking the bank.

- Energy efficiency: The M1 Pro is known for its energy efficiency, consuming less power than the M2 Ultra during LLM execution.

Weaknesses:

- Limited token generation speed: The M1 Pro is markedly slower than the M2 Ultra even on Llama 2 7B, and it slows further at higher precision (21.95 tokens/second at Q8_0 vs. 35.52 at Q4_0). No results for larger models are available for it in this benchmark.

- Memory limitations: The 200 GB/s figure is memory bandwidth, not capacity. The M1 Pro supports at most 32 GB of unified memory, which rules out large models such as Llama 3 70B even at 4-bit quantization; falling back on swap would slow token generation drastically.
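Whether a model fits comes down to simple arithmetic: parameter count times bits per weight. The Python sketch below is a rough check under stated assumptions: parameter counts are nominal (7 and 70 billion), bits per weight are 16 for F16, 8.5 for Q8_0 and 4.5 for Q4_0 (llama.cpp's block formats store one 16-bit scale per 32 weights), and the limits are each chip's maximum memory configuration (32 GB for the M1 Pro, 192 GB for the M2 Ultra). It ignores the KV cache, activations and the operating system's own share of memory, so a model that only just fits should be treated as not fitting.

```python
# Bits stored per weight, including block scales (assumed llama.cpp Q8_0/Q4_0 layouts)
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Maximum unified memory configuration of each chip, in GB
MAX_MEMORY_GB = {"M1 Pro": 32, "M2 Ultra": 192}


def weights_gb(params_billion: float, precision: str) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[precision] / 8


def fits(params_billion: float, precision: str, chip: str) -> bool:
    """Rough check: the weights alone must be smaller than the chip's maximum memory."""
    return weights_gb(params_billion, precision) < MAX_MEMORY_GB[chip]


for params in (7, 70):  # nominal sizes, e.g. Llama 2 7B and Llama 3 70B
    for precision in BITS_PER_WEIGHT:
        verdicts = ", ".join(
            f"{chip}: {'fits' if fits(params, precision, chip) else 'too large'}"
            for chip in MAX_MEMORY_GB
        )
        print(f"{params}B {precision}: ~{weights_gb(params, precision):.1f} GB ({verdicts})")
```

By this estimate a 70B model does not fit in 32 GB even at Q4_0 (about 39 GB of weights), while 192 GB leaves room for the F16 version (about 140 GB).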

Strengths and Weaknesses of Apple M2 Ultra

Strengths:

- Exceptional token generation speed: The M2 Ultra excels at token generation, providing a significant boost in speed for both small and large LLMs, even with higher precision settings.

- Ample memory: The 800 GB/s figure is memory bandwidth; the M2 Ultra can be configured with up to 192 GB of unified memory, enough to hold Llama 3 70B even at F16 (roughly 140 GB of weights), as the benchmark table shows, without resorting to swapping.

Weaknesses:

- High price: The M2 Ultra comes at a premium cost, making it less accessible to those on a budget.

- Higher power consumption: The M2 Ultra consumes more power than the M1 Pro during LLM execution, potentially increasing operational costs.

Practical Recommendations and Use Cases

Apple M1 Pro:

- Ideal for:
  - Running smaller LLMs (e.g., Llama 2 7B) quantized to Q8_0 or Q4_0.
  - Users with budget constraints seeking a balance between performance and cost.
  - Applications where energy efficiency is a priority.
- Consider:
  - Carefully selecting models and precision levels to maximize performance within the M1 Pro's capabilities.
  - Choosing more aggressive quantization if you need to run larger models; relying on swap is possible but slows token generation drastically.

Apple M2 Ultra:

- Ideal for:
  - Running larger LLMs (e.g., Llama 3 70B), either quantized or at F16 precision.
  - Users who prioritize speed and require minimal performance compromise.
  - Applications demanding maximum LLM performance and throughput.
- Consider:
  - The higher cost and power consumption compared to the M1 Pro.
  - Whether the M2 Ultra's capabilities are truly necessary for your specific use case.

The Quantization Factor

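Quantization is a technique used to reduce the memory footprint and computational requirements of LLMs. Instead of storing weights as 32-bit floating-point numbers (F32), models are stored at lower precision: 16-bit half precision (F16), or quantized formats such as 8-bit (Q8_0) and roughly 4-bit (Q4_0, Q4_K_M). Because generating each token requires reading the model's weights from memory, smaller weights mean less data to move per token, which is why token generation speed depends so strongly on the quantization level: on the M2 Ultra, Llama 2 7B runs at 39.86 tokens/second in F16 but 88.64 tokens/second at Q4_0.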
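This relationship can be turned into a rough, bandwidth-bound upper estimate: if every weight is read once per generated token, tokens per second cannot exceed memory bandwidth divided by the size of the weights. The Python sketch below applies this to Llama 2 7B, assuming about 6.74 billion parameters, the bits per weight of llama.cpp's F16, Q8_0 and Q4_0 formats (16, 8.5 and 4.5), and the chips' nominal bandwidths. It ignores compute limits, the KV cache and other overheads, so it is a ceiling, not a prediction.

```python
# Assumptions: nominal bandwidth (GB/s), bits per weight including block scales,
# and an approximate parameter count for Llama 2 7B
BANDWIDTH_GBPS = {"M1 Pro": 200, "M2 Ultra": 800}
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
LLAMA2_7B_PARAMS = 6.74e9


def ceiling_tokens_per_s(chip: str, precision: str) -> float:
    """Bandwidth-bound upper estimate: all weights are read once per generated token."""
    model_bytes = LLAMA2_7B_PARAMS * BITS_PER_WEIGHT[precision] / 8
    return BANDWIDTH_GBPS[chip] * 1e9 / model_bytes


# Measured Llama 2 7B results from the tables (no F16 result for the M1 Pro)
MEASURED = {
    ("M1 Pro", "Q8_0"): 21.95, ("M1 Pro", "Q4_0"): 35.52,
    ("M2 Ultra", "F16"): 39.86, ("M2 Ultra", "Q8_0"): 62.14, ("M2 Ultra", "Q4_0"): 88.64,
}

for (chip, precision), measured in MEASURED.items():
    ceiling = ceiling_tokens_per_s(chip, precision)
    print(f"{chip} {precision}: measured {measured} vs. ceiling ~{ceiling:.0f} tokens/s "
          f"({measured / ceiling:.0%} of the bound)")
```

In this rough model every measured figure stays below its ceiling, and the M2 Ultra sits further below its ceiling than the M1 Pro, which is consistent with its speedup being smaller than the 4x bandwidth ratio.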

Think of it this way: Imagine a heavy suitcase filled with clothes. You could carry the entire suitcase, or you could compress the clothes (quantization) and pack them into a much smaller bag. The smaller bag takes up less space (less memory) and is quicker to move around (less data to read for every generated token, and therefore faster generation).
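Concretely, formats like Q4_0 compress weights block by block. The Python sketch below illustrates the idea: weights are split into blocks of 32, each block keeps one scale, and each weight becomes a 4-bit integer. It is a simplified illustration using symmetric absmax scaling (an assumption chosen for clarity), not llama.cpp's exact Q4_0 routine; the scale is held as a Python float here, while the storage count assumes it would be stored as a 16-bit value, as Q4_0 does. The weights are synthetic.

```python
import random

BLOCK_SIZE = 32  # weights per block, as in llama.cpp's Q4_0 and Q8_0 formats


def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    """Symmetric absmax quantization: one scale plus 4-bit signed integers in [-8, 7]."""
    amax = max(abs(x) for x in block)
    scale = amax / 7 if amax > 0 else 1.0  # the largest magnitude maps to +/-7
    q = [max(-8, min(7, round(x / scale))) for x in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Approximate reconstruction of the original weights."""
    return [scale * v for v in q]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]  # synthetic weights
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)

max_error = max(abs(w - r) for w, r in zip(weights, restored))
# Storage per block: 32 x 4-bit integers + one 16-bit scale = 144 bits
bits_per_weight = (BLOCK_SIZE * 4 + 16) / BLOCK_SIZE
print(f"max error {max_error:.5f}; {bits_per_weight} bits per weight vs. 16 for F16")
```

Each weight is off by at most half a quantization step, and the block shrinks to 4.5 bits per weight, about 3.6 times smaller than F16.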

Conclusion

The Apple M1 Pro and M2 Ultra offer distinct advantages for running LLMs locally. The M1 Pro is a budget-friendly option with decent performance for smaller, quantized models. The M2 Ultra, with its larger GPU, four times the memory bandwidth and far greater memory capacity, reigns supreme for larger LLMs and for workloads demanding maximum token generation speed. Ultimately, the optimal choice depends on your specific requirements, budget, and use case.

FAQ

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence that can process and generate human-like text. Think of it like a sophisticated text-based chatbot that can understand, translate, summarize, and even write creative content.

How does token generation speed affect LLM performance?

Token generation speed determines how quickly an LLM can process text and generate new text. A faster token generation speed translates to a more responsive and efficient LLM.

What is quantization?

Quantization is a technique used to reduce the size and complexity of LLMs. It involves representing the model's weights using smaller data types, which allows for faster computation and less memory usage.

Is the M1_Pro still a good choice for running LLMs?

Yes, the M1 Pro remains a viable option for running smaller LLMs, especially with quantized models. Its lower price point and energy efficiency make it attractive for users on a budget.

How does the Apple M2 Max compare to the M2 Ultra for LLMs?

The M2 Max is a powerful chip, but the M2 Ultra, built from two M2 Max dies, offers twice the memory bandwidth (800 GB/s vs. 400 GB/s) and up to twice the GPU cores and unified memory (192 GB vs. 96 GB), making it the better choice for running the most demanding LLMs.

Keywords

LLMs, Large Language Models, token generation speed, Apple M1 Pro, Apple M2 Ultra, benchmark analysis, performance comparison, Llama2, Llama3, quantization, F16, Q8_0, Q4_0, Q4_K_M, memory bandwidth, core count, practical recommendations, use cases, budget, energy efficiency, power consumption, AI, Natural Language Processing, NLP, developer, geek, local LLMs, hardware, software, performance, speed, efficiency, responsiveness.