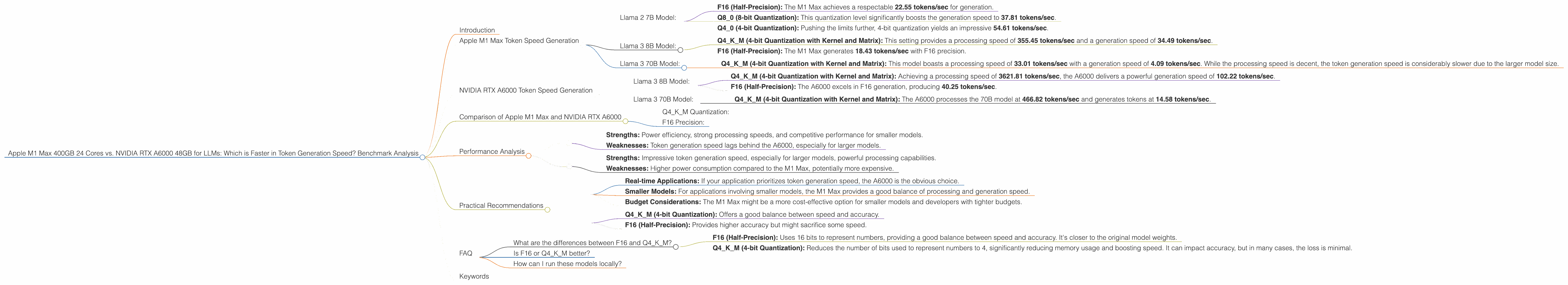

Apple M1 Max (400 GB/s, 24-core GPU) vs. NVIDIA RTX A6000 48GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

In the rapidly evolving landscape of large language models (LLMs), efficient execution is paramount. Whether you're a developer fine-tuning a model or a researcher exploring new frontiers, understanding how different hardware setups perform is crucial. This article compares two popular devices: the Apple M1 Max (the variant with 400 GB/s memory bandwidth and a 24-core GPU) and the NVIDIA RTX A6000 48GB GPU, focusing on their token generation speed for several Llama models.

Imagine LLMs as incredibly intelligent robots who can converse, write poems, and even translate languages. These robots are fed with massive amounts of digital text, allowing them to learn and generate human-like responses. But just like a power plant fuels a city, a powerful computer is needed to run these robots effectively.

Think of token generation speed as the rate at which the robot can string together tokens (words or pieces of words) to form sentences. We'll compare how quickly these two devices generate tokens for different LLMs. Let's dive in and see which one reigns supreme!

Apple M1 Max Token Generation Speed

Apple's M1 Max is known for its power efficiency and strong performance across a range of tasks. Let's see how the variant with 400 GB/s memory bandwidth and a 24-core GPU handles token generation for different LLMs:

Llama 2 7B Model:

- F16 (Half-Precision): The M1 Max achieves a respectable 22.55 tokens/sec for generation.

- Q8_0 (8-bit Quantization): This quantization level significantly boosts the generation speed to 37.81 tokens/sec.

- Q4_0 (4-bit Quantization): Pushing the limits further, 4-bit quantization yields an impressive 54.61 tokens/sec.

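Note: Prompt processing is much faster than generation. With F16, the M1 Max processes Llama 2 7B prompts at 453.03 tokens/sec, compared with 22.55 tokens/sec for generation. Prompt tokens can be processed in parallel batches, whereas generation produces one token at a time and is typically limited by memory bandwidth. Generation speed is therefore the key metric for real-time interactions.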
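To make the bandwidth argument concrete, here is a rough back-of-the-envelope sketch. It assumes that generating each token requires reading all model weights from memory once, and that Llama 2 7B has about 6.74 billion parameters stored at 2 bytes each in F16. Both are simplifying assumptions, not figures from the benchmark itself.

```python
# Rough upper bound on generation speed when decoding is memory-bandwidth bound.
# Assumption: each generated token reads every weight from memory exactly once.

def max_tokens_per_sec(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on tokens/sec: memory bandwidth divided by weight size."""
    return bandwidth_gb_s / weights_gb

# Assumed weight size: ~6.74e9 parameters * 2 bytes (F16), in GB.
llama2_7b_f16_gb = 6.74e9 * 2 / 1e9

m1_max_bandwidth_gb_s = 400.0  # memory bandwidth of the M1 Max
measured = 22.55               # F16 generation speed reported above

bound = max_tokens_per_sec(m1_max_bandwidth_gb_s, llama2_7b_f16_gb)
print(f"upper bound: {bound:.1f} tokens/sec")
print(f"measured:    {measured} tokens/sec ({measured / bound:.0%} of the bound)")
```

Under these assumptions the measured figure sits below the bound, which is consistent with generation being limited by how fast weights can be streamed from memory rather than by raw compute.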

Llama 3 8B Model:

- Q4_K_M (4-bit k-quant, medium variant): This setting reaches a prompt processing speed of 355.45 tokens/sec and a generation speed of 34.49 tokens/sec.

- F16 (Half-Precision): The M1 Max generates 18.43 tokens/sec with F16 precision.

Llama 3 70B Model:

- Q4_K_M (4-bit k-quant, medium variant): The M1 Max processes prompts at 33.01 tokens/sec and generates 4.09 tokens/sec. Both figures are roughly a tenth of the 8B results, which reflects the much larger model size.

Note: No data is available for F16 processing or generation for the Llama 3 70B model on this M1 Max. At 16 bits per weight, a 70B model needs roughly 140 GB for the weights alone, more than the 64 GB maximum unified memory Apple offered on the M1 Max.

NVIDIA RTX A6000 Token Generation Speed

The NVIDIA RTX A6000 is a powerful GPU designed for high-performance computing, making it a popular choice for LLM training and inference. Let's examine its token generation performance:

Llama 3 8B Model:

- Q4_K_M (4-bit k-quant, medium variant): The A6000 reaches a prompt processing speed of 3621.81 tokens/sec and a generation speed of 102.22 tokens/sec.

- F16 (Half-Precision): The A6000 generates 40.25 tokens/sec with F16 precision.

Llama 3 70B Model:

- Q4_K_M (4-bit k-quant, medium variant): The A6000 processes prompts for the 70B model at 466.82 tokens/sec and generates 14.58 tokens/sec.

Note: No data is available for F16 processing or generation for the Llama 3 70B model on the RTX A6000. As with the M1 Max, the likely reason is memory: roughly 140 GB of F16 weights cannot fit in the GPU's 48 GB.
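The memory limits behind the missing F16 results can be estimated with simple arithmetic. The sketch below is only an approximation: it uses nominal parameter counts (8B and 70B), ignores the KV cache and runtime overhead, and assumes about 4.85 bits per weight for the 4-bit k-quant, which is an approximate figure. The 64 GB entry is the largest M1 Max configuration Apple sold, not necessarily the benchmarked machine.

```python
# Estimate weight memory for a model and check whether it fits on a device.
# Ignores KV cache, activations, and runtime overhead (assumption).

def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # approximate average, assumed for illustration
}

CAPACITY_GB = {
    "RTX A6000": 48.0,
    "M1 Max (max config)": 64.0,  # largest unified-memory option, assumed here
}

def fits(params_billion: float, quant: str, device: str) -> bool:
    """True if the weights alone are smaller than the device memory."""
    return weights_gb(params_billion, BITS_PER_WEIGHT[quant]) < CAPACITY_GB[device]

for params in (8, 70):
    for quant in BITS_PER_WEIGHT:
        size = weights_gb(params, BITS_PER_WEIGHT[quant])
        status = ", ".join(
            f"{dev}: {'fits' if fits(params, quant, dev) else 'too large'}"
            for dev in CAPACITY_GB
        )
        print(f"{params}B {quant}: {size:.1f} GB ({status})")
```

By this estimate the 70B model in F16 exceeds both devices, while the 4-bit version fits within 48 GB with little room to spare.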

Comparison of Apple M1 Max and NVIDIA RTX A6000

Q4_K_M Quantization:

With Q4_K_M, the A6000 outperforms the M1 Max in both prompt processing and token generation, and the gap in generation speed grows with model size:

- Llama 3 8B: The A6000 generates tokens about 3.0 times as fast as the M1 Max (102.22 vs. 34.49 tokens/sec).

- Llama 3 70B: The A6000 is about 3.6 times as fast (14.58 vs. 4.09 tokens/sec). The absolute difference is smaller than for the 8B model, but the relative gap is wider, which suggests that the M1 Max is better suited to smaller models.

F16 Precision:

The A6000 again takes the lead in generation speed:

- Llama 3 8B: The A6000 is about 2.2 times as fast as the M1 Max (40.25 vs. 18.43 tokens/sec).

- Llama 3 70B: No F16 data is available for either device at this model size, so no comparison is possible.
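The ratios behind this comparison can be reproduced from the figures reported in this article. The following is a small sketch; the dictionary layout and names are just for illustration.

```python
# Speedup of the RTX A6000 over the M1 Max, using the figures from this article.
# Each entry maps (model, quantization) to (M1 Max tokens/sec, A6000 tokens/sec).

generation = {
    ("Llama 3 8B", "Q4_K_M"): (34.49, 102.22),
    ("Llama 3 8B", "F16"): (18.43, 40.25),
    ("Llama 3 70B", "Q4_K_M"): (4.09, 14.58),
}

prompt_processing = {
    ("Llama 3 8B", "Q4_K_M"): (355.45, 3621.81),
    ("Llama 3 70B", "Q4_K_M"): (33.01, 466.82),
}

def speedups(table):
    """Return {key: A6000 speed / M1 Max speed} for every entry."""
    return {key: a6000 / m1 for key, (m1, a6000) in table.items()}

gen_ratio = speedups(generation)
pp_ratio = speedups(prompt_processing)

for (model, quant), ratio in gen_ratio.items():
    print(f"generation        {model:12s} {quant:7s} {ratio:.2f}x")
for (model, quant), ratio in pp_ratio.items():
    print(f"prompt processing {model:12s} {quant:7s} {ratio:.2f}x")
```

The prompt processing ratios come out considerably larger than the generation ratios, so the A6000's advantage is most visible when prompts are long.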

Performance Analysis

The results show clear strengths and weaknesses of each device:

Apple M1 Max:

- Strengths: Power efficiency and usable generation speeds for smaller models (34.49 tokens/sec on Llama 3 8B with Q4_K_M).

- Weaknesses: Token generation and prompt processing both lag behind the A6000, and the relative gap widens for larger models.

NVIDIA RTX A6000:

- Strengths: Faster token generation in every configuration measured, with the largest relative lead on the 70B model, and prompt processing that is roughly 10 to 14 times faster than the M1 Max.

- Weaknesses: Higher power consumption compared to the M1 Max, potentially more expensive.

Practical Recommendations

Choosing the Right Device:

- Real-time Applications: If your application prioritizes token generation speed, the A6000 is the faster choice in every configuration measured here.

- Smaller Models: For applications involving smaller models, the M1 Max provides a good balance of processing and generation speed.

- Budget Considerations: The M1 Max might be a more cost-effective option for smaller models and developers with tighter budgets.
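To translate these recommendations into response times, the two speeds can be combined: the prompt is processed at the prompt processing rate, then the reply is generated at the generation rate. The sketch below uses the Llama 3 8B Q4_K_M figures from this article with a hypothetical workload of a 512-token prompt and a 256-token reply. It assumes the two phases simply add up and ignores model loading and other overhead.

```python
# Estimate end-to-end response time from prompt processing and generation speeds.
# Assumption: total time = prompt phase + generation phase, with no other overhead.

def response_time_s(prompt_tokens: int, output_tokens: int,
                    pp_tok_s: float, tg_tok_s: float) -> float:
    """Seconds to process the prompt and then generate the reply."""
    return prompt_tokens / pp_tok_s + output_tokens / tg_tok_s

# Llama 3 8B, Q4_K_M: (prompt processing tokens/sec, generation tokens/sec)
devices = {
    "M1 Max": (355.45, 34.49),
    "RTX A6000": (3621.81, 102.22),
}

PROMPT_TOKENS = 512   # hypothetical workload
OUTPUT_TOKENS = 256   # hypothetical workload

times = {
    name: response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    for name, (pp, tg) in devices.items()
}

for name, seconds in times.items():
    print(f"{name}: {seconds:.2f} s for {PROMPT_TOKENS} prompt + {OUTPUT_TOKENS} output tokens")
```

Changing the two workload constants shows how the balance shifts: long prompts with short replies favor the A6000 even more, because its prompt processing lead is larger than its generation lead.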

Quantization and Precision Trade-offs:

- Q4_K_M (4-bit Quantization): Offers a good balance between speed and accuracy.

- F16 (Half-Precision): Provides higher accuracy but might sacrifice some speed.

FAQ

What are the differences between F16 and Q4_K_M?

- F16 (Half-Precision): Uses 16 bits per weight. It stays closest to the original model weights but needs the most memory and generates tokens more slowly.

- Q4_K_M (4-bit Quantization): Stores weights in roughly 4 bits each (plus a small overhead for scaling factors), which cuts memory usage to under a third of F16 and boosts generation speed. It can reduce accuracy, but in many cases the loss is minimal.
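To illustrate the general idea of 4-bit quantization, here is a deliberately simplified sketch: weights are split into blocks of 32, and each block stores one scale plus a 4-bit integer per weight. This is a toy scheme for illustration only. The actual Q4_K_M format in llama.cpp is more elaborate, and the function names here are hypothetical.

```python
import random

BLOCK_SIZE = 32  # weights per block (assumed for this toy example)

def quantize_block(values):
    """Map floats to 4-bit signed integers in [-8, 7] with one shared scale."""
    amax = max(abs(v) for v in values)
    scale = amax / 7 if amax > 0 else 1.0
    quants = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, quants

def dequantize_block(scale, quants):
    """Recover approximate floats from the scale and the 4-bit integers."""
    return [scale * q for q in quants]

random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]

scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_err = max(abs(w - r) for w, r in zip(weights, restored))

# Storage: 4 bits per weight plus one 16-bit scale per block.
bits_per_weight = (BLOCK_SIZE * 4 + 16) / BLOCK_SIZE

print(f"bits per weight: {bits_per_weight} (vs. 16 for F16)")
print(f"max rounding error: {max_err:.6f} (scale = {scale:.6f})")
```

The rounding error per weight is bounded by half the block's scale, which is why the accuracy loss is often small when weights within a block have similar magnitudes.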

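Is F16 or Q4_K_M better?

It depends on the application. F16 is generally more accurate, but Q4_K_M is considerably faster: on Llama 3 8B, generation is about 2.5 times as fast on the A6000 (102.22 vs. 40.25 tokens/sec) and about 1.9 times as fast on the M1 Max (34.49 vs. 18.43 tokens/sec). That makes it the better choice for real-time applications.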

How can I run these models locally?

You can use frameworks like llama.cpp and Hugging Face Transformers to run LLMs locally. These frameworks provide different quantization options and allow you to customize your setup to optimize for speed or accuracy.
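As a starting point for measuring generation speed yourself, here is a minimal timing sketch. The timing helper works with any callable that generates text and returns the number of tokens produced. The second function shows one possible way to wire it to the llama-cpp-python bindings, assuming that package is installed and that you have a local GGUF model file. Note that this simple measurement includes prompt processing time, so it will read somewhat lower than a pure generation benchmark.

```python
import time
from typing import Callable

def measure_tokens_per_sec(generate: Callable[[str, int], int],
                           prompt: str, max_tokens: int) -> float:
    """Time one call to `generate` and return generated tokens per second."""
    start = time.perf_counter()
    n_tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed

def make_llama_cpp_generate(model_path: str) -> Callable[[str, int], int]:
    """Build a generate function backed by llama-cpp-python.

    Assumes `pip install llama-cpp-python` and a GGUF file at `model_path`.
    """
    from llama_cpp import Llama  # imported lazily so the helper above has no dependency

    # n_gpu_layers=-1 asks the library to offload all layers to the GPU if possible.
    llm = Llama(model_path=model_path, n_gpu_layers=-1, verbose=False)

    def generate(prompt: str, max_tokens: int) -> int:
        out = llm(prompt, max_tokens=max_tokens)
        return out["usage"]["completion_tokens"]

    return generate

# Example usage (the model path is a placeholder, not a real file):
# generate = make_llama_cpp_generate("path/to/model.gguf")
# print(measure_tokens_per_sec(generate, "Explain quantization briefly.", 128))
```

Keeping the timing helper separate from the backend makes it easy to compare different runtimes or quantization levels with the same measurement code.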

Keywords

Apple M1 Max, NVIDIA RTX A6000, LLM, token generation speed, benchmark, comparison, Llama 2, Llama 3, F16, Q4_K_M, quantization, performance, processing, generation, GPU, CPU, deep learning, natural language processing, AI, machine learning.