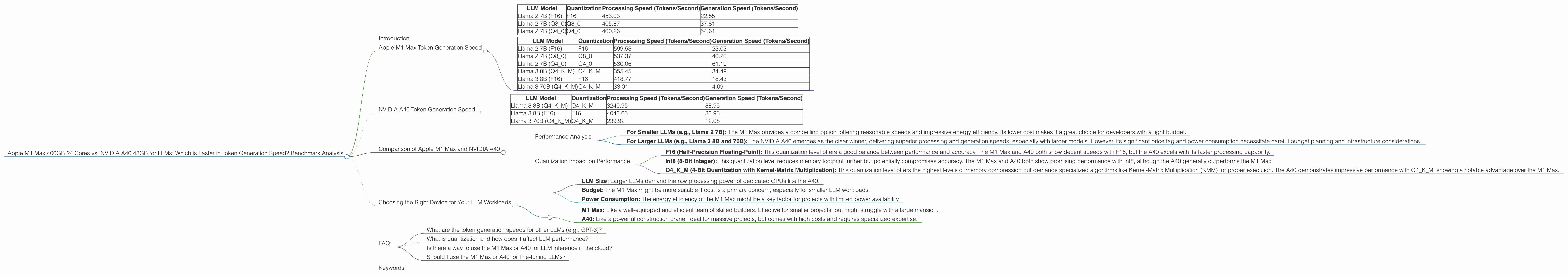

Apple M1 Max (400 GB/s, 24-Core GPU) vs. NVIDIA A40 48GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

In the world of large language models (LLMs), the demand for faster and more efficient hardware keeps growing as models become more complex. This article compares two devices, the Apple M1 Max (400 GB/s memory bandwidth, in 24-core and 32-core GPU variants) and the NVIDIA A40 (48 GB), focusing on their token generation speed for several Llama models. We'll go through the benchmark data to show where each is strong and weak, helping you choose the right device for your LLM workloads.

Imagine you need an LLM to generate a 100-page document. At a few tokens per second that can take hours; at dozens of tokens per second, minutes. But which device is the best for this task? That's where this article comes in!

Apple M1 Max Token Generation Speed

The Apple M1 Max is a system-on-chip with an integrated GPU and unified memory shared between the CPU and GPU. Let's examine its speed for different LLMs, both when processing the prompt (processing speed) and when producing new tokens (generation speed).

Apple M1 Max (400 GB/s, 24-Core GPU) Token Generation Speed:

| LLM Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama 2 7B | F16 | 453.03 | 22.55 |
| Llama 2 7B | Q8_0 | 405.87 | 37.81 |
| Llama 2 7B | Q4_0 | 400.26 | 54.61 |

Apple M1 Max (400 GB/s, 32-Core GPU) Token Generation Speed:

| LLM Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama 2 7B | F16 | 599.53 | 23.03 |
| Llama 2 7B | Q8_0 | 537.37 | 40.20 |
| Llama 2 7B | Q4_0 | 530.06 | 61.19 |
| Llama 3 8B | Q4_K_M | 355.45 | 34.49 |
| Llama 3 8B | F16 | 418.77 | 18.43 |
| Llama 3 70B | Q4_K_M | 33.01 | 4.09 |

Analyzing the Numbers:

- Llama 2 7B: Processing speed varies by only about 12% across the F16, Q8_0, and Q4_0 formats, but generation speed climbs as precision drops. Q4_0 is the fastest, generating roughly 2.4x (24-core) to 2.7x (32-core) faster than F16.

- Llama 3 8B: On the 32-core M1 Max, Llama 3 8B generates 34.49 tokens/second with Q4_K_M and 18.43 tokens/second with F16, a respectable result for both formats.

- Llama 3 70B: The M1 Max struggles with Llama 3 70B. With Q4_K_M, processing speed falls to 33.01 tokens/second and generation speed to 4.09 tokens/second, indicating potential limitations for larger LLMs.
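The effect of quantization on generation speed can be read straight off the Llama 2 7B rows. The following is a minimal sketch that divides each generation speed by the F16 baseline; the dictionary layout and the `speedup_over_f16` helper are illustrative names, not part of any benchmark tool.

```python
# Generation speeds (tokens/second) for Llama 2 7B, copied from the M1 Max tables.
generation_tps = {
    "24-core GPU": {"F16": 22.55, "Q8_0": 37.81, "Q4_0": 54.61},
    "32-core GPU": {"F16": 23.03, "Q8_0": 40.20, "Q4_0": 61.19},
}


def speedup_over_f16(speeds):
    """Return each format's generation speed relative to the F16 baseline."""
    baseline = speeds["F16"]
    return {quant: round(tps / baseline, 2) for quant, tps in speeds.items()}


for variant, speeds in generation_tps.items():
    print(variant, speedup_over_f16(speeds))
```

Running it shows that the relative gain from Q4_0 is slightly larger on the 32-core variant than on the 24-core one.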

Apple M1 Max Strengths:

- Energy Efficiency: The M1 Max is renowned for its energy efficiency, making it an attractive option for developers working on projects with power constraints.

- Lower Cost: Compared to high-end GPUs, the M1 Max usually offers a more budget-friendly alternative.

Apple M1 Max Weaknesses:

- Limited Memory: The 400 GB/s in the model name is memory bandwidth, not capacity. The M1 Max's unified memory tops out at 64 GB and is shared with the CPU and the rest of the system, which can impact performance for larger LLMs.

- Scaling Limitations: The M1 Max is a single integrated chip, so you can't add GPUs to a machine the way you can with discrete cards. This limits its potential for extremely large LLMs.

NVIDIA A40 Token Generation Speed

The NVIDIA A40 is a powerful graphics processing unit (GPU) specifically designed for demanding AI workloads. Let's see how it performs with various LLMs.

NVIDIA A40 48GB Token Generation Speed:

| LLM Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 3240.95 | 88.95 |
| Llama 3 8B | F16 | 4043.05 | 33.95 |
| Llama 3 70B | Q4_K_M | 239.92 | 12.08 |

Analyzing the Numbers:

- Llama 3 8B: The A40 processes prompts roughly 9x faster than the 32-core M1 Max (3240.95 vs. 355.45 tokens/second with Q4_K_M) and generates about 2.6x faster with Q4_K_M and 1.8x faster with F16. This likely reflects its dedicated GPU architecture and higher memory bandwidth.

- Llama 3 70B: Moving from Llama 3 8B to 70B with Q4_K_M, the A40's processing speed drops from 3240.95 to 239.92 tokens/second. Even so, its generation speed of 12.08 tokens/second is about 3x the M1 Max's 4.09.
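To make the gap concrete, the sketch below computes A40-to-M1 Max ratios for the three configurations that both devices were benchmarked on. The numbers come from the 32-core M1 Max table and the A40 table; the `speed_ratios` helper and the dictionary layout are illustrative assumptions.

```python
# (model, quantization) -> (processing tokens/s, generation tokens/s)
m1_max_32_core = {
    ("Llama 3 8B", "Q4_K_M"): (355.45, 34.49),
    ("Llama 3 8B", "F16"): (418.77, 18.43),
    ("Llama 3 70B", "Q4_K_M"): (33.01, 4.09),
}
a40 = {
    ("Llama 3 8B", "Q4_K_M"): (3240.95, 88.95),
    ("Llama 3 8B", "F16"): (4043.05, 33.95),
    ("Llama 3 70B", "Q4_K_M"): (239.92, 12.08),
}


def speed_ratios(baseline, other):
    """For every configuration present in both tables, return other/baseline ratios."""
    ratios = {}
    for config in baseline.keys() & other.keys():
        base_pp, base_tg = baseline[config]
        other_pp, other_tg = other[config]
        ratios[config] = (other_pp / base_pp, other_tg / base_tg)
    return ratios


for (model, quant), (pp, tg) in sorted(speed_ratios(m1_max_32_core, a40).items()):
    print(f"{model} {quant}: processing {pp:.1f}x, generation {tg:.1f}x")
```

The processing ratios are much larger than the generation ratios, so the A40's advantage is most visible on long prompts.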

NVIDIA A40 Strengths:

- High Performance: The A40 is a powerhouse designed for AI tasks, offering exceptional processing and generation speeds for LLMs.

- Scalability: With its robust architecture, the A40 can be used in large clusters for handling massive LLMs.

NVIDIA A40 Weaknesses:

- High Cost: Dedicated GPUs like the A40 come with a substantial price tag, significantly exceeding the cost of the M1 Max.

- Power Consumption: High-performance GPUs like the A40 draw significant power and need appropriate cooling infrastructure.

Comparison of Apple M1 Max and NVIDIA A40

Performance Analysis

The Apple M1 Max and NVIDIA A40 offer distinct advantages and drawbacks when it comes to LLM performance.

- For Smaller LLMs (e.g., Llama 2 7B and Llama 3 8B): The M1 Max is a compelling option, offering reasonable speeds (up to 61.19 tokens/second on Llama 2 7B Q4_0) and impressive energy efficiency. Its lower cost makes it a good choice for developers on a tight budget. The benchmark has no A40 results for Llama 2 7B, but on Llama 3 8B the A40 still generates about 1.8x to 2.6x faster.

- For Larger LLMs (e.g., Llama 3 70B): The NVIDIA A40 is the clear winner, generating 12.08 tokens/second against 4.09 for the M1 Max. However, its price tag and power consumption call for careful budget and infrastructure planning.
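The two columns in the tables combine into a rough end-to-end estimate: time to read the prompt plus time to generate the reply. Below is a minimal sketch using the Llama 3 8B 4-bit rows; the 1,000-token prompt and 500-token reply are arbitrary example sizes, and real runs include overheads (model loading, sampling, context growth) that this ignores.

```python
def estimated_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough latency: prompt ingestion time plus token generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 3 8B, 4-bit rows from the benchmark tables: (processing, generation) tokens/s
devices = {
    "M1 Max (32-core GPU)": (355.45, 34.49),
    "NVIDIA A40": (3240.95, 88.95),
}

PROMPT_TOKENS = 1000  # assumption: example prompt length
OUTPUT_TOKENS = 500   # assumption: example reply length

for name, (pp, tg) in devices.items():
    seconds = estimated_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    print(f"{name}: about {seconds:.1f} s")
```

For workloads dominated by generation rather than long prompts, the generation column is the one that matters most.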

Quantization Impact on Performance

Quantization is a crucial technique for optimizing LLM performance. It reduces a model's storage and memory requirements, which in turn affects speed. Let's analyze how the different formats in the benchmark impact token generation speed:

- F16 (Half-Precision Floating-Point): This is the unquantized baseline, with the highest accuracy and the largest memory footprint of the three. Both devices post their slowest generation speeds with F16, though the A40 stays ahead (33.95 vs. 18.43 tokens/second for Llama 3 8B).

- Q8_0 (8-Bit): This format roughly halves the memory footprint relative to F16, usually with little loss in accuracy. The benchmark includes Q8_0 results only for the M1 Max (37.81 and 40.20 tokens/second for Llama 2 7B), so the two devices can't be compared at this level.

- Q4_K_M (4-Bit K-Quant, Medium): This llama.cpp format offers the highest compression of the three, at some additional cost in accuracy. The A40 generates 88.95 tokens/second with Llama 3 8B Q4_K_M, against 34.49 for the M1 Max.

In a nutshell: Token generation tends to be limited by how quickly the weights can be read from memory, so F16, which moves the most data per token, is the slowest, while 4-bit formats trade some accuracy for faster generation.
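One way to see why smaller formats generate faster is a back-of-the-envelope bound: if every weight must be read from memory once per generated token, generation speed cannot exceed memory bandwidth divided by model size. The sketch below applies this to Llama 2 7B on the 24-core M1 Max. It rests on several assumptions not found in the benchmark data: a parameter count of about 6.74 billion, 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (32-weight blocks plus a 16-bit scale), and no accounting for the KV cache or compute time.

```python
LLAMA2_7B_PARAMS = 6.74e9      # assumption: approximate parameter count
M1_MAX_BANDWIDTH_GB_S = 400    # memory bandwidth of the benchmarked M1 Max

# assumption: effective bits per weight for each format
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds (tokens/s) from the 24-core M1 Max table
MEASURED_TPS = {"F16": 22.55, "Q8_0": 37.81, "Q4_0": 54.61}


def weight_bytes(n_params, bits_per_weight):
    """Approximate size of the model weights in bytes."""
    return n_params * bits_per_weight / 8


def bandwidth_bound_tps(bandwidth_gb_s, n_params, bits_per_weight):
    """Upper bound on tokens/s if all weights are read once per token."""
    return bandwidth_gb_s * 1e9 / weight_bytes(n_params, bits_per_weight)


for quant, bits in BITS_PER_WEIGHT.items():
    bound = bandwidth_bound_tps(M1_MAX_BANDWIDTH_GB_S, LLAMA2_7B_PARAMS, bits)
    size_gb = weight_bytes(LLAMA2_7B_PARAMS, bits) / 1e9
    print(f"{quant}: ~{size_gb:.1f} GB, bound {bound:.0f} t/s, measured {MEASURED_TPS[quant]} t/s")
```

Under these assumptions the measured speeds all fall below the bound and follow the same ordering, which is consistent with generation being limited largely by memory traffic rather than arithmetic.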

Choosing the Right Device for Your LLM Workloads

Selecting the ideal device for your LLM workloads hinges on specific requirements such as:

- LLM Size: Larger LLMs demand the raw processing power of dedicated GPUs like the A40.

- Budget: The M1 Max might be more suitable if cost is a primary concern, especially for smaller LLM workloads.

- Power Consumption: The energy efficiency of the M1 Max might be a key factor for projects with limited power availability.

Here's a simple analogy to illustrate:

Imagine you're building a house:

- M1 Max: Like a well-equipped and efficient team of skilled builders. Effective for smaller projects, but might struggle with a large mansion.

- A40: Like a powerful construction crane. Ideal for massive projects, but comes with high costs and requires specialized expertise.

FAQ:

What are the token generation speeds for other LLMs (e.g., GPT-3)?

Unfortunately, the benchmark data provided doesn't cover the performance of the M1 Max or A40 with GPT-3. This data is limited to Llama family models.

What is quantization and how does it affect LLM performance?

Quantization is a technique that reduces the size of LLM models by using fewer bits to represent the model's parameters. In the benchmarks above, it lowers memory usage and raises generation speed, usually at some cost in accuracy.

Think of it like compressing a photo: You can achieve a smaller file size by sacrificing some image quality.
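The idea can be shown in a few lines. The following is a simplified sketch of block-wise 8-bit quantization: each block of weights is stored as small integers plus one shared scale factor. It is meant only to illustrate the principle; it is not the actual llama.cpp implementation, and the block size and function names are illustrative assumptions.

```python
import random

BLOCK_SIZE = 32  # assumption: block size chosen for illustration


def quantize_block(values):
    """Map a block of floats to int8-range codes plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(v / scale) for v in values]  # each code lies in [-127, 127]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the codes and the scale."""
    return [scale * c for c in codes]


random.seed(0)
weights = [random.uniform(-1, 1) for _ in range(BLOCK_SIZE)]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(f"scale={scale:.5f}, max round-trip error={max_error:.5f}")
```

The restored values differ from the originals by at most half a scale step, which is the "image quality" given up in exchange for the smaller size.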

Is there a way to use the M1 Max or A40 for LLM inference in the cloud?

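Partly. The A40 is a data center GPU, and a number of GPU cloud providers rent instances with it, though availability varies by provider and region. The M1 Max is a chip for Apple's laptops and desktops rather than a cloud GPU; hosted Macs do exist (for example, AWS offers EC2 Mac instances with Apple silicon), but check which chip a given offering actually uses. Either way, renting gives you flexibility and lets you access hardware without investing in physical devices.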

Should I use the M1 Max or A40 for fine-tuning LLMs?

Both devices can be used for fine-tuning, but the A40 is often preferred due to its significantly faster processing speed. However, the M1 Max might be a good option if you're working with smaller LLMs or have a limited budget.

Keywords:

Apple M1 Max, NVIDIA A40, LLM, token generation, Llama 2, Llama 3, benchmark analysis, AI, GPU, performance comparison, quantization, F16, Q8_0, Q4_0, Q4_K_M, processing speed, generation speed, cloud computing, inference, fine-tuning.